Static Code Scheduling

Understand the importance of scheduling instructions for performance enhancement, comparing static vs. dynamic architectures, various scheduling approaches, and strategies to avoid hazards and improve efficiency.



Static Code Scheduling CS 671 April 1, 2008

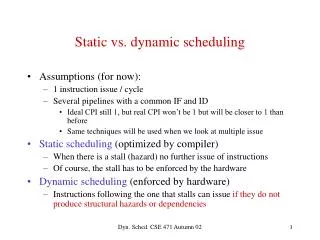

Code Scheduling • Scheduling or reordering instructions to improve performance and/or guarantee correctness • Important for dynamically-scheduled architectures • Crucial (assumed!) for statically-scheduled architectures, e.g. VLIW or EPIC • Takes into account anticipated latencies • Machine-specific, performed later in the optimization pass • How does this contrast with our earlier exploration of code motion?

Why Must the Compiler Schedule? • Many machines are pipelined and expose some aspects of pipelining to the user (compiler) • Examples: • Branch delay slots! • Memory-access delays • Multi-cycle operations • Some machines don’t have scheduling hardware

Example • Assume loads take 2 cycles and branches have a delay slot. • ____cycles


Code Scheduling Strategy • Get resources operating in parallel • Integer data path • Integer multiply / divide hardware • FP adder, multiplier, divider • Method • Fill the gap between starting an operation and using its result with computations that need neither that result nor the same hardware resources • Drawbacks • Highly hardware dependent • [Figure labels: Start Op, Try to fill, Use Op]

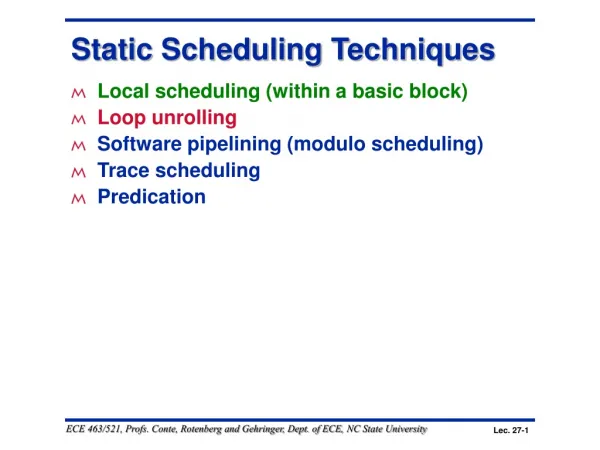

Scheduling Approaches • Local • Branch scheduling • Basic-block scheduling • Global • Cross-block scheduling • Software pipelining • Trace scheduling • Percolation scheduling

Branch Scheduling • Two problems: • Branches often take some number of cycles to complete • There can be a delay between a compare and its associated branch • A compiler will try to fill these slots with valid instructions (rather than nop) • Delay slots – present in PA-RISC, SPARC, MIPS • Condition delay – PowerPC, Pentium

Recall from Architecture… • IF – Instruction Fetch • ID – Instruction Decode • EX – Execute • MA – Memory access • WB – Write back • [Figure: pipeline diagram of three instructions, each passing through IF ID EX MA WB]

Control Hazards • Taken Branch: IF ID EX MA WB • Instr + 1: IF --- --- --- --- • Branch Target: IF ID EX MA WB • Branch Target + 1: IF ID EX MA WB

Data Dependences • If two operations access the same register and at least one of them writes it, they are dependent • Types of data dependences • Anti: r1 = r2 + r3; r2 = r5 * 6 • Output: r1 = r2 + r3; r1 = r4 * 6 • Flow: r1 = r2 + r3; r4 = r1 * 6
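The three dependence types can be told apart mechanically from which registers each instruction reads and writes. A minimal sketch, assuming each instruction writes one register; the `Instr` type and the `dependences` function are hypothetical names introduced here for illustration, not part of the lecture.

```python
from typing import NamedTuple, Set


class Instr(NamedTuple):
    dest: str          # register written by the instruction
    srcs: frozenset    # registers read by the instruction


def dependences(first: Instr, second: Instr) -> Set[str]:
    """Classify the register dependences from `first` to a later `second`."""
    kinds = set()
    if first.dest in second.srcs:
        kinds.add("flow")      # read after write
    if second.dest in first.srcs:
        kinds.add("anti")      # write after read
    if first.dest == second.dest:
        kinds.add("output")    # write after write
    return kinds


# The three instruction pairs from the slide, all starting with r1 = r2 + r3.
add = Instr("r1", frozenset({"r2", "r3"}))
print(dependences(add, Instr("r2", frozenset({"r5"}))))  # {'anti'}
print(dependences(add, Instr("r1", frozenset({"r4"}))))  # {'output'}
print(dependences(add, Instr("r4", frozenset({"r1"}))))  # {'flow'}
```

A pair of instructions can carry more than one kind at once, which is why the function returns a set.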

Data Hazards • Memory latency: data not ready • lw R1,0(R2): IF ID EX MA WB • add R3,R1,R4: IF ID EX stall MA WB

Data Hazards • Instruction latency: execute takes > 1 cycle • addf R3,R1,R2: IF ID EX EX MA WB • addf R3,R3,R4: IF ID stall EX EX MA WB • Assumes floating point ops take 2 execute cycles
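Both hazards above cost one stall cycle, and that count can be reproduced with a very small timing model. The sketch below assumes a single-issue, in-order machine in which an instruction cannot start until every source register is ready; `issue_cycles` and the tuple layout are hypothetical and ignore the individual pipeline stages shown on the slides.

```python
def issue_cycles(instrs, latency):
    """Return the cycle in which each instruction starts.

    instrs:  list of (op, dest, srcs) tuples in program order.
    latency: op -> cycles until its result can be consumed (default 1).
    """
    ready_at = {}    # register -> first cycle its value can be consumed
    cycles = []
    next_slot = 1    # earliest cycle the next instruction could start
    for op, dest, srcs in instrs:
        start = max([next_slot] + [ready_at.get(r, 0) for r in srcs])
        cycles.append(start)
        ready_at[dest] = start + latency.get(op, 1)
        next_slot = start + 1
    return cycles


# Load followed by a dependent add, with a 2-cycle load.
load_use = [("lw", "R1", ["R2"]), ("add", "R3", ["R1", "R4"])]
print(issue_cycles(load_use, {"lw": 2}))    # [1, 3]: one stall cycle

# Two dependent floating-point adds, with a 2-cycle addf.
fp_chain = [("addf", "R3", ["R1", "R2"]), ("addf", "R3", ["R3", "R4"])]
print(issue_cycles(fp_chain, {"addf": 2}))  # [1, 3]: one stall cycle
```

Placing an independent instruction between the producer and the consumer would occupy cycle 2 and hide the stall, which is the goal of scheduling.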

Multi-cycle Instructions • Scheduling is particularly important for multi-cycle operations • Alpha instructions with > 1 cycle latency (partial list, latency in cycles) • mull (32-bit integer multiply): 8 • mulq (64-bit integer multiply): 16 • addt (fp add): 4 • mult (fp multiply): 4 • divs (fp single-precision divide): 10 • divt (fp double-precision divide): 23

Avoiding data hazards • Move loads earlier and stores later (assuming this does not violate correctness) • Other stalls may require more sophisticated re-ordering, e.g. ((a+b)+c)+d becomes (a+b)+(c+d) • How can we do this in a systematic way?
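The benefit of rewriting ((a+b)+c)+d as (a+b)+(c+d) shows up as a shorter dependence chain. A small sketch, assuming expressions are encoded as nested tuples where `(x, y)` stands for `x + y`; the encoding and the `depth` function are illustrative only.

```python
def depth(expr):
    """Length of the longest chain of dependent additions in `expr`.

    An expression is either a variable name (str) or a pair (left, right)
    that stands for left + right.
    """
    if isinstance(expr, str):
        return 0
    left, right = expr
    return 1 + max(depth(left), depth(right))


chain = ((("a", "b"), "c"), "d")        # ((a+b)+c)+d
balanced = (("a", "b"), ("c", "d"))     # (a+b)+(c+d)
print(depth(chain), depth(balanced))    # 3 2
```

Both forms perform three additions, but in the balanced form a+b and c+d are independent and can overlap. For floating-point values the two forms can round differently, so a compiler may only make this change when that is permitted.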

Example: Without Scheduling • Assume: • memory instrs take 3 cycles • mult takes 2 cycles (to have result in register) • rest take 1 cycle • ____cycles

Basic Block Dependence DAGs • Nodes - instructions • Edges - dependence between I1 and I2 • When we cannot determine whether there is a dependence, we must assume there is one • a) lw R2, (R1) • b) lw R3, (R1) 4 • c) R4 ← R2 + R3 • d) R5 ← R2 - 1 • DAG edges, each labeled 2: a → c, a → d, b → c
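The DAG for instructions a through d can be derived by comparing every pair of instructions in program order. The sketch below covers register dependences only and assumes a 2-cycle load, matching the edge labels on the slide; giving anti and output edges a weight of 1 is a simplification chosen here, and `build_dag` is a hypothetical name.

```python
from collections import namedtuple

Instr = namedtuple("Instr", "name op dest srcs")

LATENCY = {"lw": 2}  # assumption: loads take 2 cycles, everything else 1


def build_dag(block):
    """Return {(earlier, later): latency} for register dependences in a block."""
    edges = {}
    for j, later in enumerate(block):
        for earlier in block[:j]:
            if earlier.dest in later.srcs:
                # Flow dependence: wait for the producer's result.
                edges[(earlier.name, later.name)] = LATENCY.get(earlier.op, 1)
            elif later.dest in earlier.srcs or later.dest == earlier.dest:
                # Anti or output dependence: ordering only (weight is an assumption).
                edges[(earlier.name, later.name)] = 1
    return edges


block = [
    Instr("a", "lw", "R2", {"R1"}),
    Instr("b", "lw", "R3", {"R1"}),
    Instr("c", "add", "R4", {"R2", "R3"}),
    Instr("d", "sub", "R5", {"R2"}),
]
print(build_dag(block))
# {('a', 'c'): 2, ('b', 'c'): 2, ('a', 'd'): 2}
```

Memory dependences are left out; a fuller version would add an edge between any store and a later load or store unless the addresses are known to differ, following the "assume there is one" rule above.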

Example – Build the DAG • Assume: • memory instrs = 3 • mult = 2 (to have result in register) • rest = 1 cycle

Creating a schedule • Create a DAG of dependences • Determine priority • Schedule instructions with • Ready operands • Highest priority • Heuristics: If multiple possibilities, fall back on other priority functions

Operation Priority • Priority – Need a mechanism to decide which ops to schedule first (when you have choices) • Common priority functions • Height – Distance from exit node • Give priority to amount of work left to do • Slackness – inversely proportional to slack • Give priority to ops on the critical path • Register use – priority to nodes with more source operands and fewer destination operands • Reduces number of live registers • Uncover – high priority to nodes with many children • Frees up more nodes • Original order – when all else fails

Computing Priorities • Height(n) = exec(n) if n is a leaf • Height(n) = max(Height(m)) + exec(n) over all successors m of n, otherwise • Critical path(s) = path through the dependence DAG with longest latency
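The height recurrence translates directly into a memoized walk over the DAG. A minimal sketch, assuming the DAG is given as a successor map and an execution time per node; `heights` and `critical_path` are hypothetical helpers, and the four-node graph (two 2-cycle loads feeding two 1-cycle ALU ops) is made up for illustration.

```python
def heights(exec_time, succs):
    """Height(n) = exec(n) for a leaf, else max(Height(m)) + exec(n)."""
    memo = {}

    def height(n):
        if n not in memo:
            below = [height(m) for m in succs.get(n, [])]
            memo[n] = exec_time[n] + (max(below) if below else 0)
        return memo[n]

    return {n: height(n) for n in exec_time}


def critical_path(exec_time, succs):
    """Follow the tallest successor from the tallest node down to a leaf."""
    h = heights(exec_time, succs)
    node = max(h, key=h.get)
    path = [node]
    while succs.get(node):
        node = max(succs[node], key=h.get)
        path.append(node)
    return path


exec_time = {"a": 2, "b": 2, "c": 1, "d": 1}
succs = {"a": ["c", "d"], "b": ["c"]}
print(heights(exec_time, succs))        # {'a': 3, 'b': 3, 'c': 1, 'd': 1}
print(critical_path(exec_time, succs))  # ['a', 'c']
```

When several paths tie for the longest latency, this sketch reports only the first one it finds.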

Example – Determine Height and CP • Assume: • memory instrs = 3 • mult = 2 (to have result in register) • rest = 1 cycle • Critical path: _______

Example – List Scheduling _____cycles

VLIW • Very Long Instruction Word • Compiler determines exactly what is issued every cycle (before the program is run) • Schedules also account for latencies • All hardware changes result in a compiler change • Usually embedded systems (hence simple HW) • Itanium is actually an EPIC-style machine (accounts for most parallelism, not latencies)

Sample VLIW code • VLIW processor: 5 issue • 2 Add/Sub units (1 cycle) • 1 Mul/Div unit (2 cycle, unpipelined) • 1 LD/ST unit (2 cycle, pipelined) • 1 Branch unit (no delay slots)

| Add/Sub | Add/Sub | Mul/Div | Ld/St | Branch |
|---|---|---|---|---|
| c = a + b | d = a - b | e = a * b | ld j = [x] | nop |
| g = c + d | h = c - d | nop | ld k = [y] | nop |
| nop | nop | i = j * c | ld f = [z] | br g |
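Because a VLIW compiler must account for latencies itself, it is worth checking that the sample code respects them. The sketch below transcribes the three instruction words as issue cycles 1 to 3 (the numbering is an assumption) and checks two things: that no operand is read before its producer finishes, and that the unpipelined Mul/Div unit is not reused too early. The `violations` function is hypothetical, assumes each register is written once, and leaves out the branch.

```python
# Latencies from the machine description; "sub" shares the Add/Sub units.
LATENCY = {"add": 1, "sub": 1, "mul": 2, "ld": 2}

# (issue cycle, op, destination, sources), transcribed from the sample code.
SAMPLE = [
    (1, "add", "c", ["a", "b"]), (1, "sub", "d", ["a", "b"]),
    (1, "mul", "e", ["a", "b"]), (1, "ld", "j", ["x"]),
    (2, "add", "g", ["c", "d"]), (2, "sub", "h", ["c", "d"]),
    (2, "ld", "k", ["y"]),
    (3, "mul", "i", ["j", "c"]), (3, "ld", "f", ["z"]),
]


def violations(schedule):
    """List latency problems; assumes every register is written only once."""
    # First cycle in which each produced value may be consumed.
    ready = {dest: cycle + LATENCY[op] for cycle, op, dest, _ in schedule}
    bad = [(dest, src)
           for cycle, _, dest, srcs in schedule
           for src in srcs if ready.get(src, 0) > cycle]
    # The Mul/Div unit is unpipelined, so it stays busy for its full latency.
    mul_cycles = sorted(c for c, op, _, _ in schedule if op == "mul")
    bad += [("mul-unit", later)
            for earlier, later in zip(mul_cycles, mul_cycles[1:])
            if later < earlier + LATENCY["mul"]]
    return bad


print(violations(SAMPLE))  # []
```

Moving `i = j * c` up to cycle 2 would be reported twice: `j` is still being loaded, and the multiplier is still busy with `e = a * b`.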

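Multi-Issue Scheduling Example • Machine: 2 issue, 1 memory port, 1 ALU • Memory port = 2 cycles, non-pipelined • ALU = 1 cycle • [Figure: dependence DAG over ops 1, 2m, 3m, 4, 5m, 6, 7m, 8, 9, 10 (the "m" suffix presumably marks memory ops); an RU_map table with columns time, ALU, MEM and a Schedule table with columns time, Ready, Placed, both with rows for times 0 to 9]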

Earliest Latest Sets • Machine: 2 issue, 1 memory port, 1 ALU • Memory port = 2 cycles, pipelined • ALU = 1 cycle • [Figure: dependence DAG over ops 1m, 2m, 3, 4m, 5, 6, 7, 8, 9m, 10]

List Scheduling Algorithm
• Build dependence graph, calculate priority
• Add all ops to UNSCHEDULED set
• time = 0
• while (UNSCHEDULED is not empty)
  • time++
  • READY = UNSCHEDULED ops whose incoming deps have been satisfied
  • Sort READY using priority function
  • For each op in READY (highest to lowest priority)
    • op can be scheduled at current time? (resources free?)
      • Yes: schedule it, op.issue_time = time
        • Mark resources busy in RU_map relative to issue time
        • Remove op from UNSCHEDULED/READY sets
      • No: continue
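The algorithm above can be sketched in a few lines. This version assumes every functional unit is fully pipelined and accepts one op per cycle, so the RU_map reduces to a set of (unit, cycle) pairs; `list_schedule`, its argument layout, and the four-op example are hypothetical. A dependence counts as satisfied once the predecessor's issue time plus its latency has been reached.

```python
def list_schedule(ops, preds, priority):
    """Greedy list scheduling.

    ops:      name -> (unit, latency)
    preds:    name -> list of predecessor names
    priority: name -> number (larger is scheduled first)
    Returns name -> issue cycle.
    """
    issue = {}
    ru_map = set()              # (unit, cycle) pairs that are already taken
    unscheduled = set(ops)
    time = 0
    while unscheduled:
        time += 1
        ready = [o for o in unscheduled
                 if all(p in issue and issue[p] + ops[p][1] <= time
                        for p in preds.get(o, []))]
        # Highest priority first; ties broken by name for a stable result.
        ready.sort(key=lambda o: (-priority[o], o))
        for o in ready:
            unit = ops[o][0]
            if (unit, time) not in ru_map:     # resource free this cycle?
                issue[o] = time
                ru_map.add((unit, time))
                unscheduled.remove(o)
    return issue


# Two 2-cycle loads feeding two 1-cycle ALU ops; priority is the node height.
ops = {"a": ("MEM", 2), "b": ("MEM", 2), "c": ("ALU", 1), "d": ("ALU", 1)}
preds = {"c": ["a", "b"], "d": ["a"]}
priority = {"a": 3, "b": 3, "c": 1, "d": 1}
print(list_schedule(ops, preds, priority))
# {'a': 1, 'b': 2, 'd': 3, 'c': 4}
```

Modeling a non-pipelined unit would mean marking the unit busy for every cycle of the op's latency instead of only the issue cycle.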

Improving Basic Block Scheduling • Loop unrolling – creates longer basic blocks • Register renaming – can change register usage in blocks to remove immediate reuse of registers • Summary • Static scheduling complements (or replaces) dynamic scheduling by the hardware
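Register renaming can be illustrated on a single basic block: giving every write a fresh name removes anti and output dependences and leaves only flow dependences. The sketch below assumes an unlimited supply of fresh names `t0`, `t1`, ... that do not clash with existing registers; `rename` is a hypothetical helper, and a real compiler would also have to map values that are live at the end of the block back to their original registers.

```python
def rename(block):
    """Give every write in `block` a fresh register name.

    block: list of (dest, srcs) pairs in program order.
    """
    current = {}    # original register -> most recent fresh name
    renamed = []
    for i, (dest, srcs) in enumerate(block):
        # Reads see the latest definition, or the original incoming value.
        new_srcs = [current.get(s, s) for s in srcs]
        fresh = f"t{i}"
        current[dest] = fresh
        renamed.append((fresh, new_srcs))
    return renamed


block = [
    ("r1", ["r2", "r3"]),   # r1 = r2 + r3
    ("r2", ["r5"]),         # anti dependence on r2 with the first instruction
    ("r1", ["r4"]),         # output dependence on r1 with the first instruction
    ("r6", ["r1"]),         # flow dependence on the second write of r1
]
print(rename(block))
# [('t0', ['r2', 'r3']), ('t1', ['r5']), ('t2', ['r4']), ('t3', ['t2'])]
```

After renaming, the first three instructions no longer constrain each other, so the scheduler is free to reorder them.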