The Xen VMM

The Xen VMM Nathanael Thompson and John Kelm

Motivations • Full virtualization of x86 is hard and imperfect • Instead, make hosted OS aware of virtualization but not hosted applications • Enable performance isolation and accounting

Outline • Motivations • The Xen Implementation • Performance Evaluation • Xen Extensions • Discussion topics

Paravirtualization: Design Goals • Modified OS, unmodified applications • Leverage OS knowledge of virtualization to provide high-performance VMs • Enable hosting of tens to hundreds of VMs on a single machine

Paravirtualization vs. Full Virtualization • [Figure: two side-by-side stacks. Paravirtualization labels: Control Plane, User Apps, Guest OS, Dom0, Xen. Full Virtualization labels: User Applications, Binary Translation, Guest OS, VMM. Privilege levels shown: Ring 3, Ring 2, Ring 1, Ring 0.]

Paravirtualization: Implementation • Key Point: Make changes to OS • Paging issues: updates and faults (40% of hypervisor time, says Intel) • Optimize access to virtual devices (I/O rings) • Provide fast/batch call mechanisms via hypercalls • Hide Xen at the top of each VM address space, similar to VMware (maybe?)

Problematic Instructions • 1. Guest OS executes a privileged instruction (HLT, CLI, STI, …) • 2. x86 protection mechanisms raise a protection fault (GPF) • 3. Trap to the GPF handler in Xen (interrupt context) and… • 4. …emulate the instruction in the instruction handler in Xen (normal context) • 5. Return from interrupt (RTI) to the guest OS • Why not just paravirtualize?
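The trap-and-emulate sequence on this slide can be sketched as a toy Python model. Everything here (`VirtualCPU`, `ProtectionFault`, `run`, the instruction set) is a hypothetical illustration, not Xen code, and it assumes every sensitive instruction actually faults when run outside ring 0.

```python
# Toy model of trap-and-emulate. All names are hypothetical, not Xen's.
PRIVILEGED = {"HLT", "CLI", "STI"}


class ProtectionFault(Exception):
    """Stands in for the general protection fault raised by the hardware."""

    def __init__(self, instr):
        super().__init__(instr)
        self.instr = instr


class VirtualCPU:
    """Per-guest state the VMM updates instead of touching the real CPU."""

    def __init__(self):
        self.interrupts_enabled = True
        self.halted = False


def execute_deprivileged(instr):
    # Steps 1-2: a privileged instruction outside ring 0 raises a fault.
    if instr in PRIVILEGED:
        raise ProtectionFault(instr)
    return "ran natively"


def emulate(vcpu, instr):
    # Steps 3-4: the handler applies the instruction's effect to virtual state.
    if instr == "CLI":
        vcpu.interrupts_enabled = False
    elif instr == "STI":
        vcpu.interrupts_enabled = True
    elif instr == "HLT":
        vcpu.halted = True
    return "emulated"


def run(vcpu, instr):
    try:
        return execute_deprivileged(instr)
    except ProtectionFault as fault:
        # Step 5: after emulation, control returns to the guest.
        return emulate(vcpu, fault.instr)
```

The sketch hides the hard part: on pre-VT x86 some sensitive instructions (POPF is the usual example) do not fault outside ring 0, so the handler never runs. That gap is one reason to rewrite the guest rather than rely on traps alone.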

Domain 0 • Put control/VMM interface, real device drivers, etc. into a separate VM • Sets up new VMs—Could use for migration? • Why not just put this all into Xen proper? • Increased difficulty in proving isolation • Larger footprint (Remember where Xen is located in virtual memory) • Fewer services available inside hypervisor • Take advantage of guest OS driver API

Memory Management • Avoid shadow page tables, but we have to trust OS, right? • Batch updates for performance gain: hypercalls to the rescue! • Page frame types: page tables (PT), descriptor tables (DT), writable (RW)… why? • OS manipulates page tables. Is this safe?
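One way to see why frame types and batched hypercalls make guest-managed page tables safe is a small Python sketch. It is a toy model under stated assumptions: `ToyHypervisor`, `Frame`, and `mmu_update_batch` are hypothetical names, only two frame types are modelled, and real validation involves more checks (reference counts, for example).

```python
from dataclasses import dataclass, field


@dataclass
class Frame:
    owner: int          # id of the domain that owns this machine frame
    ftype: str = "RW"   # "PT" (page table) or "RW" (writable), never both


@dataclass
class ToyHypervisor:
    frames: dict                                      # frame number -> Frame
    page_tables: dict = field(default_factory=dict)   # (pt_frame, index) -> entry
    hypercalls: int = 0

    def mmu_update_batch(self, domid, updates):
        """Validate and apply a list of (pt_frame, index, target, writable)."""
        self.hypercalls += 1   # one guest-to-hypervisor transition per batch
        applied = 0
        for pt_frame, index, target, writable in updates:
            pt = self.frames.get(pt_frame)
            tgt = self.frames.get(target)
            if pt is None or tgt is None:
                continue       # unknown frame
            if pt.owner != domid or tgt.owner != domid:
                continue       # cannot touch another domain's memory
            if pt.ftype != "PT":
                continue       # entries may only live in page-table frames
            if writable and tgt.ftype == "PT":
                continue       # no writable mapping of a page table
            self.page_tables[(pt_frame, index)] = (target, writable)
            applied += 1
        return applied
```

Because a frame is either a page table or writable, the guest can read its own page tables but can only change them through the validated path, so the OS does not need to be trusted. Batching several updates into one call amortizes the cost of entering the hypervisor.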

“Porting” an OS to Xen • Modify OS to run in x86 ring 1 • Replace sensitive instructions with equivalents (or trap them) to avoid the overhead of binary translation: do the dynamic translation statically • Hypercalls transfer control directly from the guest OS to Xen • Paper describes Linux port: about 3,000 LoC added • WinXP port did not materialize: Politics? Technical difficulties?

Communication Interfaces • Xen runs virtual firewall-router • Domain0 sets rules for firewall • Performs NAT • Isolates traffic between domains • I/O rings for both transmit and receive • Outgoing packets sent in round robin order • Xen copies packet header, but not data - for safety. Why? • Guest provides page frame for each incoming packet - no copying
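The I/O rings mentioned on this slide can be pictured as a circular buffer of descriptors with separate producer and consumer indices for requests and responses. The Python sketch below is a simplified model, not Xen's ring layout: `IORing` and its method names are hypothetical, responses reuse the request slots, and responses are assumed to come back in order.

```python
class IORing:
    """Toy descriptor ring shared by a guest (front end) and Xen (back end)."""

    def __init__(self, size=8):
        self.size = size
        self.slots = [None] * size
        self.req_prod = 0   # advanced by the guest when it queues a request
        self.req_cons = 0   # advanced by Xen when it takes a request
        self.rsp_prod = 0   # advanced by Xen when it posts a response
        self.rsp_cons = 0   # advanced by the guest when it reaps a response

    def push_request(self, desc):
        # Full when every slot holds a request or an unreaped response.
        if self.req_prod - self.rsp_cons >= self.size:
            return False
        self.slots[self.req_prod % self.size] = desc
        self.req_prod += 1
        return True

    def service(self, handler):
        """Back end: consume pending requests, writing responses in place."""
        handled = 0
        while self.req_cons < self.req_prod:
            i = self.req_cons % self.size
            self.slots[i] = handler(self.slots[i])
            self.req_cons += 1
            self.rsp_prod += 1
            handled += 1
        return handled

    def pop_responses(self):
        out = []
        while self.rsp_cons < self.rsp_prod:
            out.append(self.slots[self.rsp_cons % self.size])
            self.rsp_cons += 1
        return out
```

Each side writes only its own indices, so the two can run without locking each other, and a guest can queue several descriptors before notifying the other side. In this model the descriptors are plain Python values; in the design on the slide they would refer to guest page frames so packet data need not be copied.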

Performance: Single App • Outperforms VMware on most user-mode and OS benchmarks. Was this a fair comparison? • Performance on user-mode benchmark applications nearly identical to native Linux. Is this surprising? • OS performance close to native, but page manipulation (e.g. mmap, page faults) still has high cost • Pathological benchmarks showed process isolation in native Linux not as strong as VM isolation in Xen • Signal handling in XenoLinux has lower latency than native! How could this happen?

XenoServers: An Application of Xen • Distributed platform for running untrusted code • Applications move between servers based on location, system load, cost, etc. • Virtual machine allows complex server configurations + isolation + accounting = Xen • Reed et al. “Xenoservers: Accountable Execution of Untrusted Programs”, HotOS ’99

Live VM Migration • Moving OS keeps kernel state • Moving entire OS removes (some) residual dependencies • Basic approach: • Reserve resources on new machine • Copy pages • Commit • Activate • Clark, et al. “Live Migration of Virtual Machines”, NSDI 2005

Live VM Migration • Iterative pre-copy • Copy all memory pages • Then, in each later round, copy the pages dirtied during the previous round • To finish, a stop-and-copy phase halts the OS and copies the final pages • 50-210ms downtime for various servers • Network? Disk? • Assumes the same LAN segment and network-attached storage
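The iterative pre-copy loop can be simulated in a few lines of Python. This is a sketch under loud assumptions: `precopy_migrate`, its parameters, and the dirtying model (pages dirtied in a round are a fixed fraction of the pages sent in that round) are invented for illustration and are not taken from Clark et al.

```python
import random


def precopy_migrate(num_pages, dirty_rate, max_rounds=30, stop_threshold=50, seed=0):
    """Simulate pre-copy; return (rounds, pages_sent_live, pages_sent_stopped)."""
    rng = random.Random(seed)
    to_send = set(range(num_pages))   # first round: every page
    sent_live = 0
    rounds = 0
    while rounds < max_rounds and len(to_send) > stop_threshold:
        rounds += 1
        sent_live += len(to_send)
        # Assumption: while this round was copying, the running guest dirtied
        # a number of pages proportional to how many pages the round sent.
        dirtied = min(int(len(to_send) * dirty_rate), num_pages)
        to_send = set(rng.sample(range(num_pages), dirtied))
    # Stop-and-copy: the guest is paused and the remaining pages are sent.
    return rounds, sent_live, len(to_send)
```

With 10,000 pages and a dirty rate of 0.2 the model sends 10,000, 2,000, 400, and 80 pages live, then pauses the guest to send the last 16. Downtime depends only on that final set. With a dirty rate of 1.0 or more the rounds never shrink, which is why the loop also needs a round limit.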

Future Xen Model (Intel VT Whitepaper) Native Drivers

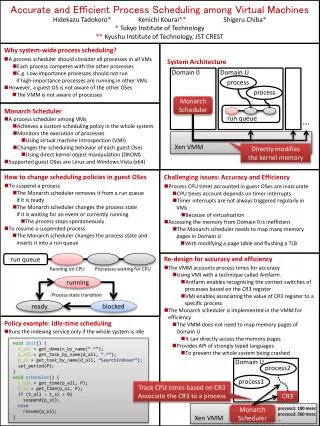

Xen with Intel VT • Intel Performance Analysis: • 40% of hypervisor time spent on paging • Shared-memory framebuffer provided 5-1000x speedup on X • PIC accesses through I/O ports caused VM exits when scheduling timers, so the PIC was pushed into the hypervisor

Xen with Intel VT (cont.) Is this a more fair comparison of VMs? What does it tell us about HW-assisted full virtualization versus paravirtualization?

Practical Questions? • When is it worthwhile to "port" an OS to Xen? Three years later there is still no XP port; Windows only works with added HW support. • Does Xen really isolate VMs? If I compromise the guest, have I compromised the host? • What does binary translation buy us? • Dynamic optimization • Higher overhead • May not work well in all situations or on many architectures

Philosophical Questions? • Should Xen be in the mainline kernel tree? • Do we need a standard VM API? • Does VirtualPC already use some amount of paravirtualization for Windows OSes?

Further Discussion Topics • Can performance isolation be achieved without paravirtualization? • Are evaluations convincing? • How does one measure a VMM?

Guest OS I/O Interface • I/O Port, I/O mmap, I/O channel partitioning • Virtual devices • Fast networking possible—inter-VM can be made very fast, but there is a problem… • May not have source for driver (e.g., nv Linux driver) • State issues • I/O Rings • Latency and throughput issues?

Guest OS I/O Interface (cont.) • Strive for zero-copy transfers • Block Device Accesses • Leverage OS/VM interaction to prioritize access. What about isolation? • Block caching schemes/block sharing • DMA issues with contiguous physical regions and pinned memory? IOMMUs?