OceanStore An Architecture for Global-scale Persistent Storage

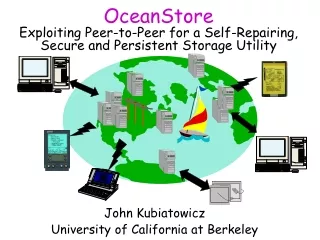

OceanStore An Architecture for Global-scale Persistent Storage. By John Kubiatowicz, David Bindel, Yan Chen, Steven Czerwinski, Patrick Eaton, Dennis Geels, Ramakrishna Gummadi, Sean Rhea, Hakim Weatherspoon, Westley Weimer, Chris Wells, and Ben Zhao http://oceanstore.cs.berkeley.edu.

OceanStore: An Architecture for Global-scale Persistent Storage. By John Kubiatowicz, David Bindel, Yan Chen, Steven Czerwinski, Patrick Eaton, Dennis Geels, Ramakrishna Gummadi, Sean Rhea, Hakim Weatherspoon, Westley Weimer, Chris Wells, and Ben Zhao (http://oceanstore.cs.berkeley.edu). Presented by Yongbo Wang, Hailing Yu

OceanStore Overview • A global-scale utility infrastructure • Internet-based, distributed storage system for information appliances such as computers, PDAs, cellular phones, … • Designed to support 10^10 users, each having 10^4 data files (over 10^14 files in total)

OceanStore Overview (cont) • Automatically recovers from server and network failures • Utilizes redundancy and client-side cryptographic techniques to protect data • Allows replicas of a data object to exist anywhere, at any time • Incorporates new resources • Adjusts to usage patterns

OceanStore • Two Unique design goals: • Ability to be constructed from an untrusted infrastructure • Servers may crash • Information can be stolen • Support of nomadic data • Data can be cached anywhere, anytime (promiscuous caching) • Data is separated from its physical location

Underlying Technologies • Naming • Access control • Data Location and Routing • Data Update • Deep Archival Storage • Introspection

Naming • Objects are identified by a globally unique identifier (GUID) • Different kinds of objects in OceanStore use different mechanisms to generate their GUIDs

Access Control • Reader Restriction • Encrypt the data that is not public • Distribute the encryption key to users having read permission • Writer Restriction • The owner of an object can decide an access control list (ACL) for the object • All writes are verified by well-behaved servers and clients based on the ACL.

Data Location and Routing • Provides necessary service to route messages to their destinations and to locate objects in the system • Works on top of IP

Data Location and Routing • Each object in the system is identified by a globally unique identifier, or GUID (a pseudo-random, fixed-length bit string) • An object's GUID is a secure hash of the object's contents • OceanStore uses the 160-bit SHA-1 hash, for which the probability that two out of 10^14 objects hash to the same value is approximately 1 in 10^20
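The content-hash naming and the collision estimate on this slide can be checked with a short sketch. It assumes the GUID is simply the SHA-1 digest of the raw bytes and uses the usual birthday-bound approximation p ≈ n^2 / (2 · 2^160); the function names `content_guid` and `collision_probability` are illustrative and do not come from OceanStore.

```python
import hashlib


def content_guid(data: bytes) -> str:
    """Return a 160-bit GUID as 40 hex characters (SHA-1 of the contents)."""
    return hashlib.sha1(data).hexdigest()


def collision_probability(num_objects: int, bits: int = 160) -> float:
    """Birthday-bound approximation: p is roughly n^2 / (2 * 2^bits)."""
    return num_objects ** 2 / (2 * 2 ** bits)


guid = content_guid(b"example object contents")
p = collision_probability(10 ** 14)
print(guid)
print(f"collision probability for 10^14 objects: about {p:.1e}")
```

Under this approximation the probability comes out at a few times 10^-21, which is consistent with the slide's "approximately 1 in 10^20".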

Data Location and Routing • In OceanStore system, entities that are accessed frequently are likely to reside close to where they are being used • Two-tiered approach: • First use a fast probabilistic algorithm • If necessary, use a slower but reliable hierarchical algorithm

Probabilistic algorithm • Each server has a set of neighbors, chosen from servers closest to it in network latency • A server associates with each neighbor a probability of finding each object in the system through that neighbor • This association is maintained in constant space using an attenuated Bloom filter

Bloom Filters • An efficient, lossy way of describing sets • A Bloom filter is a bit-vector of length w with a family of hash functions • Each hash function maps the elements of the represented set to an integer in [0,w) • To form a representation of a set, each set element is hashed and the bits in the vector corresponding to the hash functions’ results are set

Bloom Filters • To check if an element is in the set • The element is hashed • The corresponding bits in the filter are checked - If any of the bits is not set, the element is not in the set - If all bits are set, the element may be in the set • The element may not be in the set even if all of the hashed bits are set (false positive) • The false positive rate of a Bloom filter is a well-defined (non-linear) function of its width, the number of hash functions, and the cardinality of the represented set

A Bloom Filter: To check an object’s name against a Bloom filter summary, the name is hashed with n different hash functions (here, n=3) and bits corresponding to the result are checked
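The set-and-check procedure described on the Bloom filter slides can be sketched as follows. This is a minimal illustration, not OceanStore's implementation: the hash family (SHA-1 salted with the function index), the width of 64 bits, and the names `BloomFilter` and `approx_false_positive_rate` are all assumptions. The rate function uses the standard textbook approximation (1 - e^(-kn/w))^k, which the slides do not state explicitly.

```python
import hashlib
import math


class BloomFilter:
    """Bit-vector of length `width` probed by `num_hashes` hash functions."""

    def __init__(self, width: int, num_hashes: int = 3) -> None:
        self.width = width
        self.num_hashes = num_hashes
        self.bits = [False] * width

    def _positions(self, name: str):
        # Assumption: the hash family is SHA-1 salted with the function index.
        for i in range(self.num_hashes):
            digest = hashlib.sha1(f"{i}:{name}".encode("utf-8")).digest()
            yield int.from_bytes(digest, "big") % self.width

    def add(self, name: str) -> None:
        for pos in self._positions(name):
            self.bits[pos] = True

    def might_contain(self, name: str) -> bool:
        # False means "definitely absent"; True means "possibly present".
        return all(self.bits[pos] for pos in self._positions(name))


def approx_false_positive_rate(width: int, num_hashes: int, cardinality: int) -> float:
    """Textbook approximation (1 - e^(-k*n/w))^k, assuming uniform independent hashes."""
    return (1.0 - math.exp(-num_hashes * cardinality / width)) ** num_hashes


summary = BloomFilter(width=64, num_hashes=3)
summary.add("Uncle John's Band")
print(summary.might_contain("Uncle John's Band"))  # an added name always matches
print(approx_false_positive_rate(64, 3, 10))
```

A name that was added is always reported as possibly present; only absent names can produce a wrong answer, which is the false-positive case.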

Attenuated Bloom Filters • An attenuated Bloom filter of depth d is an array of d normal Bloom filters • For each neighbor link, an attenuated Bloom filter is kept • The kth Bloom filter in the array is the merger of all Bloom filters for all of the nodes k hops away through any path starting with that neighbor link

Attenuated Bloom filter for the outgoing link AB: in F_AB, the document “Uncle John’s Band” would map to potential value 1/4 + 1/8 = 3/8.

The Query Algorithm • The querying node examines the 1st level of each of its neighbors’ filters • If matches are found, the query is forwarded to the closest matching neighbor • If no filter matches, the querying node examines the next level of each filter, one level per step, and forwards the query as soon as a matching neighbor is found

The probabilistic query process: n1 is looking for object X, which is hashed to bits 0,1, and 3.
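The level-by-level neighbor selection in the query algorithm can be sketched as below. To stay short, each Bloom filter is modeled as the set of bit positions that are set, and the object is given as the bit positions it hashes to (0, 1, and 3, as in the figure caption). The neighbor names, filter contents, and latencies are made-up illustration values, and `choose_next_hop` is a hypothetical name.

```python
from typing import Dict, FrozenSet, List, Optional

# Index k-1 holds the merged filter for nodes k hops away through that neighbor.
AttenuatedFilter = List[FrozenSet[int]]


def choose_next_hop(
    object_bits: FrozenSet[int],
    filters: Dict[str, AttenuatedFilter],
    latency_ms: Dict[str, float],
) -> Optional[str]:
    """Return the closest neighbor whose shallowest matching level is lowest.

    Returns None when no level of any filter matches, in which case the caller
    would fall back to the deterministic algorithm.
    """
    depth = max((len(f) for f in filters.values()), default=0)
    for level in range(depth):
        matches = [
            name
            for name, levels in filters.items()
            if level < len(levels) and object_bits <= levels[level]
        ]
        if matches:
            return min(matches, key=lambda name: latency_ms[name])
    return None


object_x = frozenset({0, 1, 3})
neighbor_filters = {
    "n2": [frozenset({0, 2}), frozenset({0, 1, 3, 4})],
    "n3": [frozenset({1, 3}), frozenset({0, 1, 3})],
}
neighbor_latency = {"n2": 5.0, "n3": 9.0}
next_hop = choose_next_hop(object_x, neighbor_filters, neighbor_latency)
print(next_hop)
```

In this made-up example neither first-level filter contains all three bits, both second-level filters do, and the lower-latency neighbor is chosen.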

Probabilistic location and routing • A filter of depth d stores information about servers up to d hops from the server • If a query reaches a server d hops away from its source due to a false positive, it is not forwarded further • In this case, the probabilistic algorithm gives up and hands the query to the deterministic algorithm

Deterministic location and routing • Tapestry: OceanStore’s self-organizing routing and object location subsystem • IP overlay network with a distributed, fault-tolerant architecture • A query is routed from node to node until the location of a replica is discovered

Tapestry • A hierarchical distributed data structure • Every server is assigned a random and unique node-ID • The node-IDs are then used to construct a mesh of neighbor links

Tapestry • Every node is connected to other nodes via neighbor links of various levels • Level-1 edges connect to a set of nodes closest in network latency with different values in the lowest digit of their node-IDs • Level-2 edges connect to the closest nodes that match in the lowest digit and differ in the second digit, and so on

Tapestry • Each node has a neighbor map with multiple levels • For example, the 9th entry of the 4th level for node 325AE is the node closest to 325AE whose ID ends in 95AE • Messages are routed to the destination ID digit by digit • ***8 => **98 => *598 => 4598

Tapestry routing example: A potential path for a message originating at node 0325 destined for node 4598
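The digit-by-digit suffix resolution can be sketched as follows. This is a simplification: it chooses the next hop from a global list of node-IDs rather than from per-node neighbor maps ordered by latency, and the intermediate node-IDs B4F8, 9098, and 7598 are illustrative values that do not appear in this transcript. The function names are likewise hypothetical.

```python
from typing import List


def shared_suffix_len(a: str, b: str) -> int:
    """Number of trailing digits that two node-IDs have in common."""
    n = 0
    while n < min(len(a), len(b)) and a[-1 - n] == b[-1 - n]:
        n += 1
    return n


def hop_patterns(dest: str) -> List[str]:
    """Suffix patterns resolved at each hop, e.g. ***8, **98, *598, 4598."""
    return ["*" * (len(dest) - k) + dest[-k:] for k in range(1, len(dest) + 1)]


def route(source: str, dest: str, nodes: List[str]) -> List[str]:
    """Walk toward dest, matching at least one more suffix digit per hop."""
    path = [source]
    current = source
    while current != dest:
        level = shared_suffix_len(current, dest)
        better = [n for n in nodes if shared_suffix_len(n, dest) > level]
        if not better:
            raise LookupError(f"no node extends suffix match beyond {level} digits")
        # Simplification: take the smallest improvement; real routing would
        # consult the neighbor map and prefer the closest node in latency.
        current = min(better, key=lambda n: (shared_suffix_len(n, dest), n))
        path.append(current)
    return path


mesh = ["0325", "B4F8", "9098", "7598", "4598"]
patterns = hop_patterns("4598")
path = route("0325", "4598", mesh)
print(patterns)
print(path)
```

Each hop fixes one more low-order digit of the destination ID, so a route takes at most as many hops as the ID has digits.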

Tapestry • Each object is associated with a location root through a deterministic mapping function • To advertise an object o, the server s storing the object sends a publish message toward the object’s root, leaving location pointers at each hop

Tapestry routing example: To publish an object, the server storing the object sends a publish message toward the object’s root (e.g. node 4598), leaving location pointers at each node

Locating an object • To locate an object, a client sends a message toward the object’s root. When the message encounters a pointer, it routes directly to the object • Tapestry has been proven to route the request to an asymptotically optimal node (in terms of shortest-path network distance) containing a replica

Tapestry routing example: To locate an object, node 0325 sends a message toward the object’s root (e.g. node 4598)
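Publishing and locating through location pointers can be sketched as below. The sketch takes the overlay paths toward the root as given lists instead of computing them, and the class name `LocationMesh`, the object name, the server ID 39AA, and the intermediate node-IDs are all hypothetical illustration values.

```python
from typing import Dict, List, Optional, Tuple


class LocationMesh:
    """Per-node location pointers: node-ID -> {object GUID -> storing server}."""

    def __init__(self) -> None:
        self.pointers: Dict[str, Dict[str, str]] = {}

    def publish(self, guid: str, server: str, path_to_root: List[str]) -> None:
        # Leave a pointer to the storing server at every hop toward the root.
        for node in path_to_root:
            self.pointers.setdefault(node, {})[guid] = server

    def locate(self, guid: str, path_to_root: List[str]) -> Optional[Tuple[str, str]]:
        # Walk toward the root; stop at the first node that holds a pointer.
        for node in path_to_root:
            server = self.pointers.get(node, {}).get(guid)
            if server is not None:
                return server, node
        return None


mesh = LocationMesh()
# Hypothetical path from the storing server 39AA to the object's root 4598.
mesh.publish("object-o", "39AA", ["39AA", "1D98", "7598", "4598"])
# Hypothetical path from client 0325 toward the same root.
found = mesh.locate("object-o", ["0325", "B4F8", "9098", "7598", "4598"])
print(found)
```

In this example the client's path first meets the publish path at node 7598, so the query is redirected to the storing server before it reaches the root.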

Data Location and Routing • Fault tolerance: • Tapestry uses redundant neighbor pointers when it detects a primary route failure • Uses periodic UDP probes to check link conditions • Tapestry deterministically chooses multiple root nodes for each object

Data Location and Routing • Automatic repair: • Node insertions: • A new node needs the address of at least one existing node • It then starts advertising its services and the roles it can assume to the system through the existing node • Exiting nodes: • If possible, the exiting node runs a shutdown script to inform the system • In any case, neighbors will detect its absence and update routing tables accordingly

Updates • Updates are made by clients and all updates are logged • OceanStore allows concurrent updates • Serializing updates: • Since the infrastructure is untrusted, using a single master replica will not work • Instead, a group of servers called the inner ring is responsible for choosing the final commit order

Update commitment • The inner ring is a group of servers working on behalf of an object • It consists of a small number of highly connected servers • Each object has an inner ring, which can be located through Tapestry

Inner ring • An object’s inner ring: • Generates new versions of an object from client updates • Generates encoded, archival fragments and distributes them • Provides a mapping from the active GUID to the GUID of the most recent version of the object • Verifies a data object’s legitimate writers • Maintains an update history, providing an undo mechanism

Update commitment • Each inner ring makes its decisions through a Byzantine agreement protocol • Byzantine agreement lets a group of 3n+1 servers reach agreement whenever no more than n of them are faulty
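The 3n+1 bound translates into simple arithmetic for sizing an inner ring. The helper names below are illustrative only, and the ring sizes are example values.

```python
def max_faulty(ring_size: int) -> int:
    """Largest number of faulty servers a ring of this size can tolerate."""
    return (ring_size - 1) // 3


def min_ring_size(faulty: int) -> int:
    """Smallest ring that tolerates the given number of faulty servers."""
    return 3 * faulty + 1


for size in (4, 7, 10):
    print(size, "servers tolerate", max_faulty(size), "faulty")
```

A ring of 4 tolerates 1 faulty server and a ring of 7 tolerates 2; growing a ring from 4 to 6 servers adds no tolerance, because the bound only steps up at sizes of the form 3n+1.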

Update commitment • Other nodes containing the data of that object are called secondary nodes • They do not participate in serialization protocol • They are organized into one or more multicast trees (dissemination trees)

Path of an update: • After generating an update, a client sends it directly to the object’s inner ring • While the inner ring performs a Byzantine agreement to commit the update, secondary nodes propagate the update among themselves • The result of the update is multicast down the dissemination tree to all secondary nodes

Cost of an update in bytes sent across the network, normalized to minimum cost needed to send the update to each of the replicas

Update commitment • Fault tolerance: • Guarantees fault tolerance if fewer than one third of the servers in the inner ring are malicious • Secondary nodes do not participate in the Byzantine protocol, but receive consistency information

Update commitment • Automatic repair: • Servers of the inner ring can be changed without affecting the rest of the system • Servers participating in the inner ring are altered continuously to maintain the Byzantine assumption

Deep Archival Storage • Each object is treated as a series of m fragments, which are then transformed into n coded fragments, where n > m • The transformation uses Reed-Solomon encoding • Any m of the n coded fragments are sufficient to reconstruct the original data • Rate of encoding: r = m/n • Storage overhead = 1/r = n/m
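The "any m of n" property can be demonstrated with a small Reed-Solomon-style code. This is a teaching sketch, not the codec OceanStore uses: it works over the prime field of integers mod 257 instead of the GF(2^8) arithmetic typical of production codecs, so coded values may be 256 and do not always fit in a byte. The parameters m = 4 and n = 8 and the function names are illustrative choices.

```python
from typing import List, Tuple

P = 257  # prime modulus, large enough to hold any byte value 0..255

Fragment = Tuple[int, int]  # (evaluation point x, polynomial value at x)


def _interpolate_at(points: List[Fragment], x: int) -> int:
    """Evaluate at x the unique polynomial of degree < len(points) through points."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # Divide by den using Fermat's little theorem (P is prime).
        total = (total + yi * num * pow(den, P - 2, P)) % P
    return total


def encode(data: List[int], n: int) -> List[Fragment]:
    """Turn m data symbols into n fragments; the first m carry the data itself."""
    points = list(enumerate(data))
    return [(x, _interpolate_at(points, x)) for x in range(n)]


def decode(fragments: List[Fragment], m: int) -> List[int]:
    """Rebuild the m data symbols from any m distinct fragments."""
    points = fragments[:m]
    return [_interpolate_at(points, x) for x in range(m)]


data = [79, 99, 101, 97]            # m = 4 symbols
fragments = encode(data, 8)         # n = 8 fragments, rate r = 4/8
survivors = [fragments[1], fragments[3], fragments[6], fragments[7]]
recovered = decode(survivors, 4)
rate = len(data) / len(fragments)
print(recovered, "rate:", rate, "overhead:", 1 / rate)
```

With m = 4 and n = 8 the rate is 1/2 and the storage overhead is 2, and the data survives the loss of any four fragments.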

Introspection • It is impossible to manually administer millions of servers and objects • OceanStore contains introspection tools • Event monitoring • Event analysis • Self-adaptation

Introspection • Introspective modules on servers observe network traffic and measure local traffic • They automatically create, replace, and remove replicas in response to objects’ usage patterns