Intent Mining from Search Results

Intent Mining from Search Results Jan Pedersen

Outline
• Intro to Web Search
  • Free text queries
  • Architecture
  • Why it works
• Result Set Mining
  • Disambiguation
  • Correction
  • Amplification

The Worst Interface (ca. 1990)
The Search Interface (ca. 2010)

Search wasn’t always like this
ttl/(tennis and (racquet or racket))
isd/1/8/2002 and motorcycle
in/newmar-julie
Source: USPTO

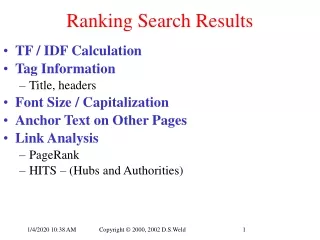

Salton’s Contribution
• Free text queries
• Approximate matching
• Relevance ranking
• Exploit redundancy
• Metadata
• Scored-OR
Source: cs.cornell.edu

Life of a query
User query: Gerry Salton
Backend query sent to the index: (Scored-OR 10, ([(“Gerry” or “Gerald”),0.3], [“Salton”,0.7]))
• Separation between user query and backend query
• Relevance scoring and ranking
• Query-in-context summaries
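The backend query on this slide can be read as a weighted OR over term groups. The sketch below is one possible interpretation, not the system's actual scorer: it assumes a document earns a group's weight once if it contains any alternative in that group, and that the first argument (10) is the number of results to return. The toy `INDEX` and the function name `scored_or` are hypothetical.

```python
from collections import defaultdict

def scored_or(index, weighted_groups, k):
    """Return the top-k (doc_id, score) pairs for a weighted OR query.

    weighted_groups is a list of (alternatives, weight). A document earns
    `weight` once per group if it contains at least one alternative.
    """
    scores = defaultdict(float)
    for alternatives, weight in weighted_groups:
        matched = set()
        for term in alternatives:
            matched |= index.get(term, set())
        for doc_id in matched:
            scores[doc_id] += weight
    # Highest score first; ties broken by doc id for a stable order.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:k]

# Hypothetical inverted index: term -> set of document ids.
INDEX = {
    "gerry": {1, 2},
    "gerald": {3},
    "salton": {1, 3, 4},
}

# (Scored-OR 10, ([("Gerry" or "Gerald"), 0.3], ["Salton", 0.7]))
BACKEND_QUERY = [(("gerry", "gerald"), 0.3), (("salton",), 0.7)]
TOP = scored_or(INDEX, BACKEND_QUERY, 10)
RANKED_IDS = [doc_id for doc_id, _ in TOP]
print(RANKED_IDS)
```

Documents 1 and 3 match both groups and rank first, document 4 matches only “Salton”, and document 2 matches only the first-name group, which illustrates approximate matching: no document is excluded for missing a term, it is only scored lower.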

Query Expansion
• [Gerry Salton] → [Gerry Salton Cornell]
• Disambiguation via Expansion
• Pseudo Relevance Feedback (Evans)
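Pseudo relevance feedback treats the top results of the original query as if they were relevant and borrows their most characteristic terms. The sketch below is a deliberately simple version that assumes whitespace tokenization and picks expansion terms by how many top documents contain them; the documents and the function name `expand_query` are made up for illustration.

```python
from collections import Counter

def expand_query(query_terms, top_documents, num_new_terms=1):
    """Append the terms that occur in the most top-ranked documents."""
    query_set = set(query_terms)
    counts = Counter()
    for text in top_documents:
        # Count each term once per document (frequency within the result set).
        for term in set(text.lower().split()):
            if term not in query_set:
                counts[term] += 1
    # Most widely shared term first; ties broken alphabetically.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return list(query_terms) + [term for term, _ in ranked[:num_new_terms]]

# Hypothetical top results for the query [gerry salton].
TOP_DOCS = [
    "gerry salton cornell information retrieval",
    "salton professor cornell university",
    "gerry salton smart system cornell",
]
EXPANDED = expand_query(["gerry", "salton"], TOP_DOCS)
print(EXPANDED)
```

Here “cornell” is the only non-query term shared by all three documents, so the query becomes [gerry salton cornell]. A production system would weight terms more carefully (for example, against their frequency in the whole collection), which this sketch omits.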

Life of a query (2)
User query: Gerry Salton
Backend query sent to the index: (Scored-OR 10, ([(“Gerry” or “Gerald”),0.3], [“Salton”,0.7]))
Query expansion: Gerry Salton → Gerry Salton Cornell
• Result Set Analysis
• Automated Query expansion
• Reranking

Spelling Correction
Scored-AND(200, OR(“britinay”, “britney”), OR(“spares”, “spears”))
Blend(Scored-AND(200, “britinay”, “spares”), Scored-AND(200, “britney”, “spears”))
• Session Log Mining
• Multiple queries with Blending
• Behavioral feedback loop
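The Blend form runs the original and the corrected query separately and merges their result lists. The sketch below shows one way such a merge could work, assuming each query carries a confidence weight (which, per the slide, might be derived from session logs) and that a document keeps its best weighted score. The weights, scores, and document names are invented for illustration.

```python
def blend(weighted_result_lists, k):
    """Merge result lists from several queries into one top-k ranking.

    weighted_result_lists is a list of (query_weight, results), where
    results is a list of (doc_id, score). A document that appears in
    more than one list keeps its highest weighted score.
    """
    best = {}
    for query_weight, results in weighted_result_lists:
        for doc_id, score in results:
            weighted = query_weight * score
            if weighted > best.get(doc_id, float("-inf")):
                best[doc_id] = weighted
    ranked = sorted(best.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:k]

# Hypothetical results for the query as typed and for its correction.
AS_TYPED = [("britinay-spares-page", 0.9), ("fan-forum", 0.4)]
CORRECTED = [("britney-spears-official", 0.95), ("fan-forum", 0.8)]

# Assumed confidences: the correction is believed to be right 80% of the time.
BLENDED = blend([(0.2, AS_TYPED), (0.8, CORRECTED)], 200)
BLENDED_IDS = [doc_id for doc_id, _ in BLENDED]
print(BLENDED_IDS)
```

Results for the corrected query dominate, but the best result for the query as typed still survives at the bottom of the list, so a user who really meant “britinay spares” can still click it. Those clicks are the kind of signal a behavioral feedback loop could use to adjust the weights.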

Web Search
Query: Gerry Salton
• Speller
• Synonyms
Backend query: (Scored-AND 200, “Gerry”, “Salton”)
First Stage reRanking: 100K
Second Stage reRanking: 5K
Third Stage reRanking: 50
Indexes: News Index, Local Index, Index (100B)
• Query Understanding
• Federation
• ReRanking and Blending
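The three reranking stages (100K, then 5K, then 50) suggest a cascade in which each stage applies a more expensive scorer to fewer candidates. The sketch below illustrates that general pattern on a toy scale; the scorers, the feature tuples, and the name `cascade_rerank` are assumptions, not details from the slides.

```python
def cascade_rerank(candidates, stages):
    """Apply (score_function, keep) stages in order.

    Each stage re-sorts the surviving candidates by its own score and
    keeps only the top `keep`, so later (costlier) scorers see fewer items.
    """
    survivors = list(candidates)
    for score, keep in stages:
        survivors = sorted(survivors, key=score, reverse=True)[:keep]
    return survivors

# Hypothetical candidates: (doc_id, cheap_score, medium_score, costly_score).
DOCS = [
    ("a", 5, 1, 9),
    ("b", 4, 8, 2),
    ("c", 3, 7, 7),
    ("d", 2, 9, 1),
    ("e", 1, 9, 9),
]

STAGES = [
    (lambda doc: doc[1], 4),  # first stage: cheap score, keep 4
    (lambda doc: doc[2], 3),  # second stage: medium-cost score, keep 3
    (lambda doc: doc[3], 2),  # third stage: costly score, keep 2
]

FINAL_IDS = [doc[0] for doc in cascade_rerank(DOCS, STAGES)]
print(FINAL_IDS)
```

Document “e” would have scored well in the later stages but is cut by the cheap first stage. That is the basic trade-off of a cascade: early stages must be cheap enough to run over a huge candidate set, yet accurate enough not to discard documents the later stages would have preferred.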

Entity Detection
• Grouping
• Summarization

Post Result Triggering
• Alternative to Answer Blending
• Structured Data integration
• Off-page data joins

Grouping
• Reranked Results
• Compressed Presentation
• Coherently grouped
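Grouping can be sketched as bucketing an already ranked result list by some label while preserving rank order, so that each group appears where its best member ranked. The example below assumes every result already carries a label (for instance, from an entity detection step); the URLs, labels, and the function name `group_results` are hypothetical.

```python
def group_results(ranked_results, key):
    """Bucket ranked results by key(result), preserving rank order.

    Groups are returned in order of their best-ranked member, and results
    inside each group stay in their original relative order.
    """
    groups = {}  # dicts preserve insertion order in Python 3.7+
    for result in ranked_results:
        groups.setdefault(key(result), []).append(result)
    return list(groups.items())

# Hypothetical ranked results for an ambiguous query: (url, label).
RESULTS = [
    ("univ.example/salton", "computer scientist"),
    ("travel.example/salton-sea", "lake"),
    ("encyclopedia.example/gerard-salton", "computer scientist"),
    ("shop.example/salton-appliances", "brand"),
]

GROUPED = group_results(RESULTS, key=lambda result: result[1])
GROUP_LABELS = [label for label, _ in GROUPED]
print(GROUP_LABELS)
```

Four results collapse into three groups, one per reading of the query, which is one way to get both a compressed presentation and coherent grouping from a single reranked list.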

Summary
• Web Queries are not User Intent
  • Suffer from ambiguity and errors
• Intent can be mined from results
  • Query Correction
  • Disambiguation
  • Grouping and Organization