Quadratic Forms and Steepest Descent in Computational Physics

This lecture focuses on quadratic forms and their minimization using matrix methods in computational physics. It discusses the representation of a quadratic function as (f(x) = frac{1}{2} x^T A x + b^T x + c), where (A) is symmetric and positive definite. The steepest descent method is introduced as a means to find the minimum, along with the concept of conjugate directions to enhance convergence rates. Sample problems demonstrate the application of these concepts, including the use of line searches and multivariable Newton's method.

Quadratic Forms and Steepest Descent in Computational Physics

E N D

Presentation Transcript

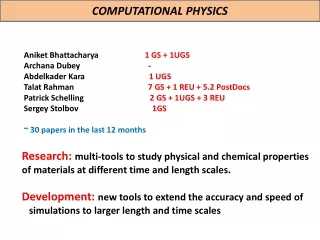

Computational Physics(Lecture 7) PHY4370

Quadratic form • A quadratic form is simply a scalar, quadratic function of a vector with the form • A is the matrix, x and b are vectors, c is a scalar constant. If A is symmetric and positive definite, f(x) is minimized by the solution to Ax=b.

Sample Problem: • c= 0

The gradient • ,…,=-b. • If A is symmetric, the equation becomes: Ax-b.

Revisit of Steepest descent • We start from an arbitrary starting point: x(0), • Slide down to the bottom of the paraboloid. • When we take the step, we choose the direction in which f decreases most quickly. • -f’(x(i))=b-Ax(i). • Error: e(i) = x(i)-x => how far from the solution. • Residual: r(i) = b-Ax(i) => how far from the correct value of b.

Suppose we start from (-2, -2). Along the steepest descent, will fall somewhere on the solid line. • X(1) = x(0)+αr(0) • How big the step we should take? • Line search • Choose α to minimize f. df(x(1))/dα=0, • So, f’(x(1))Tr(0)=0 • So α is chosen to make r(0) and f’(x(1)) orthogonal!

Determine α • F(x(1))= -r(1) • r(1)Tr(0) = 0 • (b-Ax(1))Tr(0) = 0 • (b-A(x(0)+αr(0)))Tr(0) = 0 • (b-Ax(0))Tr(0) = α(Ar(0))Tr(0) • r(0) Tr(0)=αr(0)T(Ar(0)) • α =(r(0) Tr(0))/(r(0)TAr(0))

Conjugate directions • SD often takes steps in the same direction! • If we take n orthogonal steps, each step has the correct length, afte n steps, we are done! • In general, for each step, we have • x(i+1)=x(i)+α(i)d(i) • e(i+1) should be orthogonal to d(i) • d(i)Te(i+1) = 0 • d(i)T (e(i)+ α(i)d(i))=0 • α(i)=-d(i) Te(i)/(d(i) Td(i))

Useless! We don’t know e(i). • Instead, we make d(i) and d(j) A-orthogonal, or conjugate. • d(i)TAd(j) = 0

New requirement: • e(i+1) is A-orthogonal to d(i) • Like SD, we need to find the minimum along d(i)

Conjugate Gradients • Just the Conjugate directions method where the search directions are constructed by the conjugation of the residuals. • ui=r(i)

Revised Algorithm using line minimization for nonlinear functions

Multi variable Newton method • f(x) = 0, (f = ( f1, f2, . . . , fn) and x = (x1, x2, . . . , xn).) • Taylor expansion at Xr: • f(xr) = f(x) + x ·∇f(x) + O(x2) ≃0, • AΔx = b, • Aij= ∂ fi(x)/∂xj • Bi=-fi • For Newtonian method: • Xk+1 = xk+ Δxk • Secant Method • Aij= (fi(x + hj<xj>) − fi(x))/hj • hj≃δ0 xj • δ0 isthe tolerance of the floating point of data. • f1(x, y) = exp(x^2) lny − x^2 = 0 and f2(x, y) = exp(y2) lnx − y^2 = 0, around x = y = 1.5.

// An example of searching for the root via the secant method // for f_i[x_k] for i=1,2,...,n. import java.lang.*; public class Rootm { public static void main(String argv[]) { int n = 2; intni = 10; double del = 1e-6; double x[] = {1.5, 1.5}; secantm(ni, x, del); // Output the root obtained System.out.println("The root is at x = " + x[0] + "; y = " + x[1]); } // Method to carry out the multivariable secant search. public static void secantm(intni, double x[], double del) { int n = x.length; double h = 2e-5; int index[] = new int[n]; double a[][] = new double[n][n]; int k = 0; double dx = 0.1; while ((Math.abs(dx)>del) && (k<ni)) { double b[] = f(x); for (int i=0; i<n; ++i) { for (int j=0; j<n; ++j) { double hx = x[j]*h; x[j] += hx; double c[] = f(x); a[i][j] = (c[i]-b[i])/hx; } } dx = Math.sqrt(dx/n); k++; } if (k==n) System.out.println("Convergence not" + " found after " + ni + " iterations"); } public static double[] solve(double a[][], double b[], int index[]) {...} public static void gaussian(double a[][], int index[]) {...} // Method to provide function f_i[x_k]. public static double[] f(double x[]) { double fx[] = new double[2]; fx[0] = Math.exp(x[0]*x[0])*Math.log(x[1]) -x[0]*x[0]; fx[1] = Math.exp(x[1])*Math.log(x[0])-x[1]*x[1]; return fx; } }

Extremes of a multi variable function, BFBG • f(x) = ∇g(x) = 0,