Resource Management in Data-Intensive Systems

This presentation surveys resource management in data-intensive computing environments. It covers the main challenges from the user and provider perspectives: application performance, QoS requirements, and the need for metering tools. It discusses allocating resources to meet service level agreements while accounting for network bandwidth, memory, and CPU, and it examines virtualization and intelligent data placement as ways to improve resource utilization and reliability for scientific applications.



Resource Management in Data-Intensive Systems
Bernie Acs, Magda Balazinska, John Ford, Karthik Kambatla, Alex Labrinidis, Carlos Maltzahn, Rami Melhem, Paul Nowoczynski, Matthew Woitaszek, Mazin Yousif

Resource Utilization Problem
• Resource Management Perspectives
  • User:
    • Application performance, cost
    • QoS (deadlines for interactivity)
    • Need metering tools, job description language (e.g. JDL - developed in grid computing)
  • Provider:
    • Power, physical space
    • Network bandwidth, memory, CPU power
    • Disk I/O, space
    • Cost of metering

Resource Utilization Problem (cont’d)
• Overall Management Goals of Provider
  • Most efficient allocation of resources to meet service level agreements
  • Pricing model that drives users towards more efficient/predictable usage
  • Maintain a certain envelope of resource utilization
• Difference from conventional supercomputing centers:
  • Not only cores but network bandwidth, memory, disk
  • Scheduling preference based on data locality
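The last two points (more resource dimensions than cores, and a scheduling preference for data locality) can be illustrated with a minimal sketch. Everything here is hypothetical and not from the slides: the `Node` and `Job` types, the field names, and the tie-breaking rule are assumptions chosen only to show the idea.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_cores: int
    free_mem_gb: float
    datasets: set = field(default_factory=set)  # datasets stored locally


@dataclass
class Job:
    name: str
    cores: int
    mem_gb: float
    dataset: str  # the dataset this job reads


def pick_node(job, nodes):
    """Return the preferred node for `job`, or None if nothing has capacity."""
    # Capacity check covers more than cores: memory must fit as well.
    candidates = [
        n for n in nodes
        if n.free_cores >= job.cores and n.free_mem_gb >= job.mem_gb
    ]
    if not candidates:
        return None
    # Prefer nodes that already hold the job's data; break ties by free cores.
    return max(candidates, key=lambda n: (job.dataset in n.datasets, n.free_cores))


nodes = [
    Node("n1", free_cores=16, free_mem_gb=64.0, datasets={"sky_survey"}),
    Node("n2", free_cores=32, free_mem_gb=128.0, datasets={"genomes"}),
]
chosen = pick_node(Job("align", cores=8, mem_gb=32.0, dataset="genomes"), nodes)
```

A real scheduler would also weigh network bandwidth and disk I/O, which the slide lists as first-class resources; they are left out here to keep the sketch short.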

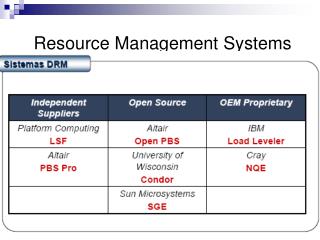

Common Challenges
• What should be guaranteed?
  • Example: SimpleDB returns whatever can be retrieved in 5s. Not applicable for science applications
  • Network bandwidth, storage throughput
• Management of Resources: Hardware
  • 3-4 year cycle, 20%/year
  • Resource discovery
  • Mapping optimized to user demand:
    • Upgrade based mapping history
    • Requires workload profiles -> elastic clustering, virtualization essential, application servers
• Management of Resources: Centralized Services/Software
  • Big databases
  • Visualization
  • Virtualization: as a packaging and delivery service (testing/staging environment)
  • Licensing
  • Applications (Hadoop, R, …)
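The SimpleDB example describes a time-bounded guarantee: return whatever was retrieved within a fixed budget. The sketch below is a generic illustration of that behavior, not SimpleDB's API; the function name `bounded_scan`, the partition layout, and the injectable `clock` parameter are all assumptions. Returning a completeness flag shows why the guarantee fits poorly with science applications, which usually cannot accept a silently truncated result.

```python
import time


def bounded_scan(partitions, predicate, budget_s, clock=time.monotonic):
    """Scan partitions until finished or until the time budget runs out.

    Returns (rows, complete). `complete` is False when at least one
    partition was skipped because the deadline had passed.
    """
    deadline = clock() + budget_s
    rows = []
    for part in partitions:
        # Check the deadline before starting each partition.
        if clock() >= deadline:
            return rows, False
        rows.extend(r for r in part if predicate(r))
    return rows, True


# Hypothetical data: three partitions of integer records.
partitions = [[3, 8, 1], [9, 4], [7, 6]]
rows, complete = bounded_scan(partitions, lambda r: r > 5, budget_s=5.0)
if not complete:
    print("partial result; a science workflow would likely retry or fail here")
```

The deadline is only checked between partitions, so a single slow partition can still overrun the budget; a stricter version would need cancellation inside the scan.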

Hard Problems
• Failure & Recovery Resource Management
  • Cannot prevent, but estimate, over-provisioning
  • What level of failure protection is adequate?
  • Creeping failures
  • Real-time triage: extra cost -> often sampling only
  • Possible benefit: smaller set of libraries/apps
  • Two-tier approach?
  • Combined with security and other safety mechanisms
• Interactivity (Paradigm shift for batch environment)
  • Def: want to see what is happening right now, or in regular intervals
  • Intelligent placement of data
  • Reserve resources -> over-provisioning/waste
  • Different scheduling time scale: seconds to minutes vs ms
• SLAs for DIC workloads
  • Incorporating Power
  • Framework of SLAs for Science different than for commercial
  • Not clear whether that’s an agreement or optimization thing
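The point that failures cannot be prevented but over-provisioning can be estimated can be made concrete with a small calculation. The sketch below assumes nodes fail independently with the same probability during a job's run, which is a simplifying assumption of this example and not a claim from the slides (creeping failures, for one, would violate it). The function names and parameters are hypothetical.

```python
from math import comb


def prob_at_least(n, k, p_fail):
    """P(at least k of n nodes survive), assuming independent failures."""
    p_ok = 1.0 - p_fail
    return sum(comb(n, i) * p_ok**i * p_fail**(n - i) for i in range(k, n + 1))


def nodes_to_provision(k, p_fail, target):
    """Smallest n such that at least k nodes survive with probability >= target."""
    if not (0.0 <= p_fail < 1.0) or not (0.0 < target < 1.0):
        raise ValueError("need 0 <= p_fail < 1 and 0 < target < 1")
    n = k
    # Add spare nodes one at a time until the survival target is met.
    while prob_at_least(n, k, p_fail) < target:
        n += 1
    return n


# Hypothetical numbers: a job needs 100 nodes, each fails with probability 2%.
spares = nodes_to_provision(100, 0.02, 0.999) - 100
```

The number of spares is the over-provisioning cost of a given protection level, which is one way to frame the question of what level of failure protection is adequate.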

Hard Problems (cont’d)
• Provisioning Framework
  • DIC application -> what resources am I going to need?
  • Hadoop-friendly science applications
  • DIC framework configuration to adapt to user & HW profiles
• Performance Management
  • Granularity of Prediction (if predictable)
  • Co-location of workloads for efficiency
  • Real-time end-to-end scheduling (sometimes too costly)
  • Metrics, instrumentation
  • Black box vs grey vs transparent box alternatives
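Co-location of workloads for efficiency can be sketched as a multi-dimensional packing problem: workloads with complementary demands (one CPU-heavy, one memory-heavy, one network-heavy) can share a host. The sketch below uses a first-fit decreasing heuristic, which is a technique chosen for this illustration and not one named in the slides; the resource names and the `colocate` function are hypothetical.

```python
def colocate(workloads, capacity):
    """Place workloads on identical hosts with first-fit decreasing.

    `workloads` maps a name to its demand per resource dimension;
    `capacity` gives the per-host limit for the same dimensions.
    Returns a list of hosts, each a list of workload names.
    """
    hosts = []  # each host: {"used": {dim: amount}, "jobs": [names]}
    # Place the most demanding workloads first (largest normalized demand).
    order = sorted(
        workloads.items(),
        key=lambda kv: max(kv[1][d] / capacity[d] for d in capacity),
        reverse=True,
    )
    for name, demand in order:
        if any(demand[d] > capacity[d] for d in capacity):
            raise ValueError(f"{name} does not fit on any single host")
        for host in hosts:
            # A host qualifies only if every dimension still has room.
            if all(host["used"][d] + demand[d] <= capacity[d] for d in capacity):
                break
        else:
            host = {"used": dict.fromkeys(capacity, 0.0), "jobs": []}
            hosts.append(host)
        for d in capacity:
            host["used"][d] += demand[d]
        host["jobs"].append(name)
    return [h["jobs"] for h in hosts]


capacity = {"cpu": 16, "mem_gb": 64, "net_gbps": 10}
workloads = {
    "a": {"cpu": 12, "mem_gb": 8, "net_gbps": 1},   # CPU-heavy
    "b": {"cpu": 2, "mem_gb": 48, "net_gbps": 1},   # memory-heavy
    "c": {"cpu": 2, "mem_gb": 8, "net_gbps": 8},    # network-heavy
    "d": {"cpu": 12, "mem_gb": 8, "net_gbps": 1},   # CPU-heavy
}
placement = colocate(workloads, capacity)
```

This ignores interference between co-located workloads, which is where the slide's points on metrics, instrumentation, and prediction granularity come in.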