Efficient Binary Translation In Co-Designed Virtual Machines

Feb. 28th, 2006 -- Shiliang Hu

Outline
• Introduction
• The x86vm Infrastructure
• Efficient Dynamic Binary Translation
  • DBT Modeling & Translation Strategy
  • Efficient DBT for the x86 (SW)
  • Hardware Assists for DBT (HW)
• A Co-Designed x86 Processor
• Conclusions
Thesis Defense, Feb. 2006

Conflicting Trends: The Binary Compatibility Dilemma
• Two fundamentals for computer architects:
  • Computer applications: ever-expanding.
    • Software development is expensive.
    • Software porting is also costly.
    • Standard software binary distribution format(s).
  • Implementation technology: ever-evolving.
    • Silicon technology has been evolving rapidly (Moore's Law).
    • Trend: evolving technology continually calls for ISA innovation.
• Dilemma: binary compatibility.
• Cause: the software binary distribution format is coupled to the hardware/software interface.

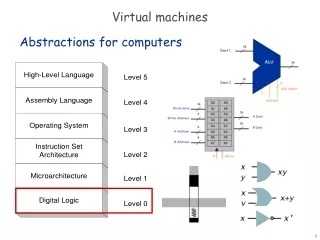

Solution: Dynamic ISA Mapping
[Figure: conventional HW design (decoders → pipeline) vs. the VM paradigm (software binary translator → code cache → pipeline). Layers: software in the architected ISA (OS, drivers, library code, apps); architected ISA (e.g. x86); dynamic translation; implementation ISA (e.g. fusible ISA); HW implementation (processors, memory system, I/O devices).]
• ISA mapping:
  • Hardware-intensive translation: good for startup performance.
  • Software dynamic optimization: good for hotspots.
• Can we combine the advantages of both?
  • Startup: fast, hardware-intensive translation.
  • Steady state: intelligent translation/optimization for hotspots.

Key: Efficient Binary Translation
[Figure: Startup curves for Windows workloads. Cumulative x86 IPC (normalized, 0 to 1.1) vs. time in cycles (log scale, 1 to 100,000,000), with the finish point marked; curves: Ref: Superscalar; VM: Interp & SBT; VM: BBT & SBT; VM: steady state.]

Issue: Bad-Case Scenarios
• Short-running and fine-grain cooperating tasks
  • Performance lost to slow startup cannot be recovered before the tasks end.
• Real-time applications
  • Real-time constraints can be violated by a slow translation process.
• Multi-tasking, server-like applications
  • Frequent context switches between tasks competing for resources.
  • A limited code cache size causes re-translation when tasks are switched in and out.
• OS boot-up and shutdown (client and mobile platforms)

Related Work: State-of-the-Art VMs
• Pioneer: IBM System/38, AS/400
• Products: Transmeta x86 processors
  • Code Morphing Software + VLIW engine.
  • Crusoe, Efficeon.
• Research projects: IBM DAISY, BOA
  • Full-system translator + VLIW engine.
  • DBT overhead: 4000+ PowerPC instructions per translated instruction.
• Other research projects: DBT for ILDP (H. Kim & JES).
• Dynamic binary translation / optimization
  • SW-based (often user mode only): UQBT, Dynamo (RIO), IA-32 EL; Java and .NET HLL VM runtime systems.
  • HW-based: trace cache fill units, rePLay, PARROT, etc.

Thesis Contributions
• Efficient dynamic binary translation (DBT)
  • DBT runtime overhead modeling and translation strategy.
  • Efficient software translation algorithms.
  • Simple hardware accelerators for DBT.
• Macro-op execution μ-arch (w/ Kim, Lipasti)
  • Higher IPC and higher clock speed potential.
  • Reduced complexity at critical pipeline stages.
• An integrated co-designed x86 virtual machine
  • Superior steady-state performance.
  • Competitive startup performance.
  • Complexity-effective and power-efficient.

Outline
• Introduction
• The x86vm Infrastructure
• Efficient Dynamic Binary Translation
  • DBT Modeling & Translation Strategy
  • Efficient DBT for the x86 (SW)
  • Hardware Assists for DBT (HW)
• A Co-Designed x86 Processor
• Conclusions

The x86vm Framework
• Goal: an experimental research infrastructure to explore the co-designed x86 VM paradigm.
• Our co-designed x86 virtual machine design:
  • Software components: VMM.
  • Hardware components: microarchitecture timing simulators.
  • Internal implementation ISA.
• Object-oriented design and implementation in C++.

The x86vm Framework
[Figure: x86vm framework layers: software in the architected ISA (OS, drivers, library code & apps); x86 instructions; architected ISA (e.g. x86), BOCHS 2.2; RISC-ops; x86vmm (DBT, VMM runtime software, code cache(s)); macro-ops; implementation ISA (e.g. fusible ISA); hardware model (microarchitecture timing simulator).]

Two-Stage DBT System
• VMM runtime
  • Orchestrates VM system execution.
  • Runtime resource management.
  • Precise state recovery.
• DBT
  • BBT: basic block translator.
  • SBT: hot superblock translator and optimizer.
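
The two-stage policy can be illustrated in C++, the framework's implementation language. This is a minimal sketch under stated assumptions, not x86vm's code. The names `TwoStageDispatcher`, `Tier`, and `hotThreshold` are hypothetical. Execution counts are kept per x86 block entry address, and promotion happens per block rather than by forming multi-block superblocks as the real SBT does.

```cpp
#include <cassert>
#include <cstdint>
#include <unordered_map>

// Which translation currently handles a block (hypothetical names).
enum class Tier { None, BBT, SBT };

struct BlockInfo {
    Tier tier = Tier::None;
    std::uint64_t execCount = 0;  // executions so far
};

// Sketch of a two-stage policy: a block is translated by the fast BBT on its
// first visit and re-translated by the optimizing SBT once it has executed
// `hotThreshold` times. Superblock formation across blocks is not modeled.
class TwoStageDispatcher {
public:
    explicit TwoStageDispatcher(std::uint64_t hotThreshold)
        : hotThreshold_(hotThreshold) {
        assert(hotThreshold_ > 0);
    }

    // Called whenever control reaches the x86 block starting at `pc`;
    // returns the tier of the translation used for this execution.
    Tier execute(std::uint64_t pc) {
        BlockInfo& b = blocks_[pc];
        if (b.tier == Tier::None) {
            b.tier = Tier::BBT;  // cold: quick, unoptimized translation
            ++bbtTranslations_;
        } else if (b.tier == Tier::BBT && b.execCount >= hotThreshold_) {
            b.tier = Tier::SBT;  // hot: optimized re-translation
            ++sbtTranslations_;
        }
        ++b.execCount;
        return b.tier;
    }

    std::uint64_t bbtTranslations() const { return bbtTranslations_; }
    std::uint64_t sbtTranslations() const { return sbtTranslations_; }

private:
    std::uint64_t hotThreshold_;
    std::unordered_map<std::uint64_t, BlockInfo> blocks_;
    std::uint64_t bbtTranslations_ = 0;
    std::uint64_t sbtTranslations_ = 0;
};
```

With a threshold of 3, a block runs its BBT translation on its first three visits and is promoted to SBT on the fourth. The threshold value is an arbitrary illustrative choice.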

Evaluation Methodology
• Reference/baseline: best-performing x86 processors
  • Approximation to Intel Pentium M, AMD K7/K8.
• Experimental data collection: simulation
  • Different models need different instantiations of x86vm.
• Benchmarks
  • SPEC 2000 integer (SPEC2K): binaries generated by the Intel C/C++ compiler with -O3 base optimization; test data inputs, full runs.
  • Winstone2004 Business suite (WSB2004): 500-million x86 instruction traces for 10 common Windows applications.

x86 Binary Characterization
• Instruction count expansion (x86 to RISC-ops):
  • 40% or more for SPEC2K.
  • 50% or less for Windows workloads.
  • Instruction management and communication overhead.
  • Redundancy and inefficiency.
• Code footprint expansion:
  • Nearly doubles if cracked into 32-bit fixed-length RISC-ops.
  • 30–40% if cracked into 16/32-bit RISC-ops.
  • Affects fetch efficiency and memory hierarchy performance.

Overview of Baseline VM Design
• Goal: demonstrate the power of the VM paradigm via a specific x86 processor design.
• Architected ISA: x86.
• Co-designed VM software: SBT, BBT, and the VM runtime control system.
• Implementation ISA: Fusible ISA (FISA).
• Efficient microarchitecture enabled: macro-op execution.

Fusible ISA Instruction Formats
[Figure: Fusible ISA instruction formats. Core 32-bit formats combine a fusible bit F, a 10-bit (or 16-bit) opcode, 5-bit register fields (Rds, Rsrc), and 11-, 16-, or 21-bit immediate/displacement fields; add-on 16-bit formats for code density combine F, a 5-bit opcode, and 5-bit register/immediate fields or a 10-bit immediate/displacement.]
Fusible Instruction Set
• RISC-ops with unique features:
  • A fusible bit per instruction for fusing.
  • Dense encoding: a 16/32-bit ISA.
• Special features to support x86:
  • Condition codes.
  • Addressing modes.
  • Awareness of long immediate values.
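
To make the fusible bit concrete, here is a C++ sketch that packs one 32-bit format (F, 10-bit opcode, 5-bit Rds, 16-bit immediate) and one 16-bit format (F, 5-bit opcode, 5-bit Rsrc, 5-bit Rds) into machine words. The field widths add up to 32 and 16 bits respectively. The bit positions are assumptions: the slide gives field widths, but the field order used here, with F in the most significant bit, is hypothetical. So are the helper names `encode32`, `encode16`, `isFusible32`, and `isFusible16`.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical 32-bit layout: bit 31 = fusible bit F, bits 30..21 = 10-bit
// opcode, bits 20..16 = destination register Rds, bits 15..0 = 16-bit immediate.
std::uint32_t encode32(bool fusible, unsigned opcode, unsigned rds, std::uint16_t imm16) {
    assert(opcode < (1u << 10) && rds < (1u << 5));
    return (static_cast<std::uint32_t>(fusible) << 31) |
           (static_cast<std::uint32_t>(opcode) << 21) |
           (static_cast<std::uint32_t>(rds) << 16) |
           imm16;
}

// Hypothetical 16-bit layout: bit 15 = F, bits 14..10 = 5-bit opcode,
// bits 9..5 = Rsrc, bits 4..0 = Rds.
std::uint16_t encode16(bool fusible, unsigned opcode, unsigned rsrc, unsigned rds) {
    assert(opcode < 32 && rsrc < 32 && rds < 32);
    return static_cast<std::uint16_t>((unsigned(fusible) << 15) | (opcode << 10) |
                                      (rsrc << 5) | rds);
}

// Extract the fusible bit from either format (MSB under the assumed layouts).
bool isFusible32(std::uint32_t insn) { return ((insn >> 31) & 1u) != 0; }
bool isFusible16(std::uint16_t insn) { return ((insn >> 15) & 1u) != 0; }
```

The fusible bit marks an instruction as part of a fused pair. Putting it at a fixed position in every format means it can be tested without decoding the other fields.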

VMM: Virtual Machine Monitor
• Runtime controller
  • Orchestrates VM operation, translation, translated code, etc.
• Code cache(s) and management
  • Hold translations; translation lookup and chaining; eviction policy.
• BBT: initial emulation
  • Straightforward cracking, no optimization.
• SBT: hotspot optimizer
  • Fuses dependent instruction pairs into macro-ops.
• Precise state recovery routines
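
One way to picture code cache management is as a map from x86 entry addresses to translation offsets in a fixed-size buffer. The sketch below assumes the simplest eviction policy, flushing the whole cache when a new translation does not fit. This policy and the `CodeCache` interface are illustrative assumptions, not necessarily what x86vm uses. Chaining is not modeled, but a real flush would also have to discard any exits chained into the flushed translations.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <optional>
#include <unordered_map>

// Hypothetical code cache: x86 entry PC -> offset of its translation in a
// fixed-size buffer, evicted with a flush-everything-when-full policy.
class CodeCache {
public:
    explicit CodeCache(std::size_t capacityBytes) : capacity_(capacityBytes) {}

    // Returns the translation offset for `x86pc`, or nothing on a miss.
    std::optional<std::size_t> lookup(std::uint64_t x86pc) const {
        auto it = map_.find(x86pc);
        if (it == map_.end()) return std::nullopt;
        return it->second;
    }

    // Installs a translation of `bytes` bytes and returns its offset.
    std::size_t insert(std::uint64_t x86pc, std::size_t bytes) {
        assert(bytes <= capacity_);
        if (used_ + bytes > capacity_) {
            map_.clear();  // flush: every existing translation is discarded
            used_ = 0;
            ++flushes_;
        }
        std::size_t offset = used_;
        used_ += bytes;
        map_[x86pc] = offset;
        return offset;
    }

    std::size_t flushes() const { return flushes_; }

private:
    std::size_t capacity_;
    std::size_t used_ = 0;
    std::size_t flushes_ = 0;
    std::unordered_map<std::uint64_t, std::size_t> map_;
};
```

A full flush forces every previously translated block to pay its translation cost again. This is the re-translation effect noted earlier for multi-tasking workloads with a limited code cache.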

Microarchitecture: Macro-op Execution
• Enhanced OoO superscalar microarchitecture
  • Processes and executes fused macro-ops as single instructions throughout the entire pipeline.
  • Analogy: if all lanes car-pool on the highway, congestion drops while throughput stays high, AND the speed limit can be raised from 65 mph to 80 mph.
• Joint work with I. Kim & M. Lipasti.
[Figure: macro-op execution pipeline (Fetch, Align, Fuse, Decode, Rename, Dispatch, Wakeup, Select, RF, EXE, MEM, WB, Retire), with fuse bits, 3-1 ALUs, and cache ports.]

[Figure: Co-designed x86 pipeline front-end: 16-byte Fetch, Align/Fuse, Decode, Rename, and Dispatch stages feeding slots 0–2, with instructions 1–6 shown flowing through the stages.]

[Figure: Co-designed x86 pipeline backend: 2-cycle macro-op scheduler (Wakeup, Select) with issue ports 0–2 and lanes 0–2; Payload with dual entries per lane; RF with 2 read ports per entry; EXE with 3-1 ALUs (3-1ALU0–2) and ALU0–2; WB/Mem with memory ports 0 and 1.]

Outline
• Introduction
• The x86vm Infrastructure
• Efficient Dynamic Binary Translation
  • DBT Modeling & Translation Strategy
  • Efficient DBT for the x86 (SW)
  • Hardware Assists for DBT (HW)
• An Example Co-Designed x86 Processor
• Conclusions

Performance: Memory Hierarchy Perspective
• Disk startup
  • Initial program startup; module or task reloading after swap.
• Memory startup
  • Long-duration context switches, phase changes: x86 code is still in memory, but translated code is not.
• Code cache transient / startup
  • Short-duration context switches, phase changes.
• Steady state
  • Translated code is available and placed in the memory hierarchy.

[Figure: Memory Startup Curves, with the hot threshold marked]

[Figure: Hotspot Behavior: WSB2004 (100M). Static x86 instruction execution frequency: number of static x86 instructions (×1000, 0–100) vs. execution frequency buckets (1+, 10+, 100+, ..., 10,000,000+), with the hot threshold marked.]
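
The histogram groups static instructions by execution count on a decade scale. A short C++ sketch of that binning, and of counting the instructions at or above a hot threshold, follows. It assumes each bucket labelled "k+" covers [k, 10k), and the function names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Bin k counts static instructions executed in [10^k, 10^(k+1)) times,
// mirroring the "1+, 10+, 100+, ..." buckets; the last bin is open-ended.
std::vector<std::size_t> decadeHistogram(const std::vector<std::uint64_t>& execCounts) {
    std::vector<std::size_t> bins;
    for (std::uint64_t c : execCounts) {
        if (c == 0) continue;  // never-executed instructions are not plotted
        std::size_t k = 0;
        for (std::uint64_t v = c; v >= 10; v /= 10) ++k;  // k = floor(log10(c))
        if (bins.size() <= k) bins.resize(k + 1, 0);
        ++bins[k];
    }
    return bins;
}

// Number of static instructions whose execution count reaches the hot
// threshold, i.e. the instructions a hotspot optimizer would target.
std::size_t countHot(const std::vector<std::uint64_t>& execCounts, std::uint64_t hotThreshold) {
    assert(hotThreshold > 0);
    std::size_t n = 0;
    for (std::uint64_t c : execCounts) n += (c >= hotThreshold);
    return n;
}
```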

Outline
• Introduction
• The x86vm Infrastructure
• Efficient Dynamic Binary Translation
  • DBT Modeling & Translation Strategy
  • Efficient DBT Software for the x86
  • Hardware Assists for DBT (HW)
• A Co-Designed x86 Processor
• Conclusions

(Hotspot) Translation Procedure
1. Translation unit formation: form the hotspot superblock.
2. IR generation: crack x86 instructions into RISC-style micro-ops.
3. Machine state mapping: perform cluster analysis of embedded long immediate values and assign them to registers if necessary.
4. Dependency graph construction for the superblock.
5. Macro-op fusing algorithm: scan for dependent pairs to be fused (forward scan, backward pairing); two-pass fusing prioritizes ALU ops.
6. Register allocation: re-order fused dependent pairs together, extend live ranges for precise traps, and use a consistent state mapping at superblock exits.
7. Code generation into the code cache.
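
Step 4 can be sketched as a single forward pass that records, for every source operand, the most recent earlier micro-op that writes that register. The `MicroOp` layout is a hypothetical representation: register numbers are small integers and `-1` means "no register". Memory dependences are deliberately ignored.

```cpp
#include <cassert>
#include <unordered_map>
#include <vector>

// Hypothetical micro-op: destination register (-1 if none) and source registers.
struct MicroOp {
    int dst;
    std::vector<int> srcs;
};

// For each micro-op, returns the index of the op producing each source operand
// (the latest earlier writer of that register in the superblock), or -1 when
// the value is live-in to the superblock.
std::vector<std::vector<int>> buildProducers(const std::vector<MicroOp>& ops) {
    std::unordered_map<int, int> lastWriter;  // register -> index of latest writer
    std::vector<std::vector<int>> producers(ops.size());
    for (int i = 0; i < static_cast<int>(ops.size()); ++i) {
        for (int r : ops[i].srcs) {
            assert(r >= 0);
            auto it = lastWriter.find(r);
            producers[i].push_back(it == lastWriter.end() ? -1 : it->second);
        }
        if (ops[i].dst >= 0) lastWriter[ops[i].dst] = i;  // update after reading sources
    }
    return producers;
}
```

In the RISC-op sequence of the fusing example, the AND that reads Reax gets an edge to the ADD that first writes Reax. The store in between only reads Reax, so it does not change that edge.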

Macro-op Fusing Algorithm
• Objectives:
  • Maximize fused dependent pairs.
  • Simple and fast.
• Heuristics:
  • Pipelined issue logic: only single-cycle ALU ops can be a head; minimize non-fused single-cycle ALU ops.
  • Criticality: fuse instructions that are "close" in the original sequence; ALU-op criticality is easier to estimate.
  • Simplicity: 2 or fewer distinct register operands per fused pair.
• Two-pass fusing algorithm:
  • The 1st pass, a forward scan, prioritizes ALU ops: for each ALU-op tail candidate, look backward in the scan for its head.
  • The 2nd pass considers all kinds of RISC-ops as tail candidates.
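
The two-pass heuristic can be sketched in C++. This is a simplified illustration, not the thesis implementation. Several choices are assumptions: the `Op` layout, the 8-op closeness window, treating the operand limit as at most two distinct external source registers, and picking the nearest qualifying producer as head. Each op carries the producer index of each source, as built in step 4. A candidate pair is rejected if some op between head and tail depends (transitively) on the head and also feeds the tail, because fusing would then create a dependence cycle.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <set>
#include <utility>
#include <vector>

enum class Kind { Alu, Load, Store, Branch, Other };  // Alu = single-cycle ALU op

struct Op {
    Kind kind;
    std::vector<int> srcs;       // source register numbers
    std::vector<int> producers;  // producing op index per source, -1 if live-in
};

// True if an op strictly between head h and tail t depends on h and feeds t.
bool createsCycle(const std::vector<Op>& ops, int h, int t) {
    std::vector<bool> fromHead(t - h + 1, false);
    fromHead[0] = true;
    for (int i = h + 1; i < t; ++i)
        for (int p : ops[i].producers)
            if (p >= h && fromHead[p - h]) fromHead[i - h] = true;
    for (int p : ops[t].producers)
        if (p > h && p < t && fromHead[p - h]) return true;
    return false;
}

// Distinct external source registers of the pair; the value h forwards to t is internal.
std::size_t pairSources(const std::vector<Op>& ops, int h, int t) {
    assert(ops[t].producers.size() == ops[t].srcs.size());
    std::set<int> regs(ops[h].srcs.begin(), ops[h].srcs.end());
    for (std::size_t k = 0; k < ops[t].srcs.size(); ++k)
        if (ops[t].producers[k] != h) regs.insert(ops[t].srcs[k]);
    return regs.size();
}

// Pass 1 takes only ALU tails; pass 2 takes any tail. Returns (head, tail) pairs.
std::vector<std::pair<int, int>> fuse(const std::vector<Op>& ops, int window = 8) {
    std::vector<bool> fused(ops.size(), false);
    std::vector<std::pair<int, int>> pairs;
    for (int pass = 1; pass <= 2; ++pass) {
        for (int t = 0; t < static_cast<int>(ops.size()); ++t) {
            if (fused[t] || (pass == 1 && ops[t].kind != Kind::Alu)) continue;
            int best = -1;  // nearest qualifying head keeps the pair "close"
            for (int h : ops[t].producers) {
                if (h < 0 || fused[h] || ops[h].kind != Kind::Alu) continue;
                if (t - h > window || pairSources(ops, h, t) > 2) continue;
                if (createsCycle(ops, h, t)) continue;
                best = std::max(best, h);
            }
            if (best >= 0) {
                fused[best] = true;
                fused[t] = true;
                pairs.emplace_back(best, t);
            }
        }
    }
    std::sort(pairs.begin(), pairs.end());
    return pairs;
}
```

Run on the six RISC-ops of the fusing example (numbering eax = 0, ecx = 1, edx = 2, ebx = 3, ebp = 5, esi = 6, edi = 7, R11 = 11, R14 = 14), this sketch fuses ADD::AND in the first pass and ADD::LD in the second. These are the same two macro-ops the example shows.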

Dependence Cycle Detection
• All cases generalize to case (c) because of the anti-scan fusing heuristic.

Fusing Algorithm: Example

x86 asm:
  1. lea   eax, DS:[edi + 01]
  2. mov   [DS:080b8658], eax
  3. movzx ebx, SS:[ebp + ecx << 1]
  4. and   eax, 0000007f
  5. mov   edx, DS:[eax + esi << 0 + 0x7c]

RISC-ops:
  1. ADD    Reax, Redi, 1
  2. ST     Reax, mem[R14]
  3. LD.zx  Rebx, mem[Rebp + Recx << 1]
  4. AND    Reax, 0000007f
  5. ADD    R11, Reax, Resi
  6. LD     Redx, mem[R11 + 0x7c]

After fusing (macro-ops):
  1. ADD R12, Redi, 1 :: AND Reax, R12, 007f
  2. ST R12, mem[R14]
  3. LD.zx Rebx, mem[Rebp + Recx << 1]
  4. ADD R11, Reax, Resi :: LD Redx, mem[R11 + 0x7c]

[Figure: Instruction Fusing Profile (SPEC2K): percentage of dynamic instructions (0–100%) for 164.gzip, 175.vpr, 176.gcc, 181.mcf, 186.crafty, 197.parser, 252.eon, 253.perlbmk, 254.gap, 255.vortex, 256.bzip2, 300.twolf, and the average, broken down into Fused, LD, ST, BR, FP or NOPs, and ALU.]

[Figure: Instruction Fusing Profile (WSB2004)]

Macro-op Fusing Profile (SPEC2K / WSB2004)
• Of all fused macro-ops:
  • 52% / 43% are ALU-ALU pairs.
  • 30% / 35% are fused condition-test and conditional-branch pairs.
  • 18% / 22% are ALU-memory op pairs.
• Of all fused macro-ops:
  • 70+% are inter-x86-instruction fusions.
  • 46% access two distinct source registers.
  • 15% (i.e. 6% of all instruction entities) write two distinct destination registers.
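
Read literally, the parenthetical also shows how common macro-ops are. Let $f_{\text{macro}}$ (a symbol introduced here) be the fraction of instruction entities that are fused macro-ops. If 15% of them equal 6% of all entities, then:

```latex
0.15\, f_{\text{macro}} = 0.06 \;\Longrightarrow\; f_{\text{macro}} = \frac{0.06}{0.15} = 0.40
```

That is, roughly 40% of instruction entities would be fused macro-ops.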

DBT Software Runtime Overhead Profile
• Software BBT profile
  • BBT overhead ΔBBT: about 105 FISA instructions (85 cycles) per translated x86 instruction, mostly for decoding and cracking.
• Software SBT profile
  • SBT overhead ΔSBT: about 1000+ instructions per translated hotspot instruction.
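
A simple break-even estimate shows why only hot code justifies the SBT's cost. This is an illustrative sketch, not the thesis's overhead model. Let $n$ be the number of executions of an instruction after optimization and $s$ the cycles saved per execution (both hypothetical symbols), with $\Delta_{SBT}$ expressed in cycles:

```latex
n \, s \;\ge\; \Delta_{SBT} \quad\Longleftrightarrow\quad n \;\ge\; \frac{\Delta_{SBT}}{s}
```

For example, with a hypothetical saving of $s = 0.1$ cycle per execution and $\Delta_{SBT}$ taken as about 1000 cycles, an instruction would need roughly $10^4$ executions before optimizing it pays off.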

Outline
• Introduction
• The x86vm Infrastructure
• Efficient Dynamic Binary Translation
  • DBT Modeling & Translation Strategy
  • Efficient DBT for the x86 (SW)
  • Hardware Assists for DBT
• A Co-Designed x86 Processor
• Conclusions

Principles for Hardware Assist Design
• Goals:
  • Reduce VMM runtime software overhead significantly.
  • Maintain HW complexity-effectiveness and power efficiency.
  • HW and SW simplify each other: synergetic co-design.
• Observations (analytic modeling & simulation):
  • High-performance startup (non-hotspot code) is critical.
  • Hotspots are usually a small fraction of the overall footprint.
• Approach: BBT accelerators
  • Front-end: dual-mode decoders.
  • Backend: HW assist functional unit(s).

Dual-Mode CISC (x86) Decoders
[Figure: x86 instruction → x86 decoder → μ-ops → μ-op decoder → opcode, operand designators, other pipeline control signals; fusible RISC-ops enter directly at the μ-op decoder.]
• Basic idea: a 2-stage decoder for a CISC ISA
  • First published in Motorola 68K processor papers.
  • Breaks a monolithic complex decoder into two separate, simpler decoder stages.
• Dual-mode CISC decoders
  • CISC (x86) instructions pass through both stages.
  • Internal RISC-ops pass through only the second stage.
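
The data flow of a dual-mode decoder can be sketched in C++. Everything here is hypothetical and heavily simplified. The two x86 forms, the micro-op set, the load/add/store cracking rule, and the two-field `Control` record stand in for real opcodes and pipeline control signals. What the sketch does show is the mode split: x86 instructions go through stage 1 (cracking) and then stage 2, while internal RISC-ops enter stage 2 directly.

```cpp
#include <cassert>
#include <variant>
#include <vector>

// Hypothetical, heavily simplified opcode sets for illustration only.
enum class X86Form { AddRegReg, AddMemReg };  // architected (CISC) forms
enum class MicroOpcode { Load, Add, Store };  // internal RISC-style ops

struct Control {  // stand-in for the real pipeline control signals
    bool usesAlu;
    bool usesMemPort;
};

// Stage 1 (x86 mode only): crack one CISC instruction into micro-ops.
std::vector<MicroOpcode> stage1Crack(X86Form f) {
    switch (f) {
        case X86Form::AddRegReg:
            return {MicroOpcode::Add};
        case X86Form::AddMemReg:  // read-modify-write: load, add, store
            return {MicroOpcode::Load, MicroOpcode::Add, MicroOpcode::Store};
    }
    return {};
}

// Stage 2 (both modes): decode one micro-op into control signals.
Control stage2Decode(MicroOpcode op) {
    switch (op) {
        case MicroOpcode::Add:
            return {true, false};
        case MicroOpcode::Load:
        case MicroOpcode::Store:
            return {false, true};
    }
    return {false, false};
}

// A fetched instruction is either architected x86 or an internal RISC-op.
using FetchedInsn = std::variant<X86Form, MicroOpcode>;

// Dual-mode decode: x86 passes both stages; RISC-ops bypass stage 1.
std::vector<Control> decode(const FetchedInsn& in) {
    std::vector<Control> out;
    if (const X86Form* x86 = std::get_if<X86Form>(&in)) {
        for (MicroOpcode u : stage1Crack(*x86)) out.push_back(stage2Decode(u));
    } else {
        out.push_back(stage2Decode(std::get<MicroOpcode>(in)));
    }
    assert(!out.empty());
    return out;
}
```

The same `stage2Decode` serves both modes. That sharing is what the slide describes: internal RISC-ops use only the second decoder stage.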

Dual-Mode CISC (x86) Decoders
• Advantages:
  • High-performance startup, similar to a conventional superscalar design.
  • No code cache needed for non-hotspot code.
  • Smooth transition from conventional superscalar design.
• Disadvantages:
  • Complexity: an n-wide machine needs n such decoders (but so does a conventional design).
  • Less power-efficient than other VM schemes.

[Figure: Hardware Assists as Functional Units: front-end stages (Fetch, Align/Fuse, Decode, Rename, Dispatch); FP/MM issue queue and 128b × 32 FP/MM register file; GP issue queue; F/M-MUL, F/M-ADD, DIV, LD/ST, and other long-latency functional units, plus VMM assist functional unit(s).]

Hardware Assists as Functional Units
• Advantages:
  • High-performance startup.
  • Power-efficient.
  • Programmability and flexibility.
  • Simplicity: only one simplified decoder is needed.
• Disadvantages:
  • Runtime overhead: reduces ΔBBT from 85 to about 20 cycles, but still involves some translation overhead.
  • Memory space overhead: some extra code cache space for cold code.
  • Must use the VM paradigm, which is riskier than the dual-mode decoder.
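
The drop in ΔBBT can be put in perspective with a back-of-the-envelope estimate. Assume a hypothetical cold footprint of $M = 100{,}000$ static x86 instructions, each translated once by the BBT:

```latex
M\,\Delta_{BBT} = 10^5 \times 85 = 8.5 \times 10^6 \ \text{cycles}
\qquad\text{vs.}\qquad
10^5 \times 20 = 2.0 \times 10^6 \ \text{cycles}
```

That is about a 4.25× reduction in BBT overhead. The footprint size is an illustrative assumption, not a measured value.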

Machine Startup Models
• Ref: Superscalar
  • Conventional processor design as the baseline.
• VM.soft
  • Software BBT and hotspot optimization via SBT.
  • State-of-the-art VM design.
• VM.be
  • BBT accelerated by backend functional unit(s).
• VM.fe
  • Dual-mode decoder at the pipeline front-end.

Startup Evaluation: Hardware Assists
[Figure: cumulative x86 IPC (normalized, 0 to 1.1) vs. time in cycles (log scale, 1 to 100,000,000), with the finish point marked; curves: Ref: Superscalar, VM.soft, VM.be, VM.fe, VM.steady-state.]

Related Work on HW Assists for DBT
• HW assists for profiling
  • Profile Buffer [Conte’96]; BBB & Hotspot Detector [Merten’99]; Programmable HW Path Profiler [CGO’05]; etc.
  • Profiling Co-Processor [Zilles’01].
  • Many others.
• HW assists for general VM technology
  • System VM assists: Intel VT, AMD Pacifica.
  • Transmeta Efficeon processor: a new execute instruction to accelerate the interpreter.
• HW assists for translation
  • Trace Cache Fill Unit [Friendly’98].
  • Customized buffers and instructions: rePLay, PARROT.
  • Instruction Path Co-Processor [Zhou’00], etc.

Outline
• Introduction
• The x86vm Framework
• Efficient Dynamic Binary Translation
  • DBT Modeling & Translation Strategy
  • Efficient DBT for the x86 (SW)
  • Hardware Assists for DBT (HW)
• A Co-Designed x86 Processor
• Conclusions

Co-Designed x86 Processor Architecture
[Figure: VM translation/optimization software; memory hierarchy; I-$ holding x86 code and code $ holding macro-ops; horizontal and vertical decode paths through the x86 decoder and the micro/macro-op decoder; rename/dispatch, issue buffer, pipeline EXE backend.]
• Co-designed virtual machine paradigm
  • Startup: simple hardware decode/crack for fast translation.
  • Steady state: dynamic software translation/optimization for hotspots.

Processor Pipeline
[Figure: x86 pipeline (Fetch, Align, Decode1, Decode2, Decode3, Rename, Dispatch, Wakeup, Select, Payload, RF, EXE, WB, Retire) vs. macro-op pipeline (Fetch, Align/Fuse, Decode, Rename, Dispatch, Wakeup, Select, Payload, RF, EXE, WB, Retire) with pipelined 2-cycle issue logic; annotations: reduced instruction traffic throughout, reduced forwarding, pipelined scheduler.]
• Macro-op pipeline for efficient hotspot execution
  • Executes macro-ops.
  • Higher IPC and higher clock speed potential.
  • Shorter pipeline front-end.

Performance Simulation Configuration

Performance Contributors
• Many factors contribute to the IPC improvement:
  • Code straightening.
  • Macro-op fusing and execution.
  • Shortened pipeline front-end (reduced branch penalty).
  • Collapsed 3-1 ALUs (branches and addresses resolved sooner).
• Besides the baseline and macro-op models, we model three intermediate configurations:
  • M0: baseline + code cache.
  • M1: M0 + macro-op fusing.
  • M2: M1 + shorter pipeline front-end (macro-op mode).
  • Macro-op: M2 + collapsed 3-1 ALUs.