Morphological Parsing

Morphological Parsing (CS 4705)

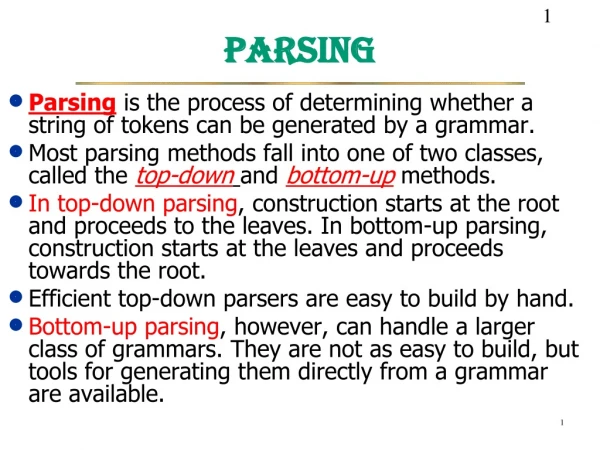

Parsing
• Taking a surface input and analyzing its components and underlying structure
• Morphological parsing: taking a word or string of words as input and identifying the stems and affixes (and possibly interpreting these)
• E.g.:
  • goose → goose +N +SG or goose +V
  • geese → goose +N +PL
  • gooses → goose +V +3SG
• Bracketing: indecipherable → [in [[de [cipher]] able]]

Why ‘parse’ words?
• To find stems
  • Simple key to word similarity
  • Yellow, yellowish, yellows, yellowed, yellowing…
• To find affixes and the information they convey
  • ‘ed’ signals a verb
  • ‘ish’ an adjective
  • ‘s’?
• Morphological parsing provides information about a word’s semantics and the syntactic role it plays in a sentence

Some Practical Applications
• For spell-checking
  • Is muncheble a legal word?
• To identify a word’s part of speech (POS)
  • For sentence parsing, for machine translation, …
• To identify a word’s stem
  • For information retrieval
• Why not just list all word forms in a lexicon?

What do we need to build a morphological parser?
• Lexicon: list of stems and affixes (with their corresponding parts of speech)
• Morphotactics of the language: model of how and which morphemes can be affixed to a stem
• Orthographic rules: spelling modifications that may occur when affixation occurs
  • in → il in the context of l (in- + legal → illegal)
• Most morphological phenomena can be described with regular expressions, so finite-state techniques are often used to represent morphological processes

Using FSAs to Represent English Plural Nouns
• English nominal inflection
[Figure: FSA with states q0, q1, q2 and arcs labeled reg-n, plural (-s), irreg-pl-n, irreg-sg-n]
• Inputs: cats, geese, goose
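The FSA above can be sketched as a small recognizer. This is a minimal sketch, not the lecture's implementation: the arc layout (q0 to q1 on reg-n, q1 to q2 on -s, q0 to q2 on either irregular class, with q1 and q2 final) is assumed from the arc labels on the slide, and the three word lists are hypothetical toy sub-lexicons.

```python
# Hypothetical toy sub-lexicons; the slide names the arc classes, not their members.
LEXICON = {
    "reg-n": {"cat", "dog", "fox"},
    "irreg-sg-n": {"goose", "mouse"},
    "irreg-pl-n": {"geese", "mice"},
}

# Assumed topology: state -> list of (arc label, next state).
TRANSITIONS = {
    "q0": [("reg-n", "q1"), ("irreg-sg-n", "q2"), ("irreg-pl-n", "q2")],
    "q1": [("-s", "q2")],
}
FINAL = {"q1", "q2"}


def remainders(label, text):
    """Return every possible rest of `text` after consuming one arc."""
    if label == "-s":
        return [text[1:]] if text.startswith("s") else []
    return [text[len(w):] for w in LEXICON[label] if text.startswith(w)]


def accepts_noun(word, state="q0"):
    """True if `word` drives the FSA from `state` to a final state."""
    if word == "" and state in FINAL:
        return True
    for label, nxt in TRANSITIONS.get(state, []):
        for rest in remainders(label, word):
            if accepts_noun(rest, nxt):
                return True
    return False


for w in ["cats", "geese", "goose", "gooses"]:
    print(w, accepts_noun(w))
```

The recognizer accepts cats, geese, and goose and rejects gooses as a noun. It also accepts the misspelling foxs, because spelling changes are not part of the morphotactics; they are handled separately by orthographic rules.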

Derivational morphology: adjective fragment
[Figure: FSA with states q0 to q5 and arcs labeled un-, adj-root1 (twice), adj-root2, ‘-er, -ly, -est’, and ‘-er, -est’]
• Adj-root1: clear, happi, real (clearly)
• Adj-root2: big, red (*bigly)

FSAs can also represent the Lexicon
• Expand each non-terminal arc in the previous FSA into a sub-lexicon FSA (e.g. adj_root2 = {big, red}) and then expand each of these stems into its letters (e.g. red → r e d) to get a recognizer for adjectives
[Figure: letter-level FSA with states q0 to q6 and arcs labeled b, i, g, r, e, d, and ε]
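Expanding stems into letters amounts to building a letter-level automaton, which can be sketched as a trie. This is a minimal sketch under stated assumptions: the suffix set and the "#" end-of-word marker are hypothetical choices for illustration, and the state layout does not try to match the figure.

```python
def build_trie(words):
    """Build a letter-level FSA as nested dicts; "#" marks a final state."""
    trie = {}
    for word in words:
        node = trie
        for ch in word:
            node = node.setdefault(ch, {})
        node["#"] = True  # hypothetical end-of-word marker
    return trie


def trie_accepts(trie, word):
    """Follow one arc per letter and accept only in a final state."""
    node = trie
    for ch in word:
        if ch not in node:
            return False
        node = node[ch]
    return "#" in node


ADJ_ROOT2 = ["big", "red"]        # from the slide
SUFFIXES = ["", "er", "est"]      # assumed suffixes for adj-root2
fsa = build_trie(stem + suffix for stem in ADJ_ROOT2 for suffix in SUFFIXES)

print(trie_accepts(fsa, "red"))    # plain stem
print(trie_accepts(fsa, "bigly"))  # -ly is not allowed after adj-root2
```

Because the sketch concatenates morphemes without spelling rules, it accepts reder rather than redder.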

But…
• Covering the whole lexicon this way will require very large FSAs with consequent search and maintenance problems
  • Adding new items to the lexicon means recomputing the whole FSA
  • Non-determinism
• Some stems require modification when they acquire affixes
• FSAs tell us whether a word is in the language or not, but usually we want to know more:
  • What is the stem?
  • What are the affixes and what sort are they?
  • We used this information to recognize the word: why can’t we store it?

Parsing with Finite State Transducers
• cats → cat +N +PL (a plural NP)
• Kimmo Koskenniemi’s two-level morphology
  • Idea: a word is a relationship between the lexical level (its morphemes) and the surface level (its orthography)
  • Morphological parsing: find the mapping (transduction) between the lexical and surface levels
[Figure: the lexical and surface levels]

Finite State Transducers can represent this mapping
• FSTs map between one set of symbols and another using an FSA whose alphabet is composed of pairs of symbols from input and output alphabets
• In general, FSTs can be used for
  • Translators (Hello:Ciao)
  • Parser/generators (Hello:How may I help you?)
  • As well as Kimmo-style morphological parsing

FST is a 5-tuple consisting of
• Q: set of states {q0, q1, q2, q3, q4}
• Σ: an alphabet of complex symbols, each an i/o pair s.t. i ∈ I (an input alphabet) and o ∈ O (an output alphabet), with Σ ⊆ I × O
• q0: a start state
• F: a set of final states in Q {q4}
• δ(q, i:o): a transition function mapping Q × Σ to Q
[Figure: FST mapping a Quizzical Cow to an Emphatic Sheep, with states q0 to q4 and arcs labeled m:b, o:a, o:a, o:a, ?:!]
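The 5-tuple can be written down directly as a data structure. This is a minimal sketch: the class and field names are hypothetical, and the arc layout for the cow-to-sheep machine (a chain q0 to q4 with an o:a self-loop on q3) is assumed from the arc labels in the figure. It also assumes the machine is deterministic on its input side.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FST:
    states: frozenset    # Q
    alphabet: frozenset  # Sigma: a set of (input, output) pairs
    start: str           # q0
    finals: frozenset    # F
    delta: dict          # (state, (input, output)) -> next state

    def transduce(self, text):
        """Return the output string, or None if the input is rejected."""
        state, out = self.start, []
        for ch in text:
            for pair in self.alphabet:
                if pair[0] == ch and (state, pair) in self.delta:
                    out.append(pair[1])
                    state = self.delta[(state, pair)]
                    break
            else:
                return None  # no arc for this input symbol
        return "".join(out) if state in self.finals else None


# Assumed arc layout for the Quizzical Cow / Emphatic Sheep machine.
DELTA = {
    ("q0", ("m", "b")): "q1",
    ("q1", ("o", "a")): "q2",
    ("q2", ("o", "a")): "q3",
    ("q3", ("o", "a")): "q3",
    ("q3", ("?", "!")): "q4",
}
COW_TO_SHEEP = FST(
    states=frozenset({"q0", "q1", "q2", "q3", "q4"}),
    alphabet=frozenset(pair for (_, pair) in DELTA),
    start="q0",
    finals=frozenset({"q4"}),
    delta=DELTA,
)

print(COW_TO_SHEEP.transduce("moooo?"))
```

Under this layout the machine needs at least two o's before the question mark, so mo? is rejected.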

FST for a 2-level Lexicon
• E.g.
[Figure: FST with states q0 to q7; one path has arcs c:c, a:a, t:t, and another has arcs g, o:e, o:e, s, e]

FST for English Nominal Inflection
• Lexical: c a t +N +PL
• Surface: c a t s
[Figure: FST with states q0 to q7 and arcs labeled reg-n, irreg-n-sg, irreg-n-pl, +N:ε (three times), +SG:# (twice), +PL:^s#, and +PL:#]
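Parsing with this transducer means searching for paths whose output side spells the surface word while collecting the lexical side. The sketch below makes several assumptions: the state numbering is read off the figure labels, the stems are a hypothetical toy lexicon, each stem is treated as a single arc rather than being expanded into letters, and the ^ and # boundary symbols are dropped so that arcs write surface text directly.

```python
# Each arc: (source, lexical symbol, surface output, destination).
ARCS = [
    ("q0", "cat", "cat", "q1"),      # reg-n (toy entry)
    ("q0", "goose", "goose", "q2"),  # irreg-n-sg
    ("q0", "goose", "geese", "q3"),  # irreg-n-pl: lexical goose, surface geese
    ("q1", "+N", "", "q4"),
    ("q2", "+N", "", "q5"),
    ("q3", "+N", "", "q6"),
    ("q4", "+SG", "", "q7"),
    ("q4", "+PL", "s", "q7"),        # +PL:^s# with boundaries dropped
    ("q5", "+SG", "", "q7"),
    ("q6", "+PL", "", "q7"),
]
FINAL = {"q7"}


def parse(surface, state="q0", lexical=()):
    """Return every lexical analysis whose surface side spells `surface`."""
    results = []
    if state in FINAL and surface == "":
        results.append(" ".join(lexical))
    for src, lex, out, dst in ARCS:
        if src == state and surface.startswith(out):
            results += parse(surface[len(out):], dst, lexical + (lex,))
    return results


for word in ["cats", "geese", "goose", "gooses"]:
    print(word, parse(word))
```

Unlike the FSA recognizer, the result is the analysis itself (stem plus features), and an empty list signals that the word is not in the language.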

Useful Operations on Transducers
• Cascade: running 2+ FSTs in sequence
• Intersection: represent the common transitions in FST1 and FST2 (ASR: finding pronunciations)
• Composition: apply FST2 transition function to result of FST1 transition function
• Inversion: exchanging the input and output alphabets (recognize and generate with same FST)
• cf. AT&T FSM Toolkit and papers by Mohri, Pereira, and Riley
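The effect of composition and inversion is easiest to see on the relations that transducers denote. The sketch below is an illustration only: it models each transducer as a finite set of (input, output) string pairs rather than as an automaton, so it shows what the operations compute, not how a toolkit constructs the resulting machine. The example pairs, including the fox entries, are hypothetical.

```python
def invert(relation):
    """Swap input and output, turning a generator into a parser."""
    return {(out, inp) for inp, out in relation}


def compose(first, second):
    """Feed each output of `first` into `second`."""
    return {(inp, out2)
            for inp, mid in first
            for mid2, out2 in second
            if mid == mid2}


# Hypothetical two-stage cascade: lexical -> intermediate -> surface.
LEX_TO_MID = {("cat +N +PL", "cat^s#"), ("fox +N +PL", "fox^s#")}
MID_TO_SURFACE = {("cat^s#", "cats"), ("fox^s#", "foxes")}

GENERATE = compose(LEX_TO_MID, MID_TO_SURFACE)  # lexical -> surface
PARSE = invert(GENERATE)                        # surface -> lexical

print(sorted(GENERATE))
print(sorted(PARSE))
```

Composing the two stages replaces the cascade with a single mapping, and inverting that mapping gives the parsing direction with no extra rules.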

Orthographic Rules and FSTs
• Define additional FSTs to implement rules such as consonant doubling (beg → begging), ‘e’ deletion (make → making), ‘e’ insertion (watch → watches), etc.
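One such rule, ‘e’ insertion, can be sketched as a rewrite over an intermediate form that marks the morpheme boundary with ^ and the word boundary with #. This is a minimal sketch that uses a regular expression in place of a hand-built FST. The triggering contexts (stems ending in s, x, z, ch, or sh) are an assumption about the rule, and the function names are hypothetical.

```python
import re


def e_insertion(intermediate):
    """Insert e between a sibilant-final stem and the suffix s."""
    return re.sub(r"(s|x|z|ch|sh)\^(?=s#)", r"\1^e", intermediate)


def to_surface(intermediate):
    """Apply the spelling rule, then erase the boundary symbols."""
    return e_insertion(intermediate).replace("^", "").replace("#", "")


for form in ["watch^s#", "fox^s#", "cat^s#"]:
    print(form, "->", to_surface(form))
```

The lookahead `(?=s#)` restricts the rule to a suffix s at the end of the word, so stems such as cat pass through unchanged.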

Porter Stemmer (1980)
• Used for tasks in which you only care about the stem
  • IR, modeling given/new distinction, topic detection, document similarity
• Lexicon-free morphological analysis
• Cascades rewrite rules (e.g. misunderstanding → misunderstand → understand → …)
• Easily implemented as an FST with rules, e.g.
  • ATIONAL → ATE
  • ING → ε
• Not perfect…
  • Doing → doe
  • Policy → police
• Does stemming help?
  • IR, little
  • Topic detection, more
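The idea of lexicon-free suffix rewriting can be sketched with the two rules shown on the slide. This is a toy illustration, not the Porter algorithm: the real stemmer has many more rules and guards each one with conditions on the remaining stem. The minimum-length check below is a hypothetical stand-in for those conditions.

```python
# The two rules from the slide, applied in order.
RULES = [("ational", "ate"), ("ing", "")]

MIN_STEM = 3  # hypothetical guard, standing in for Porter's stem conditions


def toy_stem(word):
    """Apply each suffix rewrite rule in turn, with no lexicon lookup."""
    for suffix, replacement in RULES:
        if word.endswith(suffix) and len(word) - len(suffix) >= MIN_STEM:
            word = word[: len(word) - len(suffix)] + replacement
    return word


for w in ["relational", "walking", "sing"]:
    print(w, "->", toy_stem(w))
```

Because no lexicon is consulted, nothing checks that the result is a real word, which is why stemmers of this kind make errors like the ones listed above.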

Summing Up
• FSTs provide a useful tool for implementing a standard model of morphological analysis, Kimmo’s two-level morphology
• But for many tasks (e.g. IR) much simpler approaches are still widely used, e.g. the rule-based Porter Stemmer
• Next time:
  • Read Ch 5:1-8
  • HW1 assigned (read the assignment)