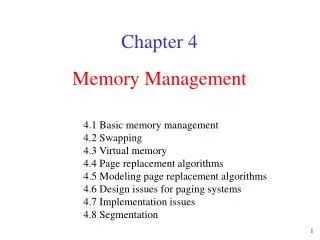

Memory Management

Memory Management. Memory Management is the managing of the pool of available memory in a computer system, allocating space to application programs and making sure that they do not interfere with each other The problem of memory management is composed of three sub-problems:. Allocation.

Memory Management

E N D

Presentation Transcript

Memory Management is the managing of the pool of available memory in a computer system, allocating space to application programs and making sure that they do not interfere with each other The problem of memory management is composed of three sub-problems:

Allocation • This is the problem of assigning suitable storage space to processes as required. • An efficient memory allocation mechanism may be expected to respond quickly to: • requests for assorted amounts of memory • to assign memory only when needed • To reclaim memory promptly when its use is complete

Relocation • This is the problem of matching programs and data to the memory locations that have been allocated to them. • A program on disk is a binary executable file, it must be loaded into memory in a process to be executed. • In general, a user process can reside anywhere in free memory. Possibly even being moved once execution has begun. Modifications must be made to addresses in machine instructions and data to reflect the addresses actually used. • Addresses represented in source programs are symbolic, such as the name of a variable. Sum • The compiler will “bind” these symbolic addresses to relocatable addresses, such as 100 bytes from the beginning of the module. • The linkage editor/loader will bind these relocatable addresses to absolute address, such as byte 74321

Protection • This is the problem of restricting a process’s access to memory that has actually been allocated to it.

When we think of the structure of an OS, an OS is generally a collection of routines • Some that are essential such as those that control physical I/O are resident (or always loaded into memory) • Others are transient, which means they are stored on disk, and read into memory only when needed. These are usually utility routines that are not essential to the control and operation of the system.

Normally the OS occupies low memory beginning with address 0, although the resident portion of the OS can be in high or low memory, the location of the interrupt vector is the primary factor in governing the kernel’s location. Control information comes first, followed by resident routines and the remaining memory forms the “transient area” where application programs and transient routines are loaded Transient Area Resident Operating System System Control Information 0

Memory allocation refers to the action of selecting and reserving particular parts of memory for use by particular processes. • Early operating systems allocated space in physical memory with little or no hardware assistance, or protection. • The need for memory management grew as OS capabilities changed. • Usually memory is allocated to a process in discrete blocks or regions that must be contiguous (they must occupy consecutive addresses in the logical or virtual address space of the process.) • These logical addresses are converted to physical addresses when data and instructions are fetched during the instruction execution cycle.

In early systems… • The evolution of memory management techniques has been shaped largely by the resource’s early characteristics. • Memory was expensive and slow • Only very limited amounts were available • Multiprogramming evolved partly because of the need to share such an expensive resource among several concurrent processes, increasing the amount of work performed. • The memory allocation strategies have evolved as OS’s have become more complex.

No Allocation • Simplest strategy is none at all. • Possible only on systems that run a single program at a time • Each program has total access to ALL portions of memory and can manage and use it in any manner. • Simple approach with costs nothing to implement (it entails no OS services) • The program can use all memory previously occupied by the OS. It’s the program’s responsibility to reload the OS when it completes. A bare machine with no memory allocation and no OS in memory

Single-user Operating Systems • The simplest type of OS serves only a single user and runs only one process at a time. • Memory needs only to be shared between the single process and the OS itself • A program may use whatever memory it needs as long as the required memory exists and is not needed by the OS.

Memory in such systems is typically viewed as being divided into two regions: one for the OS and one for the application program • The placement of the resident portion of the OS in memory can be in either high or low memory addresses: • Some systems use low memory for the resident portion of the OS because this region contains locations with special built-in properties such as interrupt vectors. • Sometimes, even though it controls special hardware locations in low memory, the OS itself may be located in high memory.

Protecting the Nucleus • RAM is easily changed. Providing allocation mechanisms is only meaningful when it is accompanied by protection. • With multiple programs in memory, it is easy for one program to destroy the contents of memory belonging to another program. • Some mechanism should exist to prevent a process’s access to unallocated locations. • Generally the OS keeps track of the space assigned to each program. In terms of the address of the first byte of memory assigned to the process, and the range (or # of bytes assigned to the process) • If a program attempts to modify the contents of memory locations that do not belong to it, the OS’s memory protection routine intervenes and usually terminates the program. • For single user OS’s, the lack of protection is typically viewed as not serious since it is a single user machine, and disruption of OS services would affect a single user.

Allocation for multiprogramming • Memory Management in a multiprogramming environment is more difficult than with a single user system. • Multiprogramming operating systems require that memory be shared among two or more processes. • A portion of memory must be chosen for allocation to each process, when it is created • Memory allocated to a process should be protected from access by another process • As part of the process we will need to keep track of the starting address of the memory assigned to the process, and the range of addresses it can access. The CPU must load this information when a process is selected for execution. Application 2 Application 1 Resident Monitor

When allocating memory, an OS can take either a static or dynamic approach

With static allocation a process is assigned all the memory it is expected to require when it is loaded and started. • It can not be loaded until sufficient memory is available • The process keeps all its memory throughout its lifetime, and cannot obtain more • Simple to manage strategy, but does not allow effective sharing of memory in a multi-programmed system, may result in under or over allocation.

Dynamic allocation offers more efficient memory use. • After an initial allocation, each process is permitted to request addition memory as needed during execution and is expected to release memory it no longer needs. • In this strategy, the OS is presented with an unpredictable sequence of allocate and free requests to assign and release individual blocks of memory • Memory is viewed as consisting of a variable number of blocks which may vary in size • Each request must be filled by a single contiguous region made available in the logical address space of the requesting process.

The next issue is HOW to divide the available memory into portions that can be allocated to processes! There are 2 general strategies.

Static memory area definition: • This is the simplest approach to managing memory. • This divides the available memory into a series of “fixed-length” partitions, each of which will hold one program. (USED BY MFT) • The # and size of each partition is determined when the OS is loaded, and once established does not change. # of processes is fixed!!! • This means that in part the some of the memory allocation decisions are made before the actual amount of space needed by a program is known. • Blocks defined and allocated via this strategy are called fixed partitions (partitions).

Dynamic memory area definition: • The transient area is viewed as an unstructured pool of free space. • When a program is loaded, the OS allocates a region of memory just sufficient to hold the program. • The number of concurrent programs depends of the size and number of requests.

Static allocation can be used with either static or dynamically memory area definition

Assuming a multiprogramming operating system uses static allocation and either static or dynamic memory area definition, several scenarios exist for loading programs into memory: • Several processes can share memory, each being allocated the same amount of space using statically-defined fixed partitions. There is a fixed maximum number of processes. • Several processes can share memory, each being allocated different amounts of space using statically-defined fixed partitions. Again, there is a fixed maximum number of processes, based on the number of partitions. • Several processes can share memory, each being allocated different amounts of space using a variable number of variable-size regions. Here the number of processes may have no fixed maximum.

Using Statically defined partitions • A fixed memory partition strategy divides all available space into a fixed number of partitions. • The partitions may or may not be all the same size (# of bytes) • Each has a fixed size established when the OS is first loaded and initialized. • Common strategy in real-time systems and early batch OS’s such as OS/360 and is no longer in use.

In this example memory is partitioned into a fixed partition system. • Four partitions allow up to four processes to execute concurrently. • Simplifies memory management, because we only need to identify if a partition is free or allocated. Usually accomplished through the use of control blocks which defines the size of an individual partition, and whether or not it is free. • Memory allocated to a process which is not needed by that process is wasted • If no waiting program can fit into a free partitions the partition remains empty, and processes must wait.

Disadvantages • # of process that can run is fixed • Unused memory at the end of each partition is wasted if the process does not need the entire partition into which it is loaded.

Dynamically Defined Regions • Allows the dynamic definition of a variable number of variable size memory regions. • The size of each region is determined when the region is allocated by the OS • The number of concurrent processes becomes variable, depending on the amount of memory available when the allocation request occurs.

Control blocks are dynamically created to keep track of which regions have been allocated to processes and which areas of memory are free. • This method has been used by several OS’s including OS/360 (MVT), some versions of Windows and Macintosh OS.

This method is a cross between static and dynamic methods • From the standpoint of the process: • allocation is static. (only one region will be allocated) • The space assigned to each process is variable and determined when the process starts, but doesn’t change. • From the OS’s standpoint: • Allocation is dynamic • It deals with many processes of different sizes that must start and stop at unpredictable times. • Memory can become fragmented as processes are allocated regions, and then free them when the processes terminate.

A disadvantage is that this method STILL does not solve the wasted space problem. • Fragmentation occurs when memory becomes divided into many small separate sections that are not large enough to meet the needs of waiting processes. • As processes terminate, regions become available but another process can not use that memory unless it will fit inside the existing region. • We need to implement methods to reorganize processes to reclaim free partitions such as swapping or compaction

Dynamic allocation • Processes are allocated memory as requested. • Principal OS support operations are allocate & free • Operations are invoked via a system call at the program interface. • Heap management • Memory is viewed as a collection of blocks of random sizes that will be allocated and freed in unpredictable order. • Essentially, a collection of blocks/holes are scattered throughout memory. These blocks are of various sizes, and we have processes waiting in the input queue. • Memory is allocated to processes until finally the memory requested by the next process is not available . • The OS can wait until sufficient resources are available, or skip down the input queue, handling requests that can be fulfilled.

Allocate • An allocate call requests a specific amount of memory from the OS. • There is no requirement that the memory be in a specific location within memory • But it must be contiguous • The OS must allocate the memory and make it available in the address space of the process. • If no suitable region is available the process must wait

Free • The free operation is used by a process to de-allocate regions it no longer needs. • Each de-allocated block must be freed in its entirety. • The OS needs to reclaim freed blocks and make them available to later allocation requests • Any remaining un-freed space belonging to a process is freed when the process terminates.

Memory Control Blocks • Blocks of memory whether allocated or free are described by memory control blocks (MCBs). • Free memory control blocks represent “free” blocks of memory (FMCBs) • Allocated memory control blocks represent allocated blocks of memory. (AMCBs) • Free blocks are maintained on a linked list of FMCB’s ordered by either decreasing size, and/or increasing memory address.

Where are Memory Control blocks stored? • FMCB’s and AMCB’s may be stored in a system control block area that is separate and protected, accessible only by the OS • Alternately, they may be a part of the corresponding memory block they describe, conveniently placed as a header at the beginning of the block

Handling requests: • When an allocation request is received, the system searches the free list for a block that is large enough for the process. If the block selected is “too big” it is split in half, part is allocated to the process and the other returned to the free list. • When a process terminates or frees allocated memory, the released block is placed back into the list of free blocks. If the newly returned block is physically adjacent to other free blocks, they are joined together to form a new larger free block.

How do we quickly determine which blocks are free or allocated

Boundary Tag Method • Devised by Knuth in 1973 • Two MCB’s are placed in each region • At the end of each region is a simplified MCB containing the size of the block and an indication of whether or not the block is allocated • At the beginning of each block is a complete MCB containing the size of the block and all of the appropriate pointers to the next and previous FMCBs or AMCBs • This method simplifies the joining of adjacent free blocks. • As blocks are freed, it is simple to check the boundary tag of adjacent blocks. IF they are free then the blocks can be combined into a single larger block.

AMCB AMCB AMCB AMCB 120K 120K 120K 120K LMCB LMCB LMCB LMCB LMCB FMCB 240K

Bit Maps • Useful when memory is partitioned into blocks of equal fixed size. • Each block is represented by a bit, and the value of the bit indicates whether the block is free or allocated (1 allocated, 0 free) • Allocation is done in multiples of these fixed size blocks • The address and size of the memory blocks allocated must be stored in or linked to the PCB • When a process terminates, the appropriate bits in the bit map must be set back to zero to indicate that the memory blocks are now free.

Allocation Strategies for supporting Dynamic Allocation of a Varying number of variable sized blocks

* * * * * * 110K 356K 100K 200K 500K 450K FMCB FMCB FMCB FMCB FMCB FMCB First Fit Process A Requests 215K

First Fit • This method examines each FMCB starting from the beginning of the list of FMCBs and selects the first one large enough to fulfill the request. • The selected block is then divided into two parts • A portion large enough to fulfill the request • Remainder is returned to the FMCB (a new smaller block is created) • Method is simple and fast • May lead to a rapid breakdown of large blocks, because a small request may be filled from a large block. • Tendency for small blocks to collect at the front of the free list.

A roving pointer is maintained which points to the location where the last search ended. • New searches begin at this location • The list is treated as a circular list, so that the pointer in the “last” FMCB is linked to the beginning of the list • If the pointer returns to the place where it began, then request can not be fulfilled • Eliminates some of the problems with the “first-fit” strategy, because small blocks do not accumulate at the beginning of the list. • Allocation is more evenly distributed throughout the list and memory is used more evenly.