Group Norm for Learning Latent Structural SVMs

Daozheng Chen (UMD, College Park), Dhruv Batra (TTI-Chicago), Bill Freeman (MIT), Micah K. Johnson (GelSight, Inc.). Our goal: estimate model parameters and learn the complexity of the latent variable space.


Presentation Transcript

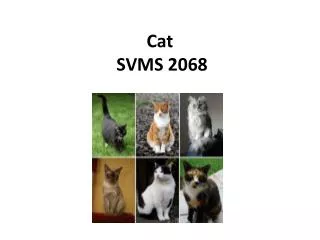

Overview

• Data with complete annotation is rarely available.
• Latent variable models capture the interaction between
  • observed data (e.g. gradient histogram image features)
  • latent or hidden variables not observed in the training data (e.g. location of object parts).
• Parameter estimation involves a difficult non-convex optimization problem (EM, CCCP, self-paced learning).

Our goal

• Estimate model parameters.
• Learn the complexity of the latent variable space.

Our approach

• Use an ℓ1-ℓ2 group norm for regularization to estimate the parameters of a latent-variable model.

Key Contribution: Inducing Group Norm

• w is partitioned into P groups; each group corresponds to the parameters of a latent variable state.
• The group norm is used for regularization.
• At the group level, the norm behaves like an ℓ1 norm and induces group sparsity.
• Within each group, the norm behaves like an ℓ2 norm and does not promote sparsity.

[Figure: Felzenszwalb et al. car model on the PASCAL VOC 2007 data. Each row is a component of the model (Component #1, Component #2), with root filters, part filters, and part displacement.]

[Figure: Digit recognition. Images, rotation (latent variable), feature vector.]

Latent Structural SVM

• Defined over a label space, a latent space, and a joint feature vector.
• The slide states a prediction rule and a learning objective; the equations are not reproduced in this transcript.

Alternating Coordinate and Subgradient Descent

• Rewrite the learning objective as a sum of terms, one nonconvex and two convex.
• Minimize an upper bound of the objective, which is convex if {h_i} is fixed.
• Use subgradient descent.

Experiment

• Digit recognition experiment (following the setup of Kumar et al., NIPS '10).
• MNIST data: binary classification on four difficult digit pairs: (1,7), (2,7), (3,8), (8,9).
• Training data: 5,851 to 6,742; testing data: 974 to 1,135.
• Rotate digit images with angles from -60° to 60°.
• PCA to form a 10-dimensional feature vector.

Results

[Figure: ℓ2 norm of the parameter vectors for different angles over the 4 digit pairs. Angle axis labeled from -60° to 60°.]

• Only a few angles are selected, far fewer than the 22 available angles.
• Angles not selected (figure labels): -60°, -12°, 0°, -48°, -36°, -48°, -48°, -60°.
• Significantly higher accuracy than random sampling.
• 66% faster than the full model with no loss in accuracy!
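
The group norm described in the transcript can be illustrated with a small sketch. It assumes the regularizer is the sum of per-group ℓ2 norms, the usual form of a group norm that acts like ℓ1 across groups and ℓ2 within each group. The function names, the group layout, and the toy weights are hypothetical and are not taken from the slides. The sketch shows how a group whose weights are all zero contributes nothing to the norm and can be dropped, which is how unneeded latent states (for example, rotation angles) would be pruned.

```python
import math


def group_norm(w, groups):
    """Sum over groups of the l2 norm of each group's weights (assumed form)."""
    return sum(math.sqrt(sum(w[i] ** 2 for i in g)) for g in groups)


def group_norm_subgradient(w, groups):
    """Return one valid subgradient of the group norm at w.

    For a nonzero group the subgradient is w_g / ||w_g||_2.
    For an all-zero group, the zero vector is a valid choice.
    """
    grad = [0.0] * len(w)
    for g in groups:
        norm = math.sqrt(sum(w[i] ** 2 for i in g))
        if norm > 0.0:
            for i in g:
                grad[i] = w[i] / norm
    return grad


def active_groups(w, groups, tol=1e-6):
    """Indices of groups whose l2 norm exceeds tol, i.e. latent states kept."""
    return [
        p
        for p, g in enumerate(groups)
        if math.sqrt(sum(w[i] ** 2 for i in g)) > tol
    ]


# Hypothetical toy setup: 3 latent states, 2 weights per state.
groups = [[0, 1], [2, 3], [4, 5]]
w = [3.0, 4.0, 0.0, 0.0, 0.6, 0.8]

print(group_norm(w, groups))              # about 5 + 0 + 1
print(group_norm_subgradient(w, groups))  # unit vectors for nonzero groups
print(active_groups(w, groups))           # the middle group is pruned
```

In a training loop, a subgradient like this would be added to the subgradient of the loss term while the latent variables {h_i} are held fixed. That pairing is an assumption about how the pieces fit together, not a reproduction of the authors' code.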