Mitigating the Effects of Flaky Tests on Mutation Testing


This study explores strategies to mitigate the effects of flaky tests on mutation testing, including comparing test suites by mutation score and guiding testing based on the mutant-test matrix. The results show that flakiness can significantly impact mutation testing, and mitigation strategies based on reruns and isolation are effective.


Presentation Transcript

Mitigating the Effects of Flaky Tests on Mutation Testing • August Shi, Jonathan Bell, Darko Marinov • ISSTA 2019, Beijing, China, 7/18/2019 • CCF-1421503, CNS-1646305, CNS-1740916, CCF-1763788, CCF-1763822, OAC-1839010

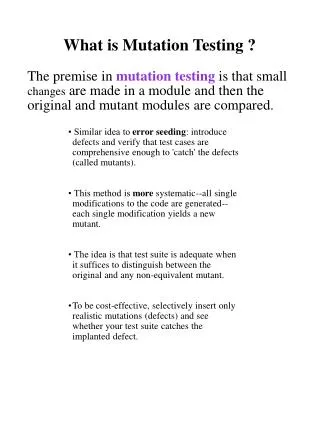

Mutation Testing • Run the test suite (test1, test2, test3) on each mutant of the code under test • Mutant 1: Killed • Mutant 2: Survived • Compare test suites by mutation score • Guide testing based on mutant-test matrix (slide stamp: UNRELIABLE)
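As a rough illustration of how a mutation score follows from a mutant-test matrix, here is a minimal sketch. The class name, method names, and the boolean matrix encoding (true means the test kills the mutant) are assumptions made for this example and are not PIT's data model.

```java
// Hypothetical sketch: rows are mutants, columns are tests.
// matrix[m][t] == true means test t fails on (kills) mutant m.
final class MutationScoreSketch {

    // A mutant counts as killed if at least one test kills it.
    static boolean isKilled(boolean[] row) {
        for (boolean killedByTest : row) {
            if (killedByTest) {
                return true;
            }
        }
        return false;
    }

    // Mutation score = killed mutants / all mutants (0.0 for an empty matrix).
    static double mutationScore(boolean[][] matrix) {
        if (matrix.length == 0) {
            return 0.0;
        }
        int killed = 0;
        for (boolean[] row : matrix) {
            if (isKilled(row)) {
                killed++;
            }
        }
        return (double) killed / matrix.length;
    }

    public static void main(String[] args) {
        boolean[][] matrix = {
            {false, true, false},  // Mutant 1: killed by test2
            {false, false, false}, // Mutant 2: survived
        };
        System.out.println(mutationScore(matrix)); // prints 0.5
    }
}
```

If a flaky test flips a single cell of this matrix between runs, both the score and the per-mutant verdicts can change, which is the problem the following slides address.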

Mutation Testing with Flaky Tests • Run 1 and Run 2 of the same tests (test1, test2, test3) on the same mutants can disagree • Mutant 1: Killed? • Mutant 2: Survived? • Get test suite with deterministic outcomes • Debug/fix flaky tests [1] • Remove/ignore flaky tests (slide stamp: STILL FLAKY) • [1] August Shi et al. “iFixFlakies: A Framework for Automatically Fixing Order-Dependent Tests”. ESEC/FSE 2019

Flaky Coverage Example • Other reasons for flakiness: • Concurrency • Randomness • I/O • Order dependency

    public class WatchDog {
        ...
        public void run() {
            ...
            synchronized (this) {
                // Timing-dependent: if the timeout has already elapsed,
                // timeLeft <= 0 and the loop body is never executed.
                long timeLeft = timeout - (System.currentTimeMillis() - startTime);
                isWaiting = timeLeft > 0;
                while (isWaiting) {
                    ...
                    wait(timeLeft);
                    ...
                }
            }
            ...
        }
    }

    public void test() {
        new WatchDog().run();
        ...
    }

Motivating Study • Measure flakiness of coverage • 30 open-source GitHub projects from prior work • No flaky test outcomes! (all 35,850 tests pass in 17 runs) • Rerun tests and measure differences in coverage • 113,356 (22%) statements with different tests covering across runs • 5,736 (16%) tests cover different statements across runs • Lots of flakiness in coverage, even without flaky outcomes!
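The measurement above (rerun the tests and compare coverage across runs) can be sketched as follows. This is only an illustration under assumptions: the class name, the representation of a run as a map from test name to covered statement identifiers, and identifiers such as "WatchDog:10" are hypothetical and not taken from the study's tooling.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical sketch: each run maps a test name to the statements it covered.
final class FlakyCoverageSketch {

    // Returns the tests whose covered-statement sets are not identical in every run.
    // Comparing every run against the first one is enough to detect any difference.
    static Set<String> testsWithFlakyCoverage(List<Map<String, Set<String>>> runs) {
        Set<String> flaky = new HashSet<>();
        if (runs.isEmpty()) {
            return flaky;
        }
        Map<String, Set<String>> first = runs.get(0);
        for (Map<String, Set<String>> run : runs.subList(1, runs.size())) {
            Set<String> allTests = new HashSet<>(first.keySet());
            allTests.addAll(run.keySet());
            for (String test : allTests) {
                Set<String> inFirst = first.getOrDefault(test, Set.of());
                Set<String> inRun = run.getOrDefault(test, Set.of());
                if (!inFirst.equals(inRun)) {
                    flaky.add(test);
                }
            }
        }
        return flaky;
    }

    public static void main(String[] args) {
        Map<String, Set<String>> run1 = Map.of("test", Set.of("WatchDog:6", "WatchDog:7"));
        Map<String, Set<String>> run2 =
            Map.of("test", Set.of("WatchDog:6", "WatchDog:7", "WatchDog:10"));
        System.out.println(testsWithFlakyCoverage(List.of(run1, run2))); // prints [test]
    }
}
```

A test can appear in this set even though it passes in every run, which matches the slide's point that coverage can be flaky without flaky outcomes.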

Mutation Testing with Flaky Coverage • Mutation: delete call • Mutation not covered!

    public class WatchDog {
        ...
        public void run() {
            ...
            synchronized (this) {
                long timeLeft = timeout - (System.currentTimeMillis() - startTime);
                isWaiting = timeLeft > 0;
                while (isWaiting) {
                    ...
                    wait(timeLeft);
                    ...
                }
            }
            ...
        }
    }

    public void test() {
        new WatchDog().run();
        ...
    }

Mutation Testing Results are Unreliable • Flakiness can shift mutation testing results • Mutation scores may be inflated/deflated • Mutant-test matrix unreliable • Need to mitigate the effects of flakiness on mutation testing! • Mitigation strategies based on reruns and isolation [2] • Implemented on PIT, a popular mutation testing tool for Java • https://doi.org/10.6084/m9.figshare.8226332 • https://github.com/hcoles/pitest/pull/534 • https://github.com/hcoles/pitest/pull/545 • [2] Jonathan Bell et al. “DeFlaker: Automatically Detecting Flaky Tests”. ICSE 2018

Mitigating Flakiness in Mutation Testing • Traditional mutation testing: full test-suite coverage collection → mutants to test → test-mutant prioritization → sorted tests per mutant → mutant execution • Improvements to cope with flakiness: rerun and isolate tests (coverage collection); run tests with least flaky coverage first (prioritization); track mutations covered and rerun/isolate tests (mutant execution) • See paper

Coverage Collection • When running multiple times, union coverage • More lines covered means more mutants generated • Run tests in isolation to remove test-order dependencies
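Taking the union of coverage over several runs could look like the sketch below. The class and method names, and the map-of-sets representation of a run, are assumptions for illustration and are not how PIT stores coverage.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical sketch: each run maps a test name to the statements it covered.
final class UnionCoverageSketch {

    // A statement is treated as covered by a test if any run saw the test cover it.
    static Map<String, Set<String>> unionCoverage(List<Map<String, Set<String>>> runs) {
        Map<String, Set<String>> union = new HashMap<>();
        for (Map<String, Set<String>> run : runs) {
            for (Map.Entry<String, Set<String>> entry : run.entrySet()) {
                union.computeIfAbsent(entry.getKey(), test -> new HashSet<>())
                     .addAll(entry.getValue());
            }
        }
        return union;
    }
}
```

Because the union can only grow as runs are added, more lines count as covered, and so more mutants become candidates for generation, as the slide notes.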

Executing Tests on Mutants • Monitor if tests actually execute mutated bytecode • Traditionally, mutant-test pair has status Killed or Survived • Only applicable if test executes the mutated bytecode • Mutant-test pair with test that does not execute mutated bytecode has new status Unknown • Test can potentially cover mutation, based on prior coverage
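The status rule for a single mutant-test pair can be sketched as below. The enum and method names are hypothetical; how PIT actually detects that the mutated bytecode was executed is not shown in the slides, so that signal appears here as a plain boolean.

```java
// Hypothetical sketch of the per-pair status described in the slide.
enum PairStatus { KILLED, SURVIVED, UNKNOWN }

final class PairStatusSketch {

    // Killed/Survived only apply when the test actually ran the mutated bytecode;
    // otherwise the outcome says nothing about the mutant and the pair is Unknown.
    static PairStatus classify(boolean executedMutatedBytecode, boolean testPassed) {
        if (!executedMutatedBytecode) {
            return PairStatus.UNKNOWN;
        }
        return testPassed ? PairStatus.SURVIVED : PairStatus.KILLED;
    }
}
```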

New Status for Mutants • Overall mutant status depends on status of all mutant-test pairs run for the mutant • Need to reduce number of Unknown mutants and pairs • Killed + Covered • Survived + Covered • Unknown (not covered)
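One plausible way to derive the overall mutant status from its pair statuses is sketched below. The aggregation rule is an assumption, since the slide does not spell it out: a mutant is Killed if any pair is Killed, Survived only if every pair is Survived, and Unknown otherwise (including when no pair was run). All names are hypothetical.

```java
import java.util.List;

// Hypothetical sketch: aggregate pair statuses into one mutant status.
final class MutantStatusSketch {

    enum Status { KILLED, SURVIVED, UNKNOWN }

    static Status overall(List<Status> pairStatuses) {
        boolean anyUnknown = false;
        for (Status status : pairStatuses) {
            if (status == Status.KILLED) {
                return Status.KILLED; // one killing test is enough
            }
            if (status == Status.UNKNOWN) {
                anyUnknown = true;
            }
        }
        // Assumption: with no pairs at all, nothing is known about the mutant.
        if (anyUnknown || pairStatuses.isEmpty()) {
            return Status.UNKNOWN;
        }
        return Status.SURVIVED;
    }
}
```

Under this rule a single Unknown pair keeps a mutant from being declared Survived, which is why reducing Unknown pairs matters for the final result.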

Rerunning Mutant-test Pairs • While status of mutant-test pair is Unknown, rerun • Change isolation level during reruns • Default: mutant-test pairs for mutants in same class in same JVM • More isolation: mutant-test pairs for same mutant in same JVM • Most isolation: mutant-test pairs in own JVM • Cost increases with isolation
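A rerun loop that escalates isolation while a pair stays Unknown might be sketched as follows. The fixed number of reruns per level, the strict escalation order, and all names (including the PairRunner callback that stands in for actually executing a pair) are assumptions for illustration; the paper evaluates different combinations of reruns and isolation.

```java
// Hypothetical sketch: rerun an Unknown mutant-test pair with increasing isolation.
final class RerunSketch {

    // Declared from cheapest to most expensive, so values() yields escalation order.
    enum Isolation { DEFAULT, MORE, MOST }

    enum Status { KILLED, SURVIVED, UNKNOWN }

    // Stand-in for running one mutant-test pair at a given isolation level.
    interface PairRunner {
        Status run(Isolation level);
    }

    static Status runWithEscalation(PairRunner runner, int rerunsPerLevel) {
        for (Isolation level : Isolation.values()) {
            for (int attempt = 0; attempt < rerunsPerLevel; attempt++) {
                Status status = runner.run(level);
                if (status != Status.UNKNOWN) {
                    return status; // stop as soon as the pair has a definite status
                }
            }
        }
        return Status.UNKNOWN; // still not covered, even in its own JVM
    }
}
```

Trying the cheaper levels first keeps cost down, since the most isolated level (one JVM per pair) is only reached by pairs that remain Unknown.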

Experimental Setup • Evaluate on same 30 projects in motivating study • All modifications on top of PIT mutation testing tool • RQ1: Flakiness in traditional mutation testing? • RQ2: Effect of coverage on mutants generated? (see paper) • RQ3: Effect of re-executing tests on mutant status? • RQ4: Prioritize tests for mutant-test executions? (see paper)

RQ1: Flakiness in Traditional Mutation Testing • Max difference in mutation score up to 23 percentage points (pp)! • Must improve mutation scores by more than this variance! • 9% of mutant-test pairs are Unknown (max up to 55%)! • Matrix results can be unreliable

RQ3: Mutant Re-execution Results • Increasing isolation greatly increases covered pairs • Unnecessary to rerun too often with the most isolation

Discussion • Flakiness can have negative effects beyond mutation testing • Tools/studies that rely on coverage must consider flakiness • Fault localization, program repair, test prioritization, test-suite reduction, test selection, test generation, runtime verification, … • Mitigation strategies applicable beyond mutation testing • Different isolation strategies for different tasks

Conclusions • Even seemingly non-flaky tests have flaky coverage • 22% of statements not covered consistently! • We present problems in mutation testing due to flakiness • We propose techniques to mitigate effects • Different combinations of reruns and isolation • We reduce Unknown mutants/pairs by 79.4%/88.1% • Flakiness can have negative effects beyond mutation testing • awshi2@illinois.edu • https://doi.org/10.6084/m9.figshare.8226332

Prioritizing Tests for Mutants • Run mutant-test pairs in the order that gets the overall mutant status faster, more reliably • Once mutant status known, no need to run more • Prioritize tests per mutant based on coverage • Tests with more “stable” coverage on mutant prioritized earlier • Later prioritize based on time • When to rerun? • Immediately rerun pair? • Run all pairs first before rerunning?
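The ordering described above (more stable coverage first, then time) could be sketched with a comparator. This is an assumption-laden illustration: "stability" is approximated here as the number of coverage runs in which the test covered the mutated statement, and the class and field names are hypothetical.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical sketch: order the tests to run against one mutant.
final class PrioritizationSketch {

    static final class TestInfo {
        final String name;
        final int runsCoveringMutant; // assumed proxy for "stable" coverage
        final long millis;            // measured execution time

        TestInfo(String name, int runsCoveringMutant, long millis) {
            this.name = name;
            this.runsCoveringMutant = runsCoveringMutant;
            this.millis = millis;
        }
    }

    // Most stable coverage first; ties are broken by shorter execution time.
    static List<TestInfo> prioritize(List<TestInfo> tests) {
        List<TestInfo> sorted = new ArrayList<>(tests);
        sorted.sort(Comparator
            .comparingInt((TestInfo t) -> t.runsCoveringMutant)
            .reversed()
            .thenComparingLong(t -> t.millis));
        return sorted;
    }
}
```

Running the most reliably covering tests first makes it more likely that the first few pairs yield a definite status, after which the remaining pairs for that mutant can be skipped.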

RQ2: Coverage and Mutant Generation • Not much difference in numbers of mutants and pairs • Can potentially use Default for mutant generation