INFORMATION SEARCH

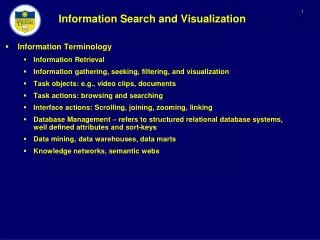



INFORMATION SEARCH Presenter: Pham Kim Son Saint Petersburg State University

Overview • Introduction • Overview of IR system and basic terminologies • Classical models of Information Retrieval • Boolean Model • Vector Model • Modern models of Information Retrieval • Latent Semantic Indexing • Correlation Method • Conclusion

Introduction • People need information to solve problems • Very simple things • Complex things • The “perfect search machine”, as defined by Larry Page, is something that understands exactly what you mean and returns exactly what you want.

Challenges to IR • Introduction of the WWW • The WWW is large • Heterogeneous • Poses challenges to IR

Overview of IR and basic terminologies • An IR system can be divided into 3 components • Input • Processor • Output [Diagram: Query and Documents feed the Input, which feeds the Processor, which produces the Output]

Input • The main task: obtain a good representation of each document and query for the computer to use • A document representative: a list of extracted keywords • Idea: let the computer process the natural language in the document

Input (cont) • Obstacles • The theory of human language has not been sufficiently developed for every language • It is not clear how to use it to enhance information retrieval

Input (cont) • Representing documents by keywords • Step 1: Removing common words • Step 2: Stemming

Step 1: Removing common words • Very high-frequency words are very common words (e.g. are, be, by, will, of, the) • They should be removed by comparing the text against a stop-list • As a result: • Non-significant words do not interfere with the IR process • The size of the document is reduced by 30 to 50 per cent (C.J. van Rijsbergen)
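The stop-list comparison can be sketched as follows. This is a minimal illustration, assuming whitespace tokenization and a hypothetical stop-list holding only the six example words from the slide; a real stop-list is much longer.

```python
# Hypothetical stop-list: only the six example words from the slide.
STOP_LIST = {"are", "be", "by", "will", "of", "the"}


def remove_common_words(text, stop_list=STOP_LIST):
    """Lowercase the text, split on whitespace, and drop stop-list words."""
    tokens = text.lower().split()
    return [token for token in tokens if token not in stop_list]


keywords = remove_common_words("The size of the document will be reduced")
print(keywords)  # ['size', 'document', 'reduced']
```

In this toy sentence five of the eight tokens are dropped, which shows how quickly common words add up.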

Step 2: Stemming • Definition: stemming is the process of chopping off the ending of a term, e.g. removing “ed”, “ing” • Algorithm: the Porter stemmer
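The idea of chopping endings can be illustrated with a deliberately naive suffix stripper. This is not the Porter algorithm, which applies several stages of rewrite rules; the suffix list and the minimum stem length below are assumptions made only for illustration.

```python
# Illustrative suffix list only; the Porter algorithm uses staged rewrite rules.
SUFFIXES = ("ing", "ed", "s")


def naive_stem(term, min_stem_len=3):
    """Chop the first matching suffix, keeping at least min_stem_len characters."""
    for suffix in SUFFIXES:
        if term.endswith(suffix) and len(term) - len(suffix) >= min_stem_len:
            return term[: -len(suffix)]
    return term


stems = [naive_stem(t) for t in ["retrieved", "indexing", "documents", "red"]]
print(stems)  # ['retriev', 'index', 'document', 'red']
```

The length guard keeps short words such as "red" intact, which a blind "remove ed" rule would destroy.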

Processor • This part of the IR system is concerned with the retrieval process • Structuring the documents in an appropriate way • Performing the actual retrieval function, using a predefined model

Output • The output is a set of documents assumed to be relevant to the user • Purpose of IR: • Retrieve all relevant documents • Retrieve as few irrelevant documents as possible

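Precision and recall • Definition: • $\text{Recall} = \frac{|\text{relevant docs retrieved}|}{|\text{relevant docs}|}$ • $\text{Precision} = \frac{|\text{relevant docs retrieved}|}{|\text{all docs retrieved}|}$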

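[Figure: precision (vertical axis, 0 to 1) plotted against recall (horizontal axis, 0 to 1). High precision, low recall: returns relevant documents but misses many useful ones too. High recall, low precision: returns most relevant documents but includes lots of junk. The ideal: precision and recall both equal to 1.]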
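Precision and recall can be computed directly from the set of retrieved documents and the set of relevant documents. The sketch below assumes the usual definitions (precision = relevant retrieved / all retrieved, recall = relevant retrieved / all relevant); the document ids are made up.

```python
def precision_recall(retrieved, relevant):
    """Return (precision, recall) for two collections of document ids."""
    retrieved, relevant = set(retrieved), set(relevant)
    hits = len(retrieved & relevant)  # relevant docs that were retrieved
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical run: 4 docs retrieved, 3 docs relevant, 2 in common.
precision, recall = precision_recall(
    retrieved=["doc1", "doc2", "doc3", "doc4"],
    relevant=["doc2", "doc4", "doc5"],
)
print(precision, recall)
```

Here precision is 2/4 and recall is 2/3: half of what was returned is junk, and one relevant document was missed.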

Information Retrieval Models • Classical models • Boolean model • Vector model • Novel models • Latent semantic indexing model • Correlation method

Boolean model • Earliest and simplest method, widely used in IR systems today • Based on set theory and Boolean algebra • Queries are Boolean expressions of keywords, connected by the operators AND, OR, AND NOT • Ex: (Saint Petersburg AND Russia) OR (beautiful city AND Russia)

Inverted files are widely used • Ex: • Term1: doc2, doc5, doc6 • Term2: doc2, doc4, doc5 • Query: q = (Term1 AND Term2) • Result: doc2, doc5 • A term-document matrix can also be used
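The inverted-file example above can be reproduced with Python sets. This is a minimal sketch; the document contents are hypothetical and were chosen only so that the posting lists match the slide.

```python
from collections import defaultdict


def build_inverted_file(docs):
    """Map each term to the set of document ids that contain it."""
    inverted = defaultdict(set)
    for doc_id, terms in docs.items():
        for term in terms:
            inverted[term].add(doc_id)
    return inverted


# Hypothetical documents chosen to reproduce the posting lists on the slide.
docs = {
    "doc2": ["term1", "term2"],
    "doc4": ["term2"],
    "doc5": ["term1", "term2"],
    "doc6": ["term1"],
}
inverted = build_inverted_file(docs)

# AND is set intersection; OR would be union (|), AND NOT difference (-).
result = sorted(inverted["term1"] & inverted["term2"])
print(result)  # ['doc2', 'doc5']
```

Because every operator maps to a set operation, the result is an unordered set with no ranking, which is the main limitation of the Boolean model.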

Thinking about the Boolean model • Advantages: • Very simple model based on set theory • Easy to understand and implement • Supports exact queries • Disadvantages: • Retrieval is based on a binary decision criterion, without any notion of partial matching • Sets are easy, but complex Boolean expressions are not • The Boolean queries formulated by users are most often too simplistic • Retrieves too many or too few documents • Gives unranked results

Vector Model • Why the Vector Model? • The Boolean model • Only takes into account the presence or absence of terms in a document • Has no notion of their different contributions to documents

Overview of the theory of the vector model • Documents and queries are represented as vectors in index-term space • The dimension of the space is equal to the vocabulary size • Components of these vectors: the weights of the corresponding index terms, which reflect their significance in terms of representative and discriminative power • Retrieval is based on whether the “query vector” and the “document vector” are close enough.

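Set of documents: $D = \{d_1, d_2, \dots, d_m\}$ • A finite set of terms: $T = \{t_1, t_2, \dots, t_n\}$ • Every document can be represented as a vector: $d_j = (w_{1j}, w_{2j}, \dots, w_{nj})$ • The same holds for the query: $q = (w_{1q}, w_{2q}, \dots, w_{nq})$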

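Similarity of query q and document $d_j$: $\mathrm{sim}(q, d_j) = \frac{q \cdot d_j}{\|q\| \, \|d_j\|}$, the cosine of the angle between the two vectors • Given a threshold, all documents with similarity > threshold are retrieved [Figure: document vector $d_j$ and query vector $q$ drawn against the term axes i and j]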
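Threshold-based retrieval can be sketched as below, assuming the cosine of the angle between the query vector and each document vector as the similarity measure. The three-term vectors and the threshold value are made up for illustration.

```python
import math


def cosine_similarity(q, d):
    """Cosine of the angle between two equal-length weight vectors."""
    dot = sum(qi * di for qi, di in zip(q, d))
    norm_q = math.sqrt(sum(x * x for x in q))
    norm_d = math.sqrt(sum(x * x for x in d))
    if norm_q == 0 or norm_d == 0:
        return 0.0  # an empty vector matches nothing
    return dot / (norm_q * norm_d)


def retrieve(query, documents, threshold):
    """Return (similarity, name) pairs above the threshold, best first."""
    scored = [(cosine_similarity(query, vec), name) for name, vec in documents.items()]
    return sorted(((s, n) for s, n in scored if s > threshold), reverse=True)


# Hypothetical weight vectors over a three-term vocabulary.
documents = {"d1": [1.0, 0.0, 1.0], "d2": [0.0, 1.0, 0.0], "d3": [1.0, 1.0, 1.0]}
query = [1.0, 0.0, 0.0]
ranked = retrieve(query, documents, threshold=0.5)
print([name for _, name in ranked])  # ['d1', 'd3']
```

Unlike the Boolean model, d3 is retrieved on a partial match and the answer comes back ranked.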

Computing a good weight • A variety of weighting schemes are available • They are based on three proven principles: • Terms that occur in only a few documents are more valuable than ones that appear in many • The more often a term occurs in a document, the more likely it is to be important to that document • A term that appears the same number of times in a short document and in a long one is likely to be more valuable for the former

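tf-idf-dl (tf-idf) scheme • The term frequency of a term $t_i$ in document $d_j$: $tf_{ij}$ = number of occurrences of $t_i$ in $d_j$ • The length of document $d_j$: • $DL_j$ = total number of term occurrences in document $d_j$ • Inverse document frequency: given a collection of N documents, the inverse document frequency of a term $t_i$ that appears in n documents is: $idf_i = \log \frac{N}{n}$ • Weight: $w_{ij}$ combines the three quantities, e.g. $w_{ij} = \frac{tf_{ij}}{DL_j} \cdot idf_i$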
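One way to combine the three quantities is sketched below. The exact weight formula is not recoverable from the transcript, so the form used here, (tf / DL) * log(N / n), is an assumption that merely follows the three principles listed earlier; the documents are made up.

```python
import math
from collections import Counter


def tf_idf_dl(docs):
    """Return {doc_id: {term: weight}} with weight = (tf / DL) * log(N / n).

    This particular combination is an assumed variant, not the slide's formula.
    """
    n_docs = len(docs)
    doc_freq = Counter()  # n: number of documents containing each term
    for terms in docs.values():
        doc_freq.update(set(terms))
    weights = {}
    for doc_id, terms in docs.items():
        tf = Counter(terms)  # raw term frequencies
        dl = len(terms)      # DL: total number of term occurrences
        weights[doc_id] = {
            term: (count / dl) * math.log(n_docs / doc_freq[term])
            for term, count in tf.items()
        }
    return weights


# Hypothetical documents, already reduced to keyword lists.
docs = {
    "d1": ["graph", "tree", "graph"],
    "d2": ["graph", "survey"],
    "d3": ["user", "survey", "system", "user"],
}
weights = tf_idf_dl(docs)
print(weights["d1"])
```

In d1 the term "tree" outweighs "graph" even though "graph" occurs twice, because "tree" appears in only one document of the collection.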

Thinking about the Vector model • Advantages: • Term weighting improves the quality of the answer • Partial matching allows retrieval of documents that approximate the query conditions • The cosine ranking formula sorts the answer • Disadvantages: • Assumes the independence of terms • The polysemy and synonymy problems are unsolved

Modern models of IR • Why? • Problems with polysemy: • Bass (fish or music?) • Problems with synonymy: • Car or automobile? • These failures can be traced to: • The way index terms are identified is incomplete • The lack of efficient methods to deal with polysemy • Idea to solve this problem: take advantage of the implicit (latent) higher-order structure in the association of terms with documents.

Latent Semantic Indexing (LSI) • LSI overview • Representing documents directly by terms is unreliable, ambiguous and redundant • We should find a method that can: • Represent documents and terms as vectors in a k-dimensional concept space, with weights indicating the strength of association with each of these concepts • Be flexible enough to remove weak concepts, which are considered noise

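• The term-document matrix A [n × m] is built, with one row per term and one column per document:

$$A = \begin{array}{c|cccc} & d_1 & d_2 & \cdots & d_m \\ \hline t_1 & w_{11} & w_{12} & \cdots & w_{1m} \\ t_2 & w_{21} & w_{22} & \cdots & w_{2m} \\ \vdots & \vdots & \vdots & & \vdots \\ t_n & w_{n1} & w_{n2} & \cdots & w_{nm} \end{array}$$

• Matrix A is factored into 3 matrices using the singular value decomposition (SVD): $A = U \Sigma V^T$ • U, V are orthogonal matrices • $\Sigma$ is the diagonal matrix of singular values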

These special matrices show a breakdown of the original relationship (doc-term) into linearly independent components (factors) • Many of these components are very small (they are considered noise) and should be ignored

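Criteria for choosing k: • Noise is ignored • Important information is not lost • Documents and terms are represented as vectors in a k-dimensional space • The Eckart-Young theorem guarantees that the rank-k truncation $A_k = U_k \Sigma_k V_k^T$ is the best rank-k approximation of A in the least-squares sense, so important information is not lost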
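The truncation to k factors can be sketched with NumPy. The 4-term by 3-document matrix and the choice k = 2 are assumptions made only for illustration; the last lines check the Eckart-Young property that the approximation error equals the norm of the discarded singular values.

```python
import numpy as np

# Hypothetical weight matrix: 4 terms (rows) x 3 documents (columns).
A = np.array([
    [1.0, 1.0, 0.0],
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0],
])

# Thin SVD: A = U @ diag(s) @ Vt, singular values in descending order.
U, s, Vt = np.linalg.svd(A, full_matrices=False)

k = 2  # number of concepts kept (an arbitrary choice here)
U_k, s_k, Vt_k = U[:, :k], s[:k], Vt[:k, :]
A_k = U_k @ np.diag(s_k) @ Vt_k  # rank-k approximation of A

# Frobenius error of the truncation vs. norm of the discarded singular values.
error = np.linalg.norm(A - A_k, "fro")
discarded = np.sqrt(np.sum(s[k:] ** 2))
print(error, discarded)
```

A common heuristic is to pick k where the singular values drop off sharply, since the discarded values bound what is lost.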

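Query • We need a method to map a query into the k-dimensional space • Query q can be seen as a document • From the equation $A_k = U_k \Sigma_k V_k^T$ • We have $V_k = A_k^T U_k \Sigma_k^{-1}$, so the query is mapped to $\hat{q} = q^T U_k \Sigma_k^{-1}$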
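Treating the query as a document can be sketched as follows, assuming the standard LSI folding-in formula $\hat{q} = q^T U_k \Sigma_k^{-1}$. The matrix, the query vector and k = 2 are hypothetical; the check at the end confirms that folding in an existing document column reproduces that document's coordinates in $V_k$.

```python
import numpy as np

# Hypothetical weight matrix: 4 terms (rows) x 3 documents (columns).
A = np.array([
    [1.0, 1.0, 0.0],
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0],
])
U, s, Vt = np.linalg.svd(A, full_matrices=False)
k = 2
U_k, s_k = U[:, :k], s[:k]


def fold_in(q, U_k, s_k):
    """Map a term-space vector q to k-dim concept space: q^T U_k Sigma_k^{-1}."""
    return (q @ U_k) / s_k  # dividing by s_k applies the inverse diagonal matrix


# Folding in the first document column gives back its row of V_k.
doc0_coords = fold_in(A[:, 0], U_k, s_k)
print(np.allclose(doc0_coords, Vt[:k, 0]))  # True

# A hypothetical query containing the first two terms.
query_coords = fold_in(np.array([1.0, 1.0, 0.0, 0.0]), U_k, s_k)
print(query_coords)
```

Once the query has k-dimensional coordinates, it can be compared with documents by cosine similarity in the concept space.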

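Similarity between objects • Term-term: • The dot product between two row vectors of matrix $A_k = U_k \Sigma_k V_k^T$ reflects the similarity between two terms • Term-term similarity matrix: $A_k A_k^T = U_k \Sigma_k^2 U_k^T$ • The rows of matrix $U_k \Sigma_k$ can be considered as coordinates of terms • The relation between taking rows of $U_k$ as coordinates and rows of $U_k \Sigma_k$ as coordinates is simple: since $\Sigma_k$ is diagonal, each axis is only stretched or shrunk by the corresponding singular value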

Document-document: • The dot product between two column vectors of matrix $A_k$ reflects the similarity between two documents: $A_k^T A_k = V_k \Sigma_k^2 V_k^T$ • The rows of matrix $V_k \Sigma_k$ can be considered as coordinates of documents • Term-document: • This value can be obtained by looking at the corresponding element of matrix $A_k$ • Drawback: between (term-document) and within (term-term, document-document) comparisons cannot be done simultaneously without rescaling the coordinates
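The three kinds of comparison can be checked numerically. The sketch below assumes the usual LSI coordinates (rows of $U_k \Sigma_k$ for terms, rows of $V_k \Sigma_k$ for documents) and a hypothetical 4-term by 3-document matrix.

```python
import numpy as np

# Hypothetical weight matrix: 4 terms (rows) x 3 documents (columns).
A = np.array([
    [1.0, 1.0, 0.0],
    [1.0, 0.0, 0.0],
    [0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0],
])
U, s, Vt = np.linalg.svd(A, full_matrices=False)
k = 2
A_k = U[:, :k] @ np.diag(s[:k]) @ Vt[:k, :]

term_coords = U[:, :k] * s[:k]   # rows of U_k Sigma_k, one per term
doc_coords = Vt[:k, :].T * s[:k]  # rows of V_k Sigma_k, one per document

term_term = term_coords @ term_coords.T  # equals A_k A_k^T
doc_doc = doc_coords @ doc_coords.T      # equals A_k^T A_k
term_doc = A_k                           # read directly from A_k

print(np.allclose(term_term, A_k @ A_k.T), np.allclose(doc_doc, A_k.T @ A_k))
```

Term-document values need a different scaling of the axes than the within comparisons, which is the drawback noted above.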

Example • q1: Human machine interface for Lab ABC computer applications • q2: A survey of user opinion of computer system response time • q3: The EPS user interface management system • q4: System and human system engineering testing of EPS • q5: Relation of user-perceived response time to error measurement • q6: The generation of random, binary, unordered tree • q7: The intersection graph of paths in trees • q8: Graph minors IV: Widths of trees and well-quasi-ordering • q9: Graph minors: A survey

Query: human computer interaction

Updating • Folding in • New documents • New terms and docs have no effect on the representation of pre-existing docs and terms • Re-computing the SVD • Requires time and memory • Choose one of these two methods

Thinking about LSI • Advantages: • The synonymy problem is solved • Documents are represented in a more reliable space: the concept space • Disadvantages: • The polysemy problem is still unsolved • Special algorithms for handling large matrices must be implemented

Correlation Method • Idea: if a keyword is present in the document, correlated keywords should be taken into account as well, so that the concepts contained in the document are not obscured by the choice of a specific vocabulary • In the vector space model, the similarity vector is: • Depending on the user query q, we now build the best query, taking the correlated keywords into account as well.

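The correlation matrix is built from the term-document matrix • Let A be the term-document matrix, with document vectors $a_d$ • D: number of documents; $\bar{a} = \frac{1}{D} \sum_{d=1}^{D} a_d$ is the mean vector • The covariance matrix is computed: $C = \frac{1}{D-1} \sum_{d=1}^{D} (a_d - \bar{a})(a_d - \bar{a})^T$ • Correlation matrix S: $S_{ij} = \frac{C_{ij}}{\sqrt{C_{ii} C_{jj}}}$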
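Building the keyword correlation matrix can be sketched with NumPy. The term-document matrix below is hypothetical, and the 1/(D-1) normalization of the covariance is an assumption; it cancels out in the correlation matrix in any case.

```python
import numpy as np

# Hypothetical term-document matrix: 3 terms (rows) x 5 documents (columns).
A = np.array([
    [2.0, 0.0, 1.0, 3.0, 0.0],
    [1.0, 0.0, 1.0, 2.0, 0.0],
    [0.0, 3.0, 0.0, 1.0, 2.0],
])
D = A.shape[1]  # number of documents

mean = A.mean(axis=1, keepdims=True)  # mean document vector
centered = A - mean
C = centered @ centered.T / (D - 1)   # term-term covariance matrix
std = np.sqrt(np.diag(C))
S = C / np.outer(std, std)            # term-term correlation matrix

print(np.round(S, 3))
```

S is a term-by-term matrix, so its size depends on the vocabulary and not on the number of documents. In this toy data the first two terms co-occur and are positively correlated, while the third is negatively correlated with both.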

Better query: • We now use the SVD to reduce noise in the keyword correlations: • We keep the k largest factors to obtain a rank-k approximation of S • Generate our best query: • Vector of similarity: • Define a projection by:

Strengths of the correlation method • In the real world, correlations between words are static • The number of terms is more stable than the number of documents • The number of documents is many times larger than the number of keywords • This method can handle a database with a very large number of documents and does not have to update the correlation matrix every time new documents are added • This is important in electronic networks

Conclusion • IR overview • Classical IR models : • Boolean model • Vector model • Modern IR models : • LSI • Correlation methods

References • Gheorghe Muresan: Using Document Clustering and Language Modeling in Mediated IR • Georges Dupret: Latent Concepts and the Number Orthogonal Factors in Latent Semantic Indexing • C.J. van Rijsbergen: Information Retrieval • Ricardo Baeza-Yates: Modern Information Retrieval • Sandor Dominich: Mathematical Foundation of Information Retrieval • IR lecture notes from www.cs.utexas.edu • Scott Deerwester, Susan T. Dumais: Indexing by Latent Semantic Analysis

Desktop Search • Design a desktop search that satisfies the following: • Does not affect the computer's performance • Privacy is protected • Able to search as many types of files as possible (consider music files) • Multi-language search • User-friendly • Supports semantic search if possible