Evaluation for M&E and Program Managers


Evaluation for M&E and Program Managers R&M Module 3

To the Participants/Expectations: • Your name, organization, and position? • Why did you sign up for this workshop? • How do you hope this course will help you in your job? • What areas of evaluation are you most interested in learning about?

Course Objective To give M&E and program managers the background needed to effectively manage evaluations.

Learning Outcomes • Understand the principles and importance of evaluation. • Learn basic evaluation design and the data collection methods commonly used in field-based evaluation. • Learn key considerations in planning and managing evaluations in the field. • Construct a learning agenda and evaluation plan for your grant. • Learn key steps in ensuring effective communication and utilization of evaluation findings. • Develop a terms of reference/evaluation plan.

Learning for Pact and Learning Principles • Solicit feedback daily • Asking is learning! Questions are welcome throughout the presentation. • Let us know if any areas, items, or considerations are missing • Learning by doing • Peer learning through development of the TOR (and presentations on the last day)

Session 1 What is Evaluation?

Session Learning Objectives: • Define evaluation • Explain the difference between monitoring, evaluation, and research • Describe why evaluations are conducted • Describe different types of evaluations • Describe common barriers to evaluations

Session 1.1 Defining Evaluation

What is Evaluation? “The systematic collection of information about the activities, characteristics, and results of programs to make judgments about the program, improve or further develop program effectiveness, inform decisions about future programming, and/or increase understanding.” -Michael Patton (1997, 23)

What is Evaluation? “The systematic and objective assessment of an ongoing or completed project, programme or policy, its design, implementation and results. The aim is to determine the relevance and fulfillment of objectives, development efficiency, effectiveness, impact, and sustainability.” -Organization for Economic Co-operation and Development (OECD; 2002, 21–22)

What is Evaluation? “The systematic collection and analysis of information about the characteristics and outcomes of programs and projects as a basis for judgments, to improve effectiveness, and/or inform decisions about programming.” -US Agency for International Development (2011, 2)

What is Evaluation? • Systematic: grounded in a system, method, or plan • Specific: focused on a project/program • Versatile: can ask many different types of questions • Useful: informs current and future programming

Evaluation is Action-Oriented Evaluation seeks to answer a range of questions that might lead to adjustment of project activities, including: • Is the program addressing a real problem, and is that problem the right one to address? • Is the intervention appropriate? • Are additional interventions necessary to achieve the objectives? • Is the intervention being implemented as planned? • Is the intervention effective and resulting in the desired change at a reasonable cost?

Session 1.2 Evaluation Versus Research; Evaluation Versus Monitoring

Evaluation vs Research • What is an example of research? What is an example of an evaluation? • What are the main differences between evaluation and research?

Evaluation vs Research (Fitzpatrick, Sanders, and Worthen, 2011).

Monitoring vs Evaluation • What are some key differences?

Differences between Monitoring and Evaluation (adapted from Jaszczolt, Potkański, and Alwasiak 2003)

Session 1.3 Why Evaluate?

Exercise: Why Evaluate? • Take a few minutes to reflect, based on your experience, on what evaluation means to your organization. • What would you consider the key reasons why your organization should invest in evaluation? • Write down three points you might say to someone else to explain why evaluation is important to your project.

Why Evaluate? Common reasons to evaluate are: • To measure a program’s value or benefits • To get recommendations to improve a project • To improve understanding of a project • To inform decisions about future projects

Session 1.4 Types of Evaluations

Types of Evaluation Once you determine your evaluation purpose, consider what type of evaluation will fulfill it. Broad types are: • Formative evaluation • Summative evaluation • Process evaluation • Outcome evaluation • Impact evaluation

Formative Evaluations • Undertaken during program design or early in the implementation phase to assess whether planned activities are appropriate. • Examine whether the assumed ‘operational logic’ corresponds with the actual operations and what immediate consequences implementation may produce. • Sub-types include: needs assessments, contextual scans, feasibility assessments, and baseline assessments

Summative Evaluations • Final assessments done at the end of a project. • The results help in deciding whether to continue or terminate the program, or whether it would be worth scaling up or replicating it. • A summative evaluation determines the extent to which anticipated outcomes were produced. • This kind of evaluation focuses on the program’s effectiveness, assessing the results of the program.

Process Evaluations • Examine whether a program has been implemented as intended: whether activities are taking place, whom they reach, who is conducting them, and whether inputs have been sufficient. • Also referred to as “implementation evaluation.”

Formative and Summative Evaluations "When the cook tastes the soup, that's formative; when the guests taste the soup, that's summative" Bob Stake, quoted in Scriven, 1991

Outcome Evaluations • An outcome evaluation examines a project’s short-term, intermediate, and long-term outcomes. • While process evaluation may examine the number of people receiving services and the quality of those services, outcome evaluation measures the changes that may have resulted in people’s attitudes, beliefs, behaviors, and health outcomes. • Outcome evaluation may also study changes in the environment, such as policy and regulatory changes.

Impact Evaluations • Assess the long-term effect of the project on its end goals, e.g., disease prevalence, resilience, or stability. • The most rigorous type of outcome evaluation: they use statistical methods and comparison groups to attribute change to a particular project or intervention.

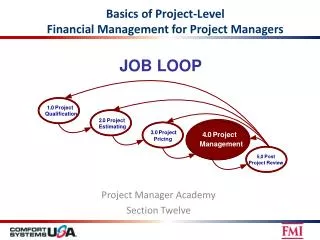

Linking Types of Evaluations to the Program Logic Model [diagram: the logic model chain (Inputs, Activities, Outputs, Outcomes, Impact) shown alongside process, outcome, and impact evaluations and the baseline/formative, midterm, and endline/summative evaluation points]

Exercise: Choosing Evaluation Type Identify which evaluation type is the most appropriate in each situation: • You are interested in knowing whether the project is being implemented on budget and according to the workplan. • You are interested in knowing what the effect of the project has been. • You want to determine whether to scale up project activities. • You are about to begin project activities but want to determine whether the proposed activities fit the current context.

Internal and External Evaluations Who conducts the evaluation? • Internal evaluations are conducted by people within the implementing organization. • External evaluations are conducted by people not affiliated with the implementing organization (although in close collaboration with project staff). • Hybrid evaluations are conducted by teams with representatives from both.

Session 1.5 Barriers to Evaluations

Exercise: Barriers to Evaluation Many programs are not properly evaluated, and as a result it is very difficult to replicate or scale up the interventions that are most effective. • What are some of the barriers that prevent evaluation of programs? • To what extent do you believe program implementers are open to evaluating their programs, and what are some of the underlying reasons for this? • Write down five common barriers to evaluation.

A Few Barriers: • Lack of time, knowledge, and skills • Lack of resources or budget • Poor design or planning • Project startup activities compete with baseline data collection • Overly complex evaluation design • Fear of negative findings • Resistance to M&E seen as police work • Resistance to using resources for M&E • Perception that evaluations are not useful • Disinterest in midline or endline evaluations when there is no baseline • What else?

Session 2 Evaluation Purpose and Questions

Session Learning Objectives: • Use logic models to explain program theory. • Write an evaluation purpose statement. • Develop evaluation questions. To begin to build terms of reference, participants will: • Describe the program using a logic model (TOR I-B and II-C). • Complete a stakeholder analysis (TOR II-A). • Write an evaluation purpose statement (TOR II-B). • Develop evaluation questions (TOR III).

Terms of Reference (TOR) • Comprehensive, written plan for the evaluation. • Articulates the evaluation’s specific purposes, the design and data collection needs, the resources available, the roles and responsibilities of different evaluation team members, the timelines, and other fundamental aspects of the evaluation. • Facilitates clear communication of evaluation plans to other people. • If the evaluation will be external, the TOR helps communicate expectations to the consultant(s) and then manage their work. A TOR template can be found in Appendix 1 (page 89).

Evaluation Plan/TOR Roadmap • Background of the evaluation • Brief description of the program • Purpose of the evaluation • Evaluation questions • Evaluation methodology • Evaluation team • Schedule and logistics • Reporting and dissemination plan • Budget • Timeline • Ethical considerations

Session 2.1 Focusing the Evaluation

Focusing the Evaluation • Focusing an evaluation means determining its major purpose. • Why do we have to do this? • Stakeholders usually have a broad range of interests and expectations • Evaluation resources and budgets are usually limited, so not everything can be evaluated • Evaluations must focus on generating useful information; plenty of interesting information is not particularly useful • In an evaluation report, the results must come across clearly so they can be acted on • What may happen if we don’t do this?

Key Steps in Focusing the Evaluation • Use a logic model to understand and document the program logic. • Document key assumptions underlying the program logic. • Engage stakeholders to determine what they need to know from the evaluation. • Write a purpose statement for the evaluation. • Develop a realistic set of questions that will be answered by the evaluation.

Logic Model • Visually describes the program’s hypothesis of how project activities will create impact. • Useful in distilling the program logic into its key components and relationships. • Results frameworks, logframes, theories of change, and conceptual models also facilitate visualization of program logic. • Start by describing the project’s long-term goals, and work towards the left.

Logic Model • Program logic, also called the program theory, is the reasoning underlying the program design. • The logic can often be expressed with if–then statements. • Making the assumptions behind a program explicit makes it easier to develop good evaluation questions.

Logic Model • If we give people bednets, then they will use them over their beds. • Or: if we educate 60% of adults about mosquito prevention, then the mosquito population will decline.

Key Assumptions • Every program has assumptions. • Using a logic model can help make those assumptions clear. • For example: a logic model could show an expected output of administering vaccinations to 2,000 children and an outcome of fewer children getting sick as a result. What are the underlying assumptions here?