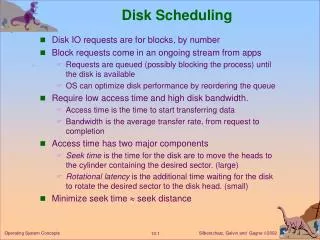

Disk Scheduling



Presentation Transcript

Disk Scheduling

Because disk I/O is so important, it is worth our time to investigate some of the issues involved in it. One of the biggest issues is disk performance.

Seek time is the time required for the read head to move to the track containing the data to be read.

Rotational delay, or latency, is the time required for the desired sector to rotate under the read head.

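Performance Parameters

Figure: timeline of a disk request, with the phases wait for device, wait for channel, seek, rotational delay (latency), and data transfer; a "device busy" span is also marked.

Seek time is the time required to move the disk arm to the specified track:

Ts = (number of tracks) * (disk constant) + startup time

Rotational delay is the time required for the data on that track to come underneath the read heads. For a hard drive rotating at 3600 rpm, one revolution takes 16.7 ms, and on average the data is half a revolution away, so the average rotational delay will be 8.3 ms.

Transfer time is the time required to move the data once the sector is under the head:

Tt = bytes / (rotation_speed * bytes_on_track)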
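The three formulas on this slide can be sketched in a few lines of Python. This is only an illustration: the function and parameter names are made up here, and the disk constant and startup time have to come from the drive's specifications.

```python
def seek_time_ms(tracks, ms_per_track, startup_ms):
    """Ts = number of tracks * disk constant + startup time."""
    return tracks * ms_per_track + startup_ms


def avg_rotational_delay_ms(rpm):
    """On average the data is half a revolution away from the head."""
    ms_per_revolution = 60_000 / rpm
    return ms_per_revolution / 2


def transfer_time_ms(nbytes, rpm, bytes_on_track):
    """Tt = bytes / (rotation_speed * bytes_on_track), in milliseconds."""
    revolutions_per_ms = rpm / 60_000
    return nbytes / (revolutions_per_ms * bytes_on_track)


if __name__ == "__main__":
    print(round(avg_rotational_delay_ms(3600), 1))  # 8.3
```

Reading one full track at 3600 rpm takes one revolution, about 16.7 ms, which is the figure the read-time examples in this deck use.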

Data Organization vs. Performance

Consider a file where the data is stored as compactly as possible. In this example the file occupies all of the sectors on 8 adjacent tracks (32 sectors x 8 tracks = 256 sectors total).

The time to read the first track will be:

| Component | Time |
|---|---|
| Average seek time | 20 ms |
| Rotational delay | 8.3 ms |
| Read 32 sectors | 16.7 ms |
| Total | 45 ms |

Assuming that there is essentially no seek time on the remaining tracks, each successive track can be read in 8.3 + 16.7 = 25 ms.

Total read time = 45 ms + 7 * 25 ms = 220 ms = 0.22 seconds

If the data is randomly distributed across the disk, then for each sector we have:

| Component | Time |
|---|---|
| Average seek time | 20 ms |
| Rotational delay | 8.3 ms |
| Read 1 sector | 0.5 ms |
| Total | 28.8 ms |

Total time = 256 sectors * 28.8 ms/sector = 7372.8 ms, or about 7.37 seconds
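The arithmetic for the two layouts can be checked with a short script. The constants are the ones given on the slides; the names are chosen here for illustration.

```python
SEEK_MS = 20.0           # average seek time
ROTATION_MS = 8.3        # average rotational delay
TRACK_READ_MS = 16.7     # time to read all 32 sectors of one track
SECTOR_READ_MS = 0.5     # time to read a single sector
SECTORS_PER_TRACK = 32
TRACKS = 8


def sequential_read_ms():
    """File packed onto adjacent tracks: one seek, then track after track."""
    first_track = SEEK_MS + ROTATION_MS + TRACK_READ_MS
    other_tracks = (TRACKS - 1) * (ROTATION_MS + TRACK_READ_MS)
    return first_track + other_tracks


def random_read_ms():
    """Every sector somewhere else: seek and rotational delay per sector."""
    per_sector = SEEK_MS + ROTATION_MS + SECTOR_READ_MS
    return SECTORS_PER_TRACK * TRACKS * per_sector


if __name__ == "__main__":
    print(f"sequential: {sequential_read_ms() / 1000:.2f} s")
    print(f"random:     {random_read_ms() / 1000:.2f} s")
```

With these numbers the compact layout takes about 0.22 seconds, and the scattered layout takes 256 * 28.8 ms = 7372.8 ms, about 7.37 seconds.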

In the previous example, what is the biggest factor in performance? Seek time! To improve performance, we need to reduce the average seek time.

Figure: a queue of pending disk requests.

The operating system keeps a queue of requests to read/write the disk. In these exercises we assume that all of the requests are already on the queue.

If requests are scheduled in random order, then we would expect the disk tracks to be visited in a random order.

First-Come, First-Served Scheduling

If there are only a few processes competing for the drive, then we can hope for good performance. If there are a large number of processes competing for the drive, then performance approaches the random scheduling case.

While at track 15, assume some random set of read requests: tracks 4, 40, 11, 35, 7, and 16. Serving them in the order they arrived gives:

| Head path | Tracks traveled |
|---|---|
| 15 to 4 | 11 |
| 4 to 40 | 36 |
| 40 to 11 | 29 |
| 11 to 35 | 24 |
| 35 to 7 | 28 |
| 7 to 16 | 9 |
| Total | 137 tracks |
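The first-come, first-served total can be reproduced with a minimal sketch. The function name is hypothetical, and the model only counts tracks crossed, ignoring rotational delay and transfer time.

```python
def fcfs_distance(start, requests):
    """Total tracks crossed when requests are served in arrival order."""
    total = 0
    position = start
    for track in requests:
        total += abs(track - position)
        position = track
    return total


if __name__ == "__main__":
    print(fcfs_distance(15, [4, 40, 11, 35, 7, 16]))  # 137
```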

Shortest Seek Time First

Always select the request that requires the shortest seek time from the current position.


While at track 15, assume some random set of read requests: tracks 4, 40, 11, 35, 7, and 16. Shortest Seek Time First gives:

| Head path | Tracks traveled |
|---|---|
| 15 to 16 | 1 |
| 16 to 11 | 5 |
| 11 to 7 | 4 |
| 7 to 4 | 3 |
| 4 to 35 | 31 |
| 35 to 40 | 5 |
| Total | 49 tracks |

Problem? In a heavily loaded system, incoming requests with a shorter seek time will constantly push requests with long seek times to the end of the queue. This results in what is called "starvation".
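Shortest Seek Time First can be sketched as a greedy loop that always picks the pending request closest to the head. The function name is made up for this example, and it assumes (as the exercises do) that every request is already on the queue, so it cannot show starvation, which needs new requests arriving over time.

```python
def sstf_schedule(start, requests):
    """Return (service order, total tracks crossed) under SSTF."""
    pending = list(requests)
    position = start
    order = []
    total = 0
    while pending:
        # Greedy choice: the pending request nearest to the current position.
        nearest = min(pending, key=lambda track: abs(track - position))
        pending.remove(nearest)
        total += abs(nearest - position)
        position = nearest
        order.append(nearest)
    return order, total


if __name__ == "__main__":
    order, total = sstf_schedule(15, [4, 40, 11, 35, 7, 16])
    print(order, total)  # [16, 11, 7, 4, 35, 40] 49
```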

The Elevator Algorithm (Scan-Look)

Search for the shortest seek time from the current position in one direction only. Continue in this direction until all requests in this direction have been satisfied, then go in the opposite direction. In the scan variant, the head moves all the way to the first (or last) track before it changes direction; in the look variant, it reverses as soon as no requests remain ahead of it.


While at track 15, assume some random set of read requests: tracks 4, 40, 11, 35, 7, and 16. The head is moving towards higher-numbered tracks. Scan-Look gives:

| Head path | Tracks traveled |
|---|---|
| 15 to 16 | 1 |
| 16 to 35 | 19 |
| 35 to 40 | 5 |
| 40 to 11 | 29 |
| 11 to 7 | 4 |
| 7 to 4 | 3 |
| Total | 61 tracks |
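The look variant used in this example can be sketched by splitting the queue into requests ahead of the head and requests behind it. The function name and the `moving_up` flag are assumptions made for this illustration.

```python
def look_schedule(start, requests, moving_up=True):
    """Return (service order, total tracks crossed) for the look variant.

    The head serves everything ahead of it in its current direction,
    then reverses at the last request rather than at the edge of the disk.
    """
    higher = sorted(t for t in requests if t >= start)
    lower = sorted((t for t in requests if t < start), reverse=True)
    order = higher + lower if moving_up else lower + higher

    total = 0
    position = start
    for track in order:
        total += abs(track - position)
        position = track
    return order, total


if __name__ == "__main__":
    print(look_schedule(15, [4, 40, 11, 35, 7, 16]))
    # ([16, 35, 40, 11, 7, 4], 61)
```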

Which algorithm would you choose if you were implementing an operating system?

Issues to consider when selecting a disk scheduling algorithm:

• Performance is based on the number and types of requests.
• What scheme is used to allocate unused disk blocks?
• How and where are directories and i-nodes stored?
• How does paging impact disk performance?
• How does disk caching impact performance?

Disk Cache

The disk cache holds a number of disk blocks in memory, usually in RAM on the disk controller. When an I/O request is made for a particular block, the disk cache is checked. If the block is in the cache, it is read from the cache. Otherwise, the required block (and often some contiguous blocks) is read into the cache.

Replacement Strategies

• Least Recently Used: replace the block that has been in the cache the longest without being referenced.
• Least Frequently Used: replace the block that has been used the least.
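A Least Recently Used block cache can be sketched with Python's `OrderedDict`, which remembers insertion order and lets an entry be moved to the end when it is referenced. The class name and the `read_block` callback are hypothetical; a real controller cache would also handle writes and read-ahead of contiguous blocks.

```python
from collections import OrderedDict


class LRUBlockCache:
    """Toy disk block cache with least-recently-used replacement."""

    def __init__(self, capacity, read_block):
        self.capacity = capacity
        self.read_block = read_block   # assumed callable: block number -> data
        self.blocks = OrderedDict()    # oldest reference first

    def get(self, block_no):
        if block_no in self.blocks:
            # Cache hit: mark the block as the most recently referenced.
            self.blocks.move_to_end(block_no)
            return self.blocks[block_no]
        # Cache miss: go to the disk, evicting the LRU block if full.
        data = self.read_block(block_no)
        if len(self.blocks) >= self.capacity:
            self.blocks.popitem(last=False)
        self.blocks[block_no] = data
        return data


if __name__ == "__main__":
    cache = LRUBlockCache(2, lambda n: f"data for block {n}")
    for n in (1, 2, 1, 3):
        cache.get(n)
    print(list(cache.blocks))  # [1, 3]: block 2 was least recently used
```

Least Frequently Used would instead keep a reference count per block and evict the block with the smallest count.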

RAID: Redundant Array of Independent Disks

• Push performance
• Add reliability

RAID Level 0: Striping

Figure: the disk management software presents one logical disk (strip 0, strip 1, strip 2, ..., strip 11, ...) and maps its strips alternately onto two physical drives. Physical drive 1 holds strips 0, 2, 4, 6, ... and physical drive 2 holds strips 1, 3, 5, 7, .... A stripe is one row of strips across the drives.
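The mapping from a logical strip to a physical location can be sketched as simple modular arithmetic, assuming strips are dealt out round-robin across the drives. The function name is hypothetical, and drives and rows are numbered from 0 here, so drive index 0 corresponds to "Physical Drive 1" in the figure.

```python
def locate_strip(strip_no, num_drives):
    """Map a logical strip number to (drive index, row on that drive)."""
    drive = strip_no % num_drives    # round-robin across the drives
    row = strip_no // num_drives     # which stripe the strip belongs to
    return drive, row


if __name__ == "__main__":
    for strip in range(8):
        drive, row = locate_strip(strip, num_drives=2)
        print(f"strip {strip} -> drive {drive + 1}, row {row}")
```

Because consecutive strips land on different drives, a large sequential request can be served by both drives in parallel.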

RAID Level 1: Mirroring

High reliability.

Figure: the disk management software presents one logical disk (strip 0, strip 1, strip 2, ...) backed by four physical drives. Writes to drive 1 and drive 2 are duplicated on the other two drives.

RAID Level 3: Parity

High throughput.

Figure: the disk management software presents one logical disk backed by four physical drives. Data strips are spread across the drives, and a parity strip (para, parb, parc, pard) is stored for each stripe.
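The slide does not say how the parity strips are computed. The usual scheme is a bytewise XOR of the data strips in a stripe, which lets any one missing strip be rebuilt from the others. The sketch below assumes that scheme; the function names are invented for this example.

```python
from functools import reduce


def parity_strip(strips):
    """Bytewise XOR of equally sized strips."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*strips))


def rebuild_strip(surviving_strips, parity):
    """Recover the one missing strip from the survivors and the parity."""
    return parity_strip(list(surviving_strips) + [parity])


if __name__ == "__main__":
    data = [b"\x01\x02", b"\x0f\x00", b"\xf0\xff"]
    parity = parity_strip(data)
    # Pretend the drive holding data[1] failed.
    print(rebuild_strip([data[0], data[2]], parity) == data[1])  # True
```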

Thinking about what you have learned

Suppose that 3 processes, p1, p2, and p3, are attempting to concurrently use a machine with interrupt-driven I/O. Assuming that no two processes can be using the CPU or the physical device at the same time, what is the minimum amount of time required to execute the three processes, given the following (ignore context switches)?

| Process | Compute time | Device time |
|---|---|---|
| 1 | 10 | 50 |
| 2 | 30 | 10 |
| 3 | 15 | 35 |

Figure: timeline showing when p1, p2, and p3 run, on a time axis from 0 to 130 with 105 marked.
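One way to check the answer is to try every order in which the processes could be run. The sketch below assumes that each process does all of its computing first and then all of its device I/O, which the problem statement does not say explicitly; the names are made up for illustration.

```python
from itertools import permutations

# (compute time, device time) per process, from the table in the problem
JOBS = {"p1": (10, 50), "p2": (30, 10), "p3": (15, 35)}


def finish_time(order):
    """Finish time if processes use the CPU, then the device, in this order."""
    cpu_free = 0      # time at which the CPU next becomes free
    device_free = 0   # time at which the device next becomes free
    for name in order:
        compute, device = JOBS[name]
        cpu_free += compute
        # The device phase starts once both the process and the device are ready.
        device_free = max(device_free, cpu_free) + device
    return device_free


if __name__ == "__main__":
    best = min(permutations(JOBS), key=finish_time)
    print(best, finish_time(best))
```

Under that assumption the minimum is 105 time units: the device cannot start before the shortest compute phase (10) has finished, and it then needs 50 + 10 + 35 = 95 units of work, so running p1 first keeps the device busy from time 10 to time 105.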

Consider the case where the device controller is double buffering I/O. That is, while the process is reading a character from one buffer, the device is writing to the second. What is the effect on the running time of the process if the process is I/O bound and requests characters faster than the device can provide them?

Figure: a process reading from buffers A and B in the device controller.

The process reads from buffer A. It tries to read from buffer B, but the device is still filling it. The process blocks until the data has been stored in buffer B. The process wakes up and reads the data, then tries to read buffer A. Double buffering has not helped performance.

Consider the case where the device controller is double buffering I/O. That is, while the process is reading a character from one buffer, the device is writing to the second. What is the effect on the running time of the process if the process is compute bound and requests characters much more slowly than the device can provide them?

Figure: a process reading from buffers A and B in the device controller.

The process reads from buffer A. It then computes for a long time. Meanwhile, buffer B is filled. When the process asks for the data, it is already there. The process does not have to wait, and performance improves.

Suppose that the read/write head is at track 97, moving toward the highest-numbered track on the disk, track 199. The disk request queue contains read/write requests for blocks on tracks 84, 155, 103, 96, and 197, respectively. How many tracks must the head step across using a FCFS strategy?

Under FCFS the requests are served in queue order:

| Head path | Steps |
|---|---|
| 97 to 84 | 13 |
| 84 to 155 | 71 |
| 155 to 103 | 52 |
| 103 to 96 | 7 |
| 96 to 197 | 101 |
| Total | 244 steps |

Suppose that the read/write head is at track 97, moving toward the highest-numbered track on the disk, track 199. The disk request queue contains read/write requests for blocks on tracks 84, 155, 103, 96, and 197, respectively. How many tracks must the head step across using an elevator strategy?

Under the elevator (scan) strategy the head continues up to track 199 before it reverses:

| Head path | Steps |
|---|---|
| 97 to 103 | 6 |
| 103 to 155 | 52 |
| 155 to 197 | 42 |
| 197 to 199 | 2 |
| 199 to 96 | 103 |
| 96 to 84 | 12 |
| Total | 217 steps |
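The scan variant used in this answer differs from look only in that the head runs to the edge of the disk before turning around. A minimal sketch follows, with hypothetical names; it makes one assumption beyond the slides, namely that the head stops at the farthest request if nothing is waiting behind it.

```python
def scan_distance(start, requests, last_track, first_track=0, moving_up=True):
    """Tracks crossed by a scan sweep that reverses at the edge of the disk."""
    if moving_up:
        ahead = [t for t in requests if t >= start]
        behind = [t for t in requests if t < start]
        edge = last_track
    else:
        ahead = [t for t in requests if t <= start]
        behind = [t for t in requests if t > start]
        edge = first_track

    if not behind:
        # Assumption: with nothing to come back for, stop at the last request.
        return max((abs(t - start) for t in ahead), default=0)

    # Run to the edge, then back to the farthest request on the other side.
    farthest_behind = min(behind) if moving_up else max(behind)
    return abs(edge - start) + abs(edge - farthest_behind)


if __name__ == "__main__":
    print(scan_distance(97, [84, 155, 103, 96, 197], last_track=199))  # 217
```

The total is just the run from 97 up to 199 (102 tracks) plus the run from 199 back down to 84 (115 tracks).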

In our class discussion on directories, it was suggested that directory entries are stored as a linear list. What is the big disadvantage of storing directory entries this way, and how could you address this problem?

Consider what happens when you look up a file: the directory must be searched linearly, one entry at a time.

Which file allocation scheme discussed in class gives the best performance? What are some of the concerns with this approach?

Contiguous allocation gives the best performance. Two big problems are:

* Finding space for a new file (it must all fit in contiguous blocks)
* Allocating space when we don't know how big the file will be, or handling files that grow over time

What is the difference between internal and external fragmentation?

Internal fragmentation occurs when only a portion of a file block is used by a file. External fragmentation occurs when the free space on a disk is broken into pieces, so that no single contiguous region is large enough to hold a file even though the total free space may be sufficient.

Linked allocation of disk blocks solves many of the problems of contiguous allocation, but it does not work very well for random access files. Why not?

To access a random block of the file, you must walk through the list from the first block up to the block you need.
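The cost of random access under linked allocation can be illustrated with a toy model in which each disk block stores the number of the next block of the file. The dictionary standing in for the disk and the function name are assumptions made for this sketch.

```python
def read_nth_block(next_block, first_block, n):
    """Find the n-th block (0-based) of a file by following its links.

    Returns (disk block number, number of blocks that had to be read).
    """
    block = first_block
    blocks_read = 1                 # the first block itself
    for _ in range(n):
        block = next_block[block]   # the link is stored inside the block
        blocks_read += 1
    return block, blocks_read


if __name__ == "__main__":
    # Hypothetical file occupying disk blocks 9 -> 16 -> 1 -> 10 -> 25
    chain = {9: 16, 16: 1, 1: 10, 10: 25, 25: None}
    print(read_nth_block(chain, first_block=9, n=3))  # (10, 4)
```

Reaching the block at position n costs n + 1 block reads, whereas contiguous allocation can compute the block's address directly.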

Linked allocation of disk blocks has a reliability problem. What is it?

If a link breaks for any reason, the disk blocks after the broken link are inaccessible.