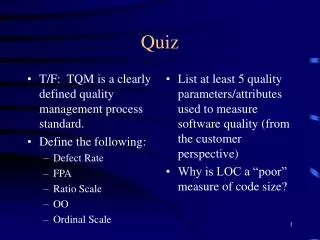

Quiz

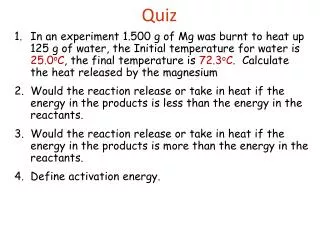

• T/F: TQM is a clearly defined quality management process standard. • Define the following: Defect Rate, FPA, Ratio Scale, OO, Ordinal Scale. • List at least 5 quality parameters/attributes used to measure software quality (from the customer perspective). • Why is LOC a “poor” measure of code size?


Presentation Transcript


Project Sample: OS X • Project Replaced Carbon • and NeXT and Yellow Box and... • Developers • Customers • The Media • iCEO

Software Quality Engineering: CS410 Class 3a, Measurement Theory

Measurement Theory • “It is an undisputed statement that measurement is crucial to the progress of all sciences” (Kan 1995) • “Scientific progress is made through observations and generalizations based on data and measurements, the derivation of theories as a result, and in turn the confirmation or refutation of theories via hypothesis testing…” (Kan 1995)

Measurement Theory • Basic measurement theory steps • Proposition • an idea is proposed • Definition • components of the idea are defined • Operational Definition • operational characteristics of components are identified • Metric definition • metrics are identified based on operational definition

Measurement Theory • Hypothesis definitions • hypotheses are drawn from a combination of the proposition and the definitions • Testing and metric gathering • testing is performed and empirical data is collected • Confirmation or refutation of hypothesis • hypotheses are confirmed or refuted based on analysis of empirical data

Measurement Theory • Example: • Proposition - “the more rigorous the front end of the software development process is executed, the better the quality at the back end” • Definitions • Front end SW process = design through unit test • Back end SW process = integration through system test • Rigorous implementation = total adherence to process (assume process designates 100% design and code inspections)

Measurement Theory • Operational Definitions • Rigorous implementation can be measured by the amount of design inspection and lines of code (LOC) inspection • Back end quality means a low number of defects found in system test • Metric Definitions • Design inspection coverage can be expressed as the percentage of designs inspected • LOC inspection coverage can be expressed as the percentage of LOC inspected • Back end quality can be expressed as defects per thousand lines of code (KLOC)
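The metric definitions on this slide reduce to simple arithmetic. The sketch below is a minimal illustration; the function names and the sample project figures are hypothetical, not taken from the source.

```python
def coverage_pct(inspected, total):
    """Percentage of items (designs or LOC) that were inspected."""
    return inspected * 100 / total


def defects_per_kloc(defects, loc):
    """Back end quality: defects found in system test per thousand lines of code."""
    return defects * 1000 / loc


# Hypothetical data for one project.
design_cov = coverage_pct(inspected=45, total=50)               # 90.0 (% of designs)
loc_cov = coverage_pct(inspected=16_000, total=20_000)          # 80.0 (% of LOC)
back_end_quality = defects_per_kloc(defects=60, loc=20_000)     # 3.0 defects/KLOC
```

Collecting these three numbers for several projects gives the data set the hypothesis is tested against.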

Measurement Theory • Hypothesis definition(s) • The higher the percentage of designs and code inspected, the lower the defect rate will be at system test. • Testing and metric gathering (multiple projects) • Track and record inspection coverage • Track and record defects found in system testing • Confirmation or refutation of hypothesis • Analyze data • Hypothesis supported?

Measurement Theory • The operationalization (definition) process produces metrics and indicators for which data can be collected, and the hypotheses can be tested empirically. • In other words - You have to gather, analyze and compare data to prove whether the hypothesis is true or not.

Level of Measurement • How measurements are classified and compared: • Nominal Scale • Ordinal Scale • Interval Scale • Ratio Scale • Scales are hierarchical; each higher-level scale possesses all of the properties of the lower ones. • Operationalization should take advantage of the highest-level scale possible (i.e., don’t use low/medium/high if you can use 1…10)

Level of Measurement • Nominal Scale • Lowest level scale • Classification of items (sort items into categories) • Two requirements • Jointly exhaustive (all items can be categorized) • Mutually exclusive (only one category applies) • Names of categories and sequence order bear no assumptions about relationships between categories • Example: • Categories of SW dev: Waterfall, Spiral, Iterative, OO • Does not imply that Waterfall is ‘better/greater’ than Spiral
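The two requirements for a nominal scale can be checked mechanically. This is a minimal sketch, assuming each item's classification is recorded as a set of category names; the project names and assignments are hypothetical.

```python
CATEGORIES = {"Waterfall", "Spiral", "Iterative", "OO"}


def check_nominal(assignments, categories):
    """Return (jointly_exhaustive, mutually_exclusive) for a classification.

    `assignments` maps each item to the set of categories it was placed in.
    """
    exhaustive = all(len(cats & categories) >= 1 for cats in assignments.values())
    exclusive = all(len(cats & categories) <= 1 for cats in assignments.values())
    return exhaustive, exclusive


# Hypothetical classifications of projects by development model.
good = {"payroll": {"Waterfall"}, "game": {"Spiral"}}
bad = {"payroll": {"Waterfall"}, "portal": {"Iterative", "OO"}, "script": set()}
```

In `bad`, "script" fits no category (not exhaustive) and "portal" fits two (not exclusive), so neither requirement holds.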

Level of Measurement • Ordinal Scale • Like nominal, except items can be compared (ranked) • But we cannot determine the magnitude of the difference • Example: • Categories of SW dev orgs based on CMM levels (1-5) • We can state that dev orgs at level 2 are more mature than orgs at level 1, and so on... • But we cannot state how much better 2 is than 1, or 3 is than 2, or 3 is than 1, and so on… • A Likert rating scale is often used with this scale: 1 = completely dissatisfied, 2 = somewhat dissatisfied, 3 = neutral, 4 = satisfied, 5 = completely satisfied
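Because an ordinal scale supports ranking but not distances, summaries should rely only on order. The sketch below, using hypothetical survey answers, counts responses at or above a threshold and reports the median; the median is chosen here because, unlike the mean, it does not assume the steps between Likert points are equal.

```python
import statistics

LIKERT = {
    1: "completely dissatisfied",
    2: "somewhat dissatisfied",
    3: "neutral",
    4: "satisfied",
    5: "completely satisfied",
}

responses = [5, 4, 4, 2, 3, 4, 1]  # hypothetical survey answers

# Ordering is meaningful, so "satisfied or better" is a valid question to ask.
satisfied_or_better = sum(1 for r in responses if r >= 4)

# The median depends only on ordering, so it is a safe summary for ordinal data.
median_response = statistics.median(responses)
```

Here `satisfied_or_better` is 4 and `LIKERT[median_response]` is "satisfied".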

Level of Measurement • Interval Scale • Like ordinal scale, except now we can determine exact differences between measurement points • Can use addition/subtraction expressions • Requires establishment of a well-defined, repeatable, unit of measurement • Example of interval scale • Temperature in Fahrenheit (vs. cool, warm, hot) • Day 1’s high temperature was 80 degrees • Day 2’s high temperature was 87 degrees • Day 2 was 7 degrees warmer than day 1 (addition) • Day 1 was 7 degrees cooler than day 2 (subtraction)
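Converting the slide's temperatures to Celsius shows what an interval scale does and does not support. In this sketch the 80 and 87 degree readings come from the slide; the 40 degree value is a hypothetical added to test a ratio.

```python
def f_to_c(f):
    """Convert Fahrenheit to Celsius (both interval scales with arbitrary zeros)."""
    return (f - 32) * 5 / 9


day1, day2 = 80, 87
diff_f = day2 - day1                    # 7 degrees F
diff_c = f_to_c(day2) - f_to_c(day1)    # the same difference, in Celsius units

# Ratios are not meaningful: 80 F is "twice" 40 F only in Fahrenheit.
ratio_f = 80 / 40
ratio_c = f_to_c(80) / f_to_c(40)
```

The difference survives the change of units (7 °F is 7 × 5/9 °C), but the ratio jumps from 2 to 6, which is why multiplication and division are reserved for ratio scales.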

Level of Measurement • Ratio scale • Interval scale with an absolute, non-arbitrary zero point • Highest level scale • Can use multiplication and division • Example • MBNQA (Malcolm Baldrige National Quality Award) scores • Company A scored 800 in the range of 0…1000 • Company B scored 400 in the range of 0…1000 • Company A’s score is double Company B’s (multiplication) • Company B’s score is half of Company A’s (division)
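The hierarchy of the four scales can be summarized as a lookup of permitted operations. This is an illustrative sketch; the `Scale` enum and the operator sets are a simplification assumed here, derived from the four slides above.

```python
from enum import IntEnum


class Scale(IntEnum):
    NOMINAL = 1
    ORDINAL = 2
    INTERVAL = 3
    RATIO = 4


# Operations each scale adds on top of those of the scales below it.
ADDED_OPERATIONS = {
    Scale.NOMINAL: {"=", "!="},   # classification
    Scale.ORDINAL: {"<", ">"},    # ranking
    Scale.INTERVAL: {"+", "-"},   # differences
    Scale.RATIO: {"*", "/"},      # multiples, thanks to a true zero
}


def allowed_operations(scale):
    """A scale permits its own operations plus those of every lower scale."""
    ops = set()
    for level in Scale:
        if level <= scale:
            ops |= ADDED_OPERATIONS[level]
    return ops
```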

Basic Measures • Measures are ways of analyzing and comparing data to extract meaningful information. • Data vs. Information • Data - raw numbers or facts • Information • relevant - related to subject • qualified - characteristics specified • reliable - dependable, high confidence level • Basic measures • Ratio • Proportion • Percentage • Rate

Basic Measures • Ratio • Result of dividing one quantity by another • Best use is with two distinct groups • Numerator and denominator are mutually exclusive • Example 1: • Developers = 10, Testers = 5 • Developer to Tester ratio = 10 / 5 x 100% = 200% • Example 2: • Developers = 5, Testers = 10 • Developer to Tester ratio = 5 / 10 x 100% = 50%
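The ratio examples above can be reproduced with a one-line helper. The function name is hypothetical; the figures are the slide's.

```python
def ratio_pct(numerator, denominator):
    """Ratio of two mutually exclusive groups, expressed as a percentage."""
    if denominator == 0:
        raise ValueError("denominator group is empty")
    return numerator * 100 / denominator


dev_to_tester_1 = ratio_pct(10, 5)   # 200.0 (Example 1)
dev_to_tester_2 = ratio_pct(5, 10)   # 50.0  (Example 2)
```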

Basic Measures • Proportion • Best use is with multiple categories within one group • For n categories (C) in the group (G): • C1/G + C2/G + … + Cn/G = 1 • Proportion of a category = size of the desired category / total group size • Example • Number of customers surveyed = 50 • Number of satisfied customers = 30 • Proportion of satisfied customers = 30 / 50 or .6 • Proportion of unsatisfied customers = 20 / 50 or .4 • Satisfied (.6) plus unsatisfied (.4) = 1
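A short sketch of the proportion measure using the survey numbers from the slide. Exact fractions are used here (a design choice, not something the slide requires) so that the proportions sum to exactly 1 without floating-point rounding.

```python
from fractions import Fraction


def proportions(counts):
    """Proportion of each category within one group."""
    total = sum(counts.values())
    return {category: Fraction(n, total) for category, n in counts.items()}


survey = {"satisfied": 30, "unsatisfied": 20}
p = proportions(survey)
```

`float(p["satisfied"])` gives 0.6, `float(p["unsatisfied"])` gives 0.4, and `sum(p.values())` is exactly 1.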

Basic Measures • Percentage • A proportion expressed in terms of per hundred units • Percentages represent relative frequencies • Total number of cases should always be included • Total number of cases should be sufficiently large • Example • 200 bugs found in 8 KLOC • 30 requirements bugs (30 / 200 x 100%) = 15% • 50 design bugs (50 / 200 x 100%) = 25% • 100 code bugs (100 / 200 x 100%) = 50% • 20 other bugs (20 / 200 x 100%) = 10%
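The percentage breakdown above can be sketched as follows. Because the slide says the total number of cases should always be included, this hypothetical helper returns the total alongside the percentages.

```python
def percentage_breakdown(counts):
    """Return each category as a percentage of the total, plus the total itself."""
    total = sum(counts.values())
    pct = {category: n * 100 / total for category, n in counts.items()}
    return pct, total


bugs = {"requirements": 30, "design": 50, "code": 100, "other": 20}
pct, total = percentage_breakdown(bugs)
```

This yields 15%, 25%, 50% and 10% out of 200 bugs, matching the slide.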

Basic Measures • Rate • Associated with dynamic changes of a quantity over time • Changes in y per unit of x • x is usually a quantity of time • The time unit of x must be expressed • Example • Opportunity For Error (OFE) = 5000 (note 1: based on 5 KLOC) • Number of defects = 200 (note 2: after one year) • Defect rate = 200 / 5000 x 1K = 40 defects per KLOC in the first year • Notes: 1. It is extremely hard to determine OFE 2. It is hard to know when to measure
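The defect rate example can be sketched as a function that refuses to drop the time period, reflecting the rule that the time unit must be expressed. Returning a (rate, period) pair is an assumption of this sketch.

```python
def defect_rate_per_kloc(defects, opportunities_for_error, period):
    """Defects per thousand opportunities for error (here, per KLOC) over `period`."""
    rate = defects * 1000 / opportunities_for_error
    return rate, period


rate = defect_rate_per_kloc(200, 5000, "first year")  # (40.0, "first year")
```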

Basic Measures • Rate • Six Sigma • A specific defect rate of 3.4 defective parts per million (ppm) which has become an industry standard for the ultimate quality goal. • Sigma is the Greek symbol for standard deviation • By definition, if the variations in the process are reduced then it’s easier to obtain Six Sigma quality • Some problems arise in SW engineering • What are the parts: lines of source code? lines of assembly code?
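The "what are the parts?" problem can be made concrete. In this sketch the defect count and both line counts are hypothetical; the same product meets or misses the 3.4 ppm target depending only on what is counted as a part.

```python
SIX_SIGMA_DPMO = 3.4  # defects per million opportunities, from the slide


def dpmo(defects, opportunities):
    """Defects per million opportunities (parts)."""
    return defects * 1_000_000 / opportunities


defects = 2  # hypothetical
per_source_line = dpmo(defects, 100_000)      # parts = source lines: 20.0
per_assembly_line = dpmo(defects, 1_000_000)  # parts = assembly lines: 2.0

meets_with_source = per_source_line <= SIX_SIGMA_DPMO
meets_with_assembly = per_assembly_line <= SIX_SIGMA_DPMO
```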

Reliability • Reliability - consistency of a number of measurements taken using the same measurement method on the same subject • High degree of reliability - repeated measurements are consistent • Low degree of reliability - repeated measurements have large variations • Operational definitions (specifics of how the measurement is taken) are key to achieving high degrees of reliability
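One simple way to quantify reliability is the spread of repeated measurements of the same subject. The sketch below uses the sample standard deviation as the spread; the two measurement series are hypothetical, standing in for a tight and a loose operational definition.

```python
import statistics


def spread(measurements):
    """Sample standard deviation of repeated measurements of the same subject.

    A smaller spread indicates a more reliable measurement method.
    """
    return statistics.stdev(measurements)


# Hypothetical: the same module's defects counted five times under each definition.
strict_definition = [42, 42, 43, 42, 42]
loose_definition = [35, 48, 41, 52, 39]
```

The strict definition yields a much smaller spread than the loose one, so it is the more reliable method.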

Validity • Validity is whether the measurement really measures what it is intended to measure • Construct Validity - validity of a metric to represent a theory • Difficult to validate abstract concepts • Example: Concept: intelligent people attend college. Measurement: sum of college enrollment. Conclusion: “The sum of the college enrollment is the number of intelligent people.” Not valid.

Validity • Criterion-related (predictive) Validity - validity of a metric to predict a theory or relationship • Example: Concept: safe driving requires knowledge of the rules and regulations. Measurement: driver’s license test. Conclusion: those who have low scores on driver’s license tests are more likely to have an accident. • Content Validity - the degree to which a metric covers the meaning of the concept • Example: a general math knowledge test needs to include more than just addition and subtraction.

Measurement Errors • Two types of measurement errors • Systematic errors - errors associated with validity • Random errors - errors associated with reliability • Example: a bathroom scale that is off by 10 pounds • Each time the scale is used, the reading equals actual weight + 10 pounds + variation, i.e., true value + systematic error + random error • The systematic error makes the reading invalid • The random error makes the reading unreliable
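The bathroom-scale example can be simulated as reading = true value + systematic error + random error. The true weight and the size of the random variation below are hypothetical; a fixed seed keeps the run repeatable.

```python
import random
import statistics

TRUE_WEIGHT = 150.0      # hypothetical actual weight, in pounds
SYSTEMATIC_ERROR = 10.0  # the scale always reads 10 pounds heavy
RANDOM_SD = 0.5          # hypothetical size of the random variation


def read_scale(rng):
    """One reading = true value + systematic error + random error."""
    return TRUE_WEIGHT + SYSTEMATIC_ERROR + rng.gauss(0, RANDOM_SD)


rng = random.Random(42)
readings = [read_scale(rng) for _ in range(10_000)]

bias = statistics.mean(readings) - TRUE_WEIGHT  # close to 10: systematic error (validity)
noise = statistics.stdev(readings)              # close to 0.5: random error (reliability)
```

Averaging many readings shrinks the random error but leaves the 10-pound bias untouched, which is why the two error types call for different remedies.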

Measurement Errors • Ways of assessing reliability • Test/Retest - one or more retests are performed and the results compared to previous tests • May expose random errors • Alternative-form - acquire the same measurements using alternate testing means • May expose systematic errors

Correlation • Correlation - a statistical method for assessing relationships among observed or empirical data sets • If the correlation coefficient between two variables is weak, there is little or no linear relationship (though a non-linear relationship may still exist) • Example - negative linear relationship between LOC inspected and defects shipped
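A sketch of the Pearson correlation coefficient, the usual measure of linear correlation, applied to hypothetical inspection data and to a non-linear case. The second case shows why a weak coefficient rules out only a *linear* relationship.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient: strength of the linear relationship."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical project data: % of LOC inspected vs. defects shipped.
inspected_pct = [50, 60, 70, 80, 90]
defects_shipped = [40, 35, 28, 22, 15]
r_inspection = pearson_r(inspected_pct, defects_shipped)  # strongly negative

# A perfect but non-linear relationship (y = x squared) still gives r = 0.
xs = [-2, -1, 0, 1, 2]
r_nonlinear = pearson_r(xs, [x * x for x in xs])
```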

Causality • Identification of cause and effect relationships in experiments • Three criteria for cause-effect: 1. Cause must precede effect 2. Two variables are empirically related (relationship can be measured) 3. Empirical relationship is direct (not coincidence, or in error)

Summary • Operational definitions are valuable in determining levels and types of metrics to use • Scales and measures have different characteristics and different intended uses • Avoid using the wrong scale or measure • Validity and Reliability represent measurement quality • Correlation and Causality are goals of measurement (i.e., the quest to identify and prove a cause-effect relationship)

Follow-up: • List at least 5 quality parameters/attributes used to measure software quality from the customer perspective

Pop Quiz • What is the difference between validity and reliability? • Why are software development process models important to the study of software quality? • Define Six Sigma. • Define MTTF. • T/F: Defect density and PUM combined represent a true measure of customer satisfaction. • T/F: If a hypothesis is refuted, then the wrong metrics were used.

Software Quality Engineering: CS410 Class 3b, Product Quality Metrics, Process Quality Metrics, Function Point Analysis

Software Quality Metrics • Three kinds of Software Quality Metrics • Product Metrics - describe the characteristics of the product • size, complexity, design features, performance, and quality level • Process Metrics - used for improving the software development/maintenance process • effectiveness of defect removal, pattern of testing defect arrival, and response time of fixes • Project Metrics - describe the project characteristics and execution • number of developers, cost, schedule, productivity, etc. • fairly straightforward

Software Quality Metrics • Product Metrics • Mean Time to Failure (MTTF) • Defect Density • Problems per User Month (PUM) • Customer Satisfaction • Process Metrics • Defect density during machine testing • Defect arrival patterns during machine testing • Phase-based defect removal • Defect removal effectiveness

Software Quality Metrics • Some terminology: • Error - a human mistake that results in incorrect (or incomplete) software • faulty requirement, design flaw, coding error • Fault (a.k.a. defect) - a condition within the system that causes a unit of the system to not function properly • GPF, Abend, crash, lock-up, deadlock, error message, etc. • Failure - a required function (i.e., the goal) cannot be performed • An error results in a fault, which may cause one or more failures.

MTTF • Mean Time To Failure (MTTF) - measures how long the software can run before it encounters a “crash” • Difficult measurement to obtain because it’s tied to the “real” use of the product • Easier to define requirements for special purpose software than general use software • MTTF is not widely used by commercial software developers for these reasons
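At its simplest, MTTF is the average operating time before each observed failure. The sketch below assumes the failure times were observed under representative "real" use, which, as the slide notes, is the hard part. The hours are hypothetical.

```python
def mttf(times_to_failure):
    """Mean time to failure: average operating time before each observed crash."""
    if not times_to_failure:
        raise ValueError("need at least one observed failure")
    return sum(times_to_failure) / len(times_to_failure)


# Hypothetical hours of operation before each of four observed crashes.
observed_hours = [120, 95, 210, 75]
mean_hours = mttf(observed_hours)  # 125.0
```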

Defect Density • Defect Density (a.k.a. Defect Rate) - the estimated number of defects relative to the size of the software • Estimated because defects are found throughout the entire life cycle of the product • Important for cost and resource estimates for the maintenance phase of the life cycle

Defect Density • More specific • Defect Density (rate) = number of defects / opportunities for errors during a specified time • Number of defects can be approximated as equal to the number of unique causes of observed failures • Opportunities for error can be expressed as KLOC • Time frame (life of product or LOP) varies

Defect Density • Defect Density Example • Product is one year old, and is 10 KLOC • Unique causes of observed failures = 50 • Current Defect Density = 50 / 10K x 1K = 5 defects per KLOC per year • After second year • Unique causes of observed failures = 75 • Current Defect Density = 75 / 10K x 1K = 7.5 defects per KLOC per 2 years, or 3.75 per KLOC per year
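The defect density example reproduced as code. The function name is hypothetical; the inputs are the slide's, and dividing by the years elapsed normalizes the rate to a per-year figure so products of different ages are not compared directly.

```python
def defect_density(defects, loc, years):
    """Defects per KLOC per year over the product's life so far."""
    return defects * 1000 / loc / years


after_year_1 = defect_density(defects=50, loc=10_000, years=1)  # 5.0
after_year_2 = defect_density(defects=75, loc=10_000, years=2)  # 3.75
```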

Defect Density • Comparison Issues • How LOC is calculated • Count only executable lines • Note - what is an executable line? HLL vs. Assembler • Count executable lines, plus data definitions • Count executable lines, plus data definitions, plus comments • Count executable lines, plus data definitions, plus comments, plus job control language • Count physical lines • Count logical lines (terminated by ‘;’) • Function Point Analysis (FPA) is an alternative measure of program size
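To see how much the counting rule matters, the sketch below applies four of the rules listed above to one hypothetical C fragment. The comment detection is a simplifying heuristic (it only recognizes whole-line `/* ... */` comments), and "non_comment" is only a rough stand-in for "executable lines".

```python
SOURCE = """\
/* Compute totals */
int total = 0;

for (int i = 0; i < n; i++)
    total += values[i];  /* accumulate */
return total;
"""


def is_comment_only(line):
    """Heuristic: a line consisting solely of one /* ... */ comment."""
    s = line.strip()
    return s.startswith("/*") and s.endswith("*/")


def count_loc(source):
    """Size of the same source under several LOC counting rules."""
    lines = source.splitlines()
    non_blank = [ln for ln in lines if ln.strip()]
    non_comment = [ln for ln in non_blank if not is_comment_only(ln)]
    return {
        "physical": len(lines),
        "non_blank": len(non_blank),
        "non_comment": len(non_comment),
        "semicolons": source.count(";"),  # "logical lines terminated by ';'"
    }
```

The fragment measures 6, 5, 4 or 5 "lines" depending on the rule; the `for` header alone contributes two semicolons. Defect densities computed with different rules are therefore not comparable.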

Defect Density • Comparison Issues (cont.) • Timeframes must be the same • Cannot compare (current) defect rate for a one year old product to the (current) defect rate of a four year old product • IBM considers life of product to be 4 years • Must account for new and modified code in LOC count (otherwise metric is skewed) • LOC counting must remain consistent • Defect rate should be calculated for each release (must use change flags)

Defect Density • Change Flags Example:

```
/* Module A - Prolog */
/* Release 1.1 modifications 12/01/97 @R11 */
/* Fix for problem report #1127 03/15/98 @F1127 */
...
Total_Records = 0; /* Init records @R11A */
...
Bad_Records = Total_Records - Good_Records; /* Calculate num bad recs @F1127C */
```

• Flags (a.k.a. Change Control) - CMM level 2+ • A - line added by release/fix • C - line changed by release/fix • M - line moved by release/fix • D - line deleted by release/fix (optional)
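Change flags make per-release counting mechanical. This is a hypothetical sketch: the flag syntax it accepts (`@`, then `R` or `F` plus digits, then one of A/C/M/D) is inferred from the example above and is an assumption, not a documented standard.

```python
import re
from collections import Counter

# Assumed flag syntax: "@" + change id (R = release, F = fix, then digits)
# + one flag letter (A = added, C = changed, M = moved, D = deleted).
FLAG = re.compile(r"@([RF]\d+)([ACMD])\b")


def count_flagged_lines(source):
    """Count flagged lines per (change id, flag letter)."""
    counts = Counter()
    for line in source.splitlines():
        for change_id, flag in FLAG.findall(line):
            counts[(change_id, flag)] += 1
    return counts


MODULE_A = """\
/* Release 1.1 modifications 12/01/97 @R11 */
/* Fix for problem report #1127 03/15/98 @F1127 */
Total_Records = 0; /* Init records @R11A */
Bad_Records = Total_Records - Good_Records; /* Calculate num bad recs @F1127C */
"""
```

Prolog entries such as `@R11` carry no flag letter, so they are not counted. Summing the A and C counts for a release is one way such tallies could feed a count of new and changed lines.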

Defect Density • IBM Example: • SSI (current release) = SSI (previous release) + CSI - Deleted - Changed • SSI - Shipped Source Instructions • CSI - Changed (and new) Source Instructions • Defect rate metrics for the current release: • TVUA/KSSI - all APARs (defects) reported on the total release (inclusive of previous release) • TVUA/KCSI - all APARs (defects) reported on the new release code • APAR - Authorized Program Analysis Report (Severity 1-4) • TVUA - Total Valid Unique APARs
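The IBM size formula and the two defect rate metrics, as a sketch with hypothetical release figures. Changed lines are subtracted, presumably because they are already present in the previous SSI and are counted again in CSI.

```python
def shipped_source_instructions(ssi_previous, csi, deleted, changed):
    """SSI(current) = SSI(previous) + CSI - deleted - changed."""
    return ssi_previous + csi - deleted - changed


def defects_per_k(defects, instructions):
    """Defects per thousand instructions."""
    return defects * 1000 / instructions


# Hypothetical release figures.
csi = 20_000
ssi = shipped_source_instructions(ssi_previous=100_000, csi=csi, deleted=1_000, changed=5_000)
tvua_per_kssi = defects_per_k(defects=228, instructions=ssi)  # APARs on the whole release
tvua_per_kcsi = defects_per_k(defects=30, instructions=csi)   # APARs on new/changed code
```

Here the release ships 114,000 instructions, giving 2.0 TVUA/KSSI and 1.5 TVUA/KCSI.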