Keystroke Dynamics


Jacob Wise and Chong Gu Keystroke Dynamics

Introduction • People have “unique” typing patterns • “Unique” in the same sense as fingerprints: assumed, but never proven • Typing patterns could be used for authentication • Stronger than a password • Harder to copy • Can use challenge-response • Inexpensive

Previous Work • Neural Networks • Less mainstream approach • Papers co-authored by M.S. Obaidat • “Traditional” Approach • Reference Signatures computed by calculating the Mean and Standard Deviations • Measures “distance” between Reference Signature and Test Signature • Use digraph/trigraph • Rick Joyce & Gopal Gupta (1990); F. Monrose & A. Rubin (1997); F. Bergadano, D. Gunetti, and C. Picardi (2002)
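To make the digraph idea concrete, here is a minimal Python sketch. It assumes key events are available as `(key, press_time, release_time)` tuples in seconds, and it measures a digraph as the press-to-press latency between two consecutive keys. The function name, the tuple layout, and the sample data are illustrative assumptions, not taken from the cited papers.

```python
from collections import defaultdict

def digraph_latencies(events):
    """Group press-to-press latencies by digraph (pair of consecutive keys).

    `events` is a hypothetical list of (key, press_time, release_time)
    tuples in typing order; times are in seconds.
    """
    latencies = defaultdict(list)
    # Pair each event with its successor and record the gap between presses.
    for (k1, p1, _), (k2, p2, _) in zip(events, events[1:]):
        latencies[k1 + k2].append(p2 - p1)
    return dict(latencies)

# Made-up events for someone typing "theth".
events = [("t", 0.00, 0.08), ("h", 0.15, 0.21), ("e", 0.27, 0.35),
          ("t", 0.50, 0.58), ("h", 0.62, 0.70)]
result = digraph_latencies(events)
```

A trigraph version would pair each event with the one two positions later in the same way.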

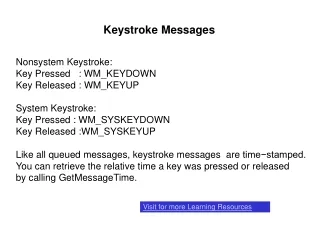

First Problem - Collecting Data • Built-in .NET DateTime class • Precise only to about 10 milliseconds • High-resolution timing methods from kernel32.dll • Report about 15 significant digits (not verified)
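The sketch below shows one way timing data of this kind could be collected. It is written in Python rather than .NET, and `time.perf_counter_ns` stands in for the high-resolution timer; the `KeyTimer` class and its method names are hypothetical. It also assumes the common definitions of the two measurements: press time is how long a key is held down, and flight time is the gap between releasing one key and pressing the next.

```python
import time

class KeyTimer:
    """Records key-down/key-up timestamps from a high-resolution monotonic clock."""

    def __init__(self, clock=time.perf_counter_ns):
        self._clock = clock   # injectable so the class can be tested with a fake clock
        self._down = {}       # key -> timestamp of a pending key-down
        self.events = []      # completed (key, down_ns, up_ns) tuples

    def key_down(self, key):
        self._down[key] = self._clock()

    def key_up(self, key):
        down = self._down.pop(key, None)
        if down is not None:  # ignore a key-up with no matching key-down
            self.events.append((key, down, self._clock()))

def press_times(events):
    """Hold duration of each key, in the clock's units."""
    return [up - down for _, down, up in events]

def flight_times(events):
    """Gap between releasing one key and pressing the next.

    Values can be negative when the next key is pressed before the
    previous one is released.
    """
    ordered = sorted(events, key=lambda e: e[1])
    return [nxt[1] - cur[2] for cur, nxt in zip(ordered, ordered[1:])]
```

In a real form, `key_down` and `key_up` would be called from the UI toolkit's key event handlers.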

First Prototype • Timing Data for all fields • User Name • Password • Full Name • Mistakes not allowed • Signature object is serialized and saved to a file

The World of Neural Networks • User Name / Password / Full Name unsuitable • Can't train a neural network on only positive examples • Would need to collect break-in attempts by other users • Hence the “Counterexample” option in the first prototype • Everyone-Types-The-Same-Thing works better • Hence the passage collection form...

Passage Analysis Form • Tool to help analyze collected keystroke data • Data is in .psig (PassageSignature) and .signature (Signature) files • We hope this tool will be used and extended in future work on this project • Tabs for BPN (Back-Propagation Network), more traditional analyses, and others that are yet to come

[neural networks] • Explain BPN basics • This started as just a first step • Ended up taking the whole time to tune
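As a rough illustration of BPN basics, the following is a textbook back-propagation network in plain Python: one hidden layer, sigmoid units, squared-error loss, and online gradient descent. It is not the authors' implementation. The class name, the network size, the learning rate, and the normalized timing vectors (label 1 for the genuine user, 0 for counterexamples) are all invented for the sketch.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

class TinyBPN:
    """One hidden layer, sigmoid units, squared-error loss, online updates."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        # One row of weights per hidden unit; the last entry is the bias.
        self.w_hidden = [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)]
                         for _ in range(n_hidden)]
        self.w_out = [rng.uniform(-0.5, 0.5) for _ in range(n_hidden + 1)]

    def forward(self, x):
        hidden = [sigmoid(sum(w * v for w, v in zip(row, x + [1.0])))
                  for row in self.w_hidden]
        out = sigmoid(sum(w * v for w, v in zip(self.w_out, hidden + [1.0])))
        return hidden, out

    def train_step(self, x, target, lr=0.5):
        hidden, out = self.forward(x)
        # Output delta: gradient of 0.5 * (out - target)^2 through the sigmoid.
        delta_out = (out - target) * out * (1.0 - out)
        # Hidden deltas: push the output delta back through the output weights.
        delta_hidden = [delta_out * self.w_out[j] * h * (1.0 - h)
                        for j, h in enumerate(hidden)]
        for j, v in enumerate(hidden + [1.0]):
            self.w_out[j] -= lr * delta_out * v
        for j, row in enumerate(self.w_hidden):
            for i, v in enumerate(x + [1.0]):
                row[i] -= lr * delta_hidden[j] * v

    def loss(self, samples):
        return sum(0.5 * (self.forward(x)[1] - t) ** 2
                   for x, t in samples) / len(samples)

# Invented, normalized timing features: 1.0 = genuine user, 0.0 = counterexample.
samples = [([0.20, 0.80], 1.0), ([0.25, 0.75], 1.0), ([0.15, 0.85], 1.0),
           ([0.80, 0.30], 0.0), ([0.75, 0.20], 0.0), ([0.85, 0.25], 0.0)]

net = TinyBPN(n_in=2, n_hidden=3)
loss_before = net.loss(samples)
for _ in range(2000):
    for x, t in samples:
        net.train_step(x, t)
loss_after = net.loss(samples)
```

The need for both labels in `samples` is the same point made earlier in the talk: without counterexamples there is nothing for the error signal to push against.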

“Traditional” Approach • Reference Signature • Computed from the mean and standard deviation of the samples each user has provided • Based on Press Time or Flight Time • Samples that lie too far above the mean (by more than a threshold number of standard deviations) are discarded, and the means are recalculated • The threshold needs to be tuned • A threshold of 3 standard deviations discards 0.85% of samples • A threshold of 2 standard deviations discards 5% of samples
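The outlier-discarding step can be sketched as follows. This is a minimal Python version under stated assumptions: each typing sample is a list of timings of equal length, discarding is applied per position rather than to whole samples, only values above the mean are discarded, and the standard deviation is recomputed along with the mean. The function name and the data are made up for illustration.

```python
from statistics import mean, stdev

def reference_signature(samples, k=3.0):
    """Return a list of (mean, std), one pair per timing position.

    `samples` holds one list of timings per typing sample. Values more
    than k standard deviations above the mean are dropped once, then the
    statistics are recomputed from what is left.
    """
    signature = []
    for column in zip(*samples):
        mu, sigma = mean(column), stdev(column)
        kept = [v for v in column if v <= mu + k * sigma]
        new_sigma = stdev(kept) if len(kept) > 1 else 0.0
        signature.append((mean(kept), new_sigma))
    return signature

# Ten made-up samples of two timings (ms); the last one has a 300 ms outlier.
samples = [[100, 50], [102, 52], [98, 48], [101, 51], [99, 49],
           [103, 53], [97, 47], [100, 50], [100, 50], [300, 50]]

strict = reference_signature(samples, k=2.0)  # outlier discarded, mean back to 100
loose = reference_signature(samples, k=3.0)   # outlier kept, mean stays at 120
```

The two calls show why the threshold needs tuning: with these numbers the 300 ms value sits about 2.8 standard deviations above the mean, so it survives a threshold of 3 but not a threshold of 2.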

“Traditional” Approach - Reference Signatures based on Flight Time

“Traditional” Approach - Reference Signatures based on Press Time

“Traditional” Approach - Reference Signatures • We noticed greater variation between users when Reference Signatures are based on Flight Times.

“Traditional” Approach - the Verifier • Two approaches have been considered, but neither is up and running • Comparing each individual Press/Flight time of the test signature with the mean reference signature. A Press/Flight time is considered valid if it is within x profile standard deviations of the reference mean for that digraph (where x needs to be tuned) • Comparing the magnitude of the difference between the mean reference signature (M) and the test signature (T) against a threshold for an acceptable magnitude. A user whose signatures vary more should be given a larger threshold. • This approach has had some good results • Again, the threshold value needs to be tuned.
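Both verifier ideas can be sketched in a few lines of Python. The following is an illustration under assumptions, not the authors' code: a reference signature is taken to be a list of `(mean, std)` pairs, the magnitude is taken to be the Euclidean norm of M - T (another norm could be substituted), and the way a per-user threshold is derived from the profile's standard deviations (`per_user_threshold` with its `scale` parameter) is a hypothetical choice. All numbers are made up.

```python
import math

def fraction_within(reference, test, x=2.0):
    """Share of test timings within x profile standard deviations of the mean."""
    valid = sum(1 for (mu, sigma), t in zip(reference, test)
                if abs(t - mu) <= x * sigma)
    return valid / len(test)

def difference_magnitude(reference, test):
    """Euclidean norm of (M - T), where M is the vector of reference means."""
    return math.sqrt(sum((t - mu) ** 2 for (mu, _), t in zip(reference, test)))

def per_user_threshold(reference, scale=1.5):
    """Hypothetical rule: a more variable profile gets a larger threshold."""
    return scale * math.sqrt(sum(sigma ** 2 for _, sigma in reference))

def verify_by_magnitude(reference, test, threshold):
    return difference_magnitude(reference, test) <= threshold

# Made-up reference signature (mean, std) in ms and two test signatures.
reference = [(100.0, 5.0), (50.0, 2.0), (80.0, 4.0)]
genuine = [103.0, 51.0, 78.0]
impostor = [130.0, 40.0, 95.0]

threshold = per_user_threshold(reference)
accepted = verify_by_magnitude(reference, genuine, threshold)
rejected = not verify_by_magnitude(reference, impostor, threshold)
```

For the first approach, a decision rule still has to be chosen on top of `fraction_within`, for example accepting only when most timings are valid; the slide does not say which rule was intended.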

Conclusion • We have... • Done lots of work but just barely scratched the surface • Focused on getting some usable analysis tools up and running • Implemented fairly standard algorithms according to previous research • There is a lot of work to be done!

Epilogue • Papers that excite us and into which we didn't have time to seriously delve: • “User Authentication through Keystroke Dynamics” Bergadano, Gunetti, Picardi (2002) • “Password hardening based on keystroke dynamics” Monrose, Reiter, Wetzel (2001) • Not just authentication