Testing Database Applications


Testing Database Applications • Donald Kossmann, http://www.dbis.ethz.ch • Joint work with: Carsten Binnig, Eric Lo • Thanks: i-TV-T AG, Porsche, Microsoft, Baden-Württemberg

Quotes • “50% of our cost is on testing (QA)” (Bill Gates at the opening of the Gates Building) • “Testing alone makes up for six months of the 18 month product release cycle” (anonymous SAP executive) • Estimated damage of USD 60 bln per year in the USA caused by software bugs (US Department of Commerce, 2004) • Mercury: 30% of testing can be automated • HP buys Mercury for $4.5 bln

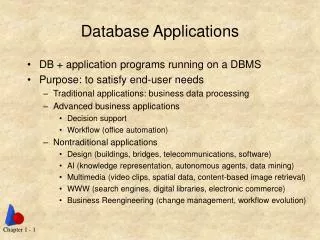

Observations • Everybody loves writing code; everybody hates testing it • more work goes into new models etc. than into testing • solution: automate the testing! • (computers are cheap and do not complain) • Test automation is a DB problem • several optimizations in different flavors • it is all about logical data independence • it is far from being solved! • Research on testing (automation) is fun!

Test Automation • Idea: testing ~ programming • Test program = actions + (desired) responses • Examples: JUnit, Capture & Replay • But ... • programming is expensive, and writing tests is no cheaper • maintenance of (test) programs is expensive • test programs often have more bugs than the systems they test • test programs are not enough: test databases are needed too • test programs must be optimized • The idea of test automation is good! Risk: going from bad to worse.

Project Goals • Higher level of abstraction of tests (programs + DBs) • avoid “over-specification” of test components • Automate testing: execution, generation, evolution, ... • Automate generation of test databases • generate relevant test databases • generate scalable test databases • Automate execution of regression tests • optimization and parallelization • (Automate evolution of Test-DBs + programs) • Tools are available for some of these goals, but they do not fit together and have limitations.

Approach • Bottom-up: various ideas and tools • model-based testing (not invented at ETH) • Reverse Query Processing (ETH) • HTPar (ETH) • Exploit “standards” • databases everywhere: a curse and a blessing • Web-based apps (simplify tooling) • Prototypes and industry collaboration • Canoo, i-TV-T, Microsoft, Porsche • Everything is implemented and “tested”

Agenda • Motivation and Overview • Database Regression Testing: Overview • RQP: Generating Test Databases • Related Work • Conclusion

Structure of a Test Program • Phase 1: Setup • initialize variables / state (= DB) • (long) sequence of SQL “insert” statements • Phase 2: Execute • execute the test step by step • check the responses of the system; compute Δs • Phase 3: Cleanup • release resources • e.g., SQL “drop table” statements • Phase 4: Report • report the Δs from execute
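A minimal sketch of the four phases, assuming an in-memory SQLite database as the application DB and a hypothetical `show_all_orders` function as the system under test. It only illustrates how much of a conventional test is setup and cleanup; it is not how any of the tools mentioned here work.

```python
import sqlite3

def show_all_orders(conn):
    """Hypothetical application function under test."""
    return conn.execute("SELECT customer, amount FROM orders ORDER BY customer").fetchall()

def run_test():
    conn = sqlite3.connect(":memory:")

    # Phase 1: Setup - initialize the state (= DB) with a sequence of INSERT statements
    conn.execute("CREATE TABLE orders (customer TEXT, amount INTEGER)")
    conn.execute("INSERT INTO orders VALUES ('Meier', 500)")
    conn.execute("INSERT INTO orders VALUES ('Schmitz', 1000)")

    # Phase 2: Execute - run the test step by step and compare with the desired responses
    expected = [("Meier", 500), ("Schmitz", 1000)]
    actual = show_all_orders(conn)
    deltas = [] if actual == expected else [("showAllOrders", expected, actual)]

    # Phase 3: Cleanup - release resources
    conn.execute("DROP TABLE orders")
    conn.close()

    # Phase 4: Report - the deltas computed during execution
    return deltas

print(run_test())  # []
```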

What is our contribution? • Get rid of setup and cleanup • only specify the ID of the Test-DB used for initialization • implicit setup / cleanup by the testing infrastructure • reduces the size of test code by up to 80% (IBM) • optimize setup and cleanup: improves testing performance by a factor of 500 (Unilever) • Execute • model-based testing (HTTrace: Web-based C&R toolkit) • only specify the behavior you want to test; ignore randomness • flexible, app-dependent Δ function • XML representation of test runs for better evolution / queries • Report • understand the HTML page (tables, keys, same errors, etc.)
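An app-dependent Δ function might look like the following sketch. The response format (a dict of fields) and the ignored fields `session_id` and `timestamp` are assumptions for illustration; which fields carry randomness is a per-application decision.

```python
from typing import Any

def delta(expected: dict[str, Any], actual: dict[str, Any],
          ignore: frozenset[str] = frozenset({"session_id", "timestamp"})) -> dict[str, tuple[Any, Any]]:
    """Return {field: (expected, actual)} for every relevant field that differs.

    Fields listed in `ignore` carry randomness (ids, clock values) and are skipped,
    so the test only specifies the behavior it actually wants to check.
    """
    fields = (expected.keys() | actual.keys()) - ignore
    return {f: (expected.get(f), actual.get(f))
            for f in sorted(fields)
            if expected.get(f) != actual.get(f)}

expected = {"title": "Login Page", "orders": 2, "session_id": "a1"}
actual = {"title": "Login Page", "orders": 3, "session_id": "f9", "timestamp": 1700000000}
print(delta(expected, actual))  # {'orders': (2, 3)}
```

Making `ignore` a parameter keeps the comparison generic while letting each application decide what counts as noise.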


How to optimize regression tests • Test 1: Insert a new order (load Test-DB 1): insertOrder(Schmitz, 1000, Staples); showAllOrders() • Test 2: Show all pending orders (load Test-DB 1): showAllOrders() • How do you execute these 2 tests efficiently? • How do you do that with 1 million tests? • IBM manually defines test buckets (bad!)

Solution Overview [Haftmann et al. 2007] • Optimistic execution of test programs • execute a test (setup = cleanup = NOP) • if it fails, reset the database and try again • if it fails again, then it really fails • (watch out for “false negatives”) • Slice algorithm • remember conflicts between test runs • create a conflict graph between test runs • order the execution of test runs according to the graph • (more smarts in the fine print of the algorithm) • Parallelization • shared nothing vs. shared DB • clever scheduling and reset strategies

Model-based Testing (Canoo WebTest script)
<project name="SimpleTest" basedir="." default="main">
  <property name="webtest.home" location="C:/java/webtest"/>
  <import file="${webtest.home}/lib/taskdef.xml"/>
  <target name="main">
    <webtest name="myTest">
      <config host="www.myserver.com" port="8080" protocol="http" basepath="myApp"/>
      <steps>
        <invoke description="getLoginPage" url="login"/>
        <verifyTitle description="blabla" text="Login Page"/>
      </steps>
    </webtest>
  </target>
</project>

Agenda • Motivation and Overview • Database Regression Testing: Overview • RQP: Generating Test Databases • Related Work • Conclusion

State of the Art: DB Generation • Commercial products and open-source tools • Input: • DB schema (tables + constraints) • scaling factor • (constants) • Output: SQL “insert” statements • What is the result of the following query on the generated DB? SELECT c.name, o.price FROM Customer c, Order o, Region r, Product p WHERE c.region = r.id AND r.name = 'Asia' ...

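A minimal sketch of such a query-agnostic generator, assuming a hypothetical two-table excerpt of the schema (Region, Customer) and SQLite as the target. It honors primary and foreign keys but never looks at the application's queries, so whether any customer lives in 'Asia' is left to chance (here: never).

```python
import random
import sqlite3

# Hypothetical mini-schema; parents are listed before children so foreign keys can be satisfied.
# kind: "pk" = key 1..scale, "str" = random string, "fk:<T>" = reference to T.id
SCHEMA = {
    "Region": [("id", "pk"), ("name", "str")],
    "Customer": [("id", "pk"), ("name", "str"), ("region", "fk:Region")],
}

def generate_inserts(schema, scale, seed=42):
    """Schema + scaling factor -> INSERT statements; the queries are never consulted."""
    rng = random.Random(seed)
    stmts = []
    for table, cols in schema.items():
        for i in range(1, scale + 1):
            values = []
            for _col, kind in cols:
                if kind == "pk":
                    values.append(str(i))
                elif kind == "str":
                    values.append(f"'{table.lower()}_{rng.randrange(10**6)}'")
                else:  # "fk:<T>": pick an existing key of T (every table has `scale` rows)
                    values.append(str(rng.randint(1, scale)))
            stmts.append(f"INSERT INTO {table} VALUES ({', '.join(values)})")
    return stmts

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE Region (id INTEGER PRIMARY KEY, name TEXT)")
conn.execute("CREATE TABLE Customer (id INTEGER PRIMARY KEY, name TEXT, "
             "region INTEGER REFERENCES Region(id))")
for stmt in generate_inserts(SCHEMA, scale=100):
    conn.execute(stmt)

# All constraints hold, but the query constant 'Asia' never appears in the data:
rows = conn.execute(
    "SELECT c.name FROM Customer c, Region r WHERE c.region = r.id AND r.name = 'Asia'"
).fetchall()
print(len(rows))  # 0
```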

The Solution • Reverse Query Processing • Input: • DB schema (tables + constraints) • scaling factor • (constants) • application program (SQL queries) • meaningful query results (tables) • Output: SQL “insert” statements • Generate “relevant” Test-DBs • a Test-DB must be specific to the application • Evolution: create a new Test-DB, or extend the existing one, when the schema evolves and the application has new queries

Finding the right query • In practice, an application has many queries • a workflow might involve a sequence of queries • independent workflows have multiple queries • Idea: generate one generic query that “encodes” the needs of a whole workflow • logically a “join/union” of all queries • Status: this query is created manually • still better than the state of the art: higher abstraction • but indeed sometimes difficult to find • Future work: semi-automatic process • symbolic computation helps (SIGMOD 07)

RQP: Problem Statement • Given: • query Q, table R • schema S (including integrity constraints) • Generate a database instance D such that R = Q(D) and D satisfies S and its constraints • Yes, the problem is undecidable (for Q with set difference, “-”) • it is even undecidable whether such a D exists • Who cares? You can always check whether Q(D) = R • fall back to a semi-automatic approach if the check fails


RQP Example • Query Q: select c.name, sum(amount) as revenue from Order o, Customer c where o.cid = c.cid and c.age > 18 group by c.name; • Schema S: database constraint Order.amount <= 70 • [Figure: input table R and generated database D with tables Order and Customer]
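Constructing D is undecidable in general, but checking a candidate D is easy. A sketch of that check for this example, assuming SQLite, a CHECK constraint for Order.amount <= 70, and an RTable R and candidate databases that are made up for illustration (`check`, `D_good`, and `D_bad` are hypothetical names):

```python
import sqlite3

# Q from the slide; "Order" is quoted because ORDER is an SQL keyword.
Q = """SELECT c.name, SUM(o.amount) AS revenue
       FROM "Order" o, Customer c
       WHERE o.cid = c.cid AND c.age > 18
       GROUP BY c.name"""

# S: the schema, including the constraint Order.amount <= 70.
S = [
    "CREATE TABLE Customer (cid INTEGER PRIMARY KEY, name TEXT, age INTEGER)",
    'CREATE TABLE "Order" (cid INTEGER REFERENCES Customer(cid), '
    "amount INTEGER CHECK (amount <= 70))",
]

def check(D, R):
    """Return True iff D satisfies S and Q(D) = R (compared as multisets)."""
    conn = sqlite3.connect(":memory:")
    try:
        for ddl in S:
            conn.execute(ddl)
        conn.executemany("INSERT INTO Customer VALUES (?, ?, ?)", D["Customer"])
        conn.executemany('INSERT INTO "Order" VALUES (?, ?)', D["Order"])
        return sorted(conn.execute(Q).fetchall()) == sorted(R)
    except sqlite3.IntegrityError:
        return False  # D violates a constraint of S
    finally:
        conn.close()

R = [("Arthur", 100), ("Betty", 50)]
customers = [(1, "Arthur", 25), (2, "Betty", 40)]
D_good = {"Customer": customers, "Order": [(1, 60), (1, 40), (2, 50)]}  # 100 split into two orders
D_bad = {"Customer": customers, "Order": [(1, 100), (2, 50)]}           # violates amount <= 70
print(check(D_good, R), check(D_bad, R))  # True False
```

The constraint is what makes the example interesting: a revenue of 100 cannot come from a single order, so any valid D needs at least two orders for Arthur.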

Trichotomy (thanks to MJF) • Three corners: Queries, Database, Answers • Query Processing: Queries + Database → Answers • Reverse Query Processing: Queries + Answers → Database • Programming By Example: Database + Answers → Queries

Architecture of the Reverse Query Processor • Compile time: query Q → query parser and translator → reverse query tree $T_Q$ → bottom-up query annotation (using database schema S) → annotated tree $T^{+}_Q$ → query optimizer → optimized tree $T'_Q$ • Run time: RTable R and parameter values → top-down data instantiation on $T'_Q$ (passes formula L to the model checker, receives instantiation I) → database D

Example • SQL Query Q: SELECT SUM(price) FROM Lineitem, Orders WHERE l_oid = oid GROUP BY orderdate HAVING AVG(price) <= 100; • Schema S: tables Lineitem and Orders [figure]


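Parser: RRA Tree • Traditional SQL parsing; 1:1 relationship from RA to RRA • Operators, from the root down: $\pi^{-1}_{\mathrm{SUM(price)}}$ → $\sigma^{-1}_{\mathrm{AVG(price)} \le 100}$ → ${}_{\mathrm{orderdate}}\chi^{-1}_{\mathrm{SUM(price)},\,\mathrm{AVG(price)}}$ → $\bowtie^{-1}_{\mathrm{l\_oid}=\mathrm{oid}}$ → base tables Lineitem, Orders • Data flow: top-down, from the RTable at the root to the base tables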

Reverse Projection: $\pi^{-1}_{\mathrm{SUM(price)}}$ (root of the RRA tree, processed first)

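Reverse Projection • 1st attempt (count = 1): $\mathit{orderdate} \neq 19900102 \land \mathit{sum\_price} = 120 \land \mathit{avg\_price} \le 100 \land \mathit{sum\_price} = \mathit{price}_1 \land \mathit{avg\_price} = \mathit{sum\_price}/1$ • not satisfiable (it forces $\mathit{avg\_price} = 120 > 100$) • 2nd attempt (count = 2): $\mathit{orderdate} \neq 19900102 \land \mathit{sum\_price} = 120 \land \mathit{avg\_price} \le 100 \land \mathit{sum\_price} = \mathit{price}_1 + \mathit{price}_2 \land \mathit{avg\_price} = \mathit{sum\_price}/2$ • satisfiable! • Instantiation: $\mathit{sum\_price} = 120$, $\mathit{avg\_price} = 60$, $\mathit{price}_1 = 80$, $\mathit{price}_2 = 40$, $\mathit{orderdate} = 20060731$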
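For this particular formula, the trial-and-error loop can be sketched without a model checker. The sketch below is a hand-written satisfiability test that stands in for the model-checker call, assuming integer prices; `instantiate_group` is a hypothetical name. For a fixed count, the formula is satisfiable exactly when sum_price / count <= max_avg, and any split of the sum is a valid instantiation.

```python
from fractions import Fraction

def instantiate_group(sum_price, max_avg, max_count=100):
    """Try count = 1, 2, ... as on the slide; return (count, prices) or None.

    Stand-in for the model checker: sum_price = price_1 + ... + price_count and
    sum_price / count <= max_avg is satisfiable iff sum_price / count <= max_avg.
    Assumes integer prices.
    """
    for count in range(1, max_count + 1):
        if Fraction(sum_price, count) <= max_avg:   # satisfiable for this count?
            base, rest = divmod(sum_price, count)   # any split with the right sum works
            prices = [base + 1] * rest + [base] * (count - rest)
            return count, prices
    return None  # not satisfiable within max_count trials

print(instantiate_group(120, 100))  # (2, [60, 60])
```

A model checker may return any satisfying assignment (80 and 40 on the slide); the sketch simply picks an even split.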

Reverse Selection: $\sigma^{-1}_{\mathrm{AVG(price)} \le 100}$

Reverse Aggregation: ${}_{\mathrm{orderdate}}\chi^{-1}_{\mathrm{SUM(price)},\,\mathrm{AVG(price)}}$

Reverse Equi-Join: $\bowtie^{-1}_{\mathrm{l\_oid}=\mathrm{oid}}$
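A simplified sketch of the reverse equi-join for this query, assuming it receives the (orderdate, price) rows produced by the reverse aggregation and that one Orders tuple per distinct orderdate is acceptable. `reverse_equi_join` is a hypothetical helper, and the key-generation policy is a design choice for illustration, not the RQP implementation.

```python
def reverse_equi_join(rows, orders_cols, lineitem_cols):
    """Reverse of Lineitem JOIN Orders ON l_oid = oid (simplified sketch).

    One Orders tuple is created per distinct combination of Orders-side values
    and gets a fresh key oid; each Lineitem tuple keeps its own values and
    references that key through l_oid.
    """
    orders, lineitems, oid_of = [], [], {}
    for row in rows:
        o_vals = tuple(row[c] for c in orders_cols)
        if o_vals not in oid_of:
            oid_of[o_vals] = len(oid_of) + 1                 # fresh oid
            orders.append((oid_of[o_vals], *o_vals))         # (oid, orderdate)
        lineitems.append((*(row[c] for c in lineitem_cols), oid_of[o_vals]))  # (price, l_oid)
    return orders, lineitems

# One group as produced by the reverse aggregation (instantiation from the slide)
rows = [{"orderdate": 20060731, "price": 80}, {"orderdate": 20060731, "price": 40}]
orders, lineitems = reverse_equi_join(rows, ["orderdate"], ["price"])
print(orders)     # [(1, 20060731)]
print(lineitems)  # [(80, 1), (40, 1)]

# Forward check: joining on l_oid = oid gives back the input rows
rejoined = [(o[1], l[0]) for l in lineitems for o in orders if l[1] == o[0]]
print(rejoined == [(r["orderdate"], r["price"]) for r in rows])  # True
```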


Reverse Query Optimization • Some observations • projections and group-bys are expensive • (equi-) joins and selections are cheap • nested queries are expensive • calls to the model checker are expensive • their cost depends on the number and types of free variables • Conclusions • apply “smarts” to avoid model checker calls • apply smarts to simplify model checker calls • do aggressive query rewriting • but do not worry about join ordering or predicate push-down

Correctness Criterion: R = Q(D) • Rewriting plan $P_1$ into plan $P_2$ is allowed iff $Q(P_1(R)) = Q(P_2(R)) = R$ • That is, $P_1$ and $P_2$ may produce different databases! That is okay. • Goal (here): generate large databases fast (alternative goal: “good” DBs)

Query Unnesting • select name from lineitem where l_oid not in (select max(cid) from orders group by odate) • becomes: select name from lineitem • (An empty “orders” table is generated!)

Query Unnesting • select name, price from lineitem where price = (select min(price) from lineitem) • becomes: select name, price from lineitem • (Precise definition of the rules in the Tech. Rep.) • (Of course, all traditional rules are applicable.)
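Both unnesting rewrites can be checked against the correctness criterion with SQLite. In this sketch, one hypothetical database built from the unnested queries (lineitem filled from an invented RTable R, orders left empty) satisfies both original nested queries; all table contents are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE lineitem (name TEXT, price INTEGER, l_oid INTEGER)")
conn.execute("CREATE TABLE orders (cid INTEGER, odate TEXT)")

# Hypothetical RTable for the second query: every result row carries the minimum price.
R = [("bolt", 5), ("nut", 5)]
# D produced from the unnested queries: lineitem = R (plus arbitrary l_oid values), orders empty.
conn.executemany("INSERT INTO lineitem VALUES (?, ?, ?)",
                 [(name, price, i) for i, (name, price) in enumerate(R, start=1)])

q1 = ("SELECT name FROM lineitem WHERE l_oid NOT IN "
      "(SELECT MAX(cid) FROM orders GROUP BY odate)")
q2 = "SELECT name, price FROM lineitem WHERE price = (SELECT MIN(price) FROM lineitem)"

# The original nested queries on D return exactly what the unnested queries promised.
print(sorted(conn.execute(q1).fetchall()))  # [('bolt',), ('nut',)]
print(sorted(conn.execute(q2).fetchall()))  # [('bolt', 5), ('nut', 5)]
```

The GROUP BY in the first subquery matters: on an empty orders table, SELECT MAX(cid) without GROUP BY returns a single NULL row, and l_oid NOT IN (NULL) is never true, so that variant could not be unnested this way.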

Some Tricks (see the Tech. Rep. for the complete list) • (Constrictive) independent attributes • can take random values (avoid the model checker) • or can take fixed values (in “distinct” queries) • Infer cardinalities from AVG and SUM • avoids the trial-and-error algorithm • Bound cardinalities from MAX, MIN, SUM • limits the trial-and-error algorithm • Simplify constraint formulae • use SUM(a) / n for aggregations • Memoization: cache model checker calls
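The two cardinality tricks reduce to simple arithmetic. This sketch is one reading of them (the Tech. Rep. has the actual rules), assuming positive MAX and MIN values; the function names are hypothetical.

```python
import math
from fractions import Fraction

def count_from_sum_avg(total, avg):
    """SUM and AVG both known: the group size is fixed, count = SUM / AVG."""
    count = Fraction(total) / Fraction(avg)
    if count.denominator != 1 or count < 1:
        raise ValueError("no integer group size is consistent with SUM and AVG")
    return int(count)

def count_bounds(total, max_value=None, min_value=None):
    """Bound the group size from SUM plus MAX and/or MIN (assumes MAX > 0, MIN > 0).

    Every value is <= MAX, so SUM <= count * MAX, i.e. count >= ceil(SUM / MAX).
    Every value is >= MIN, so SUM >= count * MIN, i.e. count <= floor(SUM / MIN).
    """
    low = 1
    if max_value is not None:
        low = max(1, math.ceil(Fraction(total) / Fraction(max_value)))
    high = None  # stays None (unbounded) when MIN is unknown
    if min_value is not None:
        high = math.floor(Fraction(total) / Fraction(min_value))
    return low, high

print(count_from_sum_avg(120, 60))                    # 2
print(count_bounds(100, max_value=70))                # (2, None)
print(count_bounds(120, max_value=70, min_value=30))  # (2, 4)
```

A per-value constraint such as Order.amount <= 70 acts like a known MAX: a revenue of 100 needs at least two orders, so the trial-and-error loop can start at count = 2.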

Performance Experiments • Use dbgen to generate TPC-H DBs • three scaling factors: 100 MB, 1 GB, 10 GB • Run the 22 TPC-H queries on these DBs (PostgreSQL) • yields 22 x 3 RTables • Run RQP on the 22 x 3 RTables • yields 22 x 3 different DBs • Compare the original DB with the generated DBs • Measure the running time of RQP

Other RQP Applications • Updating (non-updateable) views • find all possible update scenarios • define a policy that selects an update scenario • Privacy / security • what can be inferred from the published data? • SQL debugger • determine the operator that screws up the result • Program verification (weakest precondition) • Database compression / sampling • real DB -> queries -> results -> reverse queries -> small DB

Symbolic SQL Computation • Goal: control of intermediate results • selectivity of query operators, cardinality, distributions, ... • test the database system (not the app), e.g., the optimizer • Idea: process tuples with variables as values • put constraints on variables, e.g., $z > 1000 • (reverse/forward) process the variables with the query • instantiate the variables at the end -> test database
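A toy sketch of the idea, assuming integer attributes and only bound constraints (> and <=), so that instantiation is trivial; a real implementation would hand richer constraints to a model checker. `Var` and `symbolic_select_gt` are hypothetical names. The example forces the selectivity of price > 1000 to be exactly 30%.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Var:
    """A symbolic value such as $z, constrained by inclusive integer bounds."""
    name: str
    low: Optional[int] = None
    high: Optional[int] = None

    def add_gt(self, c: int) -> None:  # constraint: var > c
        self.low = c + 1 if self.low is None else max(self.low, c + 1)

    def add_le(self, c: int) -> None:  # constraint: var <= c
        self.high = c if self.high is None else min(self.high, c)

    def instantiate(self) -> int:
        if self.low is not None and self.high is not None and self.low > self.high:
            raise ValueError(f"constraints on {self.name} are unsatisfiable")
        if self.low is not None:
            return self.low
        return self.high if self.high is not None else 0

def symbolic_select_gt(tuples, col, c, selectivity):
    """Process col > c on symbolic tuples: the first k tuples are constrained to
    pass the predicate and the rest to fail it, so exactly k tuples qualify."""
    k = round(selectivity * len(tuples))
    for i, t in enumerate(tuples):
        if i < k:
            t[col].add_gt(c)
        else:
            t[col].add_le(c)
    return k

table = [{"price": Var(f"$z{i}")} for i in range(10)]
k = symbolic_select_gt(table, "price", 1000, selectivity=0.3)
test_db = [{col: v.instantiate() for col, v in t.items()} for t in table]
print(k, sum(row["price"] > 1000 for row in test_db))  # 3 3
```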

Agenda • Motivation and Overview • Database Regression Testing: Overview • RQP: Generating Test Databases • Related Work • Conclusion

Related Work • Testing is owned by the software engineering community • JUnit: mother of regression testing for Java • focus on processes and methodology • database often simulated using mock objects :-) • notable exception: AGENDA project (Chays et al.) • no mention of “optimization” or “data independence” • Generating test databases • Gray et al. (SIGMOD 94), ... • Bruno, Chaudhuri, Thomas (TKDE 06) • Generating SQL test queries • Slutz (VLDB 98), Poess, Stephens (VLDB 04)

Conclusion • Automated testing has many hidden costs • definition of test modules / buckets • definition of the order of test execution (manual parallelization) • generating Test-DBs (adjusting Test-DBs) • evolution of tests and Test-DBs with new releases • writing code for setup and cleanup • definition of the delta function • ... • Vendors solve one problem at the cost of another • We don't have a good solution, but ... • we have some fun ideas • and we are honest