MPI (continued)

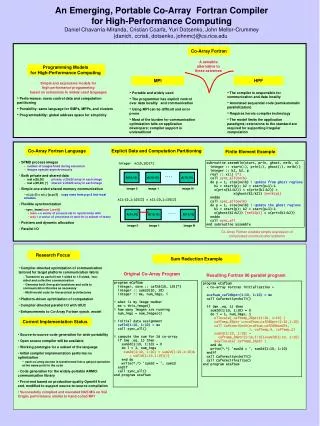



Presentation Transcript

MPI (continued)
• An example for designing explicit message passing programs
• Advanced MPI concepts

A design example (SOR)
• What is the task of a programmer of message passing programs?
• How to write a shared memory parallel program?
  • Decide how to decompose the computation into parallel parts.
  • Create (and destroy) processes to support that decomposition.
  • Add synchronization to make sure dependences are covered.
• Does it work for MPI programs?

SOR shared memory program
[Figure: the shared arrays grid and temp are each split into blocks 1, 2, 3, 4, ...; proc1, proc2, proc3, ..., procN each work on one block.]

MPI program complication: memory is distributed
• Can we still use the same code as for the sequential program?
[Figure: proc2 holds only block 2 of grid and temp; proc3 holds only block 3.]

Exact same code does not work: need additional boundary elements
[Figure: the local grid blocks on proc2 and proc3, each extended with additional boundary elements.]

Boundary elements result in communications
[Figure: proc2 and proc3 exchange the boundary elements of their grid blocks.]

Assume now we have boundaries
• Can we use the same code?
    for (i = from; i < to; i++)
        for (j = 1; j < n - 1; j++)   /* skip the fixed boundary columns */
            temp[i][j] = 0.25 * (grid[i-1][j] + grid[i+1][j]
                               + grid[i][j-1] + grid[i][j+1]);
• Only if we declare a giant array (for the whole mesh on each process).
• If not, we will need to translate the indices.

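Index translation
    /* Assuming local rows 1..n/p are owned and rows 0 and n/p+1 hold boundary copies. */
    for (i = 1; i <= n/p; i++)
        for (j = 1; j < n - 1; j++)
            temp[i][j] = 0.25 * (grid[i-1][j] + grid[i+1][j]
                               + grid[i][j-1] + grid[i][j+1]);
• All variables are local to each process, so the programmer must maintain the mapping between local and logical (global) indices.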
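The logical mapping can be sketched as a few small helpers. This is only an illustration: the function names are made up here, and it assumes a block-row decomposition in which n is divisible by p and each process keeps its owned rows at local indices 1..n/p, with boundary copies at local rows 0 and n/p+1.

```c
#include <assert.h>

/* Hypothetical helpers (not from the slides) for a block-row decomposition.
 * Assumption: n rows are split evenly over p processes, and each process
 * stores its owned rows at local indices 1..n/p. */

/* Rank of the process that owns global row g. */
int owner_of_row(int g, int n, int p)
{
    assert(n % p == 0 && g >= 0 && g < n);
    return g / (n / p);
}

/* Local index of global row g on the process that owns it.
 * The +1 skips the boundary copy stored at local row 0. */
int global_to_local(int g, int n, int p)
{
    assert(n % p == 0 && g >= 0 && g < n);
    return g % (n / p) + 1;
}

/* Global index of owned local row l (1..n/p) on process rank. */
int local_to_global(int l, int rank, int n, int p)
{
    assert(n % p == 0 && l >= 1 && l <= n / p);
    return rank * (n / p) + (l - 1);
}
```

For example, with n = 8 rows on p = 4 processes, global row 5 lives on rank 2 at local row 2, and mapping that local row back gives 5 again.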

Task for a message passing programmer
• Divide the program into parallel parts.
• Create and destroy processes to do the above.
• Partition and distribute the data.
• Communicate data at the right time.
• Perform index translation.
• Is synchronization still needed?
  • Sometimes, but it often goes hand in hand with data communication.

More on MPI
• Non-blocking point-to-point routines
• Deadlock
• Collective communication

Non-blocking send/recv
• Most hardware has a communication co-processor, so communication can proceed at the same time as computation.

Split-phase operations (start, compute, wait): communication and computation overlap
    Proc 0            Proc 1
    …                 …
    MPI_Send_start    MPI_Recv_start
    Comp …            Comp …
    MPI_Send_wait     MPI_Recv_wait

Blocking operations: no comm/comp overlap
    Proc 0            Proc 1
    …                 …
    MPI_Send          MPI_Recv
    Comp …            Comp …

Non-blocking send/recv routines
• Non-blocking primitives provide the basic mechanisms for overlapping communication with computation.
• Non-blocking operations return immediately and hand back “request handles” that can be tested and waited on.
    MPI_Isend(start, count, datatype, dest, tag, comm, &request)
    MPI_Irecv(start, count, datatype, source, tag, comm, &request)
    MPI_Wait(&request, &status)

• One can also test without waiting:
    MPI_Test(&request, &flag, &status)
• MPI allows multiple outstanding non-blocking operations:
    MPI_Waitall(count, array_of_requests, array_of_statuses)
    MPI_Waitany(count, array_of_requests, &index, &status)

Sources of Deadlocks
• Send a large message from process 0 to process 1.
• If there is insufficient storage at the destination, the send must wait for memory space.
• What happens with this code?
    Process 0    Process 1
    Send(1)      Send(0)
    Recv(1)      Recv(0)
• If neither message can be buffered, both processes block in Send and neither reaches its Recv.
• This is called “unsafe” because it depends on the availability of system buffers.

Some Solutions to the “unsafe” Problem
• Order the operations more carefully:
    Process 0    Process 1
    Send(1)      Recv(0)
    Recv(1)      Send(0)
• Supply receive buffer at same time as send:
    Process 0    Process 1
    Sendrecv(1)  Sendrecv(0)

More Solutions to the “unsafe” Problem
• Supply own space as buffer for send (buffered-mode send):
    Process 0    Process 1
    Bsend(1)     Bsend(0)
    Recv(1)      Recv(0)
• Use non-blocking operations:
    Process 0    Process 1
    Isend(1)     Isend(0)
    Irecv(1)     Irecv(0)
    Waitall      Waitall

MPI Collective Communication
• Send/recv routines are also called point-to-point routines (two parties). Some operations require more than two parties, e.g., broadcast and reduce. Such operations are called collective operations, or collective communication operations.
• Non-blocking collective operations are available only since MPI-3.
• Three classes of collective operations:
  • Synchronization
  • Data movement
  • Collective computation

Synchronization
• MPI_Barrier(comm)
  • Blocks until all processes in the group of the communicator comm call it.

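Collective Data Movement
• Broadcast: P0 holds A; afterwards P0, P1, P2, and P3 each hold A.
• Scatter: P0 holds A, B, C, D; afterwards P0 holds A, P1 holds B, P2 holds C, and P3 holds D.
• Gather: the reverse of scatter; P0 collects A, B, C, D from P0, P1, P2, and P3.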

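Collective Computation
• Reduce: P0, P1, P2, and P3 hold A, B, C, and D; afterwards P0 holds the combined result ABCD.
• Scan: P0, P1, P2, and P3 hold A, B, C, and D; afterwards they hold A, AB, ABC, and ABCD, respectively.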

MPI Collective Routines
• Many routines: Allgather, Allgatherv, Allreduce, Alltoall, Alltoallv, Bcast, Gather, Gatherv, Reduce, Reduce_scatter, Scan, Scatter, Scatterv
• “All” versions deliver results to all participating processes.
• “V” versions allow the chunks to have different sizes.
• Allreduce, Reduce, Reduce_scatter, and Scan take both built-in and user-defined combiner functions.

MPI discussion
• Ease of use
  • Programmer takes care of the ‘logical’ distribution of the global data structure.
  • Programmer takes care of synchronizations and explicit communications.
  • None of these are easy.
• MPI is hard to use!!

MPI discussion
• Expressiveness
  • Data parallelism
  • Task parallelism
  • There is always a way to do it if one does not care about how hard it is to write the program.

MPI discussion
• Exposing architecture features
  • MPI forces the programmer to consider locality, which often leads to more efficient programs.
  • The MPI standard does have some features that expose the architecture (e.g., topology).
• Performance is a strength of MPI programming.
• It would be nice to have the best of both worlds, OpenMP and MPI.