File System Implementation

File System Implementation Chapter 12

File System Organization • Application programs • Logical file system • manages directory information • manages file control blocks (FCBs) • File-organization module • knows about logical blocks and physical blocks • Basic file system • issues generic commands to the appropriate device driver • I/O control • device drivers • interrupt handlers • Devices

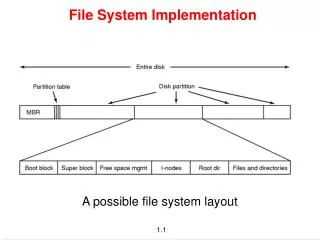

On disk structures • Boot control block • information needed by the system to boot the OS • in the partition boot sector • Partition control block • partition details • # of blocks in partition • size of blocks • free-block count • free-block pointers • free FCB count • FCB pointers • Unix – superblock • NTFS – Master File Table • Directory Structure • FCB • Unix – inode • NTFS – stored in Master file table • Relational structure

In-memory structures • In-memory partition table containing info about each mounted partition • In-memory directory structure • directory “cache” • system-wide open-file table • FCB of each open file • per-process open file table • pointer to the system-wide open-file table

Creating a new file • Application program calls the logical file system • scan the directory structure • read the appropriate directory into memory • allocate a new FCB • update the directory with the new file name and FCB • write the directory back to disk

Typical FCB • File permissions • File dates (create, access, write) • File owner, group, ACL • File size • File data blocks

Opening a File • User program issues an open call • Directory structure is searched for the file name • If the file is not already open, its FCB is copied into the system-wide open-file table • the entry includes a count of the number of processes that have the file open • An entry is made in the per-process open-file table • All file operations are made via this entry • File name is not necessarily kept in memory (why?)

Closing a file • Per-process table entry is removed • System-wide entry's open count is decremented • When all processes that have opened the file close it, the updated file information is written back to disk and the system-wide entry is removed
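The open and close steps above can be sketched as a toy model. This is only an illustration, not how any particular kernel does it: the class name `OpenFileTables`, the dict-based FCBs, and keying the system-wide table by file name are all assumptions made for brevity (a real system would key by an on-disk FCB or inode identifier).

```python
class OpenFileTables:
    """Toy model of the system-wide and per-process open-file tables."""

    def __init__(self, disk_fcbs):
        self.disk_fcbs = disk_fcbs   # name -> FCB dict, standing in for the disk
        self.system_wide = {}        # name -> {"name", "fcb", "open_count"}
        self.per_process = {}        # pid -> {fd: system-wide entry}

    def open(self, pid, name):
        entry = self.system_wide.get(name)
        if entry is None:
            # First opener: copy the FCB from "disk" into the system-wide table.
            entry = {"name": name, "fcb": dict(self.disk_fcbs[name]), "open_count": 0}
            self.system_wide[name] = entry
        entry["open_count"] += 1
        table = self.per_process.setdefault(pid, {})
        fd = 0
        while fd in table:           # lowest unused descriptor number
            fd += 1
        table[fd] = entry            # per-process entry points at the shared entry
        return fd

    def close(self, pid, fd):
        entry = self.per_process[pid].pop(fd)
        entry["open_count"] -= 1
        if entry["open_count"] == 0:
            # Last closer: write the FCB back and drop the system-wide entry.
            self.disk_fcbs[entry["name"]] = entry["fcb"]
            del self.system_wide[entry["name"]]
```

After `open`, a process works only through its descriptor, which is one way to see why the file name itself need not stay in memory.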

Directory Implementation • Linear List • list of file names with pointers to the data blocks • create file • search directory for duplicate names • add new entry at end of the directory • delete file • search for the named file • release the space allocated to it • To reuse a directory entry, either • mark the entry as unused • attach it to a list of free directory entries • or copy the last directory entry into the freed location (directory gets shorter)
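A minimal sketch of the linear-list directory, using the "copy the last entry into the freed location" option for deletion. The class name `LinearDirectory` and the `(name, first_block)` entry layout are assumptions for illustration only.

```python
class LinearDirectory:
    """Directory kept as an unsorted list of (name, first_block) entries."""

    def __init__(self):
        self.entries = []

    def _find(self, name):
        # Linear search: cost grows with the number of entries.
        for i, (entry_name, _) in enumerate(self.entries):
            if entry_name == name:
                return i
        return -1

    def create(self, name, first_block):
        if self._find(name) != -1:          # reject duplicate names
            raise FileExistsError(name)
        self.entries.append((name, first_block))

    def delete(self, name):
        i = self._find(name)
        if i == -1:
            raise FileNotFoundError(name)
        first_block = self.entries[i][1]
        # Copy the last entry into the freed slot, then shrink the list.
        self.entries[i] = self.entries[-1]
        self.entries.pop()
        return first_block                  # caller releases the file's blocks
```

Both `create` and `delete` pay for a full scan, which is the linear-search cost that sorted lists, B-trees, and hash tables try to avoid.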

Directory Implementation • Linear List • Disadvantages: • linear search • Sorted list • faster search • more complex insert and delete • B-tree • fast for both

Directory Implementation • Hash table • Advantage: Speed • Disadvantage: • ??

Allocation Methods • Contiguous Allocation • Each file occupies a set of contiguous blocks on the disk • Minimal seek time, since a file's blocks are adjacent • Block location can be computed from the relative block number • Directory entry need only contain the first block number and the length of the file • Easy access for read and write

Allocation Methods • Contiguous Allocation • Dynamic storage allocation problem • first-fit or best-fit algorithms • Suffers from external fragmentation • How much space is needed for a file? • Mitigated by using extents • an extent is another contiguous chunk of disk space linked to the end of the file • Fixed or variable size?
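As a rough illustration of contiguous allocation, the sketch below runs first fit over a list of free holes and computes a physical block from a relative block number. The function names and the `(start, length)` hole representation are assumptions, not part of the slides.

```python
def first_fit(holes, n_blocks):
    """Allocate n_blocks from the first hole that is large enough.

    holes is a list of (start, length) free extents sorted by start;
    it is updated in place. Returns the start block, or None on failure.
    """
    for i, (start, length) in enumerate(holes):
        if length >= n_blocks:
            if length == n_blocks:
                holes.pop(i)                               # hole used up exactly
            else:
                holes[i] = (start + n_blocks, length - n_blocks)
            return start
    return None


def physical_block(start, length, logical):
    """Contiguous allocation: physical block = first block + relative block."""
    if not 0 <= logical < length:
        raise IndexError("logical block outside the file")
    return start + logical


holes = [(0, 2), (5, 4), (12, 10)]
start = first_fit(holes, 3)          # taken from the hole at block 5
# 13 blocks remain free in total, but no single hole holds 11 of them:
blocked = first_fit(holes, 11)       # None: external fragmentation
```

The failed second request is the external-fragmentation problem in miniature: enough free space exists, but not in one piece.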

Allocation Methods • Linked Allocation • Each file is a linked list of disk blocks • Directory entry is pointer to first link • Advantages • No external fragmentation • Size of file need not be declared when file is created • File can grow as long as free blocks are available • Disadvantages • Effective only for sequential access • space required for pointers • can be mitigated by using clusters • reliability • what happens if a link is lost?

Allocation Methods • FAT • file allocation table • keeps the table of block links in one place on disk • one entry for each disk block, indexed by block number • the entry for a block contains the block number of the next block in the file • the FAT needs to be cached in memory to avoid a large number of disk seeks
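A small sketch of following a FAT chain. The table is modeled as a dict from block number to next block; the block numbers 217, 618, and 339 and the `EOF` sentinel value are sample choices for illustration.

```python
EOF = -1  # assumed end-of-file marker for this sketch


def file_blocks(fat, start):
    """Return every block of a file by walking the FAT chain from start."""
    blocks = []
    block = start
    while block != EOF:
        blocks.append(block)
        block = fat[block]       # FAT entry holds the next block number
    return blocks


def nth_block(fat, start, i):
    """Return the i-th block (0-based) of a file; needs i table lookups."""
    block = start
    for _ in range(i):
        block = fat[block]
        if block == EOF:
            raise IndexError("file has fewer blocks than requested")
    return block


fat = {217: 618, 618: 339, 339: EOF}
chain = file_blocks(fat, 217)
```

Random access still walks the chain, but with the FAT cached in memory the walk costs table lookups rather than one disk read per link.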

Allocation Methods • Linked allocation solves • External fragmentation • Size-declaration problems • But has problems with • Efficient direct access (when there is no FAT)

Indexed Allocation (figure) • Directory entry: file jeep, index block 19 • Block 19 (index block) holds the pointers 9, 16, 1, 10, 25, -1, -1, -1 • -1 marks an unused entry

Allocation Methods • Indexed allocation • All pointers are in an index block • Each file has its own index block • Directory contains the address of the index block • To read the ith block, use the pointer in the ith index-block entry to find the block

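File Allocation Table (figure) • Directory entry: name test, start block 217 • FAT entry 217 holds 618 • FAT entry 618 holds 339 • FAT entry 339 holds the end-of-file marker • The FAT has one entry per disk block, numbered from 0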

Indexed Allocation • Supports direct access without suffering from external fragmentation • Suffers from wasted space • pointer overhead is greater than with linked allocation • all nil index entries are wasted • What size should the index block be? • a single block may be either too big or too small
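A sketch of direct access through an index block, using the jeep example (index block 19 holding 9, 16, 1, 10, 25 and three unused entries). Treating -1 as the nil pointer and the function names are assumptions of this sketch.

```python
NIL = -1  # assumed marker for an unused index entry


def lookup(index_block, i):
    """Return the disk block holding the i-th block (0-based) of the file."""
    if not 0 <= i < len(index_block) or index_block[i] == NIL:
        raise IndexError("no such block in this file")
    return index_block[i]


def wasted_entries(index_block):
    """Count nil entries: index space that is allocated but unused."""
    return index_block.count(NIL)


jeep_index = [9, 16, 1, 10, 25, NIL, NIL, NIL]   # contents of index block 19
third = lookup(jeep_index, 2)                    # one table lookup, no chain walk
unused = wasted_entries(jeep_index)
```

Any block is reachable with a single index lookup, at the price of an index block whose unused entries are pure overhead for small files.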

Index Block Variants • Linked Scheme • An index block is normally one disk block • For large files, the last word in the index block is a link to an additional index block • Multilevel index • First-level index block contains pointers to second-level index blocks • with 4096-byte blocks and 4-byte pointers, two levels would support files of up to 4 GB • Combined approach • First 15 or so pointers point directly to data blocks • next pointer is to a single indirect index block • following pointer is to a double indirect block • last pointer is to a triple indirect block
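The 4 GB figure for a two-level index can be checked with a short calculation, and the combined scheme can be sketched as a mapping from a logical block number to the pointer level that serves it. The 4-byte pointer size is an assumption (the slide gives only the block size), and the function names are illustrative.

```python
def max_file_size(block_size, pointer_size, levels):
    """Largest file reachable through `levels` levels of index blocks."""
    pointers_per_block = block_size // pointer_size
    return pointers_per_block ** levels * block_size


def pointer_level(logical_block, n_direct, pointers_per_block):
    """Which pointer of a combined-scheme inode covers this logical block."""
    p = pointers_per_block
    if logical_block < n_direct:
        return "direct"
    logical_block -= n_direct
    if logical_block < p:
        return "single indirect"
    logical_block -= p
    if logical_block < p ** 2:
        return "double indirect"
    logical_block -= p ** 2
    if logical_block < p ** 3:
        return "triple indirect"
    raise ValueError("block number beyond the maximum file size")


# 4096 / 4 = 1024 pointers per block; 1024 * 1024 * 4096 bytes = 2**32 bytes
two_level_bytes = max_file_size(4096, 4, 2)
```

Small files are served entirely by the direct pointers, so they pay no indirect-block overhead, while large files fall through to the deeper levels.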

Unix inode (figure) • mode • owners (2) • timestamps (3) • size, block count • direct blocks → data blocks • single indirect → index block → data blocks • double indirect → two levels of index blocks → data blocks • triple indirect → three levels of index blocks → data blocks

Indexed Allocation • Disadvantages • Since blocks are not localized on disk, disk access can be slower because of excessive seeks.

Allocation Performance • Which allocation method to use depends on how files will be used in the system • Systems with mostly sequential access should use a different method than systems with mostly random access • For both types of access, contiguous allocation requires only one disk access per block • Linked allocation requires up to i disk reads to reach the ith block

Performance • Some OS’s • support direct-access files by contiguous allocation • Maximum length must be declared on creation • support sequential access by linked allocation • sequential access must be declared on creation • OS must support both allocation systems

Allocation Performance • Other OS’s • Combine contiguous allocation with indexed allocation • contiguous allocation for small files (3-4 blocks) • switch to indexed allocation if file grows large

Allocation Performance • Study of disk performance can lead to optimizations • Sun changed the cluster size to 56 KB in order to improve performance • also implemented read-ahead and free-behind • went from using 50% of the CPU to deliver 1.5 MB per second • to a substantial increase in throughput with less CPU

Free Space Management • Bit vector • each free block is represented by a 1 bit, each allocated block by a 0 • advantage is efficiency in finding the first free block or n consecutive free blocks • disadvantage is that the bit vector can be large: a 1.3 GB disk with 512-byte blocks needs about 332 KB for the vector • Linked List • free blocks form a linked list • inefficient. why? • FAT • free-block accounting is built into the table
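A sketch of the bit-vector approach: one function reproduces the size estimate, the other finds the first free block by skipping all-zero bytes. The 512-byte block size, the binary interpretation of 1.3 GB, and the most-significant-bit-first numbering are assumptions of this sketch.

```python
def bitmap_bytes(disk_bytes, block_size):
    """Bytes needed for a bit vector with one bit per disk block."""
    n_blocks = disk_bytes // block_size
    return (n_blocks + 7) // 8            # round up to whole bytes


def first_free(bitmap):
    """Return the number of the first free block (bit = 1), or None.

    Block numbers run most-significant bit first within each byte.
    """
    for byte_index, byte in enumerate(bitmap):
        if byte:                          # skip bytes with no free block
            offset = 8 - byte.bit_length()   # position of the first 1 bit
            return byte_index * 8 + offset
    return None


vector_size = bitmap_bytes(13 * 2**30 // 10, 512)   # 1.3 GB, 512-byte blocks
block = first_free(bytes([0b00000000, 0b00101000]))
```

Skipping whole zero bytes (or machine words) is what makes the first-free search cheap, provided the vector fits in memory.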

Free Space Management • Grouping • store the addresses of n free blocks in the first free block • the nth address actually points to the block holding the next group of addresses • Counting • like grouping, but each entry stores the address of a free block plus a count of the contiguous free blocks that follow it • overall list is shorter
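The counting idea can be illustrated by compressing a plain list of free block numbers into `(first_block, count)` runs. The function name and the sample block numbers are made up for this sketch.

```python
def to_counted_runs(free_blocks):
    """Collapse free block numbers into (first_block, count) runs."""
    runs = []
    for block in sorted(free_blocks):
        if runs and runs[-1][0] + runs[-1][1] == block:
            # Block directly follows the current run: extend its count.
            runs[-1] = (runs[-1][0], runs[-1][1] + 1)
        else:
            runs.append((block, 1))       # start a new run
    return runs


runs = to_counted_runs([2, 3, 4, 5, 8, 9, 17])
```

Seven free blocks shrink to three entries here; the saving grows when free space comes in long contiguous stretches.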

Efficiency • Unix pre-allocates inodes and spreads them across a partition in order to improve performance • Unix also tries to keep a file's data blocks near its inode • BSD Unix varies the cluster size as a file grows in order to reduce internal fragmentation • Many OSs have supported pointers of various sizes in order to increase the addressable disk space

Performance • Disk Cache • Page Cache • Unified virtual memory • both the disk cache and virtual memory use the same virtual memory space • Unified buffer cache • both memory-mapped I/O and regular read/write system calls go through the same page cache • the separate disk buffer cache is not used in this case, which avoids double caching