Rainbow Tool Kit

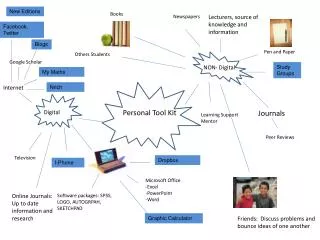

Rainbow Tool Kit Matt Perry Global Information Systems Spring 2003

Outline • Introduction to Rainbow • Description of Bow Library • Description of Rainbow methods • Naïve Bayes • TFIDF/Rocchio • K Nearest Neighbor • Probabilistic Indexing • Demonstration of Rainbow • 20 newsgroups example

What is Rainbow? • Publicly available executable program that performs document classification • Part of the Bow (or libbow) library • A library of C code useful for writing statistical text analysis, language modeling and information retrieval programs • Developed by Andrew McCallum of Carnegie Mellon University

About Bow Library • Provides facilities for • Recursively descending directories, finding text files. • Finding `document' boundaries when there are multiple documents per file. • Tokenizing a text file, according to several different methods. • Including N-grams among the tokens. • Mapping strings to integers and back again, very efficiently. • Building a sparse matrix of document/token counts. • Pruning vocabulary by word counts or by information gain. • Building and manipulating word vectors.

About Bow Library • Provides facilities for • Setting word vector weights according to Naive Bayes, TFIDF, and several other methods. • Smoothing word probabilities according to Laplace (Dirichlet uniform), M-estimates, Witten-Bell, and Good-Turing. • Scoring queries for retrieval or classification. • Writing all data structures to disk in a compact format. • Reading the document/token matrix from disk in an efficient, sparse fashion. • Performing test/train splits, and automatic classification tests. • Operating in server mode, receiving and answering queries over a socket.

About Bow Library • Does Not • Have English parsing or part-of-speech tagging facilities. • Do smoothing across N-gram models. • Claim to be finished. • Have good documentation. • Claim to be bug-free. • Run on a Windows Machine.

About Bow Library • In Addition to Rainbow, Bow contains 3 other executable programs • Crossbow - does document clustering • Arrow - does document retrieval – TFIDF • Archer - does document retrieval • Supports AltaVista-type queries • +, -, “”, etc.

Back to Rainbow • Classification Methods used by Rainbow • Naïve Bayes (mostly designed for this) • TFIDF/Rocchio • K-Nearest Neighbor • Probabilistic Indexing

Description of Naïve Bayes • Bayesian reasoning provides a probabilistic approach to learning. • Idea of Naïve Bayes Classification is to assign a new instance the most probable target value, given the attribute values of the new instance. • How?

Description of Naïve Bayes • Based on Bayes Theorem • Notation • P(h) = probability that a hypothesis h holds • Ex. Pr (document1 fits the sports category) • P(D) = probability that training data D will be observed • Ex. Pr (we will encounter document1)

Description of Naïve Bayes • Notation Continued • P(D|h) probability of observing data D given that hypothesis h holds. • Ex. Probability that we will observe document 1 given that document 1 is about sports • P(h|D) probability that h holds given training data D. • This is what we want • Probability that document 1 is a sports document given the training data D

Description of Naïve Bayes • Bayes Theorem: $P(h \mid D) = \frac{P(D \mid h)\,P(h)}{P(D)}$

Description of Naïve Bayes • Bayes Theorem • Provides a way to calculate P(h|D) from P(h), together with P(D) and P(D|h). • P(h|D) increases with P(D|h) and P(h) • P(h|D) decreases as P(D) increases • The more probable it is that D is observed independently of h, the less evidence D provides in support of h.
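The behaviour described on this slide can be checked numerically. The sketch below applies Bayes' theorem, P(h|D) = P(D|h) P(h) / P(D), to made-up numbers; the function name `posterior` and all probabilities are assumptions chosen for illustration, not values from the presentation.

```python
def posterior(prior_h, likelihood_d_given_h, prob_d):
    """Return P(h|D) = P(D|h) * P(h) / P(D)."""
    if prob_d <= 0:
        raise ValueError("P(D) must be positive")
    return likelihood_d_given_h * prior_h / prob_d


# Hypothetical values: h = "document1 fits the sports category".
p_h = 0.2           # P(h)
p_d_given_h = 0.05  # P(D|h)
p_d = 0.02          # P(D)

print(posterior(p_h, p_d_given_h, p_d))
# Doubling P(D) while holding the other terms fixed halves the posterior.
print(posterior(p_h, p_d_given_h, 2 * p_d))
```

Raising P(D|h) or P(h) raises the result, while raising P(D) lowers it, which matches the three bullets above.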

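Description of Naïve Bayes • Approach: Assign the most probable target value given the attributes: $v_{MAP} = \arg\max_{v_j \in V} P(v_j \mid a_1, a_2, \ldots, a_n)$, where $a_1, \ldots, a_n$ are the attribute values of the new instance and $V$ is the set of target values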

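Description of Naïve Bayes • Simplification based on Bayes Theorem: $v_{MAP} = \arg\max_{v_j \in V} \frac{P(a_1, \ldots, a_n \mid v_j)\,P(v_j)}{P(a_1, \ldots, a_n)} = \arg\max_{v_j \in V} P(a_1, \ldots, a_n \mid v_j)\,P(v_j)$ • The denominator does not depend on $v_j$, so it can be dropped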

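Description of Naïve Bayes • Naïve Bayes assumes (incorrectly) that the attribute values are conditionally independent given the target value: $P(a_1, \ldots, a_n \mid v_j) = \prod_i P(a_i \mid v_j)$ • This gives the classifier $v_{NB} = \arg\max_{v_j \in V} P(v_j) \prod_i P(a_i \mid v_j)$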

Rainbow Algorithm • Let $P(v_j)$ = probability that a document belongs to class $v_j$ • Let $P(w_k \mid v_j)$ = probability that a randomly drawn word from class $v_j$ will be the word $w_k$

Rainbow Algorithm • Estimate

Rainbow Algorithm • Collect all words, punctuation, and other tokens that occur in examples • Calculate the required $P(v_j)$ (class prior) and $P(w_k \mid v_j)$ (per-class word probability) terms • Return the estimated target value for the document Doc
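The three steps above can be sketched in a few lines of Python. This is an illustration, not Rainbow's C implementation. The estimation formulas did not survive in the transcript, so the sketch assumes the Laplace-smoothed estimates from Mitchell's textbook (cited in the references): the class prior is the fraction of training documents in the class, and the word probability is (count of the word in the class + 1) / (total words in the class + vocabulary size). The function names and the toy training set are hypothetical.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(examples):
    """examples: list of (tokens, label). Returns (priors, cond, vocabulary)."""
    # Step 1: collect every token that occurs in the examples.
    vocabulary = {tok for tokens, _ in examples for tok in tokens}
    docs_per_class = Counter(label for _, label in examples)
    word_counts = defaultdict(Counter)
    for tokens, label in examples:
        word_counts[label].update(tokens)
    # Step 2: estimate class priors and per-class word probabilities.
    priors = {c: n / len(examples) for c, n in docs_per_class.items()}
    cond = {}
    for c in docs_per_class:
        total = sum(word_counts[c].values())
        # Laplace smoothing (assumed): add 1 to every word count.
        cond[c] = {w: (word_counts[c][w] + 1) / (total + len(vocabulary))
                   for w in vocabulary}
    return priors, cond, vocabulary


def classify_naive_bayes(tokens, priors, cond, vocabulary):
    """Step 3: return the class with the highest log-posterior score."""
    scores = {}
    for c in priors:
        score = math.log(priors[c])
        for tok in tokens:
            if tok in vocabulary:  # tokens never seen in training are skipped
                score += math.log(cond[c][tok])
        scores[c] = score
    return max(scores, key=scores.get)


examples = [
    ("the team won the game".split(), "sports"),
    ("the player scored a goal".split(), "sports"),
    ("the senate passed the bill".split(), "politics"),
    ("voters elected the senator".split(), "politics"),
]
priors, cond, vocabulary = train_naive_bayes(examples)
print(classify_naive_bayes("the team scored".split(), priors, cond, vocabulary))
# prints "sports"
```

Summing logarithms instead of multiplying probabilities avoids numeric underflow on long documents; the ranking of classes is unchanged.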

TFIDF/Rocchio • The main component of the Rocchio algorithm is the TFIDF (term frequency / inverse document frequency) word weighting scheme. • TF(w,d) (Term Frequency) is the number of times word w occurs in a document d. • DF(w) (Document Frequency) is the number of documents in which the word w occurs at least once.

TFIDF/Rocchio • The inverse document frequency is calculated as $IDF(w) = \log\left(\frac{|D|}{DF(w)}\right)$, where $|D|$ is the total number of documents

TFIDF/Rocchio • Based on word weight heuristics, the word wi is an important indexing term for a document d if it occurs frequently in that document • However, words that occur frequently in many documents spanning many categories are rated as less important

TFIDF/Rocchio • Each document d is represented as a vector within a given vector space V: $\vec{d} = (d(1), \ldots, d(n))$, with one component d(i) per feature (word) wi

TFIDF/Rocchio • The value d(i) of feature wi for a document d is calculated as the product $d(i) = TF(w_i, d) \cdot IDF(w_i)$ • d(i) is called the weight of the word wi in the document d.
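A minimal sketch of the weighting scheme, assuming the common definition IDF(w) = log(|D| / DF(w)) with |D| the number of documents. The function name `tfidf_vectors` and the three toy documents are hypothetical; Rainbow's own weighting options may differ in detail.

```python
import math
from collections import Counter


def tfidf_vectors(documents):
    """documents: list of token lists. Returns (idf, vectors)."""
    # DF(w): number of documents in which w occurs at least once.
    df = Counter()
    for tokens in documents:
        df.update(set(tokens))
    n_docs = len(documents)
    idf = {w: math.log(n_docs / df[w]) for w in df}
    vectors = []
    for tokens in documents:
        tf = Counter(tokens)  # TF(w, d): occurrences of w in this document
        vectors.append({w: tf[w] * idf[w] for w in tf})
    return idf, vectors


docs = [
    ["ball", "ball", "game"],
    ["game", "vote"],
    ["vote", "law", "game"],
]
idf, vectors = tfidf_vectors(docs)
print(vectors[0])
```

In this toy corpus "game" occurs in every document, so its IDF is log(1) = 0 and it contributes nothing to any vector, while "ball" is weighted up because it is frequent in one document and absent from the others.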

TFIDF/Rocchio • Documents that are “close together” in vector space talk about the same things. [Figure: document vectors d1 to d5 plotted in the space spanned by terms t1, t2, t3, with angles θ and φ between vectors] http://www.stanford.edu/class/cs276a/handouts/lecture4.ppt

TFIDF/Rocchio • Distance between vectors d1 and d2 is captured by the cosine of the angle θ between them. • Note: this is similarity, not distance [Figure: vectors d1 and d2 in the space spanned by terms t1, t2, t3, separated by angle θ] http://www.stanford.edu/class/cs276a/handouts/lecture4.ppt

TFIDF/Rocchio • Cosine of angle between two vectors: $\cos(\vec{d_1}, \vec{d_2}) = \frac{\vec{d_1} \cdot \vec{d_2}}{|\vec{d_1}|\,|\vec{d_2}|}$ • The denominator involves the lengths of the vectors • So the cosine measure is also known as the normalized inner product http://www.stanford.edu/class/cs276a/handouts/lecture4.ppt

TFIDF/Rocchio • A vector can be normalized (given a length of 1) by dividing each of its components by the vector's length • This maps vectors onto the unit sphere, so that $|\vec{d}| = \sqrt{\sum_i d(i)^2} = 1$ • Longer documents don’t get more weight • For normalized vectors, the cosine is simply the dot product: $\cos(\vec{d_1}, \vec{d_2}) = \vec{d_1} \cdot \vec{d_2}$ http://www.stanford.edu/class/cs276a/handouts/lecture4.ppt
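The cosine measure and the effect of normalization can be illustrated as follows. The helper names (`dot`, `length`, `normalize`, `cosine`) and the sample vectors are chosen for this sketch only.

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def length(v):
    """Euclidean length of a vector."""
    return math.sqrt(dot(v, v))


def normalize(v):
    """Scale v to length 1 by dividing each component by the length."""
    n = length(v)
    if n == 0:
        raise ValueError("cannot normalize the zero vector")
    return [x / n for x in v]


def cosine(u, v):
    """Cosine of the angle between u and v (the normalized inner product)."""
    return dot(u, v) / (length(u) * length(v))


d1 = [1.0, 2.0, 0.0]
d2 = [2.0, 4.0, 0.0]  # same direction as d1, twice as long
d3 = [0.0, 0.0, 3.0]  # shares no terms with d1

print(cosine(d1, d2))                     # close to 1: same direction
print(cosine(d1, d3))                     # 0: orthogonal
print(dot(normalize(d1), normalize(d2)))  # equals cosine(d1, d2) up to rounding
```

Because d2 is just a longer copy of d1, the cosine treats them as identical, which is the sense in which longer documents do not get more weight.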

Rainbow Algorithm • Construct a set of prototype vectors • One vector for each class • This serves as learned model • Model is used to classify a new document D • D is assigned to the class with the most similar vector
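A minimal sketch of prototype-based classification, under two stated simplifications: each prototype is simply the sum of the class's training vectors (the full Rocchio formula also subtracts a weighted contribution from documents outside the class), and documents are sparse dictionaries mapping words to weights. The function names and toy weights are hypothetical and do not come from Rainbow.

```python
import math
from collections import defaultdict


def cosine(u, v):
    """Cosine similarity of two sparse vectors stored as {word: weight}."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    norm_u = math.sqrt(sum(w * w for w in u.values()))
    norm_v = math.sqrt(sum(w * w for w in v.values()))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)


def build_prototypes(labeled_vectors):
    """Sum the training vectors of each class into one prototype per class."""
    sums = defaultdict(lambda: defaultdict(float))
    for vec, label in labeled_vectors:
        for term, weight in vec.items():
            sums[label][term] += weight
    return {label: dict(vec) for label, vec in sums.items()}


def classify(vec, prototypes):
    """Assign vec to the class whose prototype is most similar."""
    return max(prototypes, key=lambda c: cosine(vec, prototypes[c]))


training = [
    ({"ball": 2.0, "game": 1.0}, "sports"),
    ({"goal": 1.5, "game": 0.5}, "sports"),
    ({"vote": 2.0, "law": 1.0}, "politics"),
]
prototypes = build_prototypes(training)
print(classify({"ball": 1.0, "goal": 1.0}, prototypes))  # prints "sports"
```

Since cosine similarity ignores vector length, summing and averaging the class vectors give the same classification.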

K Nearest Neighbor • Features • All instances correspond to points in an n-dimensional Euclidean space • Classification is delayed until a new instance arrives • Classification done by comparing feature vectors of the different points • Target function may be discrete or real-valued

K Nearest Neighbor • 1 Nearest Neighbor

K Nearest Neighbor • An arbitrary instance x is represented by the feature vector (a1(x), a2(x), a3(x), ..., an(x)) • ai(x) denotes the i-th feature of x • Euclidean distance between two instances: $d(x_i, x_j) = \sqrt{\sum_{r=1}^{n} \left(a_r(x_i) - a_r(x_j)\right)^2}$ • Find the k nearest neighbors whose distance from the test case falls within a threshold p. • If x of those k nearest neighbors are in category ci, then assign the test case to ci; otherwise it is unmatched.

Rainbow Algorithm • Construct a model of points in n-dimensional space for each category • Classify a document D based on the k nearest points
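A minimal k-nearest-neighbor sketch using Euclidean distance and a plain majority vote. It leaves out the distance threshold and the "unmatched" outcome mentioned earlier, and the function names and toy points are assumptions for illustration rather than Rainbow's implementation.

```python
import math
from collections import Counter


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_classify(query, training, k=3):
    """training: list of (features, label). Majority vote of the k closest."""
    neighbors = sorted(training, key=lambda item: euclidean(query, item[0]))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


training = [
    ((0, 0), "a"), ((0, 1), "a"), ((1, 0), "a"),
    ((5, 5), "b"), ((5, 6), "b"), ((6, 5), "b"),
]
print(knn_classify((0.5, 0.5), training, k=3))  # prints "a"
```

No model is built in advance: all the work happens at query time, which is what "classification is delayed until a new instance arrives" means in practice.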

Probabilistic Indexing • Idea • Quantitative model for automatic indexing based on some statistical assumptions about word distribution. • 2 Types of words: function words, specialty words • Function words = words with no importance for defining classes (the, it, etc.) • Specialty words = words that are important in defining classes (war, terrorist, etc.)

Probabilistic Indexing • Idea • Function words follow a Poisson distribution over the set of all documents • Specialty words do not follow a Poisson distribution over the set of all documents • Specialty word distribution can be described by a Poisson process within its class • Specialty words distinguish more than one class of documents
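One rough way to see the difference between the two word types is to compare how many documents contain zero occurrences of a word with the number a single Poisson distribution would predict. The sketch below is only an illustration of that idea, not the probabilistic indexing procedure used by Rainbow; the function names and the per-document counts are made up.

```python
import math


def poisson_pmf(k, lam):
    """Probability of exactly k occurrences under a Poisson with mean lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def zero_fraction_gap(counts):
    """counts: occurrences of one word in each document.

    Returns the observed fraction of documents with zero occurrences minus
    the fraction a single Poisson with the same mean would predict.
    """
    n = len(counts)
    lam = sum(counts) / n
    observed = sum(1 for c in counts if c == 0) / n
    return observed - poisson_pmf(0, lam)


# Hypothetical counts over ten documents, both with mean 1.0 per document.
function_like = [0, 1, 0, 2, 1, 0, 3, 1, 0, 2]   # spread across documents
specialty_like = [0, 0, 0, 0, 0, 0, 0, 0, 5, 5]  # concentrated in two documents

print(zero_fraction_gap(function_like))
print(zero_fraction_gap(specialty_like))
```

A gap near zero suggests the word is spread roughly as a single Poisson would predict, as assumed for function words; a large positive gap means the word clusters in a few documents, as expected for specialty words.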

Rainbow Method • Goal is to estimate P(C|si, dm) • Probability that assignment of term si to the document dm is correct • Once terms have been identified, assign Form Of Occurrence (FOC) • Certainty that term is correctly identified • Significance of Term

Rainbow Method • If term t appears in document d and a term descriptor from t to s exists, where s is an indexing term, then generate a descriptor indicator • The set of generated term descriptors can be evaluated and a probability calculated that document d lies in class c

Rainbow Demonstration • 20 newsgroups example

References • http://www.stanford.edu/class/cs276a/handouts/lecture4.ppt • http://www-2.cs.cmu.edu/~mccallum/bow/ • http://webster.cs.uga.edu/~miller/SemWeb/Project/ApMlPresent.ppt • http://citeseer.nj.nec.com/vanrijsbergen79information.html • http://citeseer.nj.nec.com/54920.html • Mitchell, Tom M. Machine Learning. 1997 • http://www-2.cs.cmu.edu/~tom/book.html

Rainbow Commands • Create a model for the classes: • rainbow -d ~/model --index <training directory> • Classifying Documents: • Pick Method (naivebayes, knn, tfidf, prind) • rainbow -d ~/model --method=tfidf --test=1 • Automatic Test: • rainbow -d ~/model --test-set=0.4 --test=3 • Test 1 at a time: • rainbow -d ~/model --query <test file>

Rainbow Demonstration • Can also run as a server: • rainbow -d ~/model --query-server=port • Use telnet to classify new documents • Diagnostics: • List the words with the highest mutual info: • rainbow -d ~/model -I 10 • Perl script for printing stats: • rainbow -d ~/model --test-set=0.4 --test=2 | rainbow-stats.pl