Science-Driven Data with Amazon Mechanical Turk





Presentation Transcript

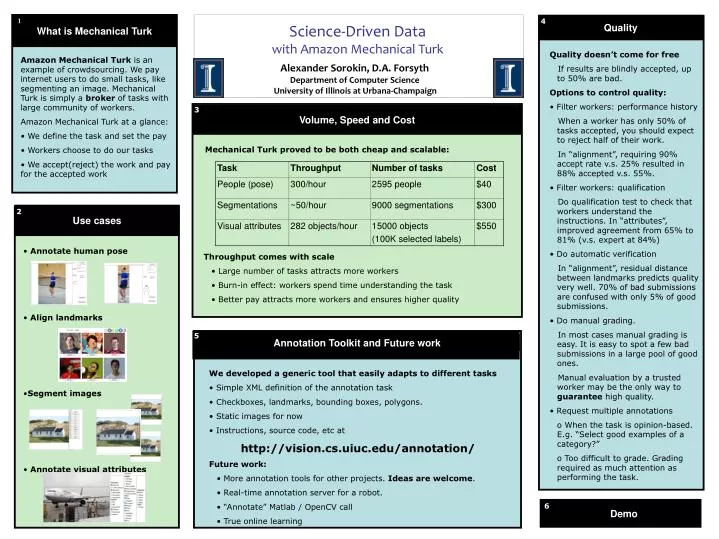

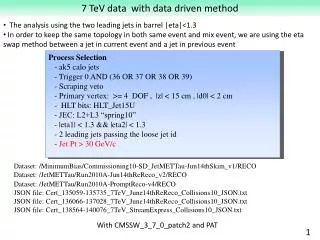

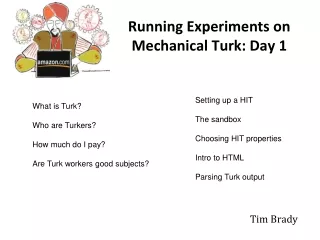

Alexander Sorokin, D.A. Forsyth
Department of Computer Science, University of Illinois at Urbana-Champaign

1 What is Mechanical Turk

• Amazon Mechanical Turk is an example of crowdsourcing. We pay internet users to do small tasks, like segmenting an image. Mechanical Turk is simply a broker of tasks with a large community of workers.
• Amazon Mechanical Turk at a glance:
  • We define the task and set the pay
  • Workers choose to do our tasks
  • We accept (or reject) the work and pay for the accepted work

2 Use cases

• Annotate human pose
• Align landmarks
• Segment images
• Annotate visual attributes

3 Volume, Speed and Cost

Mechanical Turk proved to be both cheap and scalable.

• Throughput comes with scale
  • Large number of tasks attracts more workers
  • Burn-in effect: workers spend time understanding the task
• Better pay attracts more workers and ensures higher quality

4 Quality

• Quality doesn’t come for free
  • If results are blindly accepted, up to 50% are bad.
• Options to control quality:
  • Filter workers: performance history
    • When a worker has only 50% of tasks accepted, you should expect to reject half of their work.
    • In “alignment”, requiring a 90% accept rate vs. 25% resulted in 88% accepted vs. 55%.
  • Filter workers: qualification
    • Do a qualification test to check that workers understand the instructions. In “attributes”, this improved agreement from 65% to 81% (vs. expert at 84%).
  • Do automatic verification
    • In “alignment”, residual distance between landmarks predicts quality very well. 70% of bad submissions are confused with only 5% of good submissions.
  • Do manual grading
    • In most cases manual grading is easy. It is easy to spot a few bad submissions in a large pool of good ones.
    • Manual evaluation by a trusted worker may be the only way to guarantee high quality.
  • Request multiple annotations
    • When the task is opinion-based. E.g. “Select good examples of a category?”
    • Too difficult to grade. Grading required as much attention as performing the task.

5 Annotation Toolkit and Future work

• We developed a generic tool that easily adapts to different tasks
  • Simple XML definition of the annotation task
  • Checkboxes, landmarks, bounding boxes, polygons.
  • Static images for now
• Instructions, source code, etc. at http://vision.cs.uiuc.edu/annotation/
• Future work:
  • More annotation tools for other projects. Ideas are welcome.
  • Real-time annotation server for a robot.
  • “Annotate” Matlab / OpenCV call
  • True online learning

6 Demo
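
Two of the quality-control options listed under Quality, filtering workers by performance history and requesting multiple annotations, can be illustrated with a small sketch. This is not the authors' toolkit and it does not call the Mechanical Turk API; it only works on already-collected results held in memory. The function names, the data layout (worker, item, label) and the example data are hypothetical, and the 0.90 threshold simply mirrors the 90% accept rate mentioned for the “alignment” task.

```python
from collections import Counter

def filter_workers(accept_rates, min_rate=0.90):
    """Keep workers whose historical accept rate meets the threshold."""
    return {worker for worker, rate in accept_rates.items() if rate >= min_rate}

def majority_vote(labels):
    """Return (winning label, agreement fraction); label is None on a tie."""
    ranked = Counter(labels).most_common()
    top_label, top_count = ranked[0]
    tied = len(ranked) > 1 and ranked[1][1] == top_count
    return (None if tied else top_label), top_count / len(labels)

def aggregate(annotations, accept_rates, min_rate=0.90):
    """Drop low-history workers, then majority-vote the labels per item."""
    trusted = filter_workers(accept_rates, min_rate)
    by_item = {}
    for worker, item, label in annotations:
        if worker in trusted:
            by_item.setdefault(item, []).append(label)
    return {item: majority_vote(labels) for item, labels in by_item.items()}

# Hypothetical example data: accept rates and (worker, item, label) triples.
example_rates = {"w1": 0.95, "w2": 0.92, "w3": 0.25, "w4": 0.99}
example_annotations = [
    ("w1", "img1", "good"), ("w2", "img1", "good"),
    ("w3", "img1", "bad"), ("w4", "img1", "bad"),
    ("w1", "img2", "bad"), ("w3", "img2", "good"),
]

if __name__ == "__main__":
    for item, (label, agreement) in aggregate(example_annotations, example_rates).items():
        print(item, label, round(agreement, 2))
```

Items that end in a tie, or whose agreement fraction is low, are natural candidates for the manual grading step, since a vote alone cannot settle them.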