Chapter 5, CLR Textbook



Chapter 5, CLR Textbook Algorithms on Grids of Processors

Logical 2D Grids of Processors
• In this chapter, we develop algorithms for a 2-D grid (often just called a grid).
• See Figure 5.1(a) for an example of a square grid with $p = q^2$ processors.
• Processors are indexed by their row and column: $P_{i,j}$ with $0 \le i, j < q$.
• One popular variation of the grid topology is obtained by adding wraparound links (loops) to form what is called a 2-D torus (or just torus).
• In this case, every processor belongs to two rings: one along its row and one along its column.
• The bidirectional torus is very convenient and will be our default version.
• For simplicity, we always assume a square grid, although the algorithms here can be adapted to rectangular grids using somewhat more cumbersome notation.

Logical 2-D Grids of Processors (cont)
• We assume that communication can occur on several links at the same time.
• The standard assumptions about concurrent sending, receiving, and computing apply (see Section 3.3).
• We assume that links are full-duplex, allowing communication to flow in both directions without contention.
• This assumption may or may not hold for the platform being used.
• Algorithms can easily be adjusted if full-duplex is not supported.
• With bidirectional links, a processor can conceivably be involved in one send and one receive on each of its network links concurrently.

Logical 2-D Grids of Processors (cont)
• Assuming that a processor can perform all of these communications concurrently, with no decrease in speed compared with a single communication, gives the multi-port model.
• If only two concurrent operations are allowed, one send and one receive, this is the 1-port model.
• This chapter includes performance analysis for both the 1-port and 4-port models.
• Some actual platforms have physical topologies that include grids and/or rings:
  • Intel Paragon: grid
  • IBM Blue Gene/L: 3D torus topology, which contains rings and grids.

Logical 2-D Grids of Processors (cont)
• When both a ring and a grid map well to the physical platform, the grid is often preferable.
• Given $p$ processors:
  • a torus has $2p$ network links
  • a grid has $2(p - \sqrt{p})$ network links
  • a ring has $p$ network links.
• As a result, the torus and grid can support more concurrent communication.
• Even on platforms without a physical grid, writing some algorithms assuming a grid topology is useful.
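The link counts above can be checked with a small sketch. The function name `link_counts` is hypothetical, and the code assumes that p is a perfect square so that the grid and torus are q × q.

```python
import math

def link_counts(p):
    """Return the number of network links in a ring, grid, and torus of p processors.

    Assumes p is a perfect square, so the grid and torus are q x q with q = sqrt(p).
    """
    q = math.isqrt(p)
    if q * q != p:
        raise ValueError("p must be a perfect square")
    return {
        "ring": p,                # one link per processor
        "grid": 2 * q * (q - 1),  # equals 2(p - sqrt(p)): no wraparound links
        "torus": 2 * p,           # grid links plus 2q wraparound links
    }

print(link_counts(16))
```

For p = 16 the grid has q(q-1) = 12 horizontal and 12 vertical links, which matches 2(p - √p) = 24.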

Grid Communication Details
• The processor in row i and column j of a $q \times q$ mesh, for $0 \le i, j < q$, is denoted $P_{i,j}$ or P(i,j).
• A processor can find the indices of its row and column using the functions My_Proc_Row() and My_Proc_Col().
• A processor can determine the total number of processors $p = q^2$ by calling Num_Proc().
• Rectangular grids require two functions, one giving the total number of rows and one giving the total number of columns.
• A processor can send a message of L data items stored at address addr to one of its neighbors by calling Send(dest, addr, L), where dest has the value North, South, West, or East.

Grid Communication Details (cont)
• With a grid topology (no wraparound links), some dest values are not allowed for processors on the boundary.
• The torus topology is used in the majority of algorithms.
• The neighbors of $P_{i,j}$ are:
  • North neighbor: P((i-1) mod q, j)
  • South neighbor: P((i+1) mod q, j)
  • West neighbor: P(i, (j-1) mod q)
  • East neighbor: P(i, (j+1) mod q)
• Often the modulo is omitted and modulo q is assumed.
• Each Send call has a matching Recv call: Recv(src, addr, L)
• As in Chapter 4, the following are used:
  • Non-blocking sends
  • Both blocking and non-blocking receives
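The neighbor rules above can be sketched as follows. The helper names `torus_neighbors` and `grid_neighbors` are hypothetical and are not part of the chapter's API; they only illustrate the index arithmetic.

```python
def torus_neighbors(i, j, q):
    """Return the North, South, West, and East neighbors of P(i, j) on a q x q torus."""
    return {
        "North": ((i - 1) % q, j),
        "South": ((i + 1) % q, j),
        "West": (i, (j - 1) % q),
        "East": (i, (j + 1) % q),
    }

def grid_neighbors(i, j, q):
    """Same as torus_neighbors, but without wraparound: directions that
    would leave the q x q grid are dropped."""
    candidates = {
        "North": (i - 1, j),
        "South": (i + 1, j),
        "West": (i, j - 1),
        "East": (i, j + 1),
    }
    return {d: (r, c) for d, (r, c) in candidates.items()
            if 0 <= r < q and 0 <= c < q}

print(torus_neighbors(0, 0, 4))
print(grid_neighbors(0, 0, 4))
```

On the torus, the corner processor P(0,0) still has four neighbors thanks to the modulo; on the plain grid it only has a South and an East neighbor, which is why some dest values are not allowed there.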

Grid Communication Details (cont)
• Broadcast command from $P_{i,j}$ to all processors in row i: BroadcastRow(i, j, srcaddr, dstaddr, L)
  • srcaddr is the address of the message in $P_{i,j}$
  • dstaddr is the address where the message is stored in the receiving processors
  • L is the length of the message
• Broadcast command from $P_{i,j}$ to all PEs in column j: BroadcastCol(i, j, srcaddr, dstaddr, L)
• Technically, a row/column broadcast is a multicast.
• With a torus, each row and column is a ring, so we can use the pipelined implementation of a ring broadcast from Section 3.3.4.
• If links are bidirectional, the broadcast can be sped up by sending the message in both directions.

Grid Communication Details (cont)
• If the topology is not a torus but links are bidirectional, row and column broadcasts can be implemented by sending the message in both directions.
• If the topology is not a torus and links are not bidirectional, these broadcast functions cannot be implemented.
• Simplifying assumption: if a processor calls a broadcast function but is not in the row/column of the broadcast, the call returns immediately.
• This assumption allows us to omit the column/row processor number in calls.

Matrix Multiplication on a Grid
• Assume that the matrices are stored on a square $q \times q$ grid with $p = q^2$ processors.
• Assume the matrices are also square, with dimensions $n \times n$, and that q divides n.
• With $m = n/q$, the standard approach is to partition each matrix over the grid by assigning one $m \times m$ block of each matrix to each processor.
• Technically, processor $P_{i,j}$, for $0 \le i, j < q$, holds matrix elements $A_{k,l}$, $B_{k,l}$, and $C_{k,l}$ with $im \le k < (i+1)m$ and $jm \le l < (j+1)m$.
• This is illustrated on the next slide.
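The block distribution described above can be sketched as an index mapping. The function names `owner` and `local_range` are hypothetical, chosen only for illustration.

```python
def owner(k, l, n, q):
    """Return (i, j), the processor holding element (k, l) of an n x n matrix
    distributed in m x m blocks over a q x q grid, where m = n / q."""
    if n % q != 0:
        raise ValueError("q must divide n")
    m = n // q
    return (k // m, l // m)

def local_range(i, j, n, q):
    """Return the half-open global row and column ranges held by P(i, j)."""
    if n % q != 0:
        raise ValueError("q must divide n")
    m = n // q
    return (range(i * m, (i + 1) * m), range(j * m, (j + 1) * m))

# Example: an 8 x 8 matrix on a 4 x 4 grid gives 2 x 2 blocks.
print(owner(5, 2, n=8, q=4))
print(local_range(2, 1, n=8, q=4))
```

The two functions are inverses in the sense that every element in `local_range(i, j, n, q)` is mapped back to `(i, j)` by `owner`.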

Outer-Product Algorithm
• While standard matrix multiplication is computed using a sequence of inner-product computations, we consider the outer-product order of computing these products.
• Assuming all $C_{i,j}$ are initialized to 0, the outer-product order is:
    for k = 0 to n-1 do
      for i = 0 to n-1 do
        for j = 0 to n-1 do
          C[i,j] = C[i,j] + A[i,k] * B[k,j]
• This outer-product order leads to a simple and elegant parallelization on a torus of processors.
• At each step k, all $C_{i,j}$ are updated.
• Since all matrices are partitioned into $q^2$ blocks of size $m \times m$, the same computation can be expressed in terms of blocks.
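As a minimal sequential sketch of the loop order above, the following uses plain Python lists; the function name `matmul_outer_product` is hypothetical. The only difference from the usual inner-product order is that k is the outermost loop, so every entry of C receives one contribution per step k.

```python
def matmul_outer_product(A, B):
    """Multiply two n x n matrices (lists of lists) using the outer-product order.

    At step k, column k of A and row k of B contribute A[i][k] * B[k][j]
    to every entry C[i][j].
    """
    n = len(A)
    C = [[0] * n for _ in range(n)]
    for k in range(n):
        for i in range(n):
            for j in range(n):
                C[i][j] += A[i][k] * B[k][j]
    return C

print(matmul_outer_product([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```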

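Outer-Product Algorithm
• This algorithm can be summarized in terms of matrix blocks and block matrix multiplications as
    for k = 0 to q-1 do
      for i = 0 to q-1 do
        for j = 0 to q-1 do
          C[i,j] = C[i,j] + A[i,k] * B[k,j]
  where $A_{i,k}$, $B_{k,j}$, and $C_{i,j}$ now denote $m \times m$ blocks.
• Next we consider executing this algorithm on a torus of $p = q^2$ processors.
• Processor $P_{i,j}$ holds block $C_{i,j}$ and updates it at each step.
• To perform step k, $P_{i,j}$ needs blocks $A_{i,k}$ and $B_{k,j}$.
• At step $k = j$, $P_{i,j}$ already holds block $A_{i,k}$.
• For all other steps, $P_{i,j}$ must obtain $A_{i,k}$ from $P_{i,k}$.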

Outer-Product Algorithm
• This is true for all processors $P_{i,j}$ with $j \ne k$.
• This means that at step k, processor $P_{i,k}$ must broadcast its block of matrix A to all processors $P_{i,j}$ on its row.
• This is true for all rows i as well.
• Similarly, blocks of matrix B must be broadcast at step k by $P_{k,j}$ to all processors on its column, and this holds for all columns j.
• The resulting communication pattern is shown on the next slide.
• The outer-product algorithm is given on the following slide as Algorithm 5.1.

Outer Product Algorithm Steps
• Statement 1 declares the square blocks of the three matrices stored by each processor.
  • The matrix C is assumed to be initialized to zero.
  • Arrays A and B contain the sub-matrices held by the PEs, as in Figure 5.2.
• Statement 2 declares two helper buffers used by the PEs.
• In Statement 3, each PE determines the value of q.
• In Statements 4-5, each PE determines its location on the torus.
• The q steps of the program occur in lines 7-19, inside the loop that starts at line 6.
• In Statements 7-8, all q processors in column k broadcast (in parallel) their block of A to the processors in their respective rows.
• Statements 9-10 implement similar broadcasts of blocks of matrix B along processor columns.

Outer Product Algorithm Steps (cont)
• Comments:
  • When the preceding broadcasts are complete, each PE holds all the blocks it needs.
  • Each processor multiplies a block of A by a block of B and adds the result to the block of C for which it is responsible.
• The algorithm uses the notation MatrixMultiplyAdd() for the PE block operation $C_{i,j} \leftarrow C_{i,j} + A_{i,k} B_{k,j}$.
• Lines 12-13: If the PE is on both row k and column k, it can just multiply the two blocks of A and B that it holds.
• Lines 14-15: If the PE is on row k but not on column k, it multiplies the block of A that it receives with the block of B that it holds.

Outer Product Algorithm Steps (cont)
• Lines 16-17: Similarly, if a PE is on column k but not on row k, it multiplies the block of A that it holds with the block of B that it just received.
• Lines 18-19 (general case): If a PE is on neither row k nor column k, it multiplies the block of A that it receives with the block of B that it receives.
Generalization of Matrix Multiply:
• By allotting rectangular blocks of matrices A and B to processors, the preceding algorithm can be adapted to work for non-square matrix products.
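The steps walked through above can be illustrated with a sequential simulation. This is only a sketch, not Algorithm 5.1 itself: the q × q torus is simulated in a single process, the row and column broadcasts are replaced by direct dictionary lookups, and all function names (`split_into_blocks`, `block_multiply_add`, `outer_product_on_torus`) are hypothetical.

```python
def split_into_blocks(M, q):
    """Split an n x n matrix into a dict {(i, j): m x m block}, with m = n / q."""
    m = len(M) // q
    return {
        (i, j): [row[j * m:(j + 1) * m] for row in M[i * m:(i + 1) * m]]
        for i in range(q) for j in range(q)
    }

def block_multiply_add(C, A, B):
    """C <- C + A x B for square blocks stored as lists of lists."""
    m = len(A)
    for r in range(m):
        for c in range(m):
            C[r][c] += sum(A[r][t] * B[t][c] for t in range(m))

def outer_product_on_torus(A, B, q):
    """Simulate the outer-product algorithm on a q x q torus, one step k at a time."""
    n = len(A)
    m = n // q
    blocks_A = split_into_blocks(A, q)
    blocks_B = split_into_blocks(B, q)
    blocks_C = {(i, j): [[0] * m for _ in range(m)]
                for i in range(q) for j in range(q)}
    for k in range(q):
        for i in range(q):
            for j in range(q):
                # Row broadcast: P(i, k) sends its block of A along row i.
                # A PE on column k uses the block of A it already holds.
                a = blocks_A[(i, j)] if j == k else blocks_A[(i, k)]
                # Column broadcast: P(k, j) sends its block of B along column j.
                # A PE on row k uses the block of B it already holds.
                b = blocks_B[(i, j)] if i == k else blocks_B[(k, j)]
                block_multiply_add(blocks_C[(i, j)], a, b)
    # Reassemble the distributed blocks of C into one n x n matrix.
    C = [[0] * n for _ in range(n)]
    for (i, j), block in blocks_C.items():
        for r in range(m):
            for c in range(m):
                C[i * m + r][j * m + c] = block[r][c]
    return C

A = [[r * 4 + c for c in range(4)] for r in range(4)]
I = [[1 if r == c else 0 for c in range(4)] for r in range(4)]
print(outer_product_on_torus(A, I, 2) == A)
```

The two conditional expressions cover the four cases of lines 12-19: a PE uses its own block when it sits on column k (for A) or row k (for B), and a received block otherwise.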

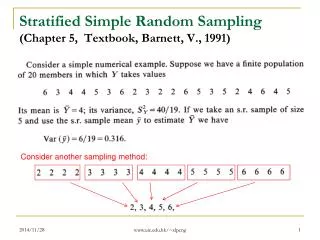

Performance Analysis of Algorithm
• At each of the q passes through the loop, each processor is involved in two broadcasts, each of a message containing $m^2$ elements sent to $q-1$ processors.
• Using the pipelined broadcast implementation on a ring from Section 3.3.4, the time for each broadcast is
  $$T_{bcast} = \left(\sqrt{(q-2)L} + \sqrt{m^2 b}\right)^2$$
  where L is the communication startup cost and b is the time to communicate one matrix element.
• After the two broadcasts, each processor computes an $m \times m$ matrix multiplication, which takes $m^3 w$ time, where w is the computation time for a basic matrix-element operation.

Performance Analysis (cont)
• After the 0th step (i.e., loop iteration), communication at step k can always occur in parallel with computation at step k-1.
• No communication occurs during the last computation step.
• Writing $T_{bcast}$ for the time of one broadcast, the total execution time (for the 1-port model) is
  $$T(m, q) = 2T_{bcast} + (q-1)\max(2T_{bcast},\, m^3 w) + m^3 w$$
• For the 4-port model, both broadcasts can occur concurrently.
• The execution time of the algorithm is then obtained by removing the factor of 2 in front of each $T_{bcast}$.
• Recalling $p = q^2$ and $m = n/q$, as n becomes large, $T(m, q) \sim q m^3 w = n^3 w / p$.
• This indicates that the algorithm achieves an asymptotic efficiency of 1.
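The analysis above can be explored numerically with a small sketch. It assumes the pipelined ring-broadcast time $(\sqrt{(q-2)L} + \sqrt{m^2 b})^2$ and a total time of one initial communication phase, q-1 overlapped steps, and one final computation. The function names and the values chosen for L, b, and w are hypothetical, picked only to show the trend.

```python
import math

def t_bcast(m, q, L, b):
    """Assumed time of a pipelined broadcast of m*m elements on a ring of q processors."""
    return (math.sqrt((q - 2) * L) + math.sqrt(m * m * b)) ** 2

def execution_time(n, p, L, b, w, ports=1):
    """Modeled execution time of the outer-product algorithm on a q x q torus.

    ports=1: the two broadcasts of a step are serialized (factor of 2).
    ports=4: the two broadcasts of a step occur concurrently.
    """
    q = math.isqrt(p)
    m = n // q
    comm = (2 if ports == 1 else 1) * t_bcast(m, q, L, b)
    comp = m ** 3 * w
    # First communication, then q-1 overlapped steps, then the last computation.
    return comm + (q - 1) * max(comm, comp) + comp

def efficiency(n, p, L, b, w, ports=1):
    """Sequential time n^3 * w divided by p times the modeled parallel time."""
    return (n ** 3 * w) / (p * execution_time(n, p, L, b, w, ports))

# Hypothetical machine parameters (seconds): startup, per-element transfer, per-operation.
L, b, w = 1e-5, 1e-8, 1e-9
for n in (64, 1024, 16000):
    print(n, efficiency(n, 16, L, b, w, ports=1), efficiency(n, 16, L, b, w, ports=4))
```

With these parameters, the efficiency is low for small n, where communication dominates, and approaches 1 as n grows, in line with the asymptotic result.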

Grid vs Ring
• An asymptotically optimal matrix multiplication algorithm was already given for the ring.
• The ring is a simpler topology, so why bother to implement another asymptotically optimal matrix multiplication algorithm for the grid?
• Since matrix multiplication has $O(n^3)$ complexity and $O(n^2)$ data size, obtaining an asymptotically optimal algorithm is relatively easy.
• However, communication costs that become negligible as n becomes large do matter for practical values of n.
• As discussed below, the grid topology is better than the ring topology for reducing this practical communication cost.
• A detailed analysis in CLR, p. 155, shows that the algorithm on the grid spends a factor of $\sqrt{p}/2$ less time communicating than the algorithm on a ring.

Grid vs Ring (cont)
• With the 4-port model, this factor is $\sqrt{p}$.
• This advantage can be attributed to the presence of more network links and to the fact that many of these links can be used concurrently.
• For matrix multiplication, the 2D data distribution induced by the grid topology is inherently better than the 1D data distribution induced by a ring, regardless of the underlying physical topology.
• In particular, the total number of elements sent on the network is lower by at least a factor of 2p than is the case for the algorithm on a ring.
• The implication is that, for purposes of matrix multiplication, the grid topology and its induced 2D data distribution are at least as good as, and possibly better than, the ring topology.

Grid vs Ring (cont)
• As a result, when implementing a parallel matrix multiplication on a physical topology in which all communications are serialized (e.g., on a bus architecture), one should opt for a logical grid topology with a 2D data distribution to reduce the amount of transferred data.