Parallel Sorting: Strategies for Distributed Data Values and Sequential Algorithms



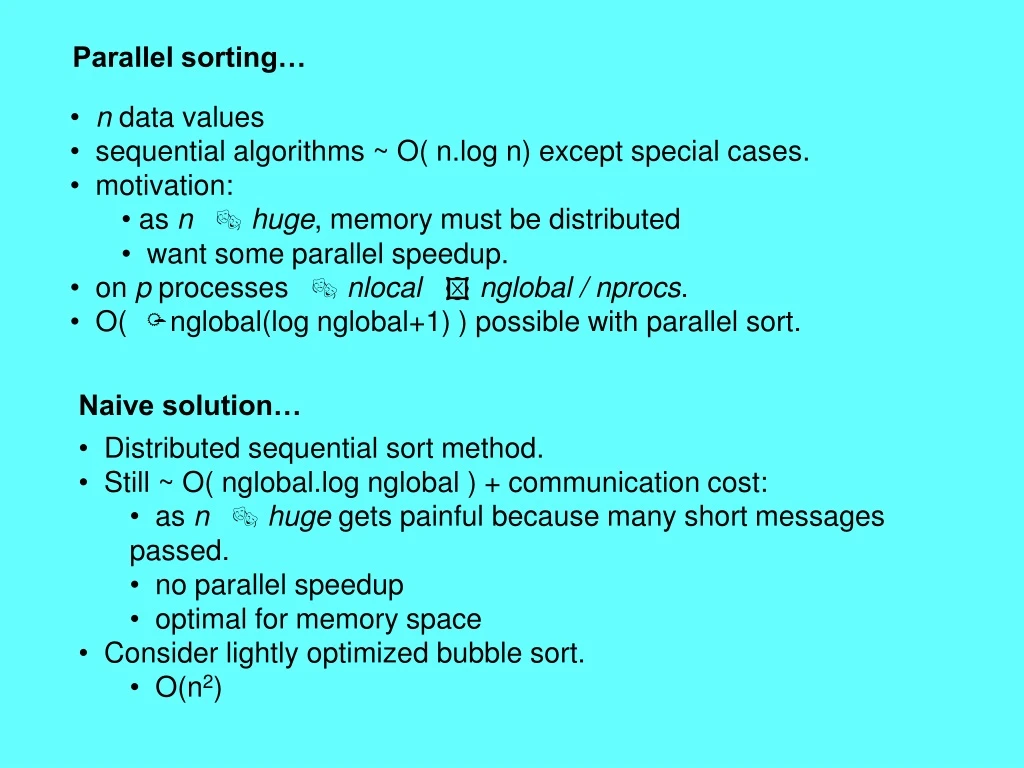

Parallel sorting…
• n data values
• sequential algorithms ~ O(n log n), except special cases.
• motivation:
  • as n gets huge, memory must be distributed
  • want some parallel speedup.
• on p processes, nlocal ~ nglobal / nprocs.
• O(nglobal (log nglobal + 1)) possible with parallel sort.

Naive solution…
• Distributed sequential sort method.
• Still ~ O(nglobal log nglobal) + communication cost:
  • as n gets huge this becomes painful, because many short messages are passed.
  • no parallel speedup
  • optimal for memory space
• Consider lightly optimized bubble sort.
  • O(n^2)

Distributed sequential sort…
[Figure: worked example on the letters of "SORTING EXAMPLE ONE". The letters are partitioned across processes and sorted locally, then repeated Compare/swap and Sort steps move letters between processes until the sequence is fully sorted (DONE).]

Odd-even sort…
[Figure: worked example on a string of letters, partitioned odd/even across processes p0 to p5. Each process first sorts data[0,…,nlocal-1]. The panels for the first, second and third iterations carry the step labels "Evens send left", "Odds merge", "Odds return high", "Odds send left", "Evens merge" and "Evens return high". The example is DONE after the third iteration.]
• ceil(nprocs/2) iterations

Odd-even sort… (subtleties)
• Allocate contiguous memory for the merge:
  • int *data, *buffer;
  • data = (int*)calloc(2*nlocal, sizeof(int)); // 2*nlocal ints allocated; only data[0,…,nlocal-1] is significant
  • buffer = &data[nlocal]; // buffer points to the second half of the data[ ] array
• Need to figure out the left and right neighbors:
  • left_neighbor = mype-1; // mype will send nlocal ints from &data[0] to left_neighbor’s &buffer[0]
  • right_neighbor = mype+1; // mype will send nlocal ints from &buffer[0] to right_neighbor’s &data[0]
• BUT…
  • …the left-most pe (mype == 0) has nowhere to send the low values in data[0,…,nlocal-1], since it has no left neighbor.
  • This pe’s left_neighbor should be set to MPI_PROC_NULL. Sending to MPI_PROC_NULL returns immediately without doing anything.
• AND…
  • …the right-most pe (mype == nprocs-1) has nowhere to return the high data pointed to by buffer, because it has no right neighbor.
  • This pe should send the high values in buffer[0,…,nlocal-1] to MPI_PROC_NULL, and
  • EITHER: when initializing data[ ], this pe should also pad buffer[0,…,nlocal-1] with INT_MAX (assuming we’re sorting ints) so that the merge step operates correctly,
  • OR: this pe should not execute the merge step of the iteration.
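The merge step and the INT_MAX padding option can be sketched in plain C as follows. This is a minimal serial sketch, not the presenter's code: the MPI exchange is left out, and the function names merge_halves and pad_buffer are hypothetical. It assumes both halves of data[ ] are already sorted when the merge runs.

```c
#include <assert.h>
#include <limits.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical helper: merge the sorted low half data[0..nlocal-1] with the
 * sorted high half buffer[0..nlocal-1], where buffer = &data[nlocal].
 * Afterwards the nlocal smallest values sit in data[0..nlocal-1] and the
 * nlocal largest sit in buffer[0..nlocal-1]. */
void merge_halves(int *data, int nlocal)
{
    assert(data != NULL && nlocal > 0);
    int *buffer = &data[nlocal];
    int *tmp = malloc(2 * (size_t)nlocal * sizeof(int));
    if (tmp == NULL)
        abort();

    int i = 0, j = 0, k = 0;
    while (i < nlocal && j < nlocal)
        tmp[k++] = (data[i] <= buffer[j]) ? data[i++] : buffer[j++];
    while (i < nlocal)
        tmp[k++] = data[i++];
    while (j < nlocal)
        tmp[k++] = buffer[j++];

    memcpy(data, tmp, 2 * (size_t)nlocal * sizeof(int));
    free(tmp);
}

/* Hypothetical helper for the right-most pe: fill the unused high half with
 * INT_MAX so that a merge leaves the real values in data[0..nlocal-1]. */
void pad_buffer(int *data, int nlocal)
{
    assert(data != NULL && nlocal > 0);
    for (int i = 0; i < nlocal; i++)
        data[nlocal + i] = INT_MAX;
}
```

With padding, the right-most pe can run the same merge as every other pe; the INT_MAX values simply stay in buffer and are "returned" to MPI_PROC_NULL.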

Shearsort…
• We have seen processes treated as a 1D array decomposed into odd/even.
• How about odd-even sort on a 2D array of processes?
• Big speed-up requires a special (snake-like) ordering:

Smallest number
 0  1  2  3
 7  6  5  4
 8  9 10 11
15 14 13 12
Largest number

Shearsort…
• For i = 1,2,3,…,log(n)+1
  • If i is odd
    • sort even rows: biggest at left, smallest at right
    • sort odd rows: smallest at left, biggest at right
  • If i is even
    • sort all columns so the smallest number is at the top and the biggest at the bottom
[Figure: worked example on a grid of letters, with panels labelled n=1 through n=5 followed by "Done".]
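The loop above can be sketched serially in C on a small grid. This is only an illustration under stated assumptions: the grid size (ROWS, COLS), the function name shearsort and the use of qsort for each row or column are all choices made here, and a real parallel version would sort each row and column with odd-even exchanges between processes. Rows are assumed to be numbered from 1 on the slide, so C row index 0 is an "odd" row and is sorted smallest at left.

```c
#include <assert.h>
#include <stdlib.h>

#define ROWS 4   /* assumed grid size for this sketch */
#define COLS 4

static int cmp_asc(const void *a, const void *b)
{
    int x = *(const int *)a, y = *(const int *)b;
    return (x > y) - (x < y);
}

static int cmp_desc(const void *a, const void *b)
{
    return cmp_asc(b, a);
}

/* Serial shearsort sketch: alternate row sorts (odd steps) and column sorts
 * (even steps), leaving the grid in snake order. */
void shearsort(int grid[ROWS][COLS])
{
    assert(grid != NULL);

    /* 2*ceil(log2(ROWS)) + 1 steps. For a square grid whose side is a power
     * of two this equals log2(n) + 1 with n = ROWS*COLS. */
    int steps = 1;
    for (int m = ROWS; m > 1; m = (m + 1) / 2)
        steps += 2;

    for (int i = 1; i <= steps; i++) {
        if (i % 2 == 1) {
            /* Odd step: C rows 0, 2, ... ascending; rows 1, 3, ... descending. */
            for (int r = 0; r < ROWS; r++)
                qsort(grid[r], COLS, sizeof(int),
                      (r % 2 == 0) ? cmp_asc : cmp_desc);
        } else {
            /* Even step: every column ascending from top to bottom. */
            for (int c = 0; c < COLS; c++) {
                int col[ROWS];
                for (int r = 0; r < ROWS; r++)
                    col[r] = grid[r][c];
                qsort(col, ROWS, sizeof(int), cmp_asc);
                for (int r = 0; r < ROWS; r++)
                    grid[r][c] = col[r];
            }
        }
    }
}
```

Calling shearsort on a 4x4 grid holding the values 0 to 15 in any order should leave them in the snake ordering shown on the previous slide.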

Other sorts…
• Bucketsort:
  • Pe’s partition their data into small buckets.
  • Pe’s send appropriate chunks to “large buckets” on each pe.
  • Pe’s sort “large buckets”.
  • (Optional: a preprocessing stage where the master collects info on the distribution and assigns buckets to slaves. This procedure deals with non-uniform distribution problems.)
• Parallel mergesort: O(log^2 n)
  • Odd-even implementation of standard mergesort.
• Parallel quicksort: O(n)
  • master-slave, difficult to balance sub-tasks.
  • tree implementation, even harder to balance the tree
  • hypercube topology can be optimal!
  • still O(n^2) worst case.
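The bucketsort partition step can be sketched as below. This is a hedged illustration, not the presenter's method: it assumes the keys are ints spread roughly uniformly over a known range [lo, hi), that there is one “large bucket” per pe, and that each pe owns an equal-width slice of the range. The names bucket_of and count_buckets are hypothetical. The optional preprocessing stage would replace the equal-width rule with splitters chosen from the observed distribution.

```c
#include <assert.h>

/* Hypothetical helper: index of the pe whose "large bucket" should receive
 * value, assuming equal-width buckets over the half-open range [lo, hi). */
int bucket_of(int value, int lo, int hi, int nprocs)
{
    assert(nprocs > 0 && lo <= value && value < hi);
    long long span = (long long)hi - lo;
    return (int)(((long long)value - lo) * nprocs / span);
}

/* Hypothetical helper: count how many local values go to each pe. The counts
 * are what a pe would need to size its outgoing chunks before sending. */
void count_buckets(const int *data, int nlocal, int lo, int hi,
                   int nprocs, int *counts)
{
    for (int p = 0; p < nprocs; p++)
        counts[p] = 0;
    for (int i = 0; i < nlocal; i++)
        counts[bucket_of(data[i], lo, hi, nprocs)]++;
}
```

With keys in [0, 100) and four pe's, values 0 to 24 map to pe 0, 25 to 49 to pe 1, and so on; a skewed input would overload some pe's, which is the non-uniform distribution problem the optional preprocessing stage addresses.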