Enhancing Dasher with CTW: A Language Modeling Approach for Efficient Text Input

This presentation explores the integration of the Context Tree Weighting (CTW) model into Dasher, a text input method driven by continuous gestures. It covers the basic concepts of Dasher and CTW, a new integer-arithmetic CTW implementation, and an attempt to decrease CTW's model costs. The results show that CTW predicts more accurately than Prediction by Partial Match (PPM), while the model-cost optimization yields only an insignificant gain. Future work includes adapting CTW for mobile use, reducing memory usage, and combining multiple language models.


Presentation Transcript

Using CTW as a language modeler in Dasher • Martijn van Veen • 05-02-2007 • Signal Processing Group, Department of Electrical Engineering, Eindhoven University of Technology

Overview • What is Dasher • And what is a language model • What is CTW • And how to implement it in Dasher • Decreasing the model costs • Conclusions and future work

Dasher • Text input method • Continuous gestures • Language model • Let’s give it a try!

Dasher: Language model • Conditional probability for each alphabet symbol, given the previous symbols • Similar to compression methods • Requirements: sequential, fast, adaptive • Model is trained • Better compression -> faster text input
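To make the link between prediction and compression concrete, here is a minimal sketch of a sequential, adaptive model and the ideal code length it assigns to a text. It is not Dasher's model: the class `AdaptiveContextModel`, the add-one smoothing, and the fixed context order are all assumptions chosen for brevity.

```python
import math
from collections import Counter, defaultdict


class AdaptiveContextModel:
    """Order-k character model with add-one smoothing (illustrative only)."""

    def __init__(self, alphabet, order=1):
        self.alphabet = list(alphabet)
        self.order = order
        self.counts = defaultdict(Counter)  # context string -> symbol counts

    def prob(self, context, symbol):
        """Conditional probability of `symbol` given the preceding `context`."""
        seen = self.counts[context]
        total = sum(seen.values())
        return (seen[symbol] + 1) / (total + len(self.alphabet))

    def update(self, context, symbol):
        """Adapt the model after the symbol has been observed."""
        self.counts[context][symbol] += 1


def code_length_bits(model, text):
    """Ideal code length: sum of -log2 p(symbol | context), predicting then updating."""
    bits = 0.0
    for i, symbol in enumerate(text):
        context = text[max(0, i - model.order):i]
        bits -= math.log2(model.prob(context, symbol))
        model.update(context, symbol)
    return bits
```

A model that assigns higher probability to the symbols the user actually enters yields a shorter code length, which is the sense in which better compression corresponds to faster text input.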

Dasher: Language model • PPM: Prediction by Partial Match • Predictions by models of different order • Weight factor for each model

Dasher: Language model • Asymptotically PPM reduces to fixed order context model • But the incomplete model works better!

CTW: Tree model • Source structure in the model, parameters memoryless • KT estimator, with a = number of zeros and b = number of ones seen so far
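The slide's formula did not survive in the transcript. The standard Krichevsky-Trofimov (KT) estimator assigns probability (a + 1/2) / (a + b + 1) to a next zero and (b + 1/2) / (a + b + 1) to a next one. The sketch below computes it with exact fractions; the function names are illustrative, not taken from the talk.

```python
from fractions import Fraction


def kt_next_prob(a, b, bit):
    """KT probability of the next bit, given a zeros and b ones seen so far."""
    count = a if bit == 0 else b
    # (count + 1/2) / (a + b + 1), written with integers only
    return Fraction(2 * count + 1, 2 * (a + b + 1))


def kt_block_prob(bits):
    """KT probability of a whole bit sequence, built up sequentially."""
    a = b = 0
    p = Fraction(1)
    for bit in bits:
        p *= kt_next_prob(a, b, bit)
        if bit == 0:
            a += 1
        else:
            b += 1
    return p
```

For example, `kt_block_prob([0, 0])` is 1/2 times 3/4, that is 3/8, and the block probability depends only on the counts a and b, not on the order of the bits.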

CTW: Context tree • Context-Tree Weighting: combine all possible tree models up to a maximum depth
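As a rough illustration of the weighting step, the sketch below implements textbook binary CTW with exact fractions: each node mixes its own KT estimate with the product of its children's weighted probabilities, using weight 1/2 for each. It is a reference-style sketch, not the implementation discussed in the talk; `Node`, `ctw_update`, `ctw_block_prob`, and the all-zero default past are assumptions.

```python
from fractions import Fraction

HALF = Fraction(1, 2)


class Node:
    def __init__(self):
        self.a = 0                # zeros seen in this context
        self.b = 0                # ones seen in this context
        self.pe = Fraction(1)     # KT block probability
        self.pw = Fraction(1)     # weighted block probability
        self.children = {}        # context bit -> Node


def ctw_update(root, context, bit, depth):
    """Update all nodes on the context path; context[0] is the most recent bit."""
    path = [root]
    for d in range(depth):
        path.append(path[-1].children.setdefault(context[d], Node()))
    for d in range(depth, -1, -1):          # from leaf back to root
        node = path[d]
        count = node.a if bit == 0 else node.b
        node.pe *= Fraction(2 * count + 1, 2 * (node.a + node.b + 1))
        if bit == 0:
            node.a += 1
        else:
            node.b += 1
        if d == depth:
            node.pw = node.pe               # leaves use the KT estimate directly
        else:
            children_pw = Fraction(1)       # unseen children contribute 1
            for child in node.children.values():
                children_pw *= child.pw
            node.pw = HALF * node.pe + HALF * children_pw


def ctw_block_prob(bits, depth, past=None):
    """Weighted root probability of `bits`; `past` needs at least `depth` bits."""
    history = list(past) if past is not None else [0] * depth
    root = Node()
    for bit in bits:
        ctw_update(root, history[::-1], bit, depth)
        history.append(bit)
    return root.pw
```

With `depth=0` the tree collapses to a single KT estimator. For any fixed depth and past, the root probabilities of all sequences of a given length sum to one, so the result can drive an arithmetic coder or, in Dasher's case, the box sizes.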

CTW: Implementation • Current implementation • Ratio of block probabilities stored in each node • Efficient but patented • Develop a new implementation • Use only integer arithmetic, avoid divisions • Represent both block probabilities as fractions • Ensure denominators equal by cross-multiplication • Store the numerators, scale if necessary
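The cross-multiplication and scaling steps can be sketched as follows. This is only an illustration of the idea on two generic fractions, not the implementation from the talk; the function names, the 16-bit limit, and the clamp that keeps a numerator from reaching zero are assumptions.

```python
def to_common_denominator(n1, d1, n2, d2):
    """Numerators of n1/d1 and n2/d2 over the shared denominator d1*d2.

    The shared denominator is left implicit: only the two numerators are kept.
    """
    return n1 * d2, n2 * d1


def scale_pair(x, y, max_bits=16):
    """Right-shift both numerators until the larger one fits in max_bits bits.

    Uses shifts only (no division); the clamp to 1 is an assumption that
    keeps a very small numerator from vanishing.
    """
    shift = max(0, max(x, y).bit_length() - max_bits)
    return max(x >> shift, 1), max(y >> shift, 1)
```

Because both numerators share one denominator, their ratio is preserved (up to rounding from the shifts), which is the quantity the weighting needs.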

CTW for Text • Binary decomposition • Adjust zero-order estimator
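Binary decomposition turns each text symbol into a sequence of binary decisions, so that a binary model can predict one decision at a time. The sketch below uses a plain most-significant-bit-first decomposition with heap-style node numbering; this is an assumption for illustration, since the transcript does not describe the decomposition tree actually used. `bit_prob` is a hypothetical callback that returns the probability of a 1 at a given decomposition node.

```python
def decompose(symbol, bits=8):
    """Split a symbol code into (node, bit) decisions, most significant bit first."""
    steps = []
    node = 1                       # root of the decomposition tree
    for i in range(bits - 1, -1, -1):
        bit = (symbol >> i) & 1
        steps.append((node, bit))
        node = 2 * node + bit      # descend to the child selected by this bit
    return steps


def symbol_prob(symbol, bit_prob, bits=8):
    """Symbol probability as the product of its binary decision probabilities."""
    p = 1.0
    for node, bit in decompose(symbol, bits):
        p_one = bit_prob(node)
        p *= p_one if bit else 1.0 - p_one
    return p
```

For instance, with `bits=3` the symbol 5 (binary 101) visits nodes 1, 3, and 6. In a CTW setting each decomposition node would typically own a separate binary context tree.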

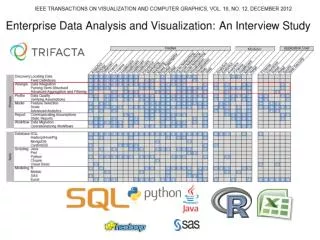

Results • Comparing PPM and CTW language models • Single file • Model trained with English text • Model trained with English text and user input

CTW: Model costs • What are model costs?

CTW: Model costs • Actual model and alphabet size fixed -> Optimize weight factor alpha • Per tree -> not enough parameters • Per node -> not enough adaptivity • Optimize alpha per depth of the tree
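For reference, a depth-dependent weight factor can be written as below. The assignment of alpha to the estimator term (rather than to the product term) is an assumed convention; the transcript does not show which one the talk uses. Standard CTW is the special case alpha_d = 1/2 at every depth.

```latex
P_w^s =
\begin{cases}
  P_e^s, & \text{if } s \text{ is at maximum depth } D,\\[4pt]
  \alpha_d \, P_e^s + (1 - \alpha_d) \prod_{c} P_w^{cs}, & \text{if } s \text{ is at depth } d < D,
\end{cases}
\qquad
P_w^s \ge \alpha_d \, P_e^s,
\quad
P_w^s \ge (1 - \alpha_d) \prod_{c} P_w^{cs}.
```

The two inequalities show where model costs come from: stopping at a node costs at most -log2(alpha_d) bits and splitting it costs at most -log2(1 - alpha_d) bits, so the choice of alpha_d trades the cost of small models against the cost of deep ones.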

CTW: Model costs • Exclusion: only use the betas of the actual model • Iterative process • Convergent? • Approximation: to find the actual model, use alpha = 0.5

CTW: Model costs • Compression of an input sequence • Model costs significant, especially for short sequences • No decrease by optimizing alpha per depth?

CTW: Model costs • Maximize probability in the root, instead of the probability per depth • Exclusion based on alpha = 0.5 almost optimal

CTW: Model costs • Results in Dasher scenario: • Trained model • Negative effect if no user text is available • Trained with concatenated user text • Small positive effect if user text is added to the training text and is very similar to it

Conclusions • New CTW implementation • Only integer arithmetic • Avoids patented techniques • New decomposition tree structure • Dasher language model based on CTW • Predictions 6 percent more accurate than PPM-D • Decreasing the model costs • Only insignificant decrease possible with our method

Future work • Make CTW suitable for MobileDasher • Decrease memory usage • Decrease number of computations • Combine language models • Select locally best model, or weight models together • Combine languages in 1 model • Models differ in structure or in parameters?

Thank you for your attention. Ask away!

CTW: Implementation • Store the numerators of the block probabilities