REFERENCE ARCHITECTURE

ASHISH CHHHONKAR (IIS2013003), SOUMAVA ROY (IIS2013001), BRIJRAJ SINGH (IIS2013015), UTKARSH AGRAWAL (IIS2013004)


WHAT IS REFERENCE ARCHITECTURE?

The purpose of a reference architecture is to stimulate design, control and software engineers to organize their knowledge and activities in a way conducive to using the theoretical fundamentals and theoretical approaches.

EVOLUTION OF RCS

The RCS (Real-time Control System) architecture has been implemented in a number of versions over the last 20 years at NIST.
• NIST RCS was first implemented by A. Barbera for laboratory robotics in the mid-1970s.
• It was adapted by A. Barbera and others for manufacturing control in the NIST Automated Manufacturing Research Facility during the early 1980s.
• Since 1986, RCS has been implemented for a number of additional applications, including the Multiple Autonomous Undersea Vehicle (MAUV) project of NBS/DARPA.

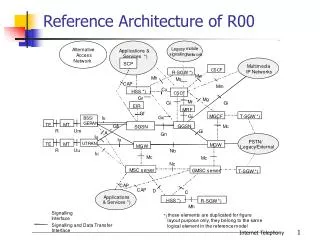

REFERENCE ARCHITECTURE (block diagram)

Modules: SENSORY PROCESSING (SP), WORLD MODEL (WM), VALUE JUDGEMENT (VJ), BEHAVIOR GENERATION (BG).
• Input arrives from the sensor; output goes to the actuator.
• WM sends the predicted input to SP.
• VJ takes the expected value from WM and checks the validity of the sensory processing input.
• After the validation check, the world model is created or updated.
• BG makes the plan according to the states of the world model.
• BG returns the prepared plans to the world model.
• WM creates a future model according to the plans and sends this model to VJ.
• VJ replies with a value to WM.
• VJ checks the feasibility of the plan and sends a command to BG.

The Working Robot

Objective: To find the table in the room and bring it to the front.

The robot is given its objective by a human: "Bring the table from the back of the room to the front". The robot then divides this objective into various subtasks at the level of behavior generation.

BRING THE TABLE TO THE FRONT
• To confirm presence in the room
• Is the table there?
• Rotate head to see
• Rotate on leg to turn
• Walk
• To hold
• To pick up
• To push

Assumptions:
1. The robot is standing at the center of the room.
2. The table is behind the robot, against the wall of the room.
3. There are no obstacles in the room.

Steps:
• The robot's camera takes an image of the room.
• The camera sends the image to sensory processing (SP).
• The world model (WM) sends the predicted input, i.e. the room environment, to sensory processing.
• Sensory processing correlates the two inputs and sends the refined image to value judgment (VJ).

• VJ now checks whether this image matches the expected image (an image of the room's interior).
• If it does not, VJ informs the WM that the image is bad and should not be accepted, and the WM will not use it to update its model.
• Otherwise the world model accepts the image to prepare the room model.
• This room model is then sent to BG.

• BG then generates the plan based on the model.
• The executor of BG checks whether the subtask is completed (by comparing the plan with the desired state).
• If it is completed, the next subtask is started.
• Suppose the table is not found in this image. BG then makes a plan to look for the table (rotate head) and sends this plan to the WM.
• Using this plan, the WM creates a simulated new model.
• This predicted future model is again sent to VJ to be evaluated on the basis of cost, attractiveness and feasibility, to check whether moving the head would help in finding the table.

• If the plan is accepted, it is transferred to BG.
• BG directs the actuators to rotate the head according to the plan.
• Sensory processing then perceives a new image of the room and forwards it to VJ.
• If VJ finds it acceptable, it confirms this to BG.
• BG compares this with its desired state and, if it has been achieved, moves to the next subtask.
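The walkthrough above can be sketched as one pass through the SP, VJ, WM and BG modules. This is only a toy illustration, not the RCS implementation: every name here (`WorldModel`, `sensory_processing`, `vj_accepts_image`, `vj_accepts_plan`, `run_cycle`) is hypothetical, and "images" are assumed to be small dictionaries of features rather than real camera data.

```python
# Toy sketch of one SP -> VJ -> WM -> BG cycle from the walkthrough.
# All names and the dictionary-based "images" are hypothetical.

def sensory_processing(raw_image, predicted):
    """Correlate the camera image with the WM prediction (toy: keep shared keys)."""
    return {k: v for k, v in raw_image.items() if k in predicted}

def vj_accepts_image(refined, expected_keys):
    """VJ accepts the image only if it looks like the expected room interior."""
    return set(refined) == set(expected_keys)

def vj_accepts_plan(simulated_model):
    """VJ accepts a plan if the simulated future model contains the table."""
    return simulated_model.get("table_visible", False)

class WorldModel:
    def __init__(self):
        self.state = {"walls": 4, "table_visible": False}

    def predict_input(self):
        return dict(self.state)

    def update(self, refined):
        self.state.update(refined)

    def simulate(self, plan):
        # Toy prediction: rotating the head brings the table into view.
        future = dict(self.state)
        if plan == "rotate_head":
            future["table_visible"] = True
        return future

def run_cycle(wm, camera_image):
    refined = sensory_processing(camera_image, wm.predict_input())
    if not vj_accepts_image(refined, wm.state.keys()):
        return "image_rejected"        # WM does not update its model
    wm.update(refined)
    if wm.state["table_visible"]:
        return "subtask_done"          # BG moves to the next subtask
    plan = "rotate_head"               # BG proposes a plan
    if vj_accepts_plan(wm.simulate(plan)):
        return plan                    # BG sends the plan to the actuators
    return "replan"
```

Calling `run_cycle` repeatedly with fresh camera images mimics the loop in which the robot looks, rotates its head, and looks again until the subtask is complete.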

Sensory Processing (SP) modules

• Process data from visual, auditory, tactile, proprioceptive, taste, or smell sensors.
• Contain filtering, masking, differencing, correlation, matching, and recursive estimation algorithms, as well as feature detection and pattern recognition algorithms.
• Interactions between WM and SP modules can generate a variety of filtering and detection processes such as Kalman filtering and recursive estimation, Fourier transforms, and phase-locked loops.
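One way to read the WM/SP interaction is as a predict-and-correct loop: the world model supplies a predicted value, and sensory processing corrects it with a measurement. The sketch below is a scalar Kalman filter under simplifying assumptions (a nearly constant quantity, known noise variances); the function names and default parameters are illustrative only.

```python
def kalman_step(x_pred, p_pred, z, r):
    """One scalar Kalman update.

    x_pred, p_pred: predicted value and its variance (from the world model)
    z, r: sensor measurement and its noise variance
    """
    k = p_pred / (p_pred + r)          # Kalman gain
    x_new = x_pred + k * (z - x_pred)  # correct the prediction with the residual
    p_new = (1.0 - k) * p_pred         # uncertainty shrinks after the update
    return x_new, p_new

def estimate(measurements, x0=0.0, p0=1.0, r=0.5, q=0.0):
    """Recursively estimate a (nearly) constant quantity; q is process noise."""
    x, p = x0, p0
    for z in measurements:
        p = p + q                      # predict step for a constant-state model
        x, p = kalman_step(x, p, z, r)
    return x, p
```

Each new measurement refines the estimate without storing the earlier ones, which is what makes the estimation recursive.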

Vision system SP modules process images to detect brightness, color, and range discontinuities, optical flow, stereo disparity, and utilize a variety of signal detection and pattern recognition algorithms to analyze scenes and compute information needed for manipulation, locomotion, and spatio-temporal reasoning.

BEHAVIOR GENERATION

• Behavior is defined as the ordered set of consecutive-concurrent changes (in time) of the states registered at the output of a system.
• Behavior generation is the process of planning and executing actions designed to achieve the desired goals.
• These actions include feed-forward and feedback control actions.
• A behavioral goal is a desired result that an action is designed to achieve or maintain.
• Thus the behavior generation module receives a goal from the upper level of the hierarchy.

Why is BG Required?

It fulfills the following requirements:
• Planning to solve problems of the real world.
• Formulation of algorithms.
• Execution of actions (virtual actuator).
• Assessment of the capability or feasibility of any task to be done.

Behavior Generation Modules

• Planner: Its main function is to select a plan (a plan is a series of functions that allow the system to achieve the desired goals). It has two submodules: Job Assignment and Scheduler.
• Executor: Its main function is to transform the plans into a set of output commands to the adjacent level.

Job Assignment Sub module

• It is responsible for spatial task decomposition.
• It segments the input task command into N spatially distinct jobs to be performed by N physically distinct subsystems.
• It assigns tools and allocates physical resources to each of the subordinate levels for performing their assigned jobs.
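Spatial decomposition can be illustrated with a small matching routine. This is a minimal sketch under assumed data structures: the task is a dictionary of jobs and the capability each needs, and each subsystem advertises a set of capabilities. The function name `assign_jobs` and the capability labels are hypothetical.

```python
def assign_jobs(task, subsystems):
    """Assign each job to a distinct subsystem that has the required capability.

    task: dict mapping job name -> required capability
    subsystems: dict mapping subsystem name -> set of capabilities
    """
    assignment = {}
    free = dict(subsystems)            # subsystems not yet given a job
    for job, needed in task.items():
        for name, caps in list(free.items()):
            if needed in caps:
                assignment[job] = name
                del free[name]         # one job per physical subsystem
                break
        else:
            raise ValueError(f"no free subsystem can perform {job!r}")
    return assignment
```

For the table-fetching robot, the jobs might be "look", "grasp" and "move", handled by the head, arm and base respectively.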

Scheduler Sub module

• It is responsible for decomposing the job assigned to its subsystem into a temporal sequence of planned subtasks.
• Selection of subtasks can be rule based, or it can be performed by search-based algorithms that search the space of possible actions.
• The scheduler also handles constraints and coordination requirements among subsystems.
• If conflicts are found, a constraint relaxation algorithm may be applied, or the scheduler may report a failure to the job assignment submodule or to the next higher level BG module.
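A search-based scheduler can be sketched as a breadth-first search over the space of possible actions. The action table below is an assumption made up for the table-fetching example (names, preconditions and effects are all hypothetical), and breadth-first search is just one simple choice of search algorithm.

```python
from collections import deque

# Hypothetical actions: name -> (preconditions, facts added)
ACTIONS = {
    "rotate_head": (set(), {"table_seen"}),
    "walk_to_table": ({"table_seen"}, {"at_table"}),
    "hold_table": ({"at_table"}, {"holding"}),
    "push_to_front": ({"holding"}, {"table_at_front"}),
}

def schedule(start, goal, actions=ACTIONS):
    """Breadth-first search for the shortest sequence of subtasks reaching goal."""
    start = frozenset(start)
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        state, plan = queue.popleft()
        if goal <= state:              # every goal fact holds in this state
            return plan
        for name, (pre, add) in actions.items():
            if pre <= state:           # action is applicable
                nxt = frozenset(state | add)
                if nxt not in visited:
                    visited.add(nxt)
                    queue.append((nxt, plan + [name]))
    return None  # no schedule found: report failure to the level above
```

Returning `None` corresponds to the case where the scheduler reports a failure to the job assignment submodule or the next higher level.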

Executor Module

• Its main function is the successful execution of the plans generated by the scheduler within the respective planner.
• The planner issues commands to its respective executor modules, which in turn provide the inputs to the actuators.
• The executor constantly measures the current state of the world, computes the difference between the current world state and the desired planned subgoal state, and issues a sub-command to nullify the difference.
• Actions performed by the executor result in changes in the world, which are again sensed by the sensors and the sensory processing module; these sensed values are used to update the world model.
• This entire process repeats until the desired state is reached and the final goal is achieved.
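The executor's measure-compare-correct cycle resembles a simple feedback loop. The sketch below assumes a one-dimensional state (for example a head angle in degrees) and a proportional correction; the `gain`, `tolerance` and `max_steps` parameters are illustrative assumptions, not part of the source.

```python
def execute(current, desired, gain=0.5, tolerance=1e-3, max_steps=100):
    """Repeatedly issue a command proportional to the error until it is nullified.

    Returns the final state and the number of sub-commands issued.
    """
    steps = 0
    while abs(desired - current) > tolerance and steps < max_steps:
        command = gain * (desired - current)  # sub-command to reduce the difference
        current = current + command           # toy world: command is applied exactly
        steps += 1
    return current, steps
```

For example, `execute(0.0, 90.0)` drives the state toward 90 in progressively smaller corrections and stops once the remaining error is within the tolerance.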

Working stages of BG

Planning Stage:
• The set of participating agents is selected.
• Schedules of motion are assigned to the selected agents.
• The command strings are computed for feed-forward control (FFC).

Execution Stage:
• The sequence of FFC commands is encoded and adjusted.
• The error of functioning is evaluated.
• The compensation component of the input is computed for feedback control.
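The two stages can be illustrated in one dimension: the planning stage turns waypoints into feed-forward commands, and the execution stage adds a feedback term that compensates for the observed error. This is a sketch under assumptions (a constant additive `disturbance`, a proportional gain `kp`, and the function names are all hypothetical).

```python
def feedforward_commands(waypoints):
    """Planning stage: each command is the planned displacement between waypoints."""
    return [b - a for a, b in zip(waypoints, waypoints[1:])]

def run(waypoints, disturbance=0.0, kp=1.0):
    """Execution stage: apply each FFC command plus a feedback compensation term."""
    position = waypoints[0]
    errors = []
    for target, ffc in zip(waypoints[1:], feedforward_commands(waypoints)):
        planned_start = target - ffc          # where the plan assumed we would be
        error = planned_start - position      # error of functioning so far
        command = ffc + kp * error            # feed-forward + feedback compensation
        position = position + command + disturbance
        errors.append(target - position)
    return position, errors
```

With `kp=0.0` the disturbance accumulates from step to step, while with `kp=1.0` the previous step's error is corrected and the remaining error stays bounded by a single step's disturbance.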

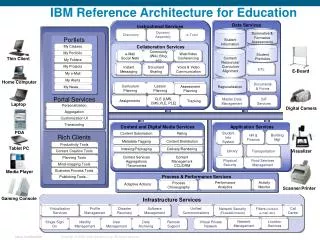

WORLD MODELLING MODULES

• Model the state space of the problem domain.
• Contain information storage.
• Contain a retrieval mechanism.
• Contain algorithms for transforming information from one coordinate system to another.
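A common instance of transforming information between coordinate systems is converting a point seen in the robot's own frame into the world (room) frame. The sketch below assumes a planar robot with pose `(x, y, heading)`; the function names are illustrative.

```python
import math

def robot_to_world(point, robot_pose):
    """Transform (x, y) from the robot frame to the world frame.

    robot_pose = (x, y, heading in radians) of the robot in the world frame.
    """
    px, py = point
    rx, ry, theta = robot_pose
    wx = rx + px * math.cos(theta) - py * math.sin(theta)
    wy = ry + px * math.sin(theta) + py * math.cos(theta)
    return wx, wy

def world_to_robot(point, robot_pose):
    """Inverse transform: world frame back to the robot frame."""
    wx, wy = point
    rx, ry, theta = robot_pose
    dx, dy = wx - rx, wy - ry
    px = dx * math.cos(theta) + dy * math.sin(theta)
    py = -dx * math.sin(theta) + dy * math.cos(theta)
    return px, py
```

A table detected one metre straight ahead of a robot standing at (2, 3) and facing along the world y-axis ends up at roughly (2, 4) in the room map.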

• It uses dynamic models to generate expectations and predict the results of current and future actions.
• It may contain recursive estimation algorithms and processes that compute lists of attributes from images, graphics engines that generate images from symbolic lists, and recognition and detection algorithms that perform the pattern matching operations necessary to verify the identification of features, surfaces, objects, and groups.
• It maintains a knowledge database (KD), acts as a question answering system, and uses information from the KD to predict or simulate the future.

The world model functions:
• To create a knowledge database (map) and keep it current and consistent:
  • It manages the data gathered by sensory processing.
  • The world model process also provides functions to update and fuse data and to manage the map.
• To generate predictions of expected sensory input based on the current and future states of the world.
• The world model constructs and maintains all the information for path planning.

• The primary use of the model data is to plan safe and efficient paths.
• The path planning module uses the map to select a locally optimal path from the current position to the commanded goal.
• The planner heuristically selects a path.
• The path is updated (replanned) frequently.
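Heuristic path selection on a map can be illustrated with A* search on a small occupancy grid. The source does not say which planner is used, so A* with a Manhattan-distance heuristic is a stand-in chosen for the example; the grid encoding (1 marks an obstacle) and the function name `plan_path` are assumptions.

```python
import heapq

def plan_path(grid, start, goal):
    """A* on a 4-connected grid; grid[r][c] == 1 marks an obstacle."""
    def h(cell):
        # Manhattan distance to the goal, used as the heuristic
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    frontier = [(h(start), 0, start)]
    came_from = {start: None}
    cost = {start: 0}
    while frontier:
        _, g, cell = heapq.heappop(frontier)
        if cell == goal:
            path = []
            while cell is not None:    # walk back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > cost[cell]:
            continue                   # stale queue entry
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < len(grid) and 0 <= nc < len(grid[0]) and grid[nr][nc] == 0:
                new_cost = g + 1
                if new_cost < cost.get(nxt, float("inf")):
                    cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(frontier, (new_cost + h(nxt), new_cost, nxt))
    return None  # no path on the current map
```

Replanning then amounts to calling `plan_path` again from the current position whenever the map in the world model changes.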

VALUE JUDGEMENT

The value judgment module computes the cost, benefit, risk and expected payoff of plans. It assigns value to objects, events and situations. It decides what is important or trivial, what is rewarding or punishing, and what degree of confidence to assign to entries in the world model. It uses Bayesian and Dempster-Shafer statistical models for evaluation.
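As an illustration of the Bayesian case, the degree of confidence in a world-model entry can be updated with Bayes' rule each time an observation supports it. The function name and the example likelihoods are assumptions chosen for the sketch; at least one of the two likelihood terms must be non-zero for the division to be defined.

```python
def update_confidence(prior, p_obs_if_true, p_obs_if_false):
    """Bayes' rule: posterior probability that a world-model entry is correct
    after one observation that supports it."""
    evidence = p_obs_if_true * prior + p_obs_if_false * (1.0 - prior)
    return p_obs_if_true * prior / evidence
```

Starting from a prior of 0.5, an observation that is much more likely when the entry is true than when it is false raises the confidence, and repeated supporting observations raise it further.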

Value Judgement (cont.)

• It evaluates both the observed state of the world and the predicted results of hypothesized plans by computing their costs, risks and benefits.
• It computes the probability of correctness and assigns believability and uncertainty parameters to state variables.
• It provides the basis for decision making, i.e. for choosing one action instead of another.
• Without VJ, any biological creature would soon be destroyed and any artificial intelligent system would soon be disabled by its own inappropriate actions.
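Choosing one action instead of another can be sketched by scoring each hypothesized plan and picking the best. The payoff formula below (benefit weighted by success probability, minus cost and expected loss) is an assumed example, not a formula from the source, and the field names `p_success`, `benefit`, `cost` and `risk` are hypothetical.

```python
def expected_payoff(plan):
    """Benefit weighted by success probability, minus cost and expected loss."""
    p = plan["p_success"]
    return p * plan["benefit"] - plan["cost"] - (1.0 - p) * plan["risk"]

def choose_plan(plans):
    """Return the name of the plan with the highest expected payoff."""
    return max(plans, key=lambda name: expected_payoff(plans[name]))
```

For the robot example, rotating the head is cheap, so it can score higher than walking around the room even if walking is slightly more likely to reveal the table.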

Why do we need VJ?

• Since intelligent systems are goal oriented, their functioning requires them to know, at each step, what is better and what is worse.
• We will try to determine how these concepts fit into a more general paradigm of an intelligent system.
• To fulfill the above requirements, a value judgment module is included in the architecture of an artificial intelligent system.

Working of VJ

• The VJ module produces evaluations that can be represented as value state variables.
• These can be assigned to the attribute lists in entity frames of objects, persons, events, situations and regions of space.
• They can also be assigned to the attribute lists of plans and actions in task frames.
• Hence VJ provides criteria for decisions about which course of action to take.

Some bases of comparison

• Goodness/badness
• Pleasure/pain
• Success observed/expected
• Hope
• Frustration
• Love/hate
• Comfort
• Fear/joy
• Despair/happiness
• Confidence/uncertainty

Applications of the RCS model

• Machine workstation
• Space station telerobotic servicer
• Architecture for coal mining
• Submarine maneuvering system
• Remote driving