Inside-Outside Reestimation from Partially Bracketed Corpora for Learning SCFGs


Presentation Transcript

Inside-Outside Reestimation from Partially Bracketed Corpora
F. Pereira and Y. Schabes, ACL 30, 1992
CS730b, 김병창, NLP Lab., October 29, 1998

Contents
• Motivation
• Partially Bracketed Text
• Grammar Reestimation
• The Inside-Outside Algorithm
• The Extended Algorithm
• Complexity
• Experimental Evaluation
  • Inferring the Palindrome Language
  • Experiments on the ATIS Corpus
• Conclusions and Further Work
NLP Lab., POSTECH

Motivation I
• A very simple method for learning SCFGs [Charniak]:
  • Generate all possible SCFG rules.
  • Assign some initial probabilities.
  • Run the training algorithm on a sample of raw text.
  • Remove the rules whose probabilities have become zero.
• Difficulties in using SCFGs:
  • Time complexity: $O(n^3|w|^3)$ per sentence, where $n$ is the number of nonterminals and $|w|$ is the length of the training sentence $w$ (cf. $O(s^2|w|)$ for training an HMM with $s$ states).
  • Bad convergence properties: the larger the number of nonterminals, the worse they get.
  • Grammars with linguistically sensible constituents are inferred only by chance.

Motivation II
• An extension of the Inside-Outside algorithm
  • infers grammars from a partially parsed (bracketed) corpus.
• Advantages:
  • the constituent boundary information in the corpus is reflected in the inferred grammar;
  • fewer iterations are needed for training;
  • better time complexity.

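Partially Bracketed Text
• Example
  • (((VB(DT NNS(IN((NN)(NN CD)))))).)
  • (((List (the fares(for((flight)(number 891)))))).)
• Notation
  • Corpus $C = \{ c \mid c = (w, B) \}$, where $w$ is a string and $B$ is a bracketing of $w$.
  • $w = w_1 w_2 \cdots w_i w_{i+1} \cdots w_j \cdots w_{|w|}$
  • The pair $(i,j)$ delimits the substring ${}_iw_j = w_{i+1} \cdots w_j$.
  • Consistent: no two spans in a bracketing cross (overlap without one containing the other).
  • Compatible: two bracketings are compatible if their union is consistent.
  • Valid: a span is valid for a bracketing if it is compatible with that bracketing.
• Span of a symbol in a derivation $\sigma_0 \Rightarrow \sigma_1 \Rightarrow \cdots \Rightarrow \sigma_m = w$:
  • if $j = m$, the span of $w_i$ in $\sigma_j$ is $(i-1, i)$;
  • if $j < m$, $\sigma_j = \alpha A \beta$ and $\sigma_{j+1} = \alpha X_1 \cdots X_k \beta$, and the spans of $X_1, \ldots, X_k$ in $\sigma_{j+1}$ are $(i_1, j_1), \ldots, (i_k, j_k)$, then the span of $A$ in $\sigma_j$ is $(i_1, j_k)$.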
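The three span relations can be checked directly. The following is a minimal Python sketch, assuming spans are `(i, j)` pairs of string positions and a bracketing is a Python set of such pairs; the helper names `crosses`, `consistent`, `compatible` and `valid` are hypothetical, chosen only for illustration.

```python
from itertools import combinations

Span = tuple[int, int]  # (i, j) delimits the substring w_{i+1} ... w_j


def crosses(a: Span, b: Span) -> bool:
    """True if spans a and b overlap without one containing the other."""
    (i, j), (k, l) = a, b
    return i < k < j < l or k < i < l < j


def consistent(bracketing: set[Span]) -> bool:
    """A bracketing is consistent if no two of its spans cross."""
    return not any(crosses(a, b) for a, b in combinations(bracketing, 2))


def compatible(b1: set[Span], b2: set[Span]) -> bool:
    """Two bracketings are compatible if their union is consistent."""
    return consistent(b1 | b2)


def valid(span: Span, bracketing: set[Span]) -> bool:
    """A span is valid for a bracketing if it is compatible with it."""
    return compatible({span}, bracketing)
```

For the example sentence "List the fares for flight number 891 .", the bracketing reads off as `{(4, 5), (5, 7), (4, 7), (3, 7), (1, 7), (0, 7), (0, 8)}`; under it, `valid((1, 3), brackets)` ("the fares") holds, while `valid((2, 4), brackets)` ("fares for") does not, because it crosses `(3, 7)`.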

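Grammar Reestimation
• Uses of a reestimation algorithm:
  • refining the parameter estimates of an SCFG derived by other means;
  • inferring a grammar from scratch.
• Grammar inference
  • Given a set $N$ of nonterminals and a set $\Sigma$ of terminals, with $n = |N|$, $t = |\Sigma|$, $N = \{A_1, \ldots, A_n\}$ and $\Sigma = \{b_1, \ldots, b_t\}$.
  • A CNF SCFG over $N$ and $\Sigma$ has $n^3 + nt$ probabilities:
    • $B_{p,q,r}$ on $A_p \to A_q A_r$: $n^3$
    • $U_{p,m}$ on $A_p \to b_m$: $nt$
  • Meaning of the rule probabilities: by context-freeness, a rule's probability is the probability that its left-hand nonterminal is rewritten with that rule, regardless of context.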
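To make the $n^3 + nt$ count concrete, here is a small Python sketch that draws an initial CNF grammar from scratch. Drawing uniform random weights and normalizing them per left-hand side is an assumption (the slides only say "some initial probabilities"); the function names and the `B[p][q][r]` / `U[p][m]` layout are hypothetical. Each row is normalized so that, for every $p$, $\sum_{q,r} B_{p,q,r} + \sum_m U_{p,m} = 1$.

```python
import random


def num_parameters(n: int, t: int) -> int:
    """Number of rule probabilities in a CNF SCFG: n^3 binary plus n*t lexical."""
    return n ** 3 + n * t


def random_cnf_grammar(n: int, t: int, seed: int = 0):
    """Random initial probabilities for a CNF SCFG with nonterminals A_0..A_{n-1}
    and terminals b_0..b_{t-1} (an illustrative initialization, not the paper's).

    Returns (B, U) with B[p][q][r] = P(A_p -> A_q A_r) and U[p][m] = P(A_p -> b_m).
    For every p, the n*n binary and t lexical probabilities sum to 1.
    """
    rng = random.Random(seed)
    B, U = [], []
    for _ in range(n):
        # A small constant keeps every rule strictly positive at the start.
        weights = [rng.random() + 1e-6 for _ in range(n * n + t)]
        total = sum(weights)
        probs = [x / total for x in weights]
        B.append([probs[q * n:(q + 1) * n] for q in range(n)])
        U.append(probs[n * n:])
    return B, U
```

With 5 nonterminals and 2 terminals this yields `num_parameters(5, 2) == 135` rules, and with 15 nonterminals and 48 POS tags `num_parameters(15, 48) == 4095`, matching the initial grammar sizes reported in the experiments.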

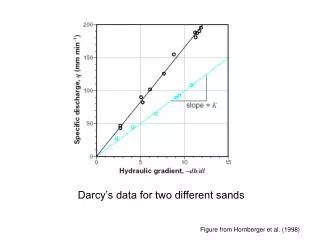

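The Inside-Outside Algorithm
• Definition of inner ($e$) and outer ($f$) probabilities for a sentence $w_1 \cdots w_T$:
  • Inner probability: $e(s,t,i) = P(i \Rightarrow^* w_s \cdots w_t)$
  • Outer probability: $f(s,t,i) = P(S \Rightarrow^* w_1 \cdots w_{s-1}\, i\, w_{t+1} \cdots w_T)$
Special thanks to ohwoog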
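The inner and outer probabilities can be computed with the standard CYK-style recursions. Below is a minimal Python sketch for a CNF grammar, assuming the slide's definitions $e(s,t,i) = P(i \Rightarrow^* w_s \cdots w_t)$ and $f(s,t,i) = P(S \Rightarrow^* w_1 \cdots w_{s-1}\, i\, w_{t+1} \cdots w_T)$ but with 0-based, inclusive positions. The function name `inside_outside`, the nested-list layout of `B`, and the per-nonterminal word dictionaries in `U` are our own assumptions.

```python
def inside_outside(words, B, U, start=0):
    """Inner/outer probabilities for a CNF SCFG (sketch; words must be non-empty).

    B[i][j][k] = P(A_i -> A_j A_k); U[i] maps a word to P(A_i -> word).
    e[s][t][i] = P(A_i =>* words[s..t])                    (inner)
    f[s][t][i] = P(S =>* words[:s] A_i words[t+1:])        (outer)
    A_start plays the role of S. Positions are 0-based and inclusive.
    """
    T, n = len(words), len(B)
    e = [[[0.0] * n for _ in range(T)] for _ in range(T)]
    f = [[[0.0] * n for _ in range(T)] for _ in range(T)]

    # Inner probabilities, bottom-up: single words first, then longer spans.
    for s, word in enumerate(words):
        for i in range(n):
            e[s][s][i] = U[i].get(word, 0.0)
    for length in range(2, T + 1):
        for s in range(T - length + 1):
            t = s + length - 1
            for i in range(n):
                e[s][t][i] = sum(B[i][j][k] * e[s][r][j] * e[r + 1][t][k]
                                 for r in range(s, t)
                                 for j in range(n) for k in range(n))

    # Outer probabilities, top-down: the whole sentence is derived from S.
    f[0][T - 1][start] = 1.0
    for length in range(T - 1, 0, -1):
        for s in range(T - length + 1):
            t = s + length - 1
            for j in range(n):
                total = 0.0
                for i in range(n):
                    for k in range(n):
                        # A_j is the left child of A_i over s..q; sibling A_k covers t+1..q.
                        for q in range(t + 1, T):
                            total += f[s][q][i] * B[i][j][k] * e[t + 1][q][k]
                        # A_j is the right child of A_i over p..t; sibling A_k covers p..s-1.
                        for p in range(s):
                            total += f[p][t][i] * B[i][k][j] * e[p][s - 1][k]
                f[s][t][j] = total
    return e, f
```

As a sanity check, for the one-nonterminal grammar S → S S (0.3), S → a (0.7) and the sentence "a a a", the sentence probability `e[0][2][0]` is 0.06174, and for every position s the product `e[s][s][0] * f[s][s][0]` equals that same value, since each word is covered by exactly one preterminal in every derivation.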

The Extended Algorithm
• Compatibility function: $\hat{c}(i,k) = 1$ if $(i,k)$ is a valid span for $B$, and $0$ otherwise.
• Extended algorithm (see Table 1):
  • Inside probabilities: (1), (2); (2) uses the compatibility function.
  • Outside probabilities: (3), (4); (4) uses the compatibility function.
  • Parameter reestimation: (5), (6); same as in the original algorithm.
• Stopping criterion
  • Stop when the decrease in the cross-entropy estimate between iterations becomes negligible.
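A single reestimation step of the extended algorithm can be sketched in Python as follows. We assume the standard bracketed formulation: a compatibility function that is 1 when span $(i,k)$ crosses no bracket of the sentence's bracketing and 0 otherwise, applied to the binary-rule inside step and the outside step, with the reestimation formulas unchanged. The comments label steps (1) to (6) on that assumption, since Table 1 is not reproduced here. The names `crosses` and `bracketed_em_step`, the data layout, and the choice to skip sentences with no compatible derivation are all hypothetical.

```python
import math


def crosses(a, b):
    """True if spans a = (i, j) and b = (k, l) overlap without nesting."""
    (i, j), (k, l) = a, b
    return i < k < j < l or k < i < l < j


def bracketed_em_step(corpus, B, U):
    """One bracketed inside-outside reestimation step (sketch).

    corpus: list of (words, brackets); brackets is a set of spans (i, k), 0 <= i < k <= len(words).
    B[p][q][r] = P(A_p -> A_q A_r); U[p] maps a word to P(A_p -> word); A_0 is the start symbol.
    Returns (new_B, new_U, total_log_prob).
    """
    n = len(B)
    num_B = [[[0.0] * n for _ in range(n)] for _ in range(n)]
    num_U = [{} for _ in range(n)]
    den = [0.0] * n
    total_log_prob = 0.0
    for words, brackets in corpus:
        T = len(words)
        # Compatibility function: True if span (i, k) crosses no bracket.
        valid = [[not any(crosses((i, k), b) for b in brackets) for k in range(T + 1)]
                 for i in range(T + 1)]
        e = [[[0.0] * n for _ in range(T + 1)] for _ in range(T + 1)]
        f = [[[0.0] * n for _ in range(T + 1)] for _ in range(T + 1)]
        for i, word in enumerate(words):          # (1) lexical rules
            for p in range(n):
                e[i][i + 1][p] = U[p].get(word, 0.0)
        for length in range(2, T + 1):            # (2) binary rules, valid spans only
            for i in range(T - length + 1):
                k = i + length
                if valid[i][k]:
                    for p in range(n):
                        e[i][k][p] = sum(B[p][q][r] * e[i][j][q] * e[j][k][r]
                                         for j in range(i + 1, k)
                                         for q in range(n) for r in range(n))
        P = e[0][T][0]
        if P == 0.0:
            continue  # no derivation is compatible with the bracketing
        total_log_prob += math.log(P)
        f[0][T][0] = 1.0                           # (3)
        for length in range(T - 1, 0, -1):        # (4) valid spans only
            for i in range(T - length + 1):
                k = i + length
                if not valid[i][k]:
                    continue
                for p in range(n):
                    total = 0.0
                    for q in range(n):
                        for r in range(n):
                            for j in range(i):              # A_p is the right child over (j, k)
                                total += f[j][k][q] * B[q][r][p] * e[j][i][r]
                            for j in range(k + 1, T + 1):   # A_p is the left child over (i, j)
                                total += f[i][j][q] * B[q][p][r] * e[k][j][r]
                    f[i][k][p] = total
        # Expected counts for (5) and (6), each weighted by 1 / P.
        for i in range(T):
            for k in range(i + 1, T + 1):
                for p in range(n):
                    den[p] += e[i][k][p] * f[i][k][p] / P
                    for j in range(i + 1, k):
                        for q in range(n):
                            for r in range(n):
                                num_B[p][q][r] += (B[p][q][r] * e[i][j][q] * e[j][k][r]
                                                   * f[i][k][p] / P)
        for i, word in enumerate(words):
            for p in range(n):
                num_U[p][word] = num_U[p].get(word, 0.0) + U[p].get(word, 0.0) * f[i][i + 1][p] / P
    new_B = [[[num_B[p][q][r] / den[p] if den[p] > 0 else B[p][q][r] for r in range(n)]
              for q in range(n)] for p in range(n)]
    new_U = [{w: c / den[p] for w, c in num_U[p].items()} if den[p] > 0 else dict(U[p])
             for p in range(n)]
    return new_B, new_U, total_log_prob
```

In use, one would call the step repeatedly and stop once the per-word cross-entropy estimate, `-total_log_prob / number_of_words` (in nats here), stops decreasing appreciably between iterations. For example, with S → S S (0.5), S → a (0.5) and the bracketed sentence ((a a) a), only one derivation survives the compatibility check, so one step gives P(S → S S) = 0.4 and P(S → a) = 0.6.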

Complexity
• Original algorithm: $O(|w|^3)$ per sentence
  • Computing the inside probabilities, computing the outside probabilities, and reestimating the rule probabilities each take $O(|w|^3)$ per sentence.
• Extended algorithm: $O(|w|)$ in the best case
  • The best case is a full binary bracketing $B$ of a string $w$:
    • $B$ contains $O(|w|)$ spans;
    • each valid span $(i,k)$ has only one split point;
    • each valid span must be a member of $B$.
• Preprocessing
  • Enumerate the valid spans and their split points.
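The best-case argument can be checked on small examples. This Python sketch enumerates valid spans and split points naively (the enumeration itself is not linear-time; it only illustrates what the preprocessing step produces). The names `crosses`, `valid_spans` and `split_points` are hypothetical.

```python
def crosses(a, b):
    """True if spans a = (i, j) and b = (k, l) overlap without one containing the other."""
    (i, j), (k, l) = a, b
    return i < k < j < l or k < i < l < j


def valid_spans(T, brackets):
    """All spans (i, k), 0 <= i < k <= T, that cross no span in brackets."""
    return [(i, k) for i in range(T) for k in range(i + 1, T + 1)
            if not any(crosses((i, k), b) for b in brackets)]


def split_points(span, valid):
    """Split points j of span (i, k) such that both (i, j) and (j, k) are valid."""
    i, k = span
    valid = set(valid)
    return [j for j in range(i + 1, k) if (i, j) in valid and (j, k) in valid]
```

For four words with the full binary bracketing ((w1 w2)(w3 w4)), i.e. `{(0, 2), (2, 4), (0, 4)}`, only 7 spans (= 2·4 − 1) are valid instead of all 10, and the span `(0, 4)` has the single split point 2. In general, a full binary bracketing of $|w|$ words leaves $2|w| - 1$ valid spans, each with one split point, which is why the inside and outside passes become linear.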

Experimental Evaluation
• Two experiments:
  • artificial language: palindromes;
  • natural language: Penn Treebank (ATIS corpus).
• Evaluation
  • Bracketing accuracy: the proportion of phrases in the parses assigned by the inferred grammar that are compatible with the treebank bracketing.
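Bracketing accuracy can be sketched in Python as below, assuming each test sentence contributes the set of spans from its most likely parse and the reference treebank bracketing as a set of spans. Which spans are counted (for instance, whether single words are included) is an assumption here; the name `bracketing_accuracy` is hypothetical.

```python
def crosses(a, b):
    """True if spans a = (i, j) and b = (k, l) overlap without one containing the other."""
    (i, j), (k, l) = a, b
    return i < k < j < l or k < i < l < j


def bracketing_accuracy(parses, gold):
    """Fraction of parse spans that cross no span of the reference bracketing.

    parses: list of span sets, one per test sentence (from the grammar's best parse).
    gold: list of reference bracketings (span sets), aligned with parses.
    """
    total = compatible = 0
    for spans, ref in zip(parses, gold):
        for s in spans:
            total += 1
            if not any(crosses(s, b) for b in ref):
                compatible += 1
    return compatible / total if total else 0.0
```

For a parse with spans `{(0, 2), (0, 3)}` against the reference `{(1, 3)}`, the span `(0, 2)` crosses `(1, 3)` while `(0, 3)` contains it, so the accuracy is 0.5.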

Inferring the Palindrome Language
• $L = \{ww^R \mid w \in \{a,b\}^*\}$
• Initial grammar: 135 rules ($= 5^3 + 5 \cdot 2$: 5 nonterminals, 2 terminals)
• Training on 100 sentences
• Inferred grammar: a correct grammar for the palindrome language
• Bracketing accuracy: above 90% (100% in several cases)
  • With unbracketed training: 15% to 69%
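To see what "a correct palindrome grammar" within the 5-nonterminal budget can look like, here is a Python sketch with one hypothetical CNF grammar (our own example, not necessarily the grammar the experiment produced): S → A C | B D | A A | B B, C → S A, D → S B, A → a, B → b. A CYK recognizer checks that it accepts exactly the non-empty even-length palindromes; the empty string, although in $L$, cannot be derived in CNF without an ε-rule.

```python
# Hypothetical CNF grammar for non-empty strings w w^R over {a, b}.
BINARY = [("S", "A", "C"), ("S", "B", "D"), ("S", "A", "A"), ("S", "B", "B"),
          ("C", "S", "A"), ("D", "S", "B")]
LEXICAL = {"a": {"A"}, "b": {"B"}}


def accepts(s: str) -> bool:
    """CYK recognition: True if S derives the string s."""
    n = len(s)
    if n == 0:
        return False  # the CNF grammar has no epsilon rule
    # table[i][k] holds the nonterminals that derive s[i:k].
    table = [[set() for _ in range(n + 1)] for _ in range(n + 1)]
    for i, ch in enumerate(s):
        table[i][i + 1] = set(LEXICAL.get(ch, set()))
    for length in range(2, n + 1):
        for i in range(n - length + 1):
            k = i + length
            for j in range(i + 1, k):
                for lhs, left, right in BINARY:
                    if left in table[i][j] and right in table[j][k]:
                        table[i][k].add(lhs)
    return "S" in table[0][n]
```

For example, `accepts("abba")` and `accepts("abaaba")` hold, while `accepts("aba")` (odd length) and `accepts("abab")` do not.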

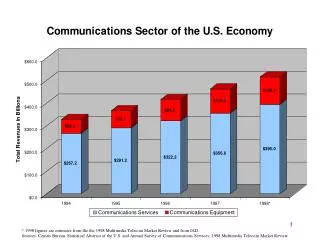

Experiments on the ATIS Corpus
• ATIS (Air Travel Information System) corpus: 770 sentences (7812 words)
  • 700 training sentences, 70 test sentences (901 words)
• Initial grammar: 4095 rules ($= 15^3 + 15 \cdot 48$)
  • 15 nonterminals, 48 terminal symbols (POS tags)
• Bracketing accuracy: 90.36% after 75 iterations
  • With unbracketed training: 37.35%
• Case (A)
  • (Delta flight number): not compatible
  • (the cheapest): linguistically wrong, due to lack of information
  • 16 incompatible constituents in GR
• Case (B)
  • fully compatible
  • 9 incompatible constituents in GR

Conclusions and Further Work
• Using a partially bracketed corpus can:
  • reduce the number of iterations needed for convergence;
  • find good solutions;
  • infer grammars whose constituent boundaries are linguistically reasonable;
  • reduce the time complexity (linear in the best case).
• Further extensions:
  • determine the sensitivity to
    • the initial probability assignments,
    • the training corpus,
    • missing or misplaced brackets;
  • handle larger terminal vocabularies.