### Understanding Boosting: A Comprehensive Overview of the Algorithm and Its Applications in Machine Learning ###

Boosting is a significant machine learning advance that combines multiple weak classifiers into a strong predictive model. It works iteratively, adjusting the weights assigned to the training points at each round so that accuracy improves over the iterations. At its core, Boosting is an additive model: the individual classifiers are aggregated to form the final prediction. Algorithms such as AdaBoost minimize an exponential loss function to improve classification results. This presentation covers how Boosting works, how it performs, and where it is applied, particularly in data mining.

Presentation Transcript

Introduction to Boosting
Slides adapted from Che Wanxiang (车万翔) at HIT, and Robin Dhamankar of […]. Many thanks!

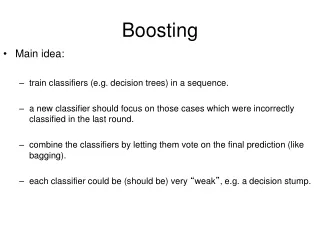

Ideas
• Boosting is considered one of the most significant developments in machine learning
• Finding many weak rules of thumb is easier than finding a single, highly accurate prediction rule
• The key lies in how the weak rules are combined

Boosting (Algorithm)
• W(x) is the distribution of weights over the N training points, with ∑_i W(x_i) = 1
• Initially assign uniform weights W_0(x) = 1/N for all x, and set step k = 0
• At each iteration k:
  • Find the best weak classifier C_k(x) using weights W_k(x)
  • From its error rate ε_k and the chosen loss function, compute α_k, the weight of classifier C_k in the final hypothesis
  • For each x_i, update the weights based on ε_k to get W_{k+1}(x_i)
• C_FINAL(x) = sign[ ∑_k α_k C_k(x) ]

Boosting As Additive Model
• The final prediction in boosting, f(x), can be expressed as an additive expansion of individual classifiers
• The process is iterative and can be expressed as follows.
• Typically we would try to minimize a loss function on the training examples
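The equations on this slide did not survive in the transcript. In the notation of the later slides (base classifiers G with coefficients β), the additive expansion and its stage-wise fit are usually written as below. This is a sketch in the standard forward stage-wise form; the symbols M (number of stages), N (number of training points), and f_{m-1} (the model after m-1 stages) are assumptions, not taken from the slide.

```latex
% Additive expansion of M individual classifiers
f(x) = \sum_{m=1}^{M} \beta_m \, G_m(x)

% Stage m: keep f_{m-1} fixed, fit only the new term by minimizing the training loss
(\beta_m, G_m) = \arg\min_{\beta,\, G} \sum_{i=1}^{N}
    L\bigl(y_i,\; f_{m-1}(x_i) + \beta \, G(x_i)\bigr)

% Then extend the model
f_m(x) = f_{m-1}(x) + \beta_m \, G_m(x)
```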

Boosting As Additive Model
• Simple case: squared-error loss
• Forward stage-wise modeling amounts to just fitting the residuals from the previous iteration
• Squared-error loss is not robust for classification
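To see why squared-error loss reduces to residual fitting, substitute it into the stage-wise objective. The residual symbol r_{im} is introduced here for illustration; f_{m-1} denotes the model after the previous iteration.

```latex
% Squared-error loss
L(y, f(x)) = \bigl(y - f(x)\bigr)^2

% Loss of the extended model at training point i
L\bigl(y_i,\; f_{m-1}(x_i) + \beta \, G(x_i)\bigr)
  = \bigl(y_i - f_{m-1}(x_i) - \beta \, G(x_i)\bigr)^2
  = \bigl(r_{im} - \beta \, G(x_i)\bigr)^2,
\qquad r_{im} = y_i - f_{m-1}(x_i)
```

The new term β G(x) is therefore fit to the residuals r_{im} of the current model.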

Boosting As Additive Model
• AdaBoost for classification:
• L(y, f(x)) = exp(-y · f(x)), the exponential loss function

Boosting As Additive Model
First assume that β is constant, and minimize w.r.t. G:
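The slide's equations are missing from the transcript. A reconstruction under the usual assumptions (labels y_i and classifier outputs G(x_i) in {-1, +1}, β > 0, and per-sample weights w_i^{(m)} defined from the model built so far) runs as follows; the weight symbol and stage index m are assumptions.

```latex
% Stage-m objective under exponential loss
(\beta_m, G_m) = \arg\min_{\beta,\, G} \sum_{i=1}^{N}
    \exp\bigl[-y_i \bigl(f_{m-1}(x_i) + \beta \, G(x_i)\bigr)\bigr]
  = \arg\min_{\beta,\, G} \sum_{i=1}^{N} w_i^{(m)} \exp\bigl(-\beta \, y_i \, G(x_i)\bigr),
\qquad w_i^{(m)} = \exp\bigl(-y_i \, f_{m-1}(x_i)\bigr)

% Split the sum into correctly and incorrectly classified points
\sum_{i=1}^{N} w_i^{(m)} \exp\bigl(-\beta \, y_i \, G(x_i)\bigr)
  = \bigl(e^{\beta} - e^{-\beta}\bigr) \sum_{i=1}^{N} w_i^{(m)} \, I\bigl(y_i \neq G(x_i)\bigr)
    + e^{-\beta} \sum_{i=1}^{N} w_i^{(m)}

% For any fixed beta > 0, only the first sum depends on G
G_m = \arg\min_{G} \sum_{i=1}^{N} w_i^{(m)} \, I\bigl(y_i \neq G(x_i)\bigr)
```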

Boosting As Additive Model
err_m is the training error on the weighted samples. The last equation tells us that in each iteration we must find the classifier that minimizes the training error on the weighted samples.

Boosting As Additive Model
Now that we have found G, we minimize w.r.t. β:
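The result of this step is missing from the transcript. A sketch of the standard derivation, assuming labels in {-1, +1} and writing w_i^{(m)} for the current sample weights (an assumed symbol), is:

```latex
% Weighted training error of the chosen classifier G_m
\mathrm{err}_m = \frac{\sum_{i=1}^{N} w_i^{(m)} \, I\bigl(y_i \neq G_m(x_i)\bigr)}
                      {\sum_{i=1}^{N} w_i^{(m)}}

% Objective as a function of beta, divided by the total weight
J(\beta) = \bigl(e^{\beta} - e^{-\beta}\bigr)\, \mathrm{err}_m + e^{-\beta}

% Setting dJ/d\beta = 0 gives e^{2\beta} = (1 - err_m) / err_m
\beta_m = \frac{1}{2} \log \frac{1 - \mathrm{err}_m}{\mathrm{err}_m}

% Resulting weight update; the common factor e^{-\beta_m} cancels on normalization
w_i^{(m+1)} = w_i^{(m)} \exp\bigl(-\beta_m \, y_i \, G_m(x_i)\bigr)
            = w_i^{(m)} \exp\bigl(2\beta_m \, I(y_i \neq G_m(x_i))\bigr) \, e^{-\beta_m}
```

With α = 2β_m this matches the AdaBoost rule α = log((1 - ε)/ε); scaling all coefficients by the same factor does not change the sign of the final sum.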

AdaBoost (Algorithm)
• W(x) is the distribution of weights over the N training points, with ∑_i W(x_i) = 1
• Initially assign uniform weights W_0(x) = 1/N for all x
• At each iteration k:
  • Find the best weak classifier C_k(x) using weights W_k(x)
  • Compute the error rate ε_k = [ ∑_i W_k(x_i) · I(y_i ≠ C_k(x_i)) ] / [ ∑_i W_k(x_i) ]
  • Set α_k, the weight of classifier C_k in the final hypothesis: α_k = log((1 - ε_k) / ε_k)
  • For each x_i, update W_{k+1}(x_i) = W_k(x_i) · exp[α_k · I(y_i ≠ C_k(x_i))]
• C_FINAL(x) = sign[ ∑_k α_k C_k(x) ]
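The steps above can be sketched in plain Python. This is a minimal illustration, not the slides' implementation: it assumes labels in {-1, +1}, uses exhaustively searched decision stumps as the weak classifiers, renormalizes the weights after each update so they keep summing to 1, and stops early when the weak classifier is perfect or no better than chance. The function names (`stump_predict`, `best_stump`, `adaboost`, `predict`) are hypothetical.

```python
import math

def stump_predict(stump, x):
    """A stump tests one attribute: polarity if x[feature] <= threshold, else -polarity."""
    feature, threshold, polarity = stump
    return polarity if x[feature] <= threshold else -polarity

def best_stump(X, y, w):
    """Exhaustively pick the stump with the lowest weighted error under weights w."""
    best, best_err = None, float("inf")
    for feature in range(len(X[0])):
        for threshold in sorted({x[feature] for x in X}):
            for polarity in (1, -1):
                stump = (feature, threshold, polarity)
                err = sum(wi for xi, yi, wi in zip(X, y, w)
                          if stump_predict(stump, xi) != yi)
                if err < best_err:
                    best, best_err = stump, err
    return best, best_err

def adaboost(X, y, rounds):
    """Return a list of (alpha_k, stump_k) pairs; labels y must be -1 or +1."""
    n = len(X)
    w = [1.0 / n] * n                      # W_0(x) = 1/N
    ensemble = []
    for _ in range(rounds):
        stump, err = best_stump(X, y, w)
        eps = err / sum(w)                 # weighted error rate epsilon_k
        if eps >= 0.5:                     # assumption: stop if no better than chance
            break
        perfect = eps == 0.0
        eps = max(eps, 1e-12)              # assumption: clamp to avoid division by zero
        alpha = math.log((1.0 - eps) / eps)
        ensemble.append((alpha, stump))
        if perfect:
            break
        # Multiply the weight of each misclassified point by exp(alpha_k)
        w = [wi * math.exp(alpha if stump_predict(stump, xi) != yi else 0.0)
             for xi, yi, wi in zip(X, y, w)]
        total = sum(w)
        w = [wi / total for wi in w]       # assumption: renormalize so weights sum to 1
    return ensemble

def predict(ensemble, x):
    """C_FINAL(x) = sign of the alpha-weighted vote (ties resolved to +1)."""
    score = sum(alpha * stump_predict(stump, x) for alpha, stump in ensemble)
    return 1 if score >= 0 else -1
```

A call such as `adaboost([[0], [1], [2], [3], [4], [5]], [1, 1, -1, -1, 1, 1], rounds=3)` returns the weighted stumps, and `predict` applies the final sign rule to a new point.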

AdaBoost (Example)
Original training set: equal weights for all training samples
Taken from "A Tutorial on Boosting" by Yoav Freund and Rob Schapire

AdaBoost(Example) ROUND 1

AdaBoost(Example) ROUND 2

AdaBoost(Example) ROUND 3

AdaBoost (Characteristics)
• Why the exponential loss function?
  • Computational
    • Simple modular re-weighting
    • The derivative is easy to compute, so determining the optimal parameters is relatively easy
  • Statistical
    • In the two-class case, its minimizer is one half the log-odds of P(Y=1|x), so we can use the sign as the classification rule
• Accuracy depends on the number of iterations (how sensitive it is, we will see soon)
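The statistical claim can be checked with a short derivation. It assumes labels Y in {-1, +1} and writes p(x) for P(Y=1|x); the symbols p and f* are introduced here for illustration.

```latex
% Expected exponential loss at a fixed x
E\bigl[e^{-Y f(x)} \mid x\bigr] = p(x)\, e^{-f(x)} + \bigl(1 - p(x)\bigr)\, e^{f(x)}

% Setting the derivative with respect to f(x) to zero
-p(x)\, e^{-f(x)} + \bigl(1 - p(x)\bigr)\, e^{f(x)} = 0
\quad\Longrightarrow\quad
f^{*}(x) = \frac{1}{2} \log \frac{P(Y = 1 \mid x)}{P(Y = -1 \mid x)}
```

Since f*(x) > 0 exactly when P(Y=1|x) > 1/2, taking the sign of f(x) picks the more probable class.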

Boosting performance
Decision stumps are very simple rules of thumb that test a condition on a single attribute. Here, decision stumps served as the individual classifiers whose predictions were combined to generate the final prediction. The misclassification rate of the Boosting algorithm was plotted against the number of iterations performed.
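A curve of misclassification rate against iterations can be computed by evaluating the combined vote after each added classifier. The sketch below assumes an already trained ensemble given as (weight, classifier) pairs; the toy data and the three hand-written stumps are purely illustrative and are not the experiment from the slides. The name `staged_error` is hypothetical.

```python
import math

def staged_error(ensemble, X, y):
    """Misclassification rate of the combined vote after 1, 2, ..., K classifiers."""
    scores = [0.0] * len(X)
    errors = []
    for alpha, clf in ensemble:
        # Add this classifier's weighted vote to the running score of every point
        scores = [s + alpha * clf(x) for s, x in zip(scores, X)]
        wrong = sum(1 for s, yi in zip(scores, y)
                    if (1 if s >= 0 else -1) != yi)
        errors.append(wrong / len(X))
    return errors

# Illustrative toy data: one attribute, labels in {-1, +1}
X = [0, 1, 2, 3, 4, 5]
y = [1, 1, -1, -1, 1, 1]

# Illustrative ensemble: each stump tests a single threshold on the attribute
ensemble = [
    (math.log(2), lambda x: 1 if x <= 1 else -1),
    (math.log(3), lambda x: -1 if x <= 3 else 1),
    (math.log(5), lambda x: 1),
]

for k, err in enumerate(staged_error(ensemble, X, y), start=1):
    print(f"iterations={k} misclassification rate={err:.3f}")
```

Running the same function on held-out data instead of the training set gives the corresponding generalization curve.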

Boosting performance Steep decrease in error

Boosting performance
• How many iterations are sufficient?
• Observations
  • The first few (about 50) iterations increase the accuracy substantially, as seen in the steep decrease in misclassification rate
  • As iterations increase, does training error keep decreasing? Does generalization error decrease as well?

Can Boosting do well if there is:
• Limited training data?
  • Probably not
• Many missing values?
• Noise in the data?
• Individual classifiers that are not very accurate?
  • It could, if the individual classifiers have considerable mutual disagreement

Application: Data mining
• Challenges in real-world data mining problems
  • Data has a large number of observations and a large number of variables per observation
  • Inputs are a mixture of different kinds of variables
  • Missing values, outliers, and variables with skewed distributions
  • Results must be obtained quickly and must be interpretable
• So off-the-shelf techniques are hard to come by
• Boosted decision trees (AdaBoost or MART) come close to an off-the-shelf technique for data mining