Challenges and Approaches in Machine Translation: From Direct to Example-Based Methods

This paper explores various machine translation (MT) approaches, including Direct MT, Example-Based MT, and Statistical MT, and identifies their unique challenges. Key issues addressed include orthography, language directionality, segmentation, morphology, and semantic nuances. The paper highlights the importance of considering contextual, social, and cultural factors in translation, as well as the role of code-switching in multilingual environments. Through comprehensive analysis, this work aims to enhance understanding of the complexities involved in effective machine translation technologies.


Presentation Transcript

Issues in Machine Translation • Orthography • Writing from left-to-right vs right-to-left • Character sets (alphabetic, logograms, pictograms) • Segmentation into word/word-like units • Morphology • Lexical: Word senses • bank: “river bank” vs. “financial institution” • Syntactic: Word order • Subject-verb-object vs. subject-object-verb • Semantic: meaning • “ate pasta with a spoon”, “ate pasta with marinara”, “ate pasta with John” • Pragmatic: world knowledge • “Can you pass me the salt?” • Social: conversational norms • pronoun usage depends on the conversational partner • Cultural: idioms and phrases • “out of the ballpark”, “came from left field” • Contextual • In addition for Speech Translation • Prosody: JOHN eats bananas; John EATS bananas; John eats BANANAS • Pronunciation differences • Speech recognition errors • In a multilingual environment • Code Switching: Use of linguistic apparatus of one language to express ideas in another language.

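MT Approaches: Different levels of meaning transfer • [Figure: pyramid of MT approaches ordered by depth of analysis. Direct MT maps Source to Target at the base; Transfer-based MT maps the source syntactic structure (obtained by parsing) to the target syntactic structure (input to syntactic generation); Interlingua sits at the apex, reached by semantic interpretation and left by semantic generation.]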

Direct Machine Translation • Words are replaced using a dictionary • Some amount of morphological processing • Word reordering is limited • Quality depends on the size of the dictionary and the closeness of the languages • Spanish: ajá quiero usar mi tarjeta de crédito • English: yeah I wanna use my credit card • Alignment: 1 3 4 5 7 0 6 • English: I need to make a collect call • Japanese: 私は コレクト コールを かける 必要があります • Alignment: 1 5 0 3 0 2 4
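The dictionary-replacement idea above can be sketched in a few lines. This is only an illustration: the `LEXICON` entries and the single "N1 de N2" reordering rule are assumptions made up for the Spanish example on this slide, not part of any real direct MT system.

```python
# Toy bilingual dictionary (hypothetical entries, for illustration only).
LEXICON = {
    "ajá": "yeah",
    "quiero": "I wanna",
    "usar": "use",
    "mi": "my",
    "tarjeta": "card",
    "crédito": "credit",
}


def direct_translate(sentence):
    """Word-for-word translation with one limited, local reordering rule."""
    words = sentence.lower().split()
    reordered = []
    i = 0
    while i < len(words):
        # Assumed rule: Spanish "N1 de N2" becomes English "N2 N1".
        if i + 2 < len(words) and words[i + 1] == "de":
            reordered.extend([words[i + 2], words[i]])
            i += 3
        else:
            reordered.append(words[i])
            i += 1
    # Dictionary lookup; unknown words are passed through unchanged.
    return " ".join(LEXICON.get(w, w) for w in reordered)


result = direct_translate("ajá quiero usar mi tarjeta de crédito")
print(result)
```

Everything the sketch gets right comes from the dictionary and the one hand-written rule, which is why quality depends so heavily on dictionary size and language closeness.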

Translation Memory • Idea is to reuse translations that were done in the past • Useful for technical terminology • Ideally used in a sub-language translation • System helps in matching new instances against previously translated instances • Choices are presented to a human translator through a GUI • Human translator selects and “stitches” the available options to cover the source language sentence • If no match is found, the translator introduces a new translation pair into the translation memory. • Pros: • Maintains consistency in translation across multiple translators • Improves efficiency of translation process • Issues: How is the matching done? • Word level matching, morphological root matching • Determines robustness of the translation memory
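One possible answer to "How is the matching done?" is word-level fuzzy matching. The sketch below uses Python's standard `difflib.SequenceMatcher` over token lists. The memory entries are the two English-German pairs quoted later in the Adaptability slide; the function names and the 0.7 threshold are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher

# Tiny translation memory: (source segment, stored translation).
MEMORY = [
    ("Use the Offset Command to specify the spacing between the shapes.",
     "Mit der Option Abstand legen Sie den Abstand zwischen den Formen fest."),
    ("Use the Save Option to save your changes to disk.",
     "Mit der Option Speichern können Sie Ihre Änderungen auf Diskette speichern."),
]


def word_similarity(a, b):
    """Similarity in [0, 1] computed over lowercased word tokens."""
    return SequenceMatcher(None, a.lower().split(), b.lower().split()).ratio()


def lookup(query, memory, threshold=0.7):
    """Return (score, source, target) hits above the threshold, best first."""
    scored = [(word_similarity(query, src), src, tgt) for src, tgt in memory]
    return sorted((hit for hit in scored if hit[0] >= threshold), reverse=True)


matches = lookup(
    "Use the Offset Command to increase the spacing between the shapes.",
    MEMORY,
)
for score, src, tgt in matches:
    print(f"{score:.2f}  {src}  ->  {tgt}")
```

A real system would present such hits to the translator in the GUI; matching on morphological roots instead of surface words would make the lookup more robust.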

Example-based MT • Translation-by-analogy: • A collection of source/target text pairs • A matching metric • A word or phrase-level alignment • Method for recombination • ATR EBMT System (E. Sumita, H. Iida, 1991); CMU Pangloss EBMT (R. Brown, 1996) • [Figure: EBMT pyramid from Source to Target with three stages: MATCHING (analysis), ALIGNMENT (transfer), RECOMBINATION (generation); an exact match corresponds to direct translation.]

Example run of EBMT • English-Japanese Examples in the Corpus: • He buys a notebook Kare wa noto o kau • I read a book on international politics Watashi wa kokusai seiji nitsuite kakareta hon o yomu • Translation Input: He buys a book on international politics • Translation Output: Kare wa kokusai seiji nitsuite kakareta hon o kau • Challenge: Finding a good matching metric • He bought a notebook • A book was bought • I read a book on world politics

Variations in EBMT • Database of Sentence Aligned corpus • Analysis of the SL • Depends on how the database is stored • Full sentences, sentence fragments, tree fragments • Matching metric: idea is to arrive at a semantic closeness • Exact match • N-gram match • Fuzzy match • Similarity-based match • Matching with variables • Regeneration of the TL • Depends on how the database produces the output

Issues in EBMT • Parallel corpora • Granularity of examples • Size of example-base • Does accuracy improve by growing example-base? • Suitability of examples • Diversity and consistency of examples • Contradictory examples • Exceptional examples (a) Watashi wa komputa o kyoyosuru I share the use of a computer (b) Watashi wa kuruma o tsukau I use a car (c) Watashi wa dentaku o shiyosuru I share the use of a calculator I use a calculator

Issues in EBMT • How are examples stored? • Context-based examples • “OK” depends on dialog context; • “wakarimashita (I understand)”; • “iidesu yo (I agree)” • or “ijo desu (lets change the subject)” • Annotated tree structures • Eg. Kanojo wa kami ga nagai (She has long hair) • Trees with linking nodes • Multi-level lattices with typographic, orthographic, lexical, syntactic and other information. • Pos information, predicate-argument, chunks, dependency trees • Generalized Examples • Tokenize Dates, Names, cities, gender, number, tense are replaced by generalized tokens • Precision-Recall tradeoff • A continuum from plain strings to context sensitive rules

Issues in EBMT • String based • Sochira ni okeru We will send it to you • Sochira wa jimukyoku desu This is the office • Generalized String • X o onegai shimasu may I speak to the X • X o onegai shimasu please give me the X • Template Format • N1 N2 N3 N2’ N3’ for N1’ • (N1 = sanka “participation”, N2 = moshikomi “application” N3=yoshi “form”) • Distance in a thesaurus is used to select the method.

Issues in EBMT • Matching: • Metric used to measure the similarity of the SL input to the SLs in the example database. • Exact Character-based matching • Edit-distance based matching • Word-based matching • Thesaurus similarity/Wordnet based similarity • A man eats vegetables Hito wa yasai o taberu • Acid eats metal san wa kinzoku o okasu • He eats potatoes kare wa jagaimo o taberu • Sulphuric acid eats iron Ryusan wa tetsu o okasu • Thesaurus free similarity matching based on distributional clustering • Annotated word-based matching • POS based matching • Relaxation techniques • Exact match with dels and insertions word-order differences morphological variants POS differences
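As a concrete instance of edit-distance based matching, the sketch below computes a word-level Levenshtein distance between an input and stored examples. The function name is an assumption; the sentences are taken from the earlier "Example run of EBMT" slide.

```python
def word_edit_distance(a, b):
    """Levenshtein distance over lowercased word tokens (insert/delete/substitute = 1)."""
    a, b = a.lower().split(), b.lower().split()
    prev = list(range(len(b) + 1))          # distances from the empty prefix of a
    for i, wa in enumerate(a, start=1):
        cur = [i]
        for j, wb in enumerate(b, start=1):
            cost = 0 if wa == wb else 1
            cur.append(min(prev[j] + 1,         # delete wa
                           cur[j - 1] + 1,      # insert wb
                           prev[j - 1] + cost)) # match or substitute
        prev = cur
    return prev[-1]


query = "He buys a book on international politics"
d1 = word_edit_distance(query, "He buys a notebook")
d2 = word_edit_distance(query, "I read a book on international politics")
d3 = word_edit_distance("He bought a notebook", "He buys a notebook")
print(d1, d2, d3)
```

Note that "bought" vs "buys" costs a full substitution here; this is the kind of case the relaxation techniques listed above (morphological variants, POS differences) are meant to soften.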

Matching in EBMT (contd) • Structure-based Matching • Tree-based edit distance • Case-frame based matching • Partial matching • Not entire input need match with the example database • Chunks, substrings, fragments can match • Assembling the TL output is more challenging.

Adaptability and Recombination in EBMT • Problem: • a. Identify which portion of the associated translation corresponds to the matched portion of the source text (Adaptability) • b. Recombine the portions in an appropriate manner. • Alignment: can be done using statistical techniques or using bilingual dictionaries. • Boundary friction problem: For English-Japanese, translations of noun phrases can be reused regardless of whether they are subjects or objects. • The handsome boy entered the room • The handsome boy ate his breakfast • I saw the handsome boy • Not in German: • Der schöne Junge aß sein Frühstück • Ich sah den schönen Jungen

Adaptability • Example-retrieval can be scored on two counts: • the closeness of the match between the input text and the example, and • the adaptability of the example, on the basis of the relationship between the representations of the example and its translation. • Input: Use the Offset Command to increase the spacing between the shapes. • a. Use the Offset Command to specify the spacing between the shapes. • b. Mit der Option Abstand legen Sie den Abstand zwischen den Formen fest. • a. Use the Save Option to save your changes to disk. • b. Mit der Option Speichern können Sie Ihre Änderungen auf Diskette speichern.

Recombination options are ranked using n-gram model a. Ich sah den schönen Jungen. b. * Ich sah der schöne Junge.
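A minimal sketch of ranking recombination candidates with an n-gram model is given below, using an add-one smoothed bigram model. The four training sentences in `CORPUS` are a hypothetical toy corpus invented for this illustration; only the two candidates come from the slide.

```python
import math
from collections import Counter

# Hypothetical toy target-language corpus (assumption, for illustration only).
CORPUS = [
    "der schöne Junge aß sein Frühstück",
    "ich sah den Hund",
    "er sah den schönen Garten",
    "sie kennt den schönen Jungen",
]


def train_bigrams(sentences):
    """Collect context counts, bigram counts and vocabulary size."""
    contexts, bigrams, vocab = Counter(), Counter(), {"</s>"}
    for s in sentences:
        tokens = ["<s>"] + s.lower().split() + ["</s>"]
        vocab.update(tokens[1:-1])
        contexts.update(tokens[:-1])
        bigrams.update(zip(tokens, tokens[1:]))
    return contexts, bigrams, len(vocab)


def log_prob(sentence, model):
    """Add-one smoothed bigram log-probability of a sentence."""
    contexts, bigrams, v = model
    tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
    return sum(math.log((bigrams[(a, b)] + 1) / (contexts[a] + v))
               for a, b in zip(tokens, tokens[1:]))


model = train_bigrams(CORPUS)
candidates = ["Ich sah der schöne Junge", "Ich sah den schönen Jungen"]
ranked = sorted(candidates, key=lambda c: log_prob(c, model), reverse=True)
print(ranked)
```

The ungrammatical candidate contains bigrams ("sah der", sentence-final "Junge") that the toy corpus never attests, so it scores lower; this is how an n-gram model can mitigate boundary friction.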

Flavors of EBMT • EBMT used as a component in an MT system which also has more traditional elements: • EBMT may be used • in parallel with these other “engines”, • or just for certain classes of problems • when some other component cannot deliver a result. • EBMT may be better suited to some kinds of applications than others. • Dividing line between EBMT and so-called “traditional” rule-based approaches may not be obvious.

When to apply EBMT • When one of the following conditions holds true for a linguistic phenomenon, [rule-based] MT is less suitable than EBMT. • (a) Translation rule formation is difficult. • (b) The general rule cannot accurately describe [the] phenomen[on] because it represents a special case. • (c) Translation cannot be made in a compositional way from target words.

Learning translation patterns • Kare wa kuruma o kuji de ateru. • HE topic CAR obj LOTTERY inst STRIKES • Lit. ‘He strikes a car with the lottery.’ • He wins a car as a prize in the lottery. • Learn pattern (c) from to correct (a) to be like (b)

Generation of Translation Templates • “Two phase” EBMT methodology: “learning” of templates (i.e. transfer rules) from a corpus. • Parse the translation pairs; align the syntactic units with the help of a bilingual dictionary. • Generalize by replacing the coupled units with variables marked for syntactic category. • a. X[NP] no nagasa wa saidai 512 baito de aru. ↔ The maximum length of X[NP] is 512 bytes. • b. X[NP] no nagasa wa saidai Y[N] baito de aru. ↔ The maximum length of X[NP] is Y[N] bytes. • Any coupled unit pair can be replaced by variables. • Refine templates which give rise to a conflict • a. play baseball ↔ yakyu o suru • b. play tennis ↔ tenisu o suru • c. play X[NP] ↔ X[NP] o suru • a. play the piano ↔ piano o hiku • b. play the violin ↔ baiorin o hiku • c. play X[NP] ↔ X[NP] o hiku • “refined” by the addition of “semantic categories” • a. play X[NP/sport] ↔ X[NP] o suru • b. play X[NP/instrument] ↔ X[NP] o hiku • Also, automatic generalization techniques from paired strings
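The refined "play X" templates can be illustrated with a small lookup. This is a sketch under stated assumptions: the noun table, category labels, regular expression and function name are made up for illustration, and only the four nouns from the slide are covered.

```python
import re

# Noun lexicon with semantic categories (entries taken from the slide's examples).
NOUNS = {
    "baseball": ("yakyu", "sport"),
    "tennis": ("tenisu", "sport"),
    "piano": ("piano", "instrument"),
    "violin": ("baiorin", "instrument"),
}

# Refined templates: the semantic category of X selects the Japanese verb.
TEMPLATES = {
    "sport": "{x} o suru",
    "instrument": "{x} o hiku",
}


def translate_play(phrase):
    """Apply 'play X[NP/category]' templates; return None if nothing matches."""
    m = re.fullmatch(r"play (?:the )?(\w+)", phrase.strip().lower())
    if m is None:
        return None
    entry = NOUNS.get(m.group(1))
    if entry is None:
        return None
    target_noun, category = entry
    return TEMPLATES[category].format(x=target_noun)


print(translate_play("play tennis"))
print(translate_play("play the violin"))
```

Without the category annotation, the two unrefined templates (c) would conflict, since both match any "play X" input.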

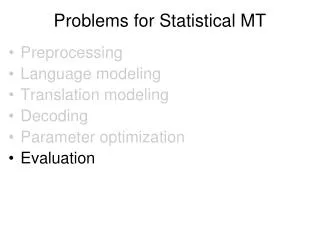

Statistical Machine Translation • Can all the steps of EBMT technique be induced from a parallel corpus? • What are the parameters of such a model? • What are the components of SMT? Slides adapted from Dorr and Monz, Knight, Schafer and Smith

Word-Level Alignments • Given a parallel sentence pair we can link (align) words or phrases that are translations of each other: • Where do we get the sentence pairs from?

Parallel Resources • Newswire: DE-News (German-English), Hong-Kong News, Xinhua News (Chinese-English) • Government: Canadian-Hansards (French-English), Europarl (Danish, Dutch, English, Finnish, French, German, Greek, Italian, Portuguese, Spanish, Swedish), UN Treaties (Russian, English, Arabic, . . . ) • Manuals: PHP, KDE, OpenOffice (all from OPUS, many languages) • Web pages: STRAND project (Philip Resnik)

Sentence Alignment • If document De is translation of document Df how do we find the translation for each sentence? • The n-th sentence in De is not necessarily the translation of the n-th sentence in document Df • In addition to 1:1 alignments, there are also 1:0, 0:1, 1:n, and n:1 alignments • Approximately 90% of the sentence alignments are 1:1

Sentence Alignment (cont’d) • There are several sentence alignment algorithms: • Align (Gale & Church): Aligns sentences based on their character length (shorter sentences tend to have shorter translations than longer sentences). Works astonishingly well • Char-align (Church): Aligns based on shared character sequences. Works fine for similar languages or technical domains • K-Vec (Fung & Church): Induces a translation lexicon from the parallel texts based on the distribution of foreign-English word pairs.

Computing Translation Probabilities • Given a parallel corpus we can estimate P(e | f). The maximum likelihood estimation of P(e | f) is: $\mathrm{freq}(e, f) / \mathrm{freq}(f)$ • Way too specific to get any reasonable frequencies! Vast majority of unseen data will have zero counts! • P(e | f) could be re-defined at the word level as: $P(e \mid f) = \prod_{f_j} \max_{e_i} P(e_i \mid f_j)$ • Problem: The English words maximizing P(e | f) might not result in a readable sentence
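The sparsity problem with sentence-level maximum likelihood estimates is easy to see in code. The sketch below is an assumption-laden toy: the four sentence pairs (including the "the home" pair) are hypothetical, and `mle_table` is an illustrative helper name.

```python
from collections import Counter

# Hypothetical toy parallel corpus of (English, foreign) sentence pairs.
PAIRS = [
    ("the house", "la maison"),
    ("the home", "la maison"),
    ("the blue house", "la maison bleue"),
    ("the flower", "la fleur"),
]


def mle_table(pairs):
    """Return a function p(e, f) = freq(e, f) / freq(f) over whole sentences."""
    pair_counts = Counter(pairs)
    f_counts = Counter(f for _, f in pairs)

    def p(e, f):
        # Unseen foreign sentences have freq(f) = 0; report probability 0.
        return pair_counts[(e, f)] / f_counts[f] if f_counts[f] else 0.0

    return p


p = mle_table(PAIRS)
seen = p("the house", "la maison")
unseen = p("the blue flower", "la fleur bleue")
print(seen, unseen)
```

Any sentence not present verbatim in the corpus gets probability zero, which motivates estimating probabilities over words rather than whole sentences.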

Decoding • The decoder combines the evidence from P(e) and P(f | e) to find the sequence e that is the best translation: $\hat{e} = \arg\max_{e} P(e \mid f) = \arg\max_{e} P(f \mid e) \cdot P(e)$ • The choice of word e’ as translation of f’ depends on the translation probability P(f’ | e’) and on the context, i.e. other English words preceding e’
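A single-word version of this decision rule can be sketched as follows. The translation probabilities for "juste" are the ones shown on a later slide; the bigram language model values in `LM` are hypothetical numbers chosen only to show how context can change the choice.

```python
# Translation model P(f | e), keyed by (f, e); values from the "juste" slide.
TM = {
    ("juste", "fair"): 0.411,
    ("juste", "correct"): 0.027,
    ("juste", "right"): 0.020,
}

# Hypothetical bigram language model P(e | previous English word).
LM = {
    ("is", "fair"): 0.001, ("is", "correct"): 0.01, ("is", "right"): 0.05,
    ("a", "fair"): 0.01, ("a", "correct"): 0.004, ("a", "right"): 0.005,
}


def decode_word(f, prev):
    """Pick the English word e maximizing P(e | prev) * P(f | e)."""
    candidates = [e for (ff, e) in TM if ff == f]
    return max(candidates, key=lambda e: LM.get((prev, e), 0.0) * TM[(f, e)])


after_is = decode_word("juste", "is")
after_a = decode_word("juste", "a")
print(after_is, after_a)
```

With these made-up language model scores, the preceding word overrides the translation model's preference for "fair" in one context but not in the other. A real decoder searches over whole sentences rather than one word at a time.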

Translation Modeling • Determines the probability that the foreign word f is a translation of the English word e • How to compute P(f | e) from a parallel corpus? • Statistical approaches rely on the co-occurrence of e and f in the parallel data: If e and f tend to co-occur in parallel sentence pairs, they are likely to be translations of one another

Finding Translations in a Parallel Corpus • Into which foreign words f, . . . , f’ does e translate? • Commonly, four factors are used: • How often do e and f co-occur? (translation) • How likely is a word occurring at position i to translate into a word occurring at position j? (distortion) For example: English is a verb-second language, whereas German is a verb-final language • How likely is e to translate into more than one word? (fertility) For example: defeated can translate into eine Niederlage erleiden • How likely is a foreign word to be spuriously generated? (null translation)

Translation Model? Generative approach: Mary did not slap the green witch → Source-language morphological analysis → Source parse tree → Semantic representation → Generate target structure → Maria no dió una bofetada a la bruja verde

Translation Model? Generative story: Mary did not slap the green witch → Source-language morphological analysis → Source parse tree → Semantic representation → Generate target structure → Maria no dió una bofetada a la bruja verde • What are all the possible moves and their associated probability tables?

The Classic Translation Model: Word Substitution/Permutation [IBM Model 3, Brown et al., 1993] • Generative approach: • Mary did not slap the green witch • Fertility n(3|slap): Mary not slap slap slap the green witch • NULL insertion P-Null: Mary not slap slap slap NULL the green witch • Translation t(la|the): Maria no dió una bofetada a la verde bruja • Distortion d(j|i): Maria no dió una bofetada a la bruja verde • Probabilities can be learned from raw bilingual text.

Statistical Machine Translation … la maison … la maison bleue … la fleur … … the house … the blue house … the flower … All word alignments equally likely All P(french-word | english-word) equally likely

Statistical Machine Translation … la maison … la maison bleue … la fleur … … the house … the blue house … the flower … “la” and “the” observed to co-occur frequently, so P(la | the) is increased.

Statistical Machine Translation … la maison … la maison bleue … la fleur … … the house … the blue house … the flower … “house” co-occurs with both “la” and “maison”, but P(maison | house) can be raised without limit, to 1.0, while P(la | house) is limited because of “the” (pigeonhole principle)

Statistical Machine Translation … la maison … la maison bleue … la fleur … … the house … the blue house … the flower … settling down after another iteration

Statistical Machine Translation … la maison … la maison bleue … la fleur … … the house … the blue house … the flower … • Inherent hidden structure revealed by EM training! • For details, see: • “A Statistical MT Tutorial Workbook” (Knight, 1999). • “The Mathematics of Statistical Machine Translation” (Brown et al, 1993) • Software: GIZA++

Statistical Machine Translation … la maison … la maison bleue … la fleur … … the house … the blue house … the flower … P(juste | fair) = 0.411 P(juste | correct) = 0.027 P(juste | right) = 0.020 … Possible English translations, to be rescored by language model new French sentence

IBM Models 1–5 • Model 1: Bag of words • Unique local maxima • Efficient EM algorithm (Model 1–2) • Model 2: General alignment: • Model 3: fertility: n(k | e) • No full EM, count only neighbors (Model 3–5) • Deficient (Model 3–4) • Model 4: Relative distortion, word classes • Model 5: Extra variables to avoid deficiency

IBM Model 1 • Given an English sentence $e_1 \ldots e_l$ and a foreign sentence $f_1 \ldots f_m$ • We want to find the ’best’ alignment a, where a is a set of pairs of the form {(i, j), . . . , (i’, j’)}, • $0 \le i, i' \le l$ and $1 \le j, j' \le m$ • Note that if (i, j), (i’, j) are in a, then i equals i’, i.e. no many-to-one alignments are allowed • Note we add a spurious NULL word to the English sentence at position 0 • In total there are $(l + 1)^m$ different alignments A • Allowing for many-to-many alignments results in $(2^l)^m$ possible alignments A
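The size of the alignment space can be checked by brute-force enumeration. In this sketch an alignment is written as a tuple whose j-th entry is the English position (0 = NULL) linked to the j-th foreign word; that tuple encoding and the function name are assumptions made for illustration.

```python
from itertools import product


def all_alignments(l, m):
    """Enumerate every alignment of m foreign words to English positions 0..l."""
    return list(product(range(l + 1), repeat=m))


# "the dog" (l = 2) against "le chien" (m = 2).
alignments = all_alignments(2, 2)
print(len(alignments))    # one English position (or NULL) per foreign word
print(alignments[:4])

# Many-to-many: every one of the l * m possible links is either present or absent.
many_to_many = 2 ** (2 * 2)
print(many_to_many)
```

Because the count grows exponentially in the foreign sentence length m, explicit enumeration is only feasible for toy sentences.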

IBM Model 1 • Simplest of the IBM models • Does not consider word order (bag-of-words approach) • Does not model one-to-many alignments • Computationally inexpensive • Useful for parameter estimations that are passed on to more elaborate models

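IBM Model 1 • Translation probability in terms of alignments: $P(f \mid e) = \sum_{a \in A} P(f, a \mid e)$ • where: $P(f, a \mid e) = P(a \mid e) \cdot P(f \mid a, e) = \frac{1}{(l+1)^m} \prod_{j=1}^{m} P(f_j \mid e_{a_j})$ • and: $P(a \mid e) = \frac{1}{(l+1)^m}$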

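IBM Model 1 • We want to find the most likely alignment: $\hat{a} = \arg\max_{a} P(f, a \mid e) = \arg\max_{a} P(a \mid e) \prod_{j=1}^{m} P(f_j \mid e_{a_j})$ • Since P(a | e) is the same for all a: $\hat{a} = \arg\max_{a} \prod_{j=1}^{m} P(f_j \mid e_{a_j})$ • Problem: We still have to enumerate all alignments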

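IBM Model 1 • Since $P(f_j \mid e_i)$ is independent from $P(f_{j'} \mid e_{i'})$ we can find the maximum alignment by looking at the individual translation probabilities only • Let $\hat{a} = \arg\max_{a} \prod_{j=1}^{m} P(f_j \mid e_{a_j})$, then for each $a_j$: $\hat{a}_j = \arg\max_{0 \le i \le l} P(f_j \mid e_i)$ • The best alignment can be computed in a quadratic number of steps: $(l+1) \times m$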
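The claim that the best alignment factorizes into independent per-word choices can be checked against brute-force enumeration. The probability table `T` below holds hypothetical values (keyed as (foreign word, English word)); both function names are assumptions for this sketch.

```python
from itertools import product
from math import prod

# Hypothetical translation probabilities P(f | e), keyed by (f, e).
T = {
    ("le", "NULL"): 0.2, ("le", "the"): 0.7, ("le", "dog"): 0.1,
    ("chien", "NULL"): 0.1, ("chien", "the"): 0.1, ("chien", "dog"): 0.8,
}


def best_alignment(english, foreign, t):
    """Per-word argmax: (l + 1) * m probability lookups."""
    e = ["NULL"] + english
    return [max(range(len(e)), key=lambda i: t.get((f, e[i]), 0.0))
            for f in foreign]


def brute_force_alignment(english, foreign, t):
    """Score all (l + 1)^m alignments and return the best one."""
    e = ["NULL"] + english

    def score(a):
        return prod(t.get((f, e[i]), 0.0) for f, i in zip(foreign, a))

    return list(max(product(range(len(e)), repeat=len(foreign)), key=score))


fast = best_alignment(["the", "dog"], ["le", "chien"], T)
slow = brute_force_alignment(["the", "dog"], ["le", "chien"], T)
print(fast, slow)
```

Both routes agree on this toy input, but only the per-word version scales beyond very short sentences.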

Computing Model 1 Parameters • How to compute translation probabilities for model 1 from a parallel corpus? • Step 1: Determine candidates. For each English word e collect all foreign words f that co-occur at least once with e • Step 2: Initialize P(f | e) uniformly, i.e. P(f | e) = 1/(no of co-occurring foreign words)

Computing Model 1 Parameters • Step 3: Iteratively refine translation probabilities (position i=0 is the NULL word):
  for n iterations
    set tc to zero
    for each sentence pair (e,f) of lengths (l,m)
      for j=1 to m
        total=0;
        for i=0 to l
          total += P(fj | ei);
        for i=0 to l
          tc(fj | ei) += P(fj | ei)/total;
    for each word e
      total=0;
      for each word f s.t. tc(f | e) is defined
        total += tc(f | e);
      for each word f s.t. tc(f | e) is defined
        P(f | e) = tc(f | e)/total;
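The three steps can be put together in a short runnable sketch. Assumptions to note: the function name and data layout are illustrative, the table is keyed as (foreign word, English word), only co-occurring pairs are stored, and every stored pair starts at 1 / (foreign vocabulary size), which gives the 1/3 starting value used in the two-sentence example.

```python
from collections import defaultdict


def train_model1(corpus, iterations):
    """EM for IBM Model 1. corpus: list of (english_tokens, foreign_tokens)."""
    # Prepend the NULL word (position 0) to every English sentence.
    pairs = [(["NULL"] + e, f) for e, f in corpus]
    foreign_vocab = {w for _, f_sent in pairs for w in f_sent}

    # Steps 1-2: collect co-occurring candidates and initialize uniformly.
    t = {}
    for e_sent, f_sent in pairs:
        for f in f_sent:
            for e in e_sent:
                t[(f, e)] = 1.0 / len(foreign_vocab)

    # Step 3: iteratively refine the translation probabilities.
    for _ in range(iterations):
        tc = defaultdict(float)                      # expected counts
        for e_sent, f_sent in pairs:
            for f in f_sent:
                total = sum(t[(f, e)] for e in e_sent)
                for e in e_sent:
                    tc[(f, e)] += t[(f, e)] / total
        totals = defaultdict(float)                  # normalizer per English word
        for (f, e), count in tc.items():
            totals[e] += count
        for (f, e), count in tc.items():
            t[(f, e)] = count / totals[e]
    return t


corpus = [(["the", "dog"], ["le", "chien"]), (["the", "cat"], ["le", "chat"])]
t1 = train_model1(corpus, 1)
t10 = train_model1(corpus, 10)
print(t1[("le", "the")], t1[("chien", "dog")])
print(t10[("chien", "dog")], t10[("le", "dog")])
```

After one iteration "le" already receives half of the probability mass of "the", and with more iterations "chien" pulls ahead of "le" as the translation of "dog".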

IBM Model 1 Example • Parallel ‘corpus’: the dog :: le chien the cat :: le chat • Step 1+2 (collect candidates and initialize uniformly): P(le | the) = P(chien | the) = P(chat | the) = 1/3 P(le | dog) = P(chien | dog) = P(chat | dog) = 1/3 P(le | cat) = P(chien | cat) = P(chat | cat) = 1/3 P(le | NULL) = P(chien | NULL) = P(chat | NULL) = 1/3

IBM Model 1 Example • Step 3: Iterate • NULL the dog :: le chien • j=1 total = P(le | NULL)+P(le | the)+P(le | dog)= 1 tc(le | NULL) += P(le | NULL)/1 = 0 += .333/1 = 0.333 tc(le | the) += P(le | the)/1 = 0 += .333/1 = 0.333 tc(le | dog) += P(le | dog)/1 = 0 += .333/1 = 0.333 • j=2 total = P(chien | NULL)+P(chien | the)+P(chien | dog)=1 tc(chien | NULL) += P(chien | NULL)/1 = 0 += .333/1 = 0.333 tc(chien | the) += P(chien | the)/1 = 0 += .333/1 = 0.333 tc(chien | dog) += P(chien | dog)/1 = 0 += .333/1 = 0.333

IBM Model 1 Example • NULL the cat :: le chat • j=1 total = P(le | NULL)+P(le | the)+P(le | cat)=1 tc(le | NULL) += P(le | NULL)/1 = 0.333 += .333/1 = 0.666 tc(le | the) += P(le | the)/1 = 0.333 += .333/1 = 0.666 tc(le | cat) += P(le | cat)/1 = 0 += .333/1 = 0.333 • j=2 total = P(chat | NULL)+P(chat | the)+P(chat | cat)=1 tc(chat | NULL) += P(chat | NULL)/1 = 0 += .333/1 = 0.333 tc(chat | the) += P(chat | the)/1 = 0 += .333/1 = 0.333 tc(chat | cat) += P(chat | cat)/1 = 0 += .333/1 = 0.333