Exploring Logic and Semantics in Natural Language Processing

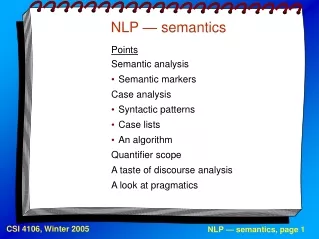

This presentation delves into the complexities of semantics in NLP, focusing on how people reason with language through various examples, such as comparing experiences and logical structures in sentences. It discusses quantifiers and their role in meaning, introduces Combinatory Categorial Grammar (CCG), and highlights challenges in parsing and semantic coherence. Utilizing MaxEnt supertaggers and the NLTK framework, we explore the computational demands of high-level semantic processing and the importance of statistical methods in achieving coherent interpretations in language.

Presentation Transcript

Semantics in NLP (part 2) • MAS.S60 • Rob Speer, Catherine Havasi • * Lots of slides borrowed from lots of sources! See end.

Are people doing logic? • Language Log: “Russia sentences” • *More people have been to Russia than I have. • *It just so happens that more people are bitten by New Yorkers than they are by sharks.

Are people doing logic? • The thing is, is people come up with new ways of speaking all the time.

Quantifiers • Every/all: \P. \Q. all x. (P(x) -> Q(x)) • A/an/some: \P. \Q. exists x. (P(x) & Q(x)) • The: • \P. \Q. Q(x) • P(x) goes in the presuppositions
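The quantifier denotations above can be tried out as curried Python functions over a small finite model. This is only a sketch: the `DOMAIN` set and the `dog`, `cat`, and `barks` predicates are hypothetical, and treating the presupposition of "the" as an existence-and-uniqueness check is an assumption, since the slide only says that P(x) is presupposed.

```python
# Toy model: a hypothetical domain of three individuals.
DOMAIN = {"rex", "fido", "tom"}


def every(P):
    """\\P. \\Q. all x. (P(x) -> Q(x))"""
    return lambda Q: all(Q(x) for x in DOMAIN if P(x))


def some(P):
    """\\P. \\Q. exists x. (P(x) & Q(x))"""
    return lambda Q: any(P(x) and Q(x) for x in DOMAIN)


def the(P):
    """\\P. \\Q. Q(x), with P(x) checked as a presupposition.

    Assumption: the presupposition is modeled as "exactly one
    individual satisfies P"; failure raises instead of returning False.
    """
    matches = [x for x in DOMAIN if P(x)]
    if len(matches) != 1:
        raise ValueError("presupposition failure")
    (x,) = matches
    return lambda Q: Q(x)


# Hypothetical predicates for the toy model.
def dog(x):
    return x in {"rex", "fido"}


def cat(x):
    return x == "tom"


def barks(x):
    return x in {"rex", "fido"}


print(every(dog)(barks))  # every dog barks
print(some(cat)(barks))   # some cat barks
print(the(cat)(barks))    # the cat barks
```

Calling `the(dog)` raises `ValueError` in this model because two individuals are dogs, which is one way to keep a failed presupposition separate from a false assertion.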

High-level overview of C&C • Find the highest-probability result with coherent semantics • Doesn’t this create billions of parses that need to be checked? • Yes.

High-level overview of C&C • Parses using a Combinatory Categorial Grammar (CCG) • fancier than a CFG • includes multiple kinds of “slash rules” • lots of grad student time spent transforming Treebank • MaxEnt “supertagger” tags each word with a semantic category
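To make the “slash rules” concrete, here is a minimal sketch of the two simplest CCG combinators, forward and backward application. It is not the C&C implementation; the `Slash` class, the function names, and the three-word lexicon are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Union


@dataclass(frozen=True)
class Slash:
    """A complex category: result/arg (forward) or result\\arg (backward)."""
    result: "Cat"
    direction: str  # "/" looks right for its argument, "\\" looks left
    arg: "Cat"


# A category is either an atomic label such as "NP" or a Slash.
Cat = Union[str, Slash]


def forward_apply(left: Cat, right: Cat) -> Optional[Cat]:
    """X/Y  Y  =>  X"""
    if isinstance(left, Slash) and left.direction == "/" and left.arg == right:
        return left.result
    return None


def backward_apply(left: Cat, right: Cat) -> Optional[Cat]:
    """Y  X\\Y  =>  X"""
    if isinstance(right, Slash) and right.direction == "\\" and right.arg == left:
        return right.result
    return None


# Hypothetical lexicon for "Rob likes logic".
NP = "NP"
VP = Slash("S", "\\", NP)          # S\NP
TRANSITIVE_VERB = Slash(VP, "/", NP)  # (S\NP)/NP

verb_phrase = forward_apply(TRANSITIVE_VERB, NP)  # likes + logic
sentence = backward_apply(NP, verb_phrase)        # Rob + (likes logic)
print(verb_phrase == VP, sentence)
```

A full CCG also has composition and type-raising rules; those are left out here to keep the sketch short.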

High-level overview of C&C • Find the highest-probability result with coherent semantics • Doesn’t this create millions of parses that need to be checked? • Yes. A typical sentence uses 25 GB of RAM. • That’s where the Beowulf cluster comes in.

Can we do this with NLTK? • NLTK’s feature-based parser has some machinery for doing semantics
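As a rough illustration of that machinery, the sketch below attaches a `SEM` feature holding a lambda expression to each rule of a tiny feature grammar and lets the parser compose them. The four-word grammar is hypothetical, and the example assumes NLTK is installed; it needs no corpus downloads.

```python
from nltk.grammar import FeatureGrammar
from nltk.parse import FeatureChartParser
from nltk.sem.logic import Expression

# Hypothetical toy grammar: each rule carries a SEM feature, and <...>
# marks a logic expression built from the children's SEM values.
GRAMMAR = r"""
% start S
S[SEM=<?subj(?vp)>] -> NP[SEM=?subj] VP[SEM=?vp]
NP[SEM=<?det(?nom)>] -> Det[SEM=?det] N[SEM=?nom]
Det[SEM=<\P Q.all x.(P(x) -> Q(x))>] -> 'every'
Det[SEM=<\P Q.exists x.(P(x) & Q(x))>] -> 'a'
N[SEM=<\x.dog(x)>] -> 'dog'
VP[SEM=<\x.bark(x)>] -> 'barks'
"""

grammar = FeatureGrammar.fromstring(GRAMMAR)
parser = FeatureChartParser(grammar)

trees = list(parser.parse("every dog barks".split()))

# The root node's SEM feature is the meaning of the whole sentence;
# simplify() beta-reduces any remaining lambda applications.
sem = trees[0].label()["SEM"].simplify()
print(sem)

# Bound variables may be renamed during parsing, so compare against the
# expected formula with logic-expression equality rather than string equality.
expected = Expression.fromstring("all x.(dog(x) -> bark(x))")
print(sem == expected)
```

The determiner entries reuse the quantifier denotations from the Quantifiers slide, so swapping `every` for `a` in the input yields the existential reading instead.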