Kernel Machines and Support Vector Machines (SVM) in Supervised Learning

Explore the intricacies of SVM and its use as a classifier in supervised learning, focusing on optimal hyperplane separation and overcoming difficulties for linearly separable patterns. Learn about Lagrange Multipliers, dual space conversion, and Kernel Trick for nonlinear data.

Kernel Machines and Support Vector Machines (SVM) in Supervised Learning

E N D

Presentation Transcript

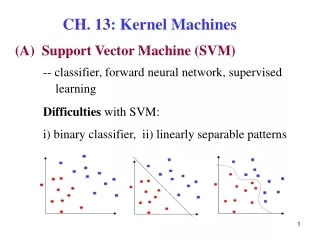

CH. 13: Kernel Machines (A) Support Vector Machine (SVM) -- classifier, forward neural network, supervised learning Difficulties with SVM: i) binary classifier, ii) linearly separable patterns

SVM finds optimal separating hyperplane (OSH) With the maximal margin between two support hyperplaneswhich are formed bysupport vectors.

Data points: Let the equation of the OSH be : normal vectorpoints toward positive data : distance to the origin e.g.,

Let : support hyperplanes Distances between them Then Rewrite Likewise, Margin:

Replace Minimizing Maximizing margin subject to ( ) are called satisfying support vectors Lagrange Multiplier Method – converts a constrained to an unconstrained problem.

The objective function: The optimal solution is given by the saddle point of , which is minimized w.r.t. w and b, while maximized w.r.t. i.e., . ThroughKarush–Kuhn–Tucker (KKT) conditions, L defined in the primal space of w, b, is translated to the dual space of

--- (A) --- (B) --- (C)

From (B), From (A), The problem becomes

After solving by letting find w by (A) . For non-support vectors, From (C), Support vectorsare those whose Determine b using any support vector. Consider any support vector : # support vectors

Overlapping patterns: the patterns that violate Define the constraint as Soft margin: : slack variables Two ways of violation:

Problem: Find a separating hyperplane for which • minimal (ii) (iii) minimal (soft error) Lagrange objective function in the primal space, C:penalty factor

ThroughKKT conditions, Dual space: the space of subject to Different from the separable case in that

e.g., 2D 3D (B) Kernel Machines 13.5 Kernel Trick Cover’s theorem: Make nonlinearly separable data linearly separable by mapping them from low to high dimensional space x : a vector in the original N-D space

: a set of functions that transform x to a space of infinite dimensionality. Let The OSH in the new space where 14

Substitute (2), (3) into (1), : kernel function Let 15

Mercer conditions: requirements of a kernel function A kernel function can be considered as a a measure of similarity between data points. 1. Symmetric 2. 3. 4.

13.6 Examples of Kernel Functions i) Linear kernel: ii) Polynomial kernel with degree d : e.g., d = 2

iii) Perceptron kernel: iv) Sigmoidal kernel: v) Radial basis functionkernel: 13.8 Multiple Kernel Learning A new kernel can be constructed by combining simpler kernels, e.g.,

(K > 2 classes) 13.9 Multiclass Kernel Machines • Train K 2-class classifiers , each one distinguishing one class from all other classes combined. During testing, 2. Train K(K-1)/2 pairwise classifiers 3. Train a single multiclass classifier

13.10 Kernel Machines for Regression • Consider a linear model Define constraints: : slack variables Problem: subject to constraints

The Lagrangian Through KKT conditions:

The dual: subject to

(a) The examples that fall in the tube have (b) The support vectors satisfy

The fitted line kernel function