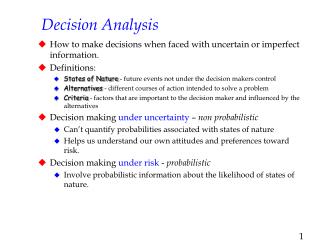

Decision Analysis

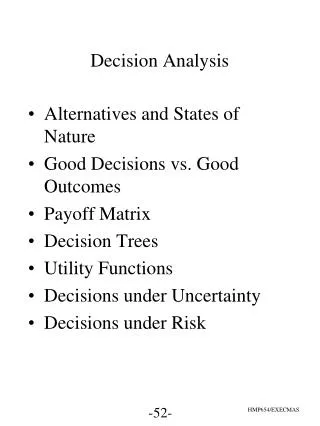

Decision Analysis. (to p2). What is it? What is the objective? More example Tutorial: 8 th ed:: 5, 18, 26, 37 9 th ed: 3, 12, 17, 24. (to p5). (to p50). What is it?. It concerns with making a decision or an action based on a series of possible outcomes

Decision Analysis

E N D

Presentation Transcript

Decision Analysis
- What is it?
- What is the objective?
- More examples
- Tutorial: 8th ed.: 5, 18, 26, 37; 9th ed.: 3, 12, 17, 24

What is it?
Decision analysis is concerned with choosing a decision (an action) based on a set of possible outcomes. The possible outcomes are normally presented in a table called a "payoff table".

Payoff Table
A payoff table consists of:
- Columns: states of nature (i.e., events)
- Rows: decision alternatives
- Entries: the corresponding payoff values

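Payoff Table: example (real estate investment, with the payoffs used in the calculations on the later slides)

| Decision (purchase) | Good economic conditions | Poor economic conditions |
|---|---|---|
| Apartment building | $50,000 | $30,000 |
| Office building | $100,000 | -$40,000 |
| Warehouse | $30,000 | $10,000 |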

Objective
Our objective is to determine which decision should be chosen based on the payoff table. How to achieve it?
- Simple two-dimensional cases
- Posterior analysis with Bayes' rule

How to achieve it?
- The final decision can be based on one of the following criteria: 1) maximax, 2) maximin, 3) minimax regret, 4) Hurwicz, 5) equal likelihood
- Which one is better?
- Ex. 4 and 7
- QM programs

The Maximax Criterion
An "optimistic" decision maker picks the decision that has the maximum of the maximum payoffs. How is this decision derived?

Procedural steps:
- Step 1: For each row (decision), select its maximum value.
- Step 2: Choose the decision that has the largest value from Step 1.
Example: the row maxima are 50,000, 100,000 and 30,000; the largest is 100,000.
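
The two steps above can be sketched in a few lines of Python. This is only an illustration: the `payoffs` dictionary holds the real estate payoffs used in the calculations elsewhere in this deck, and the variable names are made up for the sketch.

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}

# Step 1: the maximum payoff in each row (decision).
row_max = {decision: max(values) for decision, values in payoffs.items()}

# Step 2: the decision whose row maximum is largest.
maximax_choice = max(row_max, key=row_max.get)
print(maximax_choice, row_max[maximax_choice])
```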

The Maximin Criterion
A "pessimistic" decision maker selects the decision that has the maximum of the minimum payoffs in the payoff table. How is it obtained?

Procedural steps:
- Step 1: For each row (decision), obtain its minimum value.
- Step 2: Choose the decision that has the largest value from Step 1.
Example: the row minima are 30,000, -40,000 and 10,000; the largest is 30,000.
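
A minimal Python sketch of the maximin steps, assuming the same real estate payoffs used in the deck's calculations (the names `payoffs`, `row_min` and `maximin_choice` are illustrative only):

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}

# Step 1: the minimum payoff in each row (decision).
row_min = {decision: min(values) for decision, values in payoffs.items()}

# Step 2: the decision whose row minimum is largest.
maximin_choice = max(row_min, key=row_min.get)
print(maximin_choice, row_min[maximin_choice])
```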

The Minimax Regret Criterion
Regret is the difference between the payoff from the best decision under a given state of nature and the payoff of each other decision under that state. The decision maker attempts to avoid regret by selecting the decision alternative that minimizes the maximum regret. How is it obtained?

Procedural steps:
- Step 1: Find the maximum value in each column.
- Step 2: Subtract every entry of a column from that column's maximum.
- Step 3: Find the maximum value in each row of the resulting table.
- Step 4: Choose the decision that has the smallest value from Step 3.
Example, Step 1: the column maxima are 100,000 and 30,000.

Step 2: Subtract every entry of a column from that column's maximum, e.g. (100,000 - 50,000) = 50,000 and (30,000 - 30,000) = 0. Note: the result is the regret table.

Step 3: Find the maximum value in each row of the regret table: 50,000, 70,000 and 70,000.
Step 4: Choose the decision that has the smallest value from Step 3: 50,000 (apartment building). Select this decision.
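
The four minimax regret steps can be sketched as follows. This is an illustration under the assumption that the payoff table is the real estate example used in the deck's calculations; all names are hypothetical.

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}
n_states = 2

# Step 1: the maximum payoff in each column (state of nature).
col_max = [max(row[j] for row in payoffs.values()) for j in range(n_states)]

# Step 2: regret = column maximum minus the entry.
regret = {
    decision: [col_max[j] - row[j] for j in range(n_states)]
    for decision, row in payoffs.items()
}

# Step 3: the maximum regret in each row.
max_regret = {decision: max(r) for decision, r in regret.items()}

# Step 4: the decision with the smallest maximum regret.
minimax_regret_choice = min(max_regret, key=max_regret.get)
print(regret)
print(minimax_regret_choice, max_regret[minimax_regret_choice])
```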

Decision Making without Probabilities: The Hurwicz Criterion
- The Hurwicz criterion is a compromise between the maximax and maximin criteria.
- A coefficient of optimism, α, is a measure of the decision maker's optimism.
- The Hurwicz criterion multiplies the best payoff by α and the worst payoff by 1 - α for each decision, and the best result is selected.
Example (α = .4):
- Apartment building: $50,000(.4) + 30,000(.6) = $38,000 (select this, max)
- Office building: $100,000(.4) - 40,000(.6) = $16,000
- Warehouse: $30,000(.4) + 10,000(.6) = $18,000
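
A short Python sketch of the Hurwicz calculation. The coefficient of optimism is taken as 0.4, which is the weight applied to the best payoff in the slide's arithmetic; `ALPHA` and the other names are illustrative.

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}
ALPHA = 0.4  # coefficient of optimism, as implied by the slide's weights .4 and .6

# Weight the best payoff by alpha and the worst payoff by (1 - alpha).
hurwicz = {
    decision: ALPHA * max(values) + (1 - ALPHA) * min(values)
    for decision, values in payoffs.items()
}

hurwicz_choice = max(hurwicz, key=hurwicz.get)
print({d: round(v) for d, v in hurwicz.items()}, hurwicz_choice)
```

Setting `ALPHA = 1` reduces this to maximax, and `ALPHA = 0` reduces it to maximin.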

Decision Making without Probabilities: The Equal Likelihood Criterion
- The equal likelihood (or Laplace) criterion multiplies the decision payoff for each state of nature by an equal weight, thus assuming that the states of nature are equally likely to occur.
Example:
- Apartment building: $50,000(.5) + 30,000(.5) = $40,000 (select this, max)
- Office building: $100,000(.5) - 40,000(.5) = $30,000
- Warehouse: $30,000(.5) + 10,000(.5) = $20,000
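
With equal weights, the criterion is simply the average payoff of each row. A minimal sketch, assuming the deck's real estate payoffs (names are illustrative):

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}

# Each state of nature gets weight 1/n, so the weighted value is the row mean.
equal_likelihood = {
    decision: sum(values) / len(values) for decision, values in payoffs.items()
}

laplace_choice = max(equal_likelihood, key=equal_likelihood.get)
print(equal_likelihood, laplace_choice)
```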

Decision Making without Probabilities: Summary of Criteria Results
- A dominant decision is one that has a better payoff than another decision under each state of nature.
- The appropriate criterion depends on the "risk" personality and philosophy of the decision maker.

| Criterion | Decision (purchase) |
|---|---|
| Maximax | Office building |
| Maximin | Apartment building |
| Minimax regret | Apartment building |
| Hurwicz | Apartment building |
| Equal likelihood | Apartment building |

Decision Making without Probabilities: Solutions with QM for Windows (1 of 2). Exhibit 12.1

Decision Making without Probabilities: Solutions with QM for Windows (2 of 2). Exhibit 12.2, Exhibit 12.3

Posterior analysis with Bayes' rule
Here, we need to understand the following concepts:
1) Expected value
2) Expected opportunity loss
3) Expected value of perfect information (Ex. 18 and 26)
4) Decision trees: a) basic tree, b) sequential tree
5) Posterior analysis with Bayes' rule (Ex. 33, 35 and 37)

Expected Value
- The expected value of a decision is computed by multiplying each of its outcomes by the probability of the corresponding state of nature and summing the results.
- EV(Apartment) = $50,000(.6) + 30,000(.4) = $42,000
- EV(Office) = $100,000(.6) - 40,000(.4) = $44,000 (select this, max)
- EV(Warehouse) = $30,000(.6) + 10,000(.4) = $22,000
Table 12.7 Payoff Table with Probabilities for States of Nature
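
The expected value calculation can be sketched in Python as below, assuming probabilities of .6 (good conditions) and .4 (poor conditions) as in the slide; the names are illustrative.

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}
probs = (0.6, 0.4)  # P(good), P(poor)

# Probability-weighted sum of each row.
expected_value = {
    decision: sum(p * x for p, x in zip(probs, values))
    for decision, values in payoffs.items()
}

ev_choice = max(expected_value, key=expected_value.get)
print({d: round(v) for d, v in expected_value.items()}, ev_choice)
```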

Decision Making with Probabilities: Expected Opportunity Loss
The expected opportunity loss (EOL) is the expected value of the regret for each decision, computed from the regret table of the minimax regret criterion. The decision with the minimum EOL is selected.
- EOL(Apartment) = $50,000(.6) + 0(.4) = $30,000
- EOL(Office) = $0(.6) + 70,000(.4) = $28,000 (select this, min)
- EOL(Warehouse) = $70,000(.6) + 20,000(.4) = $50,000
Table 12.8 Regret (Opportunity Loss) Table with Probabilities for States of Nature
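
A minimal sketch of the EOL calculation, building the regret table from the deck's payoffs and then weighting it by the state probabilities (.6 and .4). Names are illustrative.

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}
probs = (0.6, 0.4)  # P(good), P(poor)

# Regret table: column maximum minus each entry.
col_max = [max(row[j] for row in payoffs.values()) for j in range(len(probs))]
regret = {
    decision: [col_max[j] - row[j] for j in range(len(probs))]
    for decision, row in payoffs.items()
}

# Expected opportunity loss: probability-weighted regret of each row.
eol = {
    decision: sum(p * r for p, r in zip(probs, row))
    for decision, row in regret.items()
}

eol_choice = min(eol, key=eol.get)
print({d: round(v) for d, v in eol.items()}, eol_choice)
```

The decision with the minimum EOL is the same one that maximizes expected value.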

Decision Making with Probabilities: Solution of Expected Value Problems with QM for Windows. Exhibit 12.4

Decision Making with Probabilities: Solution of Expected Value Problems with Excel and Excel QM (1 of 2). Exhibit 12.5

Decision Making with Probabilities: Solution of Expected Value Problems with Excel and Excel QM (2 of 2). Exhibit 12.6

Expected Value of Perfect Information
The expected value of perfect information (EVPI) is the maximum amount a decision maker would pay for additional information.
- EVPI = (expected value with perfect information) - (expected value without perfect information)
- EVPI equals the expected opportunity loss (EOL) for the best decision.
How are they computed?

Decision Making with Probabilities: EVPI Example
Table 12.9 Payoff Table with Decisions, Given Perfect Information (the best outcome in each column is used)
- Decision with perfect information: $100,000(.60) + 30,000(.40) = $72,000
- Decision without perfect information: EV(office) = $100,000(.60) - 40,000(.40) = $44,000
- EVPI = $72,000 - 44,000 = $28,000
- EOL(office) = $0(.60) + 70,000(.40) = $28,000
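
The EVPI arithmetic above can be sketched as follows, assuming the deck's payoffs and probabilities; the variable names are made up for illustration.

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}
probs = (0.6, 0.4)  # P(good), P(poor)

# With perfect information we always pick the best payoff in the state that occurs.
ev_with_perfect_info = sum(
    p * max(row[j] for row in payoffs.values()) for j, p in enumerate(probs)
)

# Without it we commit to the single decision with the highest expected value.
ev_without_perfect_info = max(
    sum(p * x for p, x in zip(probs, row)) for row in payoffs.values()
)

evpi = ev_with_perfect_info - ev_without_perfect_info
print(round(ev_with_perfect_info), round(ev_without_perfect_info), round(evpi))
```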

Decision Making with Probabilities: EVPI with QM for Windows. Exhibit 12.7

Decision Trees (1 of 2)
A decision tree is a diagram consisting of decision nodes (represented as squares), probability nodes (circles), and decision alternatives (branches).
Table 12.10 Payoff Table for Real Estate Investment Example
Figure 12.1 Decision tree for real estate investment example

Decision Trees (2 of 2)
The expected value is computed at each probability node:
- EV(node 2) = .60($50,000) + .40(30,000) = $42,000
- EV(node 3) = .60($100,000) + .40(-40,000) = $44,000
- EV(node 4) = .60($30,000) + .40(10,000) = $22,000
Then choose the branch with the maximum value (node 3, $44,000) and mark the path(s) not chosen.

Decision Making with Probabilities: Decision Trees with QM for Windows. Exhibit 12.8

Decision Making with Probabilities: Decision Trees with Excel and TreePlan (1 of 4). Exhibit 12.9

Decision Making with Probabilities: Decision Trees with Excel and TreePlan (2 of 4). Exhibit 12.10

Decision Making with Probabilities: Decision Trees with Excel and TreePlan (3 of 4). Exhibit 12.11

Decision Making with Probabilities: Decision Trees with Excel and TreePlan (4 of 4). Exhibit 12.12

Sequential Decision Trees (1 of 2)
A sequential decision tree is used to illustrate a series of decisions. How do we make use of one?

Sequential Decision Trees (2 of 2)
Compute the expected value at each stage, then choose the action that produces the highest value.
Extra information (annotations on the tree):
- Expected value at a probability node: 0.6*2M + 0.4*0.225M = 1.29M
- Decision: max(1.29M - 0.8M, 1.36M - 0.2M) = 1.16M
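
The rollback idea can be sketched with a small recursive function. Only the numbers shown on the slide are used (in $ millions); the tree encoding, the `rollback` function and the treatment of 1.36M as an already-computed value are assumptions made for this illustration, since the full tree is not reproduced here.

```python
def rollback(node):
    """Return the value of a node by working backwards from the payoffs."""
    kind, content = node
    if kind == "payoff":
        return content
    if kind == "chance":
        # Expected value over (probability, child) pairs.
        return sum(p * rollback(child) for p, child in content)
    if kind == "decision":
        # Best branch after subtracting each branch's cost, over (cost, child) pairs.
        return max(rollback(child) - cost for cost, child in content)
    raise ValueError(f"unknown node type: {kind}")

# Values in $ millions, taken from the slide.
first_branch = ("chance", [(0.6, ("payoff", 2.0)), (0.4, ("payoff", 0.225))])
second_branch = ("payoff", 1.36)  # value already rolled back on the slide

tree = ("decision", [(0.8, first_branch), (0.2, second_branch)])

print(round(rollback(first_branch), 2))  # chance-node value
print(round(rollback(tree), 2))          # value of the best action
```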

Sequential Decision Tree Analysis with QM for Windows Exhibit 12.13

Sequential Decision Tree Analysis with Excel and TreePlan Exhibit 12.14

Bayesian Analysis (1 of 3)
Bayesian analysis deals with posterior information, which can be computed from conditional probabilities. Example: using the expected value criterion, the best decision was to purchase the office building, with an expected value of $44,000 and an EVPI of $28,000. Now, what posterior information can be obtained here?

Bayesian Analysis (2 of 3)
Consider the following notation:
- g = good economic conditions
- p = poor economic conditions
- P = positive economic report
- N = negative economic report
From the economic analyst we can obtain the following conditional probabilities (a conditional probability is the probability that an event will occur given that another event has already occurred):
- P(P|g) = .80, P(N|g) = .20
- P(P|p) = .10, P(N|p) = .90
Note that the conditional probabilities for each state total 1.0, e.g. .80 + .20 = 1.0. From the above information we can further compute posterior probabilities such as P(g|P). How does it work?

Bayesian Analysis (3 of 3)
A posterior probability is the altered marginal probability of an event based on additional information. Example of its application: let P(g) = .60 and P(p) = .40. Then the posterior probability is:
P(g|P) = P(P|g)P(g) / [P(P|g)P(g) + P(P|p)P(p)] = (.80)(.60) / [(.80)(.60) + (.10)(.40)] = .923
Is there a systematic way to compute it? The other posterior (revised) probabilities are: P(g|N) = .250, P(p|P) = .077, P(p|N) = .750. Where do these values appear in our tree diagram?

Use this table to compute them.
Table 12.12 Computation of Posterior Probabilities
P(P) = P(P|g)P(g) + P(P|p)P(p) = 0.8*0.6 + 0.1*0.4 = 0.52
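
The tabular computation can be sketched in Python: multiply each prior by the conditional probability of the report, sum to get the marginal probability of the report, then divide. The priors and conditional probabilities are those given in the slides; the `posterior` function and dictionary layout are illustrative only.

```python
prior = {"g": 0.60, "p": 0.40}  # P(g), P(p)
likelihood = {                   # P(report | state)
    "g": {"P": 0.80, "N": 0.20},
    "p": {"P": 0.10, "N": 0.90},
}

def posterior(report):
    """Return (marginal probability of the report, posterior of each state)."""
    joint = {state: likelihood[state][report] * prior[state] for state in prior}
    marginal = sum(joint.values())
    return marginal, {state: joint[state] / marginal for state in joint}

for report in ("P", "N"):
    marginal, post = posterior(report)
    print(report, round(marginal, 2), {s: round(v, 3) for s, v in post.items()})
```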

Decision Trees with Posterior Probabilities (1 of 2)
- The decision tree below differs from earlier versions in that:
  1. Two new branches at the beginning of the tree represent report outcomes;
  2. Newly introduced
Figure 12.5 Decision tree with posterior probabilities (figure labels: "Old one", "Making decision")

Decision Trees with Posterior Probabilities (2 of 2)
- EV(apartment building) = $50,000(.923) + 30,000(.077) = $48,460
- EV(strategy) = $89,220(.52) + 35,000(.48) = $63,194
The derivation uses the decision tree on the previous slide (Figure 12.5). What good are these values?
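
A sketch of how the strategy value can be reproduced: for each report, compute the expected value of every decision with the posterior probabilities, keep the best one, and weight the results by the report probabilities. The rounded posteriors (.923, .077, .250, .750) and report probabilities (.52, .48) are taken from the slides; the payoffs are the deck's real estate example and the names are illustrative.

```python
# Payoffs per decision as (good conditions, poor conditions); values from the deck's example.
payoffs = {
    "Apartment building": (50_000, 30_000),
    "Office building": (100_000, -40_000),
    "Warehouse": (30_000, 10_000),
}
# Posterior (P(g | report), P(p | report)), rounded as on the slides.
posteriors = {"P": (0.923, 0.077), "N": (0.250, 0.750)}
report_prob = {"P": 0.52, "N": 0.48}

best = {}
for report, probs in posteriors.items():
    ev = {d: sum(p * x for p, x in zip(probs, row)) for d, row in payoffs.items()}
    decision = max(ev, key=ev.get)
    best[report] = (decision, ev[decision])

# Expected value of the strategy: weight each report's best EV by the report probability.
ev_strategy = sum(report_prob[r] * value for r, (_, value) in best.items())
print(best)
print(round(ev_strategy))
```

Under these numbers the strategy is to purchase the office building after a positive report and the apartment building after a negative report.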

We can use this information to determine how much we should pay for, say, a sample survey. How?

The Expected Value of Sample Information
- The expected value of sample information (EVSI) = expected value with information - expected value without information.
- For the example problem, EVSI = $63,194 - 44,000 = $19,194, where $63,194 comes from the decision tree and $44,000 is the expected value without perfect information.
- How efficient is this information?

Efficiency
- The efficiency of sample information is the ratio of the expected value of sample information to the expected value of perfect information:
- efficiency = EVSI / EVPI = $19,194 / 28,000 ≈ 0.69
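
The EVSI and efficiency arithmetic, as a tiny Python check using the figures from the slides (variable names are illustrative):

```python
ev_with_sample_info = 63_194  # from the decision tree with posterior probabilities
ev_without_info = 44_000      # best expected value without additional information
evpi = 28_000                 # expected value of perfect information

evsi = ev_with_sample_info - ev_without_info
efficiency = evsi / evpi

print(evsi, round(efficiency, 4))
```

An efficiency close to 1 would mean the sample information is almost as valuable as perfect information.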

Decision Analysis with Additional Information: Utility
Table 12.13 Payoff Table for Auto Insurance Example
- Expected cost (insurance) = .992($500) + .008(500) = $500
- Expected cost (no insurance) = .992($0) + .008(10,000) = $80
- On expected cost alone, the decision should be not to purchase insurance, but people almost always do purchase insurance.
- Utility is a measure of personal satisfaction derived from money.
- Utiles are units of subjective measures of utility.
- Risk averters forgo a high expected value to avoid a low-probability disaster.
- Risk takers take a chance for a bonanza on a very low-probability event in lieu of a sure thing.
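
The expected cost comparison can be checked with a few lines of Python. The probabilities and costs are those on the slide; treating the two states as "no accident" and "accident" is an assumption made for this sketch, since the table itself is not reproduced here.

```python
probs = (0.992, 0.008)  # assumed states: (no accident, accident)
costs = {
    "insurance": (500, 500),
    "no insurance": (0, 10_000),
}

# Probability-weighted cost of each decision.
expected_cost = {
    decision: sum(p * c for p, c in zip(probs, row))
    for decision, row in costs.items()
}

cheapest = min(expected_cost, key=expected_cost.get)
print({d: round(v, 2) for d, v in expected_cost.items()}, cheapest)
```

The expected cost criterion favors no insurance, which is why the slide turns to utility to explain why people buy it anyway.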

Example Problem Solution (1 of 7)
Consider:
a. Determine the best decision without probabilities using the 5 criteria of the chapter.
b. Determine the best decision with probabilities, assuming a .70 probability of good conditions and .30 of poor conditions. Use the expected value and expected opportunity loss criteria.
c. Compute the expected value of perfect information.
d. Develop a decision tree with the expected value at the nodes.
e. Given P(P|g) = .70, P(N|g) = .30, P(P|p) = .20, P(N|p) = .80, determine the posterior probabilities using Bayes' rule.
f. Perform a decision tree analysis using the posterior probabilities obtained in part e.