Text mining

Text mining
Gergely Kótyuk
Laboratory of Cryptography and System Security (CrySyS)
Budapest University of Technology and Economics
www.crysys.hu

Introduction
• Generic model
• Document preprocessing
• Text mining methods

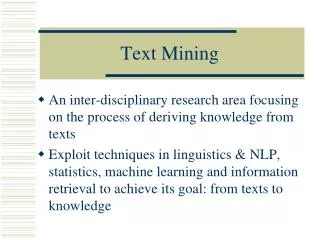

Text Mining Tasks
• Classification (supervised learning)
  • Binary classification
  • Single-label (multi-class) classification
  • Multi-label classification
  • Multi-level (hierarchical) classification
• Clustering (unsupervised learning)
• Summarization
  • Extraction: the summary consists only of parts of the original text
  • Abstraction: the summary may introduce text that is not included in the original text
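To make the extraction/abstraction distinction concrete, here is a minimal sketch of an extractive summarizer. It only copies whole sentences from the input and never writes new text. The scoring rule is an assumption chosen for brevity: each sentence is ranked by the average frequency of its words across the whole text (a simple Luhn-style heuristic, not a method from the slides). The function names `extractive_summary` and `_words` are hypothetical.

```python
import re
from collections import Counter


def _words(sentence):
    # Lowercased alphabetic tokens of one sentence.
    return re.findall(r"[a-z]+", sentence.lower())


def extractive_summary(text, n_sentences=1):
    """Return the n highest-scoring sentences of `text`, copied verbatim."""
    # Naive sentence split after '.', '!' or '?' (an assumption; real splitters do more).
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(w for s in sentences for w in _words(s))

    def score(sentence):
        ws = _words(sentence)
        # Average frequency of the sentence's words across the whole text.
        return sum(freq[w] for w in ws) / len(ws) if ws else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    chosen = sorted(ranked[:n_sentences])  # restore the original sentence order
    return [sentences[i] for i in chosen]
```

An abstractive summarizer would instead generate new sentences. That usually requires a language model rather than a ranking rule like this one.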

Solutions
• Classification
  • Decision tree
  • Neural network
  • Bayes network
• Clustering
  • k-means
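The k-means entry can be illustrated with a short, dependency-free sketch that clusters document vectors, such as the term-count vectors described in the next section. It alternates two steps until the assignments stop changing:

- an assignment step, where each document goes to its nearest centroid;
- an update step, where each centroid moves to the mean of its documents.

Squared Euclidean distance and random initial centroids with a fixed `seed` are assumptions made for simplicity. The names `kmeans` and `sq_dist` are hypothetical.

```python
import random


def sq_dist(u, v):
    # Squared Euclidean distance between two term-count vectors.
    return sum((a - b) ** 2 for a, b in zip(u, v))


def kmeans(vectors, k, max_iter=100, seed=0):
    """Cluster document vectors into k groups; returns (labels, centroids)."""
    rng = random.Random(seed)
    centroids = [list(v) for v in rng.sample(vectors, k)]  # random initial centroids
    labels = None
    for _ in range(max_iter):
        # Assignment step: nearest centroid for every document.
        new_labels = [min(range(k), key=lambda c: sq_dist(v, centroids[c])) for v in vectors]
        if new_labels == labels:
            break  # converged: assignments no longer change
        labels = new_labels
        # Update step: each centroid becomes the mean of its member vectors.
        for c in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids
```

For example, `kmeans([[5, 0, 1], [4, 1, 0], [0, 5, 4], [1, 4, 5]], 2)` puts the first two and the last two documents into separate clusters.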

Document preprocessing
• Goal: represent any text compactly, with a fixed number of parameters
• Representation: vector space model

Vector space model
• The text is tokenized into words
• The words are reduced (canonized) to their base forms; we refer to base words as terms
• A dictionary is built: the set of terms occurring in the document collection
• Each document is represented as a vector: the ith element of the vector is the number of times the ith term of the dictionary occurs in the document
• The collection of documents is represented by the term-document matrix, whose columns are the document vectors
• Problem: the number of dimensions is too large
  Solution: feature selection
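The pipeline above can be sketched in a few lines: tokenize, canonize, build a shared dictionary, then count. The `canonize` function is a deliberately crude stand-in for a real stemmer or lemmatizer. It only lowercases and strips a trailing plural "s", which is an assumption for illustration. Both function names are hypothetical.

```python
import re
from collections import Counter


def canonize(word):
    # Crude stand-in for a real stemmer/lemmatizer (assumption): lowercase, drop a plural "s".
    w = word.lower()
    return w[:-1] if len(w) > 3 and w.endswith("s") else w


def term_document_matrix(docs):
    """Return (dictionary, matrix) where matrix[i][j] = count of term i in document j."""
    tokenized = [[canonize(w) for w in re.findall(r"[A-Za-z]+", d)] for d in docs]
    # One dictionary for the whole collection, so every document vector has the same length.
    dictionary = sorted({t for toks in tokenized for t in toks})
    counts = [Counter(toks) for toks in tokenized]
    # Row i = term i of the dictionary, column j = document j.
    matrix = [[c[t] for c in counts] for t in dictionary]
    return dictionary, matrix
```

For `["Cats chase mice", "Mice eat cheese and mice run"]`, the row for `"mice"` is `[1, 2]`. The dictionary has seven terms, so each document becomes a 7-dimensional vector. With a realistic collection this number grows into the tens of thousands, which is the dimensionality problem noted above.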

Dimension Reduction
• Feature Selection: find a subset of the original variables
  • Document Frequency Thresholding
    • Omit the words whose document frequency is above an upper threshold, because these words are not discriminative
    • Omit the words whose document frequency is below a lower threshold, because these words do not carry much information
  • Information gain based feature selection (information theory)
  • Chi-square based feature selection (statistics)
• Feature Extraction: transform the data into fewer dimensions
  • Latent Semantic Indexing (LSI)
  • Principal Component Analysis (PCA)
  • Nonlinear methods
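Two of the feature-selection criteria can be sketched directly. Information gain is not shown.

- Document frequency thresholding counts each term once per document and keeps it only if its count lies between two thresholds.
- The chi-square score measures how strongly a term depends on a class. It uses the usual 2x2 contingency table of term presence against class membership: N(AD − CB)² / ((A+C)(B+D)(A+B)(C+D)).

Documents are assumed to be already tokenized into lists of terms. The function names are hypothetical.

```python
from collections import Counter


def df_threshold(docs, min_df, max_df):
    """Keep terms whose document frequency n satisfies min_df <= n <= max_df."""
    df = Counter(t for doc in docs for t in set(doc))  # count each term once per document
    return sorted(t for t, n in df.items() if min_df <= n <= max_df)


def chi_square(docs, labels, term, cls):
    """Chi-square statistic of `term` vs. class `cls` from a 2x2 contingency table."""
    a = b = c = d = 0
    for doc, label in zip(docs, labels):
        has_term, in_cls = term in doc, label == cls
        if has_term and in_cls:
            a += 1  # term present, document in class
        elif has_term:
            b += 1  # term present, document in another class
        elif in_cls:
            c += 1  # term absent, document in class
        else:
            d += 1  # term absent, document in another class
    n = a + b + c + d
    denom = (a + c) * (b + d) * (a + b) * (c + d)
    return n * (a * d - c * b) ** 2 / denom if denom else 0.0
```

A term that appears only in one class (like `"goal"` in sports documents) gets a high score. A term spread evenly across classes (like `"team"` below) scores 0, so it would be dropped first.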

Latent Semantic Indexing (LSI)
• Singular value decomposition (SVD) is applied to the term-document matrix
• The features (singular vectors) belonging to the k largest singular values represent the term-document matrix well; only these k features are used
• LSI regards documents that share many words as semantically close
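A minimal NumPy sketch of the LSI step follows. It decomposes A = U Σ Vᵀ, keeps the k largest singular values, and represents each document by its coordinates in the resulting k-dimensional latent space. The function name `lsi` and its return values are assumptions for illustration.

```python
import numpy as np


def lsi(A, k):
    """A: terms x documents matrix. Returns (k-dim document vectors, rank-k approximation)."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)  # s is sorted in descending order
    U_k, s_k, Vt_k = U[:, :k], s[:k], Vt[:k, :]  # keep the k largest singular values
    doc_vectors = (np.diag(s_k) @ Vt_k).T  # row j = document j in the latent space
    A_k = U_k @ np.diag(s_k) @ Vt_k  # best rank-k approximation of A (least squares)
    return doc_vectors, A_k
```

Documents can then be compared by cosine similarity of their `doc_vectors` rows instead of their full term vectors.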

Principal Component Analysis (PCA)
• Also called the Karhunen-Loève transform (KLT)
• A linear technique
• Maps the data to a lower-dimensional space in such a way that the variance of the data in the low-dimensional representation is maximized
• The algorithm
  • The covariance matrix of the mean-centered data is constructed (the correlation matrix, if the variables are also standardized)
  • The eigenvectors and eigenvalues of this matrix are calculated
  • The data is projected onto the space spanned by the eigenvectors that belong to the largest eigenvalues
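The three algorithm steps map onto a short NumPy sketch. Rows of `X` are assumed to be observations (e.g. documents) and columns variables (e.g. terms). The covariance matrix is used; standardizing the columns first would give the correlation-matrix variant. The function name `pca` is hypothetical.

```python
import numpy as np


def pca(X, k):
    """Project the rows of X onto the k principal components; returns (Z, eigenvalues)."""
    Xc = X - X.mean(axis=0)  # center each variable
    C = np.cov(Xc, rowvar=False)  # covariance matrix (variables x variables)
    eigvals, eigvecs = np.linalg.eigh(C)  # symmetric matrix; eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:k]  # indices of the k largest eigenvalues
    W = eigvecs[:, order]
    return Xc @ W, eigvals[order]  # variance of column j of Z equals eigenvalue j
```

For points lying on a line, such as `[[0, 0], [1, 2], [2, 4], [3, 6]]`, a single component captures all of the variance (25/3 here), and the second eigenvalue is zero.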

Kernel PCA
• A nonlinear method
• PCA + kernel trick
• Kernel trick (in general)
  • We map observations from a general set S into a higher-dimensional space V
  • We hope that a nonlinear classification problem in S becomes a linear classification problem in V
  • The trick lets us avoid explicitly computing the mapping of the observations from S to V
  • We use a learning algorithm that needs only the dot product operation in V
  • We use a mapping for which the dot product in V can be calculated by a kernel function K evaluated in S (the original space)
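The following NumPy sketch shows the kernel trick applied to PCA. The mapping into V is never computed: only the kernel matrix K(xᵢ, xⱼ) is evaluated in S, centered as if in V, and eigendecomposed. The Gaussian (RBF) kernel exp(−γ‖x − y‖²) and the parameter `gamma` are assumptions; any valid kernel could be substituted. Function names are hypothetical.

```python
import numpy as np


def rbf_kernel(X, gamma):
    # K[i, j] = exp(-gamma * ||x_i - x_j||^2), computed entirely in the original space S.
    sq = np.sum(X ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2 * X @ X.T
    return np.exp(-gamma * np.maximum(d2, 0.0))  # clip tiny negatives from rounding


def kernel_pca(X, k, gamma=1.0):
    """Return (projections of the training points on k components, eigenvalues)."""
    K = rbf_kernel(X, gamma)
    n = K.shape[0]
    one = np.full((n, n), 1.0 / n)
    Kc = K - one @ K - K @ one + one @ K @ one  # center the mapped data in V
    eigvals, eigvecs = np.linalg.eigh(Kc)  # ascending order
    idx = np.argsort(eigvals)[::-1][:k]
    lambdas, alphas = eigvals[idx], eigvecs[:, idx]
    # Projection of training point i on component j is sqrt(lambda_j) * alpha_ij.
    return alphas * np.sqrt(np.maximum(lambdas, 0.0)), lambdas
```

The only operations on observations are kernel evaluations, i.e. dot products in V. This is exactly what makes PCA compatible with the kernel trick.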

Manifold learning techniques
• They minimize a cost function that preserves local properties of the data
• Methods
  • Locally Linear Embedding (LLE)
  • Hessian LLE
  • Laplacian Eigenmaps
  • Local Tangent Space Alignment (LTSA)
  • Maximum Variance Unfolding (MVU)
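As an example of a cost function that preserves local properties, here is a compact NumPy sketch of standard LLE. It works in two stages:

- Each point is written as a weighted combination of its nearest neighbors, with weights that sum to 1.
- The low-dimensional coordinates are chosen to preserve those same weights, by taking the bottom eigenvectors of (I − W)ᵀ(I − W) and skipping the constant one.

The regularization constant `reg` and the function name are assumptions, and the brute-force neighbor search is only suitable for small data sets.

```python
import numpy as np


def lle(X, n_neighbors, n_components, reg=1e-3):
    """Standard Locally Linear Embedding of the rows of X."""
    n = X.shape[0]
    d2 = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)  # pairwise squared distances
    np.fill_diagonal(d2, np.inf)  # a point is not its own neighbor
    W = np.zeros((n, n))
    for i in range(n):
        nbrs = np.argsort(d2[i])[:n_neighbors]
        Z = X[nbrs] - X[i]  # neighbors relative to x_i
        C = Z @ Z.T  # local Gram matrix (k x k)
        C += reg * np.trace(C) * np.eye(n_neighbors)  # regularize; needed if k > dimension
        w = np.linalg.solve(C, np.ones(n_neighbors))
        W[i, nbrs] = w / w.sum()  # reconstruction weights sum to 1
    I = np.eye(n)
    M = (I - W).T @ (I - W)
    eigvals, eigvecs = np.linalg.eigh(M)  # ascending order
    # The bottom eigenvector is constant (eigenvalue 0); keep the next n_components.
    return eigvecs[:, 1:n_components + 1]
```

On points sampled along a straight line, the one-dimensional LLE embedding recovers the position along the line, up to sign and scale.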

Maximum Variance Unfolding (MVU)
• Instead of using a fixed kernel, it learns the kernel using semidefinite programming
• It exactly preserves all pairwise distances between nearest neighbors
• It maximizes the distances between points that are not nearest neighbors
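The semidefinite program behind MVU is commonly stated as follows, where K is the learned kernel (Gram) matrix of the embedded points yᵢ, so that Kᵢⱼ = yᵢ · yⱼ. The last constraint fixes the distances between nearest neighbors. Because the embedding is centered, tr(K) = (1/2n) Σᵢⱼ ‖yᵢ − yⱼ‖². With neighbor distances held fixed, maximizing the trace therefore pushes non-neighbors apart.

```latex
\begin{aligned}
\max_{K}\quad & \operatorname{tr}(K) \\
\text{s.t.}\quad & K \succeq 0, \qquad \sum_{i,j} K_{ij} = 0, \\
& K_{ii} - 2K_{ij} + K_{jj} = \lVert x_i - x_j \rVert^2
  \quad \text{for all nearest-neighbor pairs } (i, j).
\end{aligned}
```

The low-dimensional coordinates are then read off from the top eigenvectors of the learned K, as in kernel PCA.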