Test Oracles in Software Testing

Explore the taxonomy of test oracles, generations of automation, challenges faced, and types of oracles in software testing. Discover the advantages, limitations, and evolving methodologies. Source: Douglas Hoffman, SQM, LLC.


Presentation Transcript

Taxonomy of Test Oracles • Mudit Agrawal • Course: Introduction to Software Testing, Fall 2006

Motivation • Shortcomings of automated tests (as compared to human ‘eye-ball oracle’) • Factors influencing test oracles • Environmental (private, global) variables • State (initial and present) of the program • Dependence on test case • What kind of oracle(s) are best suited for which applications?

Contents • Mutating Automated Tests – Challenges for test oracles • Generations of Automations and Oracles • Challenges for Oracles • Types of Oracles • Conclusion

Automated Tests • Advantages • No intervention needed after launching tests • Automatically set up and/or record the relevant test environment • Evaluate actual against expected results • Report pass/fail analysis • Limitations • Less likely to uncover latent defects • Do nothing different from one run to the next • Oracle is not ‘complete’

Limited by Test Oracle • ‘Predicting’ and comparing the results • Playing twenty questions with all the questions written down in advance! • Has to be influenced by • Data • Program State • Configuration of the system environment

Testing with the Oracle • Source: Douglas Hoffman, SQM, LLC

Generations of Automation: First Generation Automation • Automate existing tests by creating equivalent exercises • Self-verifying tests • Hard-coded oracles • Limitations • Handling negative test cases
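As an illustration of a self-verifying test with a hard-coded oracle, here is a minimal sketch. The function `add_tax` is a hypothetical system under test that does not come from the slides, and the expected values were worked out by hand in advance.

```python
# Hypothetical system under test (SUT); not from the original slides.
def add_tax(price_cents: int, rate_percent: int) -> int:
    """Return the price plus tax, rounded down to whole cents."""
    return price_cents + (price_cents * rate_percent) // 100


# Hard-coded oracle: every expected value is fixed when the test is written.
HARD_CODED_CASES = [
    ((1000, 10), 1100),
    ((999, 5), 1048),
    ((0, 20), 0),
]


def run_self_verifying_tests() -> list[str]:
    """Run every case and return failure messages (an empty list means pass)."""
    failures = []
    for args, expected in HARD_CODED_CASES:
        actual = add_tax(*args)
        if actual != expected:
            failures.append(f"add_tax{args}: expected {expected}, got {actual}")
    return failures
```

The test can only ever ask the questions listed in `HARD_CODED_CASES`, which is the limitation the later generations try to address.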

Second Generation Automation • Automated Oracles • Emphasis on expected results – more exhaustive input variation, coverage • Increasing • Frequency • Intensity • Duration of automated test activities (load testing)

Second Generation continued… • Random selection among alternatives • Partial domain coverage • Dependence of system state on previous test cases • Mechanism to determine whether SUT’s behavior is expected • Pseudo random number generator • Test recovery
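A rough sketch of second-generation style testing, assuming a sorting routine as the SUT (`sut_sort` is a stand-in, not something named in the slides). Inputs come from a seeded pseudo-random number generator, an automated oracle decides whether the behavior is expected, and the seed is reported so that a failing run can be replayed.

```python
import random
from collections import Counter


def sut_sort(values):
    """Stand-in SUT (assumption): any sorting routine could be plugged in here."""
    return sorted(values)


def is_sorted_permutation(original, result) -> bool:
    """Automated oracle: result is ordered and holds the same elements as original."""
    ordered = all(a <= b for a, b in zip(result, result[1:]))
    return ordered and Counter(original) == Counter(result)


def run_random_tests(seed: int, runs: int = 100):
    """Return None if all runs pass, else (seed, run index, failing input)."""
    rng = random.Random(seed)  # seeded, so the whole sequence is reproducible
    for index in range(runs):
        size = rng.randint(0, 20)
        data = [rng.randint(-1000, 1000) for _ in range(size)]
        if not is_sorted_permutation(data, sut_sort(data)):
            return seed, index, data
    return None
```

Because the oracle checks a property instead of a stored answer, it works for inputs nobody wrote down in advance.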

Third Generation Automation • Take into account knowledge and visibility • Software instrumentation • Multi-threaded tests • Fall-back comparisons • Using other oracles

Third Generation continued… • Heuristic Oracles • Fuzzy comparisons, approximations • Diagnostics • Looks for errors • Performs additional tests based on the specific type of error encountered
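A fuzzy comparison can be as small as a tolerance check. The sketch below uses Python's standard `math.isclose`; the helper name `fuzzy_equal` and the tolerance values are illustrative assumptions, not part of the original material.

```python
import math


def fuzzy_equal(actual: float, expected: float,
                rel_tol: float = 1e-9, abs_tol: float = 1e-12) -> bool:
    """Approximate comparison: pass when the values agree within a tolerance."""
    return math.isclose(actual, expected, rel_tol=rel_tol, abs_tol=abs_tol)


# An exact comparison rejects a result that is correct up to rounding error,
# while the fuzzy comparison accepts it.
exact_match = (0.1 + 0.2) == 0.3
fuzzy_match = fuzzy_equal(0.1 + 0.2, 0.3)
```

Choosing the tolerance is itself a judgment call: too tight and correct results fail, too loose and real defects slip through.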

Challenges for Oracles • Independence and completeness – difficult to achieve both • Independence from • Algorithms • Sub-programs, libs • Platforms • OS • Completeness in form of information • Comparing computed functions, screen navigations and asynchronous event handling • Speed of predictions • Time of Execution of oracle

Challenges for Oracle continued… • The better an oracle is, the more complex it becomes • Comprehensive oracles make for long test cases (DART paper) • The more it predicts, the more dependent it is on the SUT • The more likely it is to contain the same fault

Challenges for Oracle continued… • Legitimate oracle – an oracle that produces accurate rather than estimated outputs • Generates results based on the formal specs • What if the formal specs are wrong? • Very few errors cause noticeable abnormal test termination

IORL – Input/Output Requirements Language • Graphics-based language • Optimal representation between the informal requirements and the target code

Oracles for different scenarios • Transducers • Read an input sequence and produce an output sequence • Logical correspondence between I/O structures • e.g. native file format to HTML conversions in web applications • Solution – CFGs • System translates formal specs of the I/O files into an automated oracle
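The grammar-based approach on this slide is not reproduced here. As a much simpler stand-in, the sketch below checks the logical correspondence between input and output structure for a hypothetical transducer, `to_html_list`, that turns text lines into an HTML list. The converter and the checker names are assumptions made for illustration.

```python
from html import escape
from html.parser import HTMLParser


def to_html_list(lines):
    """Hypothetical transducer (SUT): one <li> element per input line."""
    items = "".join(f"<li>{escape(line)}</li>" for line in lines)
    return f"<ul>{items}</ul>"


class ItemCollector(HTMLParser):
    """Recover the text of each <li> element from the output document."""

    def __init__(self):
        super().__init__()
        self.items = []
        self._inside_item = False

    def handle_starttag(self, tag, attrs):
        if tag == "li":
            self._inside_item = True
            self.items.append("")

    def handle_endtag(self, tag):
        if tag == "li":
            self._inside_item = False

    def handle_data(self, data):
        if self._inside_item:
            self.items[-1] += data


def structure_matches(lines, html_text) -> bool:
    """Oracle: the output holds exactly the input lines, in the same order."""
    collector = ItemCollector()
    collector.feed(html_text)
    collector.close()
    return collector.items == list(lines)
```

The oracle parses the output independently of how the converter built it, so it does not share the converter's string-building logic.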

Embedded Assertion Languages [Oracles for different scenarios] • Asserts! • Problems: • Non-local assertions • Asserts for pre/post condition pairs associated with a procedure as a whole • e.g. asserts for each method that modifies the object state • State caching • Saving parts or all of the ‘before’ values

Embedded Assertion Languages [Oracles for different scenarios] • Auxiliary variables • Quantification
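The sketch below shows how state caching and quantification might look with plain Python `assert` statements. `BoundedStack` is a hypothetical class invented for this example, and Python's `assert` stands in for a dedicated assertion language.

```python
class BoundedStack:
    """Hypothetical class with embedded pre/post condition assertions."""

    def __init__(self, capacity: int):
        assert capacity > 0, "precondition: capacity must be positive"
        self._capacity = capacity
        self._items = []

    def items(self):
        """Return a copy of the current contents, bottom first."""
        return list(self._items)

    def push(self, item):
        # Precondition for the procedure as a whole.
        assert len(self._items) < self._capacity, "precondition: stack is full"

        # State caching: save the 'before' values needed by the postcondition.
        old_items = list(self._items)

        self._items.append(item)

        # Postconditions that compare the new state with the cached one.
        assert len(self._items) == len(old_items) + 1
        assert self._items[-1] is item
        # Quantification: every previously stored element is unchanged.
        assert all(self._items[i] is old_items[i] for i in range(len(old_items)))
```

Copying the whole list is the simplest form of state caching but also the most expensive, which is one reason the slide lists it as a problem. Note that Python drops `assert` statements when run with `-O`.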

Extrinsic Interface Contracts [Oracles for different scenarios] • Instead of inserting asserts within the program, checkable specs are kept separate from the implementation • Extrinsic specs are written in notations • Less tightly coupled with target programming language • Useful when source-code need not be touched
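One way to picture an extrinsic contract is a checker that wraps the implementation from the outside. In the sketch below, `checked`, `integer_sqrt` and the contract itself are illustrative assumptions. The implementation contains no assertions, and the contract lives in a separate definition.

```python
import math


def integer_sqrt(n: int) -> int:
    """Implementation under test; it contains no checks of its own."""
    return math.isqrt(n)


def checked(func, pre, post):
    """Wrap func so that a separately written contract is checked on each call."""
    def wrapper(*args):
        if not pre(*args):
            raise ValueError(f"precondition violated for {args}")
        result = func(*args)
        if not post(result, *args):
            raise AssertionError(f"postcondition violated: {args} -> {result}")
        return result
    return wrapper


# The contract is kept apart from the implementation and could live in
# another file: r is the largest integer whose square does not exceed n.
checked_integer_sqrt = checked(
    integer_sqrt,
    pre=lambda n: n >= 0,
    post=lambda r, n: r * r <= n < (r + 1) * (r + 1),
)
```

Calling `checked_integer_sqrt(17)` runs the untouched implementation and then validates the result against the external contract.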

Pure Specification Languages [Oracles for different scenarios] • Problem with older approach: specs were not pure • Z and Object-Z are model-based specification languages • Describe intended behavior using familiar mathematical objects • Free of the constraints of the implementation language

Trace Checking [Oracles for different scenarios] • Uses a partial trace of events • Such a trace can be checked by an oracle derived from formal specs of externally observable behavior
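A trace checker can be sketched as a small state machine that replays the observed events. The file-handle specification below (`ALLOWED_TRANSITIONS`) is a made-up example of externally observable behavior, not one taken from the slides.

```python
# Hypothetical specification: which event is allowed in which state,
# and which state follows.
ALLOWED_TRANSITIONS = {
    ("closed", "open"): "opened",
    ("opened", "read"): "opened",
    ("opened", "write"): "opened",
    ("opened", "close"): "closed",
}


def check_trace(events, start_state: str = "closed"):
    """Return None if the trace conforms, else the index of the first bad event."""
    state = start_state
    for index, event in enumerate(events):
        next_state = ALLOWED_TRANSITIONS.get((state, event))
        if next_state is None:
            return index  # event not permitted by the spec in this state
        state = next_state
    return None
```

Because the trace is partial, a passing check only says that the recorded events are consistent with the specification, not that the unrecorded behavior is correct.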

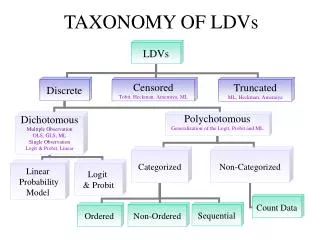

Types of Oracles • Categorized based on oracle outputs • True Oracle • Faithfully reproduces all relevant results • Uses independent platform, processes, compilers, code, etc. • Less commonality => more confidence in the correctness of results • e.g. sin(x) [problem: how is ‘all inputs’ defined?]
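For the sin(x) example, a true oracle needs a second implementation that shares as little as possible with the SUT. The sketch below is only partly independent, which is an assumption worth stating: `reference_sin` uses a Taylor series instead of the library routine, but it runs on the same platform and still borrows `math.pi` and `math.fmod`. `sut_sin` is a stand-in for the function under test.

```python
import math


def sut_sin(x: float) -> float:
    """Stand-in SUT (assumption): the library sine."""
    return math.sin(x)


def reference_sin(x: float, terms: int = 20) -> float:
    """Reference oracle: Taylor series after reducing x to [-pi, pi]."""
    x = math.fmod(x, 2 * math.pi)
    if x > math.pi:
        x -= 2 * math.pi
    elif x < -math.pi:
        x += 2 * math.pi
    term = x
    total = x
    for n in range(1, terms):
        # Each term follows from the previous one: multiply by -x^2 / ((2n)(2n+1)).
        term *= -x * x / ((2 * n) * (2 * n + 1))
        total += term
    return total


def agrees_with_oracle(x: float, tol: float = 1e-9) -> bool:
    """Compare the SUT with the reference within a small tolerance."""
    return abs(sut_sin(x) - reference_sin(x)) <= tol
```

The bracketed question on the slide still applies: the oracle can answer for any input, but someone has to decide which inputs to feed it.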

Types of Oracles continued… • Stochastic Oracle • Statistically random input selection • Error prone areas of the software are no more or less likely to be encountered • sin() – pseudo random generator to select input values

Types of Oracles continued… • Heuristic Oracle • Reproduces selected results for the SUT • Remaining values are checked based on heuristics • No exact comparison • Sampling • Values are selected using some criteria (not random) • e.g. boundary values, midpoints, maxima, minima
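A heuristic oracle for the same sin() example might check a handful of selected values and then rely on general properties for everything else. The sketch below assumes three such choices that are not prescribed by the slides: a short table of selected values, the range [-1, 1], and non-decreasing behavior on [-pi/2, pi/2].

```python
import math


def sut_sin(x: float) -> float:
    """Stand-in SUT (assumption): the library sine."""
    return math.sin(x)


# Selected results reproduced for specific inputs (boundary values and maxima).
SELECTED_VALUES = [
    (0.0, 0.0),
    (math.pi / 6, 0.5),
    (math.pi / 2, 1.0),
    (-math.pi / 2, -1.0),
]


def heuristic_sin_check(f, samples: int = 200, tol: float = 1e-12):
    """Return a list of problems found; an empty list means nothing suspicious."""
    problems = []
    for x, expected in SELECTED_VALUES:
        if abs(f(x) - expected) > tol:
            problems.append(f"f({x}) differs from the selected value {expected}")

    # Remaining values are only checked against heuristics.
    step = math.pi / samples
    previous = f(-math.pi / 2)
    for i in range(1, samples + 1):
        x = -math.pi / 2 + i * step
        y = f(x)
        if not -1.0 <= y <= 1.0:
            problems.append(f"f({x}) = {y} is outside [-1, 1]")
        if y < previous:
            problems.append(f"f is not increasing at x = {x}")
        previous = y
    return problems
```

A function can pass every heuristic and still be wrong between the selected points, which is the price of not doing an exact comparison.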

Types of Oracles continued… • Consistent Oracle • Uses results from one test run as the Oracle for subsequent runs • Evaluating the effects of changes from one revision to another
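A consistent oracle can be sketched as a recorded baseline that later revisions are compared against. The two `format_price` revisions below are hypothetical, and the baseline is serialized as JSON only to suggest that it would normally be stored between runs.

```python
import json


def format_price_v1(cents: int) -> str:
    """Hypothetical revision 1 of the SUT."""
    return f"${cents // 100}.{cents % 100:02d}"


def format_price_v2(cents: int) -> str:
    """Hypothetical revision 2: refactored, intended to behave the same."""
    dollars, rest = divmod(cents, 100)
    return "$" + str(dollars) + "." + str(rest).zfill(2)


def record_baseline(func, inputs) -> str:
    """Run one revision and keep its results as the oracle for later runs."""
    return json.dumps({str(value): func(value) for value in inputs})


def compare_with_baseline(func, baseline_json: str) -> dict:
    """Return {input: (baseline result, new result)} for every difference."""
    baseline = json.loads(baseline_json)
    differences = {}
    for key, expected in baseline.items():
        actual = func(int(key))
        if actual != expected:
            differences[key] = (expected, actual)
    return differences
```

A typical use is `compare_with_baseline(format_price_v2, record_baseline(format_price_v1, inputs))`. An empty result shows only that the two revisions agree, not that either one is correct.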

Which category best describes GUI Testing? a. Heuristic b. Trace Checking c. Transducers d. None