Preference Elicitation in Combinatorial Auctions

This paper discusses preference elicitation in combinatorial auctions, focusing on the perspectives of both bidders and auctioneers. It highlights how allowing bidders to express their true wants can minimize exposure problems and improve efficiency. We cover the challenges, including the NP-complete binary winner determination problem, and the revelation problem in direct-revelation mechanisms. Algorithms for eliciting bids efficiently, including Elicitor Clearing and Pareto-optimal methods, are presented, aiming to minimize elicitation effort while maximizing outcomes.

Preference Elicitation in Combinatorial Auctions Tuomas Sandholm Carnegie Mellon University Computer Science Department (papers on this topic available via www.cs.cmu.edu/~sandholm)

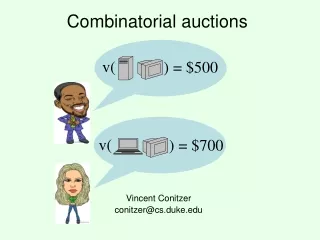

Combinatorial auction • Can bid on combinations of items [Rassenti, Smith & Bulfin 82]... • Bidder’s perspective: • Allows bidder to express what she really wants • Avoids exposure problems • No need for lookahead / counterspeculation • Auctioneer’s perspective: • Automated optimal bundling of items • Binary winner determination problem: • Label bids as winning or losing so as to maximize sum of bid prices • Each item can be allocated to at most one bid • NP-complete [Rothkopf et al 98 using Karp 72] • Inapproximable [Sandholm IJCAI-99, AIJ-02 using Hastad 99]
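To make the winner determination problem on this slide concrete, here is a minimal brute-force sketch in Python. The input format (a list of `(bundle, price)` pairs) and the example bids are assumptions made for illustration, and exhaustive enumeration is used only because it mirrors the problem statement directly; it takes exponential time and is not the clearing algorithm used in the work cited.

```python
from itertools import combinations

def winner_determination(bids):
    """Exhaustively pick a set of pairwise item-disjoint bids with maximum total price.

    bids: list of (frozenset_of_items, price) pairs (hypothetical input format).
    Returns (best_total, list_of_winning_bid_indices).
    """
    best_total, best_set = 0, []
    n = len(bids)
    for k in range(1, n + 1):
        for subset in combinations(range(n), k):
            items_used = set()
            feasible = True
            for idx in subset:
                bundle = bids[idx][0]
                if items_used & bundle:      # an item would go to two bids
                    feasible = False
                    break
                items_used |= bundle
            if feasible:
                total = sum(bids[idx][1] for idx in subset)
                if total > best_total:
                    best_total, best_set = total, list(subset)
    return best_total, best_set

# Illustrative bids: two single-item bids and one bid on the pair.
example_bids = [
    (frozenset({"A"}), 4),
    (frozenset({"B"}), 6),
    (frozenset({"A", "B"}), 9),
]
best_total, winners = winner_determination(example_bids)
```

Here the two single-item bids together (4 + 6) beat the bundle bid of 9, so they are labeled winning and the bundle bid losing.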

Another complex problem in combinatorial auctions: “Revelation problem” • In direct-revelation mechanisms (e.g. VCG), bidders bid on all $2^{\#\text{items}}$ combinations • Need to compute the valuation for exponentially many combinations • Each valuation computation can be NP-complete • For example, if a carrier company bids on trucking tasks: TRACONET [Sandholm AAAI-93] • Need to communicate the bids • Need to reveal the bids • Loss of privacy & strategic info

Revelation problem … • Agents need to decide what to bid on • Waste effort on counter-speculation • Waste effort making losing bids • Fail to make bids that would have won • Reduces economic efficiency & revenue

What info is needed from an agent depends on what others have revealed • Elicitor decides what to ask next based on answers it has received so far • [Figure: the elicitor queries bidders, who reply with priced answers (e.g. $1,000, $1,500), and passes the answers to the clearing algorithm] • [Conen & S. IJCAI-01 workshop on Econ. Agents, Models & Mechanisms, ACMEC-01]

Elicitor [Conen & Sandholm 2001] • Have auctioneer incrementally elicit information from bidders • based on the info received from bidders so far

Elicitation … • Goal: minimize elicitation • Regardless of computational / storage cost • (Future work: explore tradeoffs across these) • Approach: • At each phase: • Elicitor decides what to ask (and from which bidder) • Elicitor asks that and propagates the answer in its data structures • Elicitor checks whether the auction can already be cleared optimally given the information in hand

Setting • Combinatorial auction: m items for sale • Private values auction, no allocative externalities • Each bidder i has value function $v_i : 2^m \to \mathbb{R}$ • Unique valuations (to ease presentation)

Outline • Query policy dependent (= rank lattice based) elicitor algorithms • Policy independent elicitor algorithms • Note: Private values model

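Rank lattice

| Bundle | Ø | A | B | AB |
|---|---|---|---|---|
| Rank for Agent 1 | 4 | 2 | 3 | 1 |
| Rank for Agent 2 | 4 | 3 | 2 | 1 |

Lattice of rank vectors [rank for Agent 1, rank for Agent 2], level by level: [1,1] / [1,2] [2,1] / [1,3] [2,2] [3,1] / [1,4] [2,3] [3,2] [4,1] / [2,4] [3,3] [4,2] / [3,4] [4,3] / [4,4]

[Figure legend: each node is marked Infeasible, Feasible, or Dominated]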
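The node markings lost from the figure can be recomputed from the rank table. The sketch below is a Python illustration under two assumptions of mine: a rank vector is feasible when the bundles at those ranks share no item, and a feasible node is dominated when another feasible node ranks at least as well for every agent. The names `RANKS`, `BUNDLE_AT`, `feasible` and `dominates` are hypothetical.

```python
from itertools import product

# Rank tables from the slide: rank 1 is the most preferred bundle.
RANKS = [
    {"": 4, "A": 2, "B": 3, "AB": 1},   # Agent 1
    {"": 4, "A": 3, "B": 2, "AB": 1},   # Agent 2
]

# Invert each table: rank -> bundle (as a set of item letters).
BUNDLE_AT = [{r: frozenset(b) for b, r in table.items()} for table in RANKS]

def feasible(rank_vector):
    """True if the bundles at these ranks are pairwise disjoint."""
    seen = set()
    for agent, rank in enumerate(rank_vector):
        bundle = BUNDLE_AT[agent][rank]
        if seen & bundle:
            return False
        seen |= bundle
    return True

def dominates(a, b):
    """a dominates b if every agent is at least as well ranked in a, and a != b."""
    return a != b and all(x <= y for x, y in zip(a, b))

nodes = list(product(range(1, 5), repeat=2))
feasible_nodes = [n for n in nodes if feasible(n)]
pareto = [n for n in feasible_nodes
          if not any(dominates(m, n) for m in feasible_nodes)]
```

For this table the feasible, undominated rank vectors come out as [1,4], [2,2] and [4,1].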

A search algorithm for the rank lattice

Algorithm PAR (“PAReto optimal”)
    OPEN ← [(1,...,1)]
    while OPEN ≠ [] do
        Remove(c, OPEN); SUC ← suc(c)
        if Feasible(c) then
            PAR ← PAR ∪ {c}; Remove(SUC, OPEN)
        else foreach node ∈ SUC do
            if node ∉ OPEN and Undominated(node, PAR) then Append(node, OPEN)

• Thrm. Finds all feasible Pareto-undominated allocations (if bidders’ utility functions are injective) • Welfare maximizing solution(s) can be selected as a post-processor by evaluating those allocations • Call this hybrid algorithm MPAR (for “maximizing” PAR)
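A runnable Python sketch of PAR follows, applied to the two-agent, two-item rank table from the rank lattice slide. It follows the pseudocode step by step, but the data encoding (`RANKED`), the first-in-first-out handling of OPEN, and the helper implementations are assumptions of mine, not details confirmed by the slides.

```python
RANKED = [  # bundle at rank 1, 2, 3, 4 for each agent (from the rank lattice slide)
    ["AB", "A", "B", ""],   # Agent 1
    ["AB", "B", "A", ""],   # Agent 2
]
N_RANKS = 4

def feasible(c):
    """Bundles are pairwise disjoint iff their sizes add up to the size of their union."""
    bundles = [set(RANKED[i][r - 1]) for i, r in enumerate(c)]
    return sum(len(b) for b in bundles) == len(set().union(*bundles))

def suc(c):
    """Successors: worsen exactly one agent's rank by one."""
    return [c[:i] + (c[i] + 1,) + c[i + 1:]
            for i in range(len(c)) if c[i] < N_RANKS]

def undominated(node, par):
    """True unless some allocation already in PAR is at least as good for every agent."""
    return not any(all(p <= x for p, x in zip(found, node)) for found in par)

def par_search(n_agents):
    open_list = [(1,) * n_agents]
    par = []
    while open_list:
        c = open_list.pop(0)                 # Remove(c, OPEN), FIFO order assumed
        successors = suc(c)
        if feasible(c):
            par.append(c)
            # Successors of a feasible node are dominated by it: drop them from OPEN.
            open_list = [n for n in open_list if n not in successors]
        else:
            for node in successors:
                if node not in open_list and undominated(node, par):
                    open_list.append(node)
    return par

pareto_allocations = par_search(2)
```

On this instance the search returns the rank vectors (2,2), (4,1) and (1,4); evaluating those three allocations with value queries would be the MPAR post-processing step.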

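Value-augmented rank lattice

| Bundle | Ø | A | B | AB |
|---|---|---|---|---|
| Value for Agent 1 | 0 | 4 | 3 | 8 |
| Value for Agent 2 | 0 | 1 | 6 | 9 |

Lattice of rank vectors with the summed values shown in the figure, level by level: [1,1] = 17 / [1,2] = 14, [2,1] = 13 / [1,3] = 9, [2,2] = 10, [3,1] = 12 / [1,4] = 8, [2,3], [3,2], [4,1] = 9 / [2,4] [3,3] [4,2] / [3,4] [4,3] / [4,4]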

Search algorithm family for the value-augmented rank lattice

Algorithm EBF (“Efficient Best First”)
    OPEN ← {(1,...,1)}
    loop
        if |OPEN| = 1 then c ← combination in OPEN
        else
            M ← {k ∈ OPEN | v(k) = max_{node ∈ OPEN} v(node)}
            if |M| ≥ 1 ∧ ∃ node ∈ M with Feasible(node) then return node
            else choose c ∈ M such that c is not dominated by any node ∈ M
        OPEN ← OPEN \ {c}
        if Feasible(c) then return c
        else foreach node ∈ suc(c) do
            if node ∉ OPEN then OPEN ← OPEN ∪ {node}

• From now on, assume quasilinear utility functions • Thrm. Any EBF algorithm finds welfare maximizing allocations • Thrm. VCG payments can be determined from the information already elicited
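The following Python sketch runs one member of the EBF family on the valuations from the value-augmented rank lattice slide. It is an illustration only: the code reads values from a table instead of issuing value queries to bidders, it folds the |OPEN| = 1 case into the general case (with a single node, M equals OPEN), and all names are hypothetical.

```python
VALUES = [
    {"": 0, "A": 4, "B": 3, "AB": 8},   # Agent 1
    {"": 0, "A": 1, "B": 6, "AB": 9},   # Agent 2
]
# Bundles sorted from best (rank 1) to worst; valuations are assumed unique per agent.
RANKED = [sorted(v, key=v.get, reverse=True) for v in VALUES]

def bundles(c):
    return [RANKED[i][r - 1] for i, r in enumerate(c)]

def value(c):
    return sum(VALUES[i][b] for i, b in enumerate(bundles(c)))

def feasible(c):
    items = "".join(bundles(c))
    return len(items) == len(set(items))   # no item appears twice

def suc(c):
    return [c[:i] + (c[i] + 1,) + c[i + 1:]
            for i in range(len(c)) if c[i] < len(RANKED[i])]

def dominated_by(c, other):
    return other != c and all(o <= x for o, x in zip(other, c))

def ebf(n_agents):
    open_set = {(1,) * n_agents}
    while True:
        best = max(value(k) for k in open_set)
        m = [k for k in open_set if value(k) == best]
        for node in m:
            if feasible(node):
                return node                  # a best-valued feasible node is optimal
        # No node in M is feasible: expand one that no other node in M dominates.
        c = next(k for k in m if not any(dominated_by(k, o) for o in m))
        open_set.remove(c)
        open_set.update(suc(c))

winner = ebf(2)
```

The search expands [1,1], [1,2], [2,1] and [3,1] (values 17, 14, 13, 12, all infeasible) and then returns [2,2], which gives A to Agent 1 and B to Agent 2 for a total value of 10.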

Best & worst case elicitation effort • Best case: rank vector (1,...,1) is feasible • One bundle query to each agent, no value queries • (VCG payments: 0) • Thrm. Any EBF algorithm requires at worst $(2^{\#\text{items} \cdot \#\text{bidders}} - \#\text{bidders}^{\#\text{items}})/2 + 1$ value queries • Proof idea. Upper part of the lattice is infeasible and not less in value than the solution • Not surprising because worst-case communication complexity of the problem is exponential [Nisan 01]
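As a quick worked instance of the worst-case bound, take the two-item, two-bidder example used for the rank lattice, reading the bound as two to the power (#items times #bidders), minus #bidders to the power #items, halved, plus one. That reading of the flattened exponents is an assumption, although it agrees with the 16 rank vectors in the example lattice.

$$
\frac{2^{\#\text{items}\cdot\#\text{bidders}} - \#\text{bidders}^{\#\text{items}}}{2} + 1
= \frac{2^{2\cdot 2} - 2^{2}}{2} + 1
= \frac{16 - 4}{2} + 1
= 7 .
$$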

EBF minimizes feasibility checks • Def: An algorithm is admissible if it always finds a welfare maximizing allocation • Def: An algorithm is admissibly equipped if it only has • value queries, and • a feasibility function on rank vectors, and • a successor function on rank vectors • Thrm: There is no admissible, admissibly equipped algorithm that requires fewer feasibility checks (for every problem instance) than any EBF algorithm

MPAR minimizes value queries • Thrm. No admissible, admissibly equipped algorithm (that calls the valuation function for bundles in feasible rank vectors only) will require fewer value queries than MPAR

Differential-revelation • Extension of EBF • Information elicited: differences between valuations • Hides sensitive value information • Motivation: $\max \sum_i v_i(X_i) \iff \min \sum_i \left[ v_i(r^{-1}(1)) - v_i(X_i) \right]$ • Maximizing the sum of values is equivalent to minimizing the difference between the value of the best ranked bundle and the bundle in the allocation • Thrm. Differences suffice for determining welfare maximizing allocations & VCG payments • 2 low-revelation incremental ex post incentive compatible mechanisms ...

Differential elicitation ... • Questions (start at rank 1) • “tell me the bundle at the current rank” • “tell me the difference in value of that bundle and the best bundle“ • increment rank • Natural sequence: from “good” to “bad” bundles

Differential elicitation ... • Variation: Bitwise decrement mechanism • Is the difference in value between the best bundle and the bundle at the current rank greater than δ? • If “yes”, increment δ; requires a minimum increment • Allows establishing a “bit stream” (yes/no answers)
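The yes/no question sequence of this variation can be sketched in a few lines of Python. This is only an illustration under assumptions not stated on the slide: δ starts at 0, grows by a fixed `increment`, and the bidder's true difference is passed in directly so that the answers can be simulated.

```python
def elicit_difference(true_difference, increment):
    """Ask "is the difference greater than delta?" with a growing delta.

    Returns (upper_bound_on_difference, answers), where answers is the
    yes/no bit stream the bidder would produce.
    """
    delta, answers = 0, []
    while true_difference > delta:   # the bidder answers "yes"
        answers.append(True)
        delta += increment
    answers.append(False)            # the first "no" ends the stream
    return delta, answers

# Example: a bundle worth 4 against a best bundle worth 8, so the difference is 4.
bound, bits = elicit_difference(true_difference=4, increment=1)
```

The elicitor learns that the difference is at most `bound` and greater than `bound - increment`, so the increment sets the precision of what is revealed.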

Some of our elicitor’s query types • Order information: Which bundle do you prefer, A or B? • Value information: What is your valuation for bundle A? (Answer: Exact or Bounds) • Rank information: • What is the rank of bundle b? • What bundle is at rank x? • Given bundle b, what is the next lower (higher) ranked bundle?

General Algorithmic Framework for Elicitation

Algorithm Solve(Y, G)
    Input: Y – set of candidate allocations (some may turn out infeasible, some suboptimal); G – partially augmented order graph
    Output: Y – set of optimal allocations
    while not Done(Y, G) do
        o = SelectOp(Y, G)      (choose question)
        I = PerformOp(o, N)     (ask bidder)
        G = Propagate(I, G)     (update data structures with answer)
        Y = Candidates(Y, G)    (curtail set of candidate allocations)

(Partially) Augmented Order Graph • [Figure: order graphs over the bundles Ø, A, B, AB for Agent 1 and Agent 2, with each bundle annotated by its rank and by an upper bound and a lower bound on its value, shown next to the lattice of allocations [1,1] through [4,4]] • Some interesting procedures for combining different types of info

Constraint Network • 1 per agent • [Figure: lattice of bundles written as bit vectors, level by level: 111 / 110 101 011 / 100 010 001 / 000]

Constraint Network • Each bundle carries [lower bound, upper bound] on its value • 111: [0,∞] • 110: [0,∞]; 101: [0,∞]; 011: [0,∞] • 100: [0,∞]; 010: [0,∞]; 001: [0,∞] • 000: [0]

Constraint Propagation • Answer received: $v_i(110) = 5$ • 111: [0,∞] • 110: [5]; 101: [0,∞]; 011: [0,∞] • 100: [0,∞]; 010: [0,∞]; 001: [0,∞] • 000: [0]

Constraint Propagation • After propagating $v_i(110) = 5$: • 111: [5,∞] • 110: [5]; 101: [0,∞]; 011: [0,∞] • 100: [0,5]; 010: [0,5]; 001: [0,∞] • 000: [0]

Constraint Propagation • $v_i(110) = 5$ • [Figure: the same constraint network and bounds, with additional edges from order queries]
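The propagation step shown on these slides can be sketched in Python as follows. This is an assumption-laden illustration rather than the authors' data structure: bundles are encoded as bitmasks, only value-query answers are handled (no order-query edges), and bounds are tightened using free disposal, meaning a superset is worth at least as much as any of its subsets.

```python
import math

def make_network(n_items):
    """Bounds [lower, upper] for every bundle, encoded as a bitmask over items."""
    bounds = {b: [0.0, math.inf] for b in range(1 << n_items)}
    bounds[0] = [0.0, 0.0]           # the empty bundle is worth 0
    return bounds

def is_subset(a, b):
    return a & b == a

def propagate_value(bounds, bundle, value):
    """Record v(bundle) = value and tighten other bounds via free disposal."""
    bounds[bundle] = [value, value]
    for other, (lo, hi) in bounds.items():
        if other == bundle:
            continue
        if is_subset(bundle, other):          # supersets are worth at least as much
            bounds[other][0] = max(lo, value)
        elif is_subset(other, bundle):        # subsets are worth at most as much
            bounds[other][1] = min(hi, value)
    return bounds

# The slide's example: the agent answers v_i(110) = 5.
network = propagate_value(make_network(3), 0b110, 5)
```

After the call, 111 has bounds [5, ∞], 100 and 010 have [0, 5], and 101, 011 and 001 are unchanged, matching the slide.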

What query should the elicitor ask (next)? • Simplest answer: value query • Ask for the value of a bundle $v_i(b)$ • How to pick b, i? • First try: Randomly (subject to not asking queries whose answer can be inferred from info already elicited)

Random elicitation with value queries only • Thrm. If the full-revelation (direct) mechanism makes Q value queries and the best value-elicitation policy makes q queries, we make at most $\frac{q}{q+1}(Q+1)$ value queries in expectation • Proof idea: We have q red balls, and the remaining balls are blue; how many balls do we draw before removing all q red balls? • Universal revelation reducer • Is it tight? Run experiments
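The ball-drawing argument in the proof idea can be checked numerically. The Python sketch below computes the exact expected number of draws without replacement needed to remove all q red balls from Q balls, and compares it with the standard closed form q(Q+1)/(q+1) for the expected position of the last red ball. The function names are hypothetical, and q ≥ 1 is assumed.

```python
from fractions import Fraction
from math import comb

def expected_draws(Q, q):
    """Exact expected number of draws (without replacement) until all q red balls are out of Q balls."""
    total = Fraction(0)
    for k in range(q, Q + 1):
        # The last red ball is at position k: the other q-1 reds fall among the first k-1 draws.
        total += k * Fraction(comb(k - 1, q - 1), comb(Q, q))
    return total

def closed_form(Q, q):
    """Closed form q(Q+1)/(q+1) for the same expectation."""
    return Fraction(q * (Q + 1), q + 1)

# Example: 16 possible value queries, of which the best policy needs 3.
exact = expected_draws(16, 3)
```

With Q = 16 and q = 3 both expressions give 51/4, i.e. about 12.75 of the 16 queries, which illustrates why random elicitation alone saves little when q is not very small.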

Experimental setup for all graphs in this talk • Simulations: • Draw agents’ valuation functions from a random distribution where free disposal is honored • Run the auction: auctioneer asks queries of agents, agents look up answer from a file • Each point on plots is average of 10 runs

Random elicitation • Not much better than theoretical bound • [Figure: number of queries vs. number of agents (4 items) and vs. number of items (2 agents), with full revelation shown for comparison]

Querying random allocatable bundle-agent pairs only… • Bundle-agent pair (b,i) is allocatable if some yet potentially optimal allocation allocates bundle b to agent i • How to pick (b,i)? • Pick a random allocatable one • Asking only allocatable bundles means throwing out some queries • Thrm. This restriction causes the policy to make at worst twice as many expected queries as the unrestricted random elicitor. (Tight) • Proof idea: These ignored queries are either • Not useful to ask, or • Useful, but we would have had low probability of asking it, so no big difference in expectation

Querying random allocatable bundle-agent pairs only… • Much better • Almost (#items / 2) fewer queries than unrestricted random • Vanishingly small fraction of all queries asked! • Subexponential number of queries • [Figure: number of queries vs. number of agents and vs. number of items, with full revelation shown for comparison]

Best value query elicitation policy so far • Focus on allocations that have highest upper bound. Ask a (b,i) that is part of such an allocation and, among them, pick the one that affects (via free disposal) the largest number of bundles in such allocations. • [Figure: fraction of values queried before optimal allocation found & proven vs. number of items for sale] • [Hudson & S. AMEC-02]

Order queries • Order query: “agent i, is bundle b worth more to you than bundle b’ ?” • Motivation: Often easier to answer than value queries • Order queries are insufficient for determining welfare maximizing allocations • How to interleave order, value queries? • How to choose i, b, b’ ?

Value and order queries • Interleave: • 1 value query (of random allocatable agent-bundle pair) • 1 order query (pick arbitrary allocatable i, b, b’ ) • To evaluate, in the graphs we have • value query costs 1 • order query costs 0.1

Value and order queries … • Elicitation cost reduced compared to value queries only • Cost reduction depends on relative costs of order & value queries • [Figure: total cost, value cost, and order cost vs. number of agents and vs. number of items, with full revelation shown for comparison]

Rank lattice based elicitation • Go down the rank lattice in best-first order (= EBF) • Performance not as good as value-based; why? • #nodes in rank lattice is $2^{\#\text{bidders} \cdot \#\text{items}}$ • #feasible nodes is only $\#\text{bidders}^{\#\text{items}}$ • [Figure: number of queries vs. number of agents and vs. number of items, with full revelation shown for comparison]

Bound-approximation queries • Often bidders can determine their valuations more precisely by allocating more time to deliberation [S. AAAI-93, ICMAS-95, ICMAS-96, IJEC-00; Larson & S. TARK-01, AGENTS-01 workshop, SITE-02; Parkes IJCAI workshop-99] • Get better bounds UBi(b) and LBi(b) with more time spent deliberating • Idea: don’t ask for exact info if it is not necessary • Query: “agent i, hint: spend t time units tightening the upper (lower) bound on b” • How to choose i, b, t, UB or LB ? • For simplicity, in the experiment graph, fix t = 0.2 time units (1 unit gives exact)

Bound-approximation query policy • Could choose the query randomly • More sophisticated policy does slightly better: • Choose query that will change the bounds on allocatable bundles the most • Don’t know exactly how much bounds will change • Assume all legal answers equiprobable, sample to get expectation

Bound-approximation queries • This policy does quite well • Future work: try other related policies • [Figure: query cost vs. number of agents and vs. number of items, with full revelation shown for comparison]

Supplementing bound-approximation queries with order queries • Integrated as before • Computationally more expensive • [Figure: total cost, value cost, and order cost vs. number of agents and vs. number of items, with full revelation shown for comparison]

Incentive compatibility • Elicitor’s questions leak information about others’ preferences • Can be made ex post incentive compatible • Ask enough questions to determine VCG prices • Worst approach: #bidders+1 “elicitors” • Could interleave these “extra” questions with “real” questions • To avoid laziness; not necessary from an incentive perspective • Agents don’t have to answer the questions & may answer questions that were not asked • Unlike in price feedback (“tâtonnement”) mechanisms [Bikhchandani-Ostroy, Parkes-Ungar, Wurman-Wellman, Ausubel-Milgrom, Bikhchandani-deVries-Schummer-Vohra, …] • Push-pull mechanism

Universal revelation reducer • Def. A universal revelation reducer is an elicitor that will ask less than everything whenever the shortest certificate includes less than all queries • Thrm [Hudson & Sandholm 03] No deterministic universal revelation reducer exists • A randomized one exists • (E.g., the one that asks random unknown value queries)

Elicitation where worst-case number of queries is polynomial in items

Read-once valuations • [Figure: example read-once valuation tree built from PLUS, MAX, GATE$_{k,m}$ and ALL nodes over leaf values 1000, 500, 400, 100, 200, 150] • Thrm. If an agent has a read-once valuation function, the number of queries needed to elicit the function is polynomial in items • Thrm. If an agent’s valuation function is approximable by a read-once function (with only MAX and PLUS nodes), elicitor finds an approximation in a polynomial number of queries [Zinkevich, Blum, Sandholm ACMEC-03]
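To illustrate what a read-once valuation looks like, here is a small Python evaluator restricted to PLUS and MAX nodes, the two gate types named in the second theorem. The tuple encoding, the rule that a leaf contributes its value only when its item is in the bundle, and the example tree are all assumptions for illustration; the tree is not the one in the slide's figure, and the GATE and ALL nodes are left out because their exact semantics are not given in this transcript.

```python
def evaluate(node, bundle):
    """Value of `bundle` under a read-once tree of PLUS/MAX gates.

    node is ("LEAF", item, value), ("PLUS", [children]) or ("MAX", [children]);
    this tuple encoding is a hypothetical one chosen for the example.
    Each item appears at exactly one leaf, which is what makes the tree read-once.
    """
    kind = node[0]
    if kind == "LEAF":
        _, item, value = node
        return value if item in bundle else 0
    child_values = [evaluate(child, bundle) for child in node[1]]
    return sum(child_values) if kind == "PLUS" else max(child_values)

# Hypothetical tree: the agent wants the better of items a and b, plus item c.
tree = ("PLUS", [
    ("MAX", [("LEAF", "a", 1000), ("LEAF", "b", 500)]),
    ("LEAF", "c", 400),
])

full_value = evaluate(tree, {"a", "b", "c"})
```

Because the whole valuation is determined by one number per item and the gate structure, far fewer than $2^{\#\text{items}}$ values need to be learned, which is the intuition behind the polynomial query bounds.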
