10/10

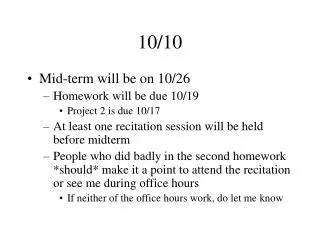

10/10. Mid-term will be on 10/26 Homework will be due 10/19 Project 2 is due 10/17 At least one recitation session will be held before midterm People who did badly in the second homework *should* make it a point to attend the recitation or see me during office hours

10/10

E N D

Presentation Transcript

10/10 • Mid-term will be on 10/26 • Homework will be due 10/19 • Project 2 is due 10/17 • At least one recitation session will be held before midterm • People who did badly in the second homework *should* make it a point to attend the recitation or see me during office hours • If neither of the office hours work, do let me know

Solving problems using propositional logic • Need to write what you know as propositional formulas • Theorem proving will then tell you whether a given new sentence will hold given what you know • Three kinds of queries • Is my knowledge base consistent? (i.e. is there at least one world where everything I know is true?) Satisfiability • Is the sentence S entailed by my knowledge base? (i.e., is it true in every world where my knowledge base is true?) • Is the sentence S consistent/possibly true with my knowledge base? (i.e., is S true in at least one of the worlds where my knowledge base holds?) • S is consistent if ~S is not entailed • But cannot differentiate between degrees of likelihood among possible sentences

Added after discussion in class What if the new fact is inconsistent with KB? • Suppose we have a KB {P, P => ~F, Q=>J, R}; and our friend comes running to tell you that M and F are true in the world. • We notice that we can’t quite add F to KB since ~F is entailed. • So what are our options? • Ask our friend to take a hike • Revise our theory so that F can be accommodated. • To do this, we need to ensure that ~F is not entailed • ..which means we have to stop the proof of ~F from going through. • Since the proof for ~F is {P, P=>~F |= ~F}, we have to either change the sentence P or the sentence P=>~F so that the proposition won’t go through • Often there are many ways of doing this revision with little guidance as to which revision is the best • For example, we could change the second sentence to P&~M => ~F • (But we could equally well have changed the sentence to P& L => ~F)

Added after discussion in class What is “monotonic” vs. “non-monotonic” logic? • Prop calculus (as well as the first order logic we shall discuss later) are monotonic, in that once you prove a fact F to be true, no amount of additional knowledge can allow us to disprove F. • But, in the real world, we jump to conclusions by default, and revise them on additional evidence • Consider the way the truth of the statement “F: Tweety Flies” is revised by us when we are given facts in sequence: 1. Tweety is a bird (F)2. Tweety is an Ostritch (~F) 3. Tweety is a magical Ostritch (F) 4. Tweety was cursed recently (~F) 5. Tweety was able to get rid of the curse (F) • How can we make logic show this sort of “defeasible” (aka defeatable) conclusions? • Many ideas, with one being negation as failure • Let the rule about birds be Bird & ~abnormal => Fly • The “abnormal” predicate is treated specially—if we can’t prove abnormal, we can assume ~abnormal is true • (Note that in normal logic, failure to prove a fact F doesn’t allow us to assume that ~F is true since F may be holding in some models and not in other models). • Non-monotonic logic enterprise involves (1) providing clean semantics for this type of reasoning and (2) making defeasible inference efficient

Pearl lives in Los Angeles. It is a high-crime area. Pearl installed a burglar alarm. He asked his neighbors John & Mary to call him if they hear the alarm. This way he can come home if there is a burglary. Los Angeles is also earth-quake prone. Alarm goes off when there is an earth-quake. Burglary => Alarm Earth-Quake => Alarm Alarm => John-calls Alarm => Mary-calls If there is a burglary, will Mary call? Check KB & E |= M If Mary didn’t call, is it possible that Burglary occurred? Check KB & ~M doesn’t entail ~B Example

Pearl lives in Los Angeles. It is a high-crime area. Pearl installed a burglar alarm. He asked his neighbors John & Mary to call him if they hear the alarm. This way he can come home if there is a burglary. Los Angeles is also earth-quake prone. Alarm goes off when there is an earth-quake. Pearl lives in real world where (1) burglars can sometimes disable alarms (2) some earthquakes may be too slight to cause alarm (3) Even in Los Angeles, Burglaries are more likely than Earth Quakes (4) John and Mary both have their own lives and may not always call when the alarm goes off (5) Between John and Mary, John is more of a slacker than Mary.(6) John and Mary may call even without alarm going off Burglary => Alarm Earth-Quake => Alarm Alarm => John-calls Alarm => Mary-calls If there is a burglary, will Mary call? Check KB & E |= M If Mary didn’t call, is it possible that Burglary occurred? Check KB & ~M doesn’t entail ~B John already called. If Mary also calls, is it more likely that Burglary occurred? You now also hear on the TV that there was an earthquake. Is Burglary more or less likely now? Example (Real)

Eager way: Model everything! E.g. Model exactly the conditions under which John will call He shouldn’t be listening to loud music, he hasn’t gone on an errand, he didn’t recently have a tiff with Pearl etc etc. A & c1 & c2 & c3 &..cn => J (alsothe exceptions may have interactions c1&c5 => ~c9 ) Ignorant (non-omniscient) and Lazy (non-omnipotent) way: Model the likelihood In 85% of the worlds where there was an alarm, John will actually call How do we do this? Non-monotonic logics “certainty factors” “probability” theory? How do we handle Real Pearl? Qualification and Ramification problems make this an infeasible enterprise

Suppose we know the likelihood of each of the (propositional) worlds (aka Joint Probability distribution) Then we can use standard rules of probability to compute the likelihood of all queries (as I will remind you) So, Joint Probability Distribution is all that you ever need! In the case of Pearl example, we just need the joint probability distribution over B,E,A,J,M (32 numbers) --In general 2n separate numbers (which should add up to 1) If Joint Distribution is sufficient for reasoning, what is domain knowledge supposed to help us with? --Answer: Indirectly by helping us specify the joint probability distribution with fewer than 2n numbers ---The local relations between propositions can be seen as “constraining” the form the joint probability distribution can take! Burglary => Alarm Earth-Quake => Alarm Alarm => John-calls Alarm => Mary-calls Probabilistic Calculus to the Rescue Only 10 (instead of 32) numbers to specify!

If in addition, each proposition is equally likely to be true or false, Then the joint probability distribution can be specified without giving any numbers! All worlds are equally probable! If there are n props, each world will be 1/2n probable Probability of any propositional conjunction with m (< n) propositions will be 1/2m If there are no relations between the propositions (i.e., they can take values independently of each other) Then the joint probability distribution can be specified in terms of probabilities of each proposition being true Just n numbers instead of 2n Easy Special Cases

Suppose we know the likelihood of each of the (propositional) worlds (aka Joint Probability distribution) Then we can use standard rules of probability to compute the likelihood of all queries (as I will remind you) So, Joint Probability Distribution is all that you ever need! In the case of Pearl example, we just need the joint probability distribution over B,E,A,J,M (32 numbers) --In general 2n separate numbers (which should add up to 1) If Joint Distribution is sufficient for reasoning, what is domain knowledge supposed to help us with? --Answer: Indirectly by helping us specify the joint probability distribution with fewer than 2n numbers ---The local relations between propositions can be seen as “constraining” the form the joint probability distribution can take! Burglary => Alarm Earth-Quake => Alarm Alarm => John-calls Alarm => Mary-calls Probabilistic Calculus to the Rescue Only 10 (instead of 32) numbers to specify!

Prob. Prop logic: The Game plan • We will review elementary “discrete variable” probability • We will recall that joint probability distribution is all we need to answer any probabilistic query over a set of discrete variables. • We will recognize that the hardest part here is not the cost of inference (which is really only O(2n) –no worse than the (deterministic) prop logic • Actually it is Co-#P-complete (instead of Co-NP-Complete) (and the former is believed to be harder than the latter) • The real problem is assessing probabilities. • You could need as many as 2n numbers (if all variables are dependent on all other variables); or just n numbers if each variable is independent of all other variables. Generally, you are likely to need somewhere between these two extremes. • The challenge is to • Recognize the “conditional independences” between the variables, and exploit them to get by with as few input probabilities as possible and • Use the assessed probabilities to compute the probabilities of the user queries efficiently.

Takes O(2n) for most natural queries of type P(D|Evidence) NEEDS O(2n) probabilities as input Probabilities are of type P(wk)—where wk is a world Directly using Joint Distribution Can take much less than O(2n) time for most natural queries of type P(D|Evidence) STILL NEEDS O(2n) probabilities as input Probabilities are of type P(X1..Xn|Y) Directly using Bayes rule Can take much less than O(2n) time for most natural queries of type P(D|Evidence) Can get by with anywhere between O(n) and O(2n) probabilities depending on the conditional independences that hold. Probabilities are of type P(X1..Xn|Y) Using Bayes rule With bayes nets

10/12 White Basket Ball Player

Blog questions(…if the mountain wont’t come to Mohammad…) • 1. We saw that propositional logic is monotonic and that real world requried "defeasible" or "non-monotonic" reasoning. Is probabilistic reasoning monotonic or non-monotonic? Explain.2. What is the difference between "Probability" and "Statistics"?3. We made a big point about the need for representing joint distribution compactly. Much of elementary probability/statistics handles continuous and multi-valued variables, where specifying the distribution of the single variable itself will need a huge number of numbers. How is this normally punted in elementary probability?

Extensional Specification (“possible worlds”) [prop logic] Enumerate all worlds consistent with what you know (models of KB) [prob logic] Provide likelihood of all worlds given what you know Intensional (implicit) specification [prop logic] Just state the local propositional constraints that you know (e.g. p=>q which means no world where p is true and q is false is a possible world) [prop logic] Just state the local probabilistic constraints that you know (e.g. P(q|p) = .99) The local knowledge implicitly defines the extensional specification. Local knowledge acts as a constraint on the possible worlds As you find out more about the world you live in, you eliminate possible worlds you could be in (or revise their likelihood) Two ways of specifying world knowledge

If B=>A then P(A|B) = ? P(B|~A) = ?

If you know the full joint, You can answer ANY query

P(CA & TA) = P(CA) = P(TA) = P(CA V TA) = P(CA|~TA) =

P(CA & TA) = 0.04 P(CA) = 0.04+0.06 = 0.1 (marginalizing over TA) P(TA) = 0.04+0.01= 0.05 P(CA V TA) = P(CA) + P(TA) – P(CA&TA) = 0.1+0.05-0.04 = 0.11 P(CA|~TA) = P(CA&~TA)/P(~TA) = 0.06/(0.06+.89) = .06/.95=.0631 Think of this as analogous to entailment by truth-table enumeration!

Problem: --Need too many numbers… --The needed numbers are harder to assess

Joint distribution requires us to assess probabilities of type P(x1,~x2,x3,….~xn) This means we have to look at all entities in the world and see which fraction of them have x1,~x2,x3….~xm true Difficult experiment to setup.. Conditional probabilities of type P(A|B) are relatively much easier to assess You just need to look at the set of entities having B true, and look at the fraction of them that also have A true Relative ease/utility of Assessing various types of probabilities • Among the conditional probabilities, causal probabilities of the form P(effect|cause) are better to assess than diagnostic probabilities of the form P(cause|effect) • Causal probabilities tend to me more stable compared to diagnostic probabilities • (for example, a text book in dentistry can publish P(TA|Cavity) and hope that it will hold in a variety of places. In contrast, P(Cavity|TA) may depend on other fortuitous factors—e.g. in areas where people tend to eat a lot of icecream, many tooth aches may be prevalent, and few of them may be actually due to cavities.

Generalized bayes rule P(A|B,e) = P(B|A,e) P(A|e) P(B|e) Get by with easier to assess numbers Think of this as analogous to inference rules (like modus-ponens) A be Anthrax; Rn be Runny Nose P(A|Rn) = P(Rn|A) P(A)/ P(Rn)

Can we avoid assessing P(S)? P(M|S) = P(S|M) P(M)/P(S) P(~M|S) = P(S|~M) P(~M)/P(S) ---------------------------------------------------------------- 1 = 1/P(S) [ P(S|M) P(M) + P(S|~M) P(~M) ] So, if we assess P(S|~M), then we don’t need to assess P(S) “Normalization”

Is P(M|~S) any easier to assess than P(~S)? • P(S|M) is clearly easy to assess (just look at the fraction of meningitis patients that have stiff neck • P(S) seems hard to assess—you need to ask random people whether they have stiff neck or not • P(S|~M) seems just as hard to assess… • And in general there seems to be no good argument that it is always easier to assess than P(S) • In fact they are related in a quite straightforward way • P(S) =P(S|M)*P(M) + P(S|~M)*P(~M) • (To see this, note that P(S)= P(S&M)+P(S&~M) and then use product rule) • The real reason we assess P(S|~M) is that often we need the posterior distribution rather than just the single probability • For boolean variables, you can get the distribution given one value • But for multi-valued variables, we need to assess P(D=di|S) for all values di of the variable D. To do this, we need P(S|D=di) type probabilities anyway…

Need to know this! If n evidence variables, We will need 2n probabilities! What happens if there are multiple symptoms…? Conditional independence To the rescue Suppose P(TA,Catch|cavity) = P(TA|Cavity)*P(Catch|Cavity) Patient walked in and complained of toothache You assess P(Cavity|Toothache) Now you try to probe the patients mouth with that steel thingie, and it catches… How do we update our belief in Cavity? P(Cavity|TA, Catch) = P(TA,Catch| Cavity) * P(Cavity) P(TA,Catch) = a P(TA,Catch|Cavity) * P(Cavity)

Generalized bayes rule P(A|B,e) = P(B|A,e) P(A|e) P(B|e)

Takes O(2n) for most natural queries of type P(D|Evidence) NEEDS O(2n) probabilities as input Probabilities are of type P(wk)—where wk is a world Directly using Joint Distribution Can take much less than O(2n) time for most natural queries of type P(D|Evidence) STILL NEEDS O(2n) probabilities as input Probabilities are of type P(X1..Xn|Y) Directly using Bayes rule Can take much less than O(2n) time for most natural queries of type P(D|Evidence) Can get by with anywhere between O(n) and O(2n) probabilities depending on the conditional independences that hold. Probabilities are of type P(X1..Xn|Y) Using Bayes rule With bayes nets