Local Computations in Large-Scale Networks

Local Computations in Large-Scale Networks Idit Keidar Technion

Material • I. Keidar and A. Schuster: “Want Scalable Computing? Speculate!” SIGACT News, Sep 2006. http://www.ee.technion.ac.il/people/idish/ftp/speculate.pdf • Y. Birk, I. Keidar, L. Liss, A. Schuster, and R. Wolff: “Veracity Radius - Capturing the Locality of Distributed Computations”. PODC’06. http://www.ee.technion.ac.il/people/idish/ftp/veracity_radius.pdf • Y. Birk, I. Keidar, L. Liss, and A. Schuster: “Efficient Dynamic Aggregation”. DISC’06. http://www.ee.technion.ac.il/people/idish/ftp/eff_dyn_agg.pdf • E. Bortnikov, I. Cidon, and I. Keidar: “Scalable Load-Distance Balancing in Large Networks”. DISC’07. http://www.ee.technion.ac.il/people/idish/ftp/LD-Balancing.pdf

Brave New Distributed Systems • Large-scale: thousands of nodes and more … • Dynamic: … coming and going at will … • Computations: … while actually computing something together. This is the new part.

Today’s Huge Dist. Systems • Wireless sensor networks • Thousands of nodes, tens of thousands coming soon • P2P systems • Reporting millions online (eMule) • Computation grids • Harnessing thousands of machines (Condor) • Publish-subscribe (pub-sub) infrastructures • Sending lots of stock data to lots of traders

Not Computing Together Yet • Wireless sensor networks • Typically disseminate information to central location • P2P & pub-sub systems • Simple file sharing, content distribution • Topology does not adapt to global considerations • Offline optimizations (e.g., clustering) • Computation grids • “Embarrassingly parallel” computations

Emerging Dist. Systems – Examples • Autonomous sensor networks • Computations inside the network, e.g., detecting trouble • Wireless mesh network (WMN) management • Topology control • Assignment of users to gateways • Adapting p2p overlays based on global considerations • Data grids (information retrieval)

Autonomous Sensor Networks • “The data center is too hot!” • “Let’s turn on the sprinklers (need to back up first)” • “Let’s all reduce power”

Autonomous Sensor Networks • Complex autonomous decision making • Detection of over-heating in data-centers • Disaster alerts during earthquakes • Biological habitat monitoring • Collaboratively computing functions • Does the number of sensors reporting a problem exceed a threshold? • Are the gaps between temperature reads too large?

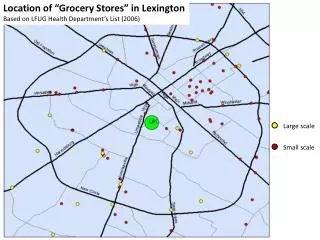

Wireless Mesh Networks • Infrastructure (unlike MANET) • City-wide coverage • Supports wireless devices • Connections to Mesh and out to the Internet • “The last mile” • Cheap • Commodity wireless routers (hot spots) • Few Internet connections

Decisions, Decisions • Assigning users to gateways • QoS for real-time media applications • Network distance is important • So is load • Topology control • Which links to set up out of many “radio link” options • Which nodes connect to Internet (act as gateways) • Adapt to varying load

Centralized Solutions Don’t Cut It • Load • Communication costs • Delays • Fault-tolerance

Classical Dist. Solutions Don’t Cut It • Global agreement / synchronization before any output • Repeated invocations to continuously adapt to changes • High latency, high load • By the time synchronization is done, the input may have changed … the result is irrelevant • Frequent changes -> computation based on inconsistent snapshot of system state • Synchronizing invocations initiated at multiple locations typically relies on a common sequencer (leader) • difficult and costly to maintain

Locality to the Rescue! • Nodes make local decisions based on communication (or synchronization) with some proximate nodes, rather than the entire network • Infinitely scalable • Fast, low overhead, low power, …

The Locality Hype • Locality plays a crucial role in real-life large-scale distributed systems • John Kubiatowicz et al., on global storage: “In a system as large as OceanStore, locality is of extreme importance…” • C. Intanagonwiwat et al., on sensor networks: “An important feature of directed diffusion is that … are determined by localized interactions...” • N. Harvey et al., on scalable DHTs: “The basic philosophy of SkipNet is to enable systems to preserve useful content and path locality…”

What is Locality? • Worst case view • O(1) in problem size [Naor & Stockmeyer,1993] • Less than the graph diameter [Linial, 1992] • Often applicable only to simplistic problems or approximations • Average case view • Requires an a priori distribution of the inputs To be continued…

Interesting Problems Have Inherently Global Instances • WMN gateway assignment: arbitrarily high load near one gateway • Need to offload as far as the end of the network • Percentage of nodes whose input exceeds threshold in sensor networks: near-tie situation • All “votes” need to be counted Fortunately, they don’t happen too often

Speculation is the Key to Locality • We want solutions to be “as local as possible” • WMN gateway assignment example: • Fast decision and quiescence under even load • Computation time and communication adaptive to distance to which we need to offload • A node cannot locally know whether the problem instance is local • Load may be at other end of the network • Can speculate that it is (optimism)

Computations are Never “Done” • Speculative output may be over-ruled • Good for ever-changing inputs • Sensor readings, user loads, … • Computing ever-changing outputs • User never knows if output will change • due to bad speculation or unreflected input change • Reflecting changes faster is better • If input changes cease, output will eventually be correct • With speculation same as without

Summary: Prerequisites for Speculation • Global synchronization is prohibitive • Many instances amenable to local solutions • Eventual correctness acceptable • No meaningful notion of a “correct answer” at every point in time • When the system stabilizes for “long enough”, the output should converge to the correct one

The Challenge: Find a Meaningful Notion for Locality • Many real-world problems are trivially global in the worst case • Yet, practical algorithms have been shown to be local most of the time! • The challenge: find a theoretical metric that captures this empirical behavior

Reminder: Naïve Locality Definitions • Worst case view • Often applicable only to simplistic problems or approximations • Average case view • Requires an a priori distribution of the inputs

Instance-Locality • Formal instance-based locality: • Local fault mending [Kutten, Peleg 95; Kutten, Patt-Shamir 97] • Growth-restricted graphs [Kuhn, Moscibroda, Wattenhofer 05] • MST [Elkin 04] • Empirical locality: voting in sensor networks • Although some instances require global computation, most can stabilize (and become quiescent) locally • In small neighborhood, independent of graph size • [Wolff, Schuster 03; Liss, Birk, Wolff, Schuster 04]

“Per-Instance” Optimality Too Strong • Instance: assignment of inputs to nodes • For a given instance I, algorithm A_I does: • if (my input is as in I) output f(I), else send message with input to neighbor • Upon receiving message, flood it • Upon collecting info from the whole graph, output f(I) • Convergence and output stabilization in zero time on I • Can you beat that? • Need to measure optimality per-class, not per-instance • Challenge: capture attainable locality

Local Complexity [BKLSW’06] • Let • $\mathcal{G}$ be a family of graphs • P be a problem on $\mathcal{G}$ • M be a performance measure • Classification $C_G$ of inputs to P on a graph G into classes C • For class of inputs C, $M_{LB}(C)$ be a lower bound for computing P on all inputs in C • Locality: $\forall G \in \mathcal{G}\ \forall C \in C_G\ \forall I \in C:\ M_A(I) \le \mathrm{const} \cdot M_{LB}(C)$ • A lower bound on a single instance is meaningless!

The Trick is in The Classification • Classification based on parameters • Peak load in WMN • Proximity to threshold in “voting” • Independent of system size • Practical solutions show clear relation between these parameters and costs • Parameters not always easy to pinpoint • Harder in more general problems • Like “general aggregation function”

Veracity Radius – Capturing the Locality of Distributed Computations Yitzhak Birk, Idit Keidar, Liran Liss, Assaf Schuster, and Ran Wolff

Dynamic Aggregation • Continuous monitoring of aggregate value over changing inputs • Examples: • More than 10% of sensors report seismic activity • Maximum temperature in data center • Average load in computation grid

The Setting • Large graph (e.g., sensor network) • Direct communication only between neighbors • Each node has a changing input • Inputs change more frequently than topology • Consider topology as static • Aggregate function f on multiplicity of inputs • Oblivious to locations • Aggregate result computed at all nodes
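The three example aggregates can be written as functions of the multiset of inputs alone, which is what "oblivious to locations" means here. The following is a minimal sketch; the function names, the 30-degree predicate, and the readings are hypothetical and chosen only for illustration.

```python
from collections import Counter


def fraction_exceeds(inputs, predicate, threshold):
    """True if more than `threshold` (a fraction) of the inputs satisfy `predicate`."""
    counts = Counter(bool(predicate(x)) for x in inputs)
    return counts[True] > threshold * len(inputs)


def maximum(inputs):
    """Largest input value, e.g. maximum temperature."""
    return max(inputs)


def average(inputs):
    """Mean input value, e.g. average load."""
    return sum(inputs) / len(inputs)


# Hypothetical sensor readings. Only the multiset matters, not which node
# holds which value, so any reordering yields the same aggregates.
readings = [21.5, 22.0, 35.5, 21.0, 22.5, 36.0, 21.5, 22.0, 21.0, 22.5]
shuffled = list(reversed(readings))

alarm = fraction_exceeds(readings, lambda t: t > 30, 0.10)
```

Because each function depends only on the values, moving a reading from one node to another never changes the result.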

Goals for Dynamic Aggregation • Fast convergence • If from some time t onward inputs do not change … • Output stabilization time from t • Quiescence time from t • Note: nodes do not know when stabilization and quiescence are achieved • If after stabilization input changes abruptly… • Efficient communication • Zero communication when there are zero changes • Small changes ⇒ little communication

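Standard Aggregation Solution: Spanning Tree • Global communication! [Figure: votes are summed up a spanning tree (subtree counts such as 1 black; 2 black; 7 black, 1 white) to a root total of 20 black, 12 white; the result “black!” is then sent back down the tree]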

Spanning Tree: Value Change • Global communication! [Figure: after one input changes, a subtree count becomes 6 black, 2 white and the root total becomes 19 black, 13 white]
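To make the cost of the spanning-tree approach concrete, here is a minimal sketch of tree aggregation for majority voting. The 7-node tree, its inputs, and all function names are hypothetical; the point is only that a single changed input must travel all the way to the root, even when the majority does not change.

```python
def aggregate_up(children, inputs, root):
    """Convergecast: return {node: (black, white)} vote counts per subtree."""
    counts = {}

    def visit(node):
        black = 1 if inputs[node] == "black" else 0
        white = 1 - black
        for child in children.get(node, []):
            b, w = visit(child)
            black += b
            white += w
        counts[node] = (black, white)
        return counts[node]

    visit(root)
    return counts


def majority(counts, root):
    """Global result as seen at the root."""
    black, white = counts[root]
    return "black" if black > white else "white"


def hops_to_root(parent, node):
    """Tree edges an updated count must cross before the root learns of it."""
    hops = 0
    while node in parent:
        node = parent[node]
        hops += 1
    return hops


# Hypothetical tree: node 0 is the root.
children = {0: [1, 2], 1: [3, 4], 2: [5, 6]}
parent = {c: p for p, cs in children.items() for c in cs}
inputs = {0: "black", 1: "black", 2: "white", 3: "black",
          4: "white", 5: "black", 6: "black"}

before = aggregate_up(children, inputs, 0)
inputs[3] = "white"                      # one leaf changes its vote
after = aggregate_up(children, inputs, 0)
```

In this example the root total goes from 5 black, 2 white to 4 black, 3 white. The result stays "black", yet the update from node 3 still crosses every edge on its path to the root.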

The Bad News • Virtually every aggregation function has instances that cannot be computed without communicating with the whole graph • E.g., majority voting when close to the threshold: “every vote counts” • Worst case analysis: convergence, quiescence times are $\Omega(\text{diameter})$

Local Aggregation – Intuition • Example – Majority Voting: • Consider a partition in which every set has the same aggregate result (e.g., >50% of the votes are for ‘1’) • Obviously, this result is also the global one! [Figure: a partition of the graph whose sets show 51%, 73%, 98%, 57%, 84%, 91%, 88%, 93%, 76%, 59%, 80%]
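The partition argument for majority voting can be written out in one line. As a sketch, assume the n nodes are partitioned into sets S_1, …, S_k and let x_i be the number of ‘1’ votes in S_i (both symbols are introduced here for illustration only):

```latex
\forall i:\; x_i > \tfrac{1}{2}\lvert S_i\rvert
\;\Longrightarrow\;
\sum_{i=1}^{k} x_i \;>\; \tfrac{1}{2}\sum_{i=1}^{k} \lvert S_i\rvert \;=\; \tfrac{n}{2}
```

Summing the per-set inequalities gives a strict global majority for ‘1’, so no set needs to learn anything about the others.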

Veracity Radius (VR) for One-Shot Aggregation [BKLSW, PODC’06] • Roughly speaking: the min radius $r_0$ such that $\forall r > r_0$: all r-neighborhoods have same result • Example: majority • Radius 1: wrong result • Radius 2: correct result • VR = 2
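The rough definition can be turned into a brute-force computation for small graphs. This is a minimal sketch under stated assumptions, not the formal definition from the paper: the graph is connected, the aggregate is binary majority, and VR is taken as the smallest radius from which every neighborhood agrees with the global result (chosen so that "radius 1 wrong, radius 2 correct" yields VR = 2, as in the example). The 5-node path and all names are hypothetical.

```python
from collections import deque


def neighborhood(adj, source, radius):
    """Set of nodes within `radius` hops of `source`, found by BFS."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        if dist[u] == radius:
            continue  # do not expand past the radius
        for v in adj[u]:
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return set(dist)


def majority(values):
    """1 if strictly more than half of the values are 1, else 0."""
    values = list(values)
    return 1 if 2 * sum(values) > len(values) else 0


def veracity_radius(adj, inputs):
    """Smallest r0 such that every r-neighborhood with r >= r0 matches the global result.

    Assumes a connected graph, so radius len(adj) - 1 always covers everything.
    """
    global_result = majority(inputs[v] for v in adj)
    vr = 0
    for r in range(len(adj)):
        agrees = all(
            majority(inputs[u] for u in neighborhood(adj, v, r)) == global_result
            for v in adj
        )
        if not agrees:
            vr = r + 1  # some r-neighborhood is still wrong
    return vr


# Hypothetical path 0-1-2-3-4 with a single dissenting vote at one end.
path = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
votes = {0: 0, 1: 1, 2: 1, 3: 1, 4: 1}
vr = veracity_radius(path, votes)
```

On this path the 1-neighborhood of node 0 is a tie and therefore yields the wrong result, while every 2-neighborhood already has a majority of ‘1’.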

Introducing Slack • Examine “neighborhood-like” environments that: • (1) include an a(r)-neighborhood for some a(r) < r • (2) are included in an r-neighborhood • Example: a(r) = max{r-1, r/2} [Figure labels: “r = 2: wrong result”; “Global result: VR_a = 3”]

VR Yields a Class-Based Lower Bound • VR for both input assignments is r • Node v cannot distinguish between I and I’ in fewer than r steps • Lower bound of r on both output stabilization and quiescence • Trivially tight bound for output stabilization [Figure: two input assignments, I and I’, around node v; labels: “n1 a’s” within radius r-1 of v, “n2 b’s”, “only b’s” (I), “only a’s” (I’)]

Veracity Radius Captures the Locality of One-Shot Aggregation [BKLSW,PODC’06] • I-LEAG (Instance-Local Efficient Aggregation on Graphs) • Quiescence and output stabilization proportional to VR • Per-class within a factor of optimal • Local: depends on VR, not graph size! • Note: nodes do not know VR or when stabilization and quiescence are achieved • Can’t expect to know you’re “done” in dynamic aggregation…

Local Partition Hierarchy • Topology static • Input changes more frequently • Build structure to assist aggregation • Once per topology change • Spanning tree, but with locality properties

Minimal Slack LPH for Meshes with a(r) = max(r-1, r/2) [Figure legend: mesh edge; level 0, level 1, and level 2 edges; level 0, level 1, and level 2 pivots]

The I-LEAG Algorithm • Phases correspond to LPH levels • Communication occurs within a cluster only if there are nodes with conflicting outputs • All of the cluster’s nodes hold the same output when the phase completes • All clusters’ neighbors know the cluster’s output • Conflicts are detected without communication • I-LEAG reaches quiescence once the last conflict is detected
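The phase structure can be illustrated with a heavily simplified simulation. This is a sketch under loud assumptions and not the I-LEAG algorithm itself: a line of 2^k nodes with clusters of size 2, 4, 8, … stands in for the local partition hierarchy, there are no pivots or tree edges, and a "conflict" is simply a cluster whose nodes currently hold different outputs. All names are hypothetical.

```python
def majority(values):
    """1 if strictly more than half of the values are 1, else 0."""
    return 1 if 2 * sum(values) > len(values) else 0


def hierarchical_majority(inputs):
    """Simulate conflict-driven phases; return (outputs, active clusters per phase)."""
    n = len(inputs)
    assert n > 0 and n & (n - 1) == 0, "sketch assumes a power-of-two node count"
    outputs = list(inputs)        # initialization: each node's output is its input
    active_per_phase = []         # how many clusters had to communicate, per phase
    size = 2
    while size <= n:
        active = 0
        for start in range(0, n, size):
            cluster = range(start, start + size)
            if len({outputs[i] for i in cluster}) > 1:
                # Conflict: aggregate the cluster's inputs and update all members.
                result = majority([inputs[i] for i in cluster])
                for i in cluster:
                    outputs[i] = result
                active += 1
            # No conflict: the cluster stays silent in this phase.
        active_per_phase.append(active)
        size *= 2
    return outputs, active_per_phase


# Hypothetical instance: a single dissenting vote among eight nodes.
outputs, active = hierarchical_majority([1, 1, 1, 1, 1, 1, 0, 1])
```

In this run only the clusters containing the dissenting node communicate, in the first two phases, and the top-level phase finds no conflict. For majority voting, agreement among correct sub-cluster outputs implies the merged cluster's output is also correct, which is why silent clusters lose nothing.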

I-LEAG’s Operation (Majority Voting) • Initialization: node’s output is its input [Figure legend: input, output, message, tree edge, conflict (!)]

Startup: Communication Among Tree Neighbors • Recall: neighbor values will be used in all phases

Phase 0 Conflict Detection

Phase 0 Conflict Resolution • Updates sent by clusters that had conflicts

Phase 1 Conflict Detection • No new communication

Phase 1 Conflict Resolution • Updates sent by clusters that had conflicts

Phase 2 Conflict Detection • Using information sent at phase 0 • No communication

Phase 2 Conflict Resolution • This region has been idle since phase 0 • No conflicts found, no need for resolution