BUT SWS 2013 - Massive parallel approach


BUT SWS 2013 - Massive parallel approach. Brno University of Technology, Faculty of Information Technology, Speech@FIT. Igor Szöke, Lukáš Burget, František Grézl, Lucas Ondel. MediaEval SWS 2013 workshop, October 18.-19. 2013, Barcelona.



Presentation Transcript

BUT SWS 2013 - Massive parallel approach Brno University of Technology Faculty of Information Technology Speech@FIT Igor Szöke, Lukáš Burget, František Grézl, Lucas Ondel MediaEval SWS 2013 workshop, October 18.-19. 2013, Barcelona

Outline • Systems overview & underlying technologies • AKWS • DTW • Calibration • Fusion • Results and discussion

System overview • Our internal task was to reuse as many atomic systems as we have and fuse them on the detection level. • We ended up with: 13 atomic systems, 26 QbE sub-systems, 19 languages (16 unique), a zero-resource system • Ingredients: phoneme recognizer, acoustic keyword spotting (AKWS), DTW, calibration, fusion

System overview Igor’s Greeting

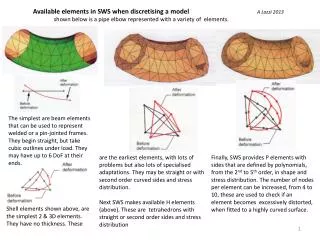

Subsystem • Sentence mean normalization • Neural network based features • Three-state phone posteriors • Query detector • AKWS • DTW

Atomic system • Adaptation on target data (GP and BABEL NNs) • The original NN is used for target data labeling (state level) • Then a universal-context, bottle-neck neural network based classifier is trained. • LCRC, SWS2012: without any adaptation.

AKWS QbE subsystem • Query -> example-to-text using the phoneme recognizer • Omit initial and final silence • Omit queries having fewer than 3 non-silence phonemes • No LM constraints
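The two query-cleaning rules above can be sketched in a few lines. This is only an illustration, not the authors' code: the function name `trim_and_filter`, the silence label `"sil"` and the list-of-strings representation of the recognizer output are all assumptions.

```python
def trim_and_filter(phonemes, silence="sil", min_phonemes=3):
    """Strip initial/final silence from a decoded query.

    Returns the trimmed phoneme list, or None when fewer than
    `min_phonemes` non-silence phonemes remain (the query is omitted).
    The label "sil" is a hypothetical silence symbol.
    """
    start, end = 0, len(phonemes)
    while start < end and phonemes[start] == silence:
        start += 1
    while end > start and phonemes[end - 1] == silence:
        end -= 1
    trimmed = phonemes[start:end]
    n_speech = sum(1 for p in trimmed if p != silence)
    return trimmed if n_speech >= min_phonemes else None
```

Silence inside the query is kept, since the slide only mentions initial and final silence.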

DTW QbE subsystem • Segmental DTW (the query can start in any frame of the utterance) • Log dot product over phoneme state posteriors • Path cost: 1, 1, 1 • On-line normalization of the path: while filling a cell in the distance matrix, the value already considers the length of the previous path • We added VAD as a late submission -> really huge impact • Initial and final silence frames were removed from the examples
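A minimal NumPy sketch of this kind of segmental DTW follows. It rests on several assumptions that the slide does not confirm: the local distance is taken as the negative log of the dot product, "path cost 1, 1, 1" is read as equal weights on the vertical, horizontal and diagonal steps, and on-line normalization is implemented by storing the path length in every cell and picking the predecessor with the lowest length-normalized cost. The name `segmental_dtw` and the `eps` floor are illustrative.

```python
import numpy as np

def segmental_dtw(query, utt, eps=1e-10):
    """query: (Q, D) posteriors, utt: (U, D) posteriors.

    Returns, for every utterance frame, the length-normalized cost of
    the best path that ends there and covers the whole query.
    """
    dist = -np.log(query @ utt.T + eps)        # (Q, U) local distances
    n_q, n_u = dist.shape
    acc = np.full((n_q, n_u), np.inf)          # accumulated cost
    length = np.ones((n_q, n_u))               # path length per cell
    acc[0, :] = dist[0, :]                     # a path may start at any frame
    for i in range(1, n_q):
        for j in range(n_u):
            best = None
            # vertical, horizontal and diagonal steps, all with weight 1
            for pi, pj in ((i - 1, j), (i, j - 1), (i - 1, j - 1)):
                if pj < 0:
                    continue
                cand_acc = acc[pi, pj] + dist[i, j]
                cand_len = length[pi, pj] + 1
                if best is None or cand_acc / cand_len < best[0] / best[1]:
                    best = (cand_acc, cand_len)
            acc[i, j], length[i, j] = best
    return acc[-1] / length[-1]
```

Low values in the returned vector mark candidate detection end points. Choosing predecessors by normalized cost is a greedy heuristic, so the result is not guaranteed to be the globally best normalized path.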

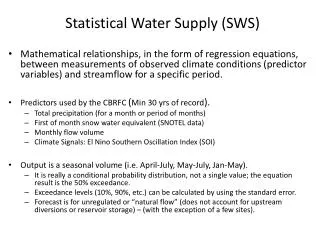

Calibration • Really important! • Variants: no-norm, z-norm, z-norm_sideinfo, m-norm (the best) • Experiments with adding side info [log(#term_occ), #phn, log(#nonsilence frames)] • A linear model was trained (using logistic regression) • Good improvement • M-norm: find the peak in the histogram of term scores • Calculate the variance of the data in <peak, +inf> • Apply variance normalization to the whole data set • Subtract the peak (shift the peak to 0) • Even better than z-norm • Side info did not help (which suggests m-norm is calibrated well enough)
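The m-norm steps listed above might look roughly like this in NumPy. It is a sketch under stated assumptions rather than the authors' implementation: the peak is taken as the center of the fullest histogram bin, the variance of the right-hand side is measured around that peak, and the bin count and the name `m_norm` are arbitrary choices.

```python
import numpy as np

def m_norm(scores, bins=50):
    """Normalize the detection scores of one term (m-norm sketch)."""
    scores = np.asarray(scores, dtype=float)
    counts, edges = np.histogram(scores, bins=bins)
    k = int(np.argmax(counts))
    peak = 0.5 * (edges[k] + edges[k + 1])     # assumed: center of fullest bin
    right = scores[scores >= peak]             # data in <peak, +inf>
    # assumed: spread measured around the peak, not around the mean
    std = np.sqrt(np.mean((right - peak) ** 2)) if right.size else 0.0
    if std == 0.0:
        std = 1.0                              # degenerate case: leave the scale alone
    return (scores - peak) / std               # peak ends up at 0
```

Using only the part of the distribution to the right of the peak presumably keeps the scale estimate from being dominated by the tail on the other side, which is a plausible reason for the gain over z-norm, though the slide does not spell this out.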

Figure: DTW and AKWS, each shown for Orig, Z-norm and M-norm

Calibration • 1 AKWS subsystem, MTWV (UBTWV) • orig: 0.0000 (0.1012) • z-norm: 0.0330 (0.1434) • z-norm_side: 0.0603 (0.1436) • m-norm: 0.0769 (0.1611)

Fusion • Linear combination of subsystems (and one bias) • Trained to minimize cross entropy (binary logistic regression) • Detections are clustered • A system not producing any score at a given time gets a default score
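As a rough illustration of this fusion scheme, the sketch below fills missing subsystem scores with defaults and fits the weights and bias by plain gradient descent on the cross entropy. It assumes the detections have already been clustered into rows of a score matrix, with NaN marking a subsystem that produced nothing for that cluster. The default values, learning rate, iteration count and function names are hypothetical.

```python
import numpy as np

def fill_defaults(scores, defaults):
    """scores: (N, S) matrix, NaN where a subsystem gave no detection."""
    return np.where(np.isnan(scores), defaults, scores)

def train_fusion(scores, labels, lr=0.1, n_iter=2000):
    """Binary logistic regression: one weight per subsystem plus a bias."""
    n, s = scores.shape
    w, b = np.zeros(s), 0.0
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(scores @ w + b)))
        grad = p - labels                      # gradient of the cross entropy
        w -= lr * (scores.T @ grad) / n
        b -= lr * grad.mean()
    return w, b

def fuse(scores, w, b):
    """Fused detection score: linear combination plus bias."""
    return scores @ w + b
```

A typical call sequence is `fill_defaults` on the training matrix, then `train_fusion` with 0/1 labels, then `fuse` on new (default-filled) score rows.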

Results • Scores are reported as MTWV (UBTWV) • UBTWV: non-pooled TWV with ideal (oracle) calibration • DTW is superior to AKWS, but at the cost of speed • There are still some gaps in calibration (the difference between DEV and EVAL TWV) • Unsupervised NN adaptation helped • 1 AKWS subsystem: 0.0443 (0.1154) -> 0.0769 (0.1630) • m-norm! • Lots of directions for research