Contents of Chapter 5

720 likes | 2.1k Vues

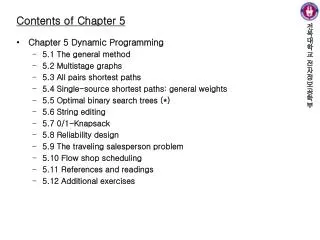

Contents of Chapter 5. Chapter 5 Dynamic Programming 5.1 The general method 5.2 Multistage graphs 5.3 All pairs shortest paths 5.4 Single-source shortest paths: general weights 5.5 Optimal binary search trees (*) 5.6 String editing 5.7 0/1-Knapsack 5.8 Reliability design

Contents of Chapter 5

E N D

Presentation Transcript

Contents of Chapter 5 • Chapter 5 Dynamic Programming • 5.1 The general method • 5.2 Multistage graphs • 5.3 All pairs shortest paths • 5.4 Single-source shortest paths: general weights • 5.5 Optimal binary search trees (*) • 5.6 String editing • 5.7 0/1-Knapsack • 5.8 Reliability design • 5.9 The traveling salesperson problem • 5.10 Flow shop scheduling • 5.11 References and readings • 5.12 Additional exercises

5.1 The General Method • Dynamic programming • An algorithm design method that can be used when the solution can be viewed as the result of a sequence of decisions • Many problems in earlier chapters can be viewed this way • Example 5.1 (Knapsack) • Example 5.2 (Optimal merge patterns) • Example 5.3 (shortest path) • Some solvable by Greedy method under the condition • Condition: an optimal sequence of decisions can be found by making the decisions one at a time and never making an erroneous decision • For many other problems • Not possible to make stepwise decisions (based only on local information) in a manner like Greedy method • Example 5.4 (Shortest path: from vertex i to j)

5.1 The General Method • Enumeration vs. dynamic programming • Enumeration • Enumerating all possible decision sequences and picking out the best • Prohibitive time and storage requirements • Dynamic programming • Drastically reducing the time and storage by avoiding some decision sequences that cannot possibly be optimal • Making explicit appeal to the principle of optimality • Definition 5.1 [Principle of optimality] The principle of optimality states that an optimal sequence of decisions has the property that whatever the initial state and decision are, the remaining decisions must constitute an optimal decision sequence with regard to the state resulting from the first decision.

5.1 The General Method • Greedy method vs. dynamic programming • Greedy method • Only one decision sequence is ever generated • Dynamic programming • Many decision sequences may be generated • But sequences containing suboptimal subsequences discarded because they cannot be optimal due to the principle of optimality • Principle of optimality • Example 5.5 (Shortest path) • Example 5.6 (0/1 knapsack)

5.1 The General Method • Notation and formulation for the principle • Notation and formulation • S0: initial problem state • n decisions di, 1≤i≤n, have to be made to solve the problem, and D1={r1,r2,…,rj} is the set of possible decision values for d1 • Si is the problem state when ri is chosen, and Γi is an optimal sequence wrt Si, 1≤i≤j • Then, when the principle of optimality holds, an optimal sequence wrt S0 is the best of the decision sequences ri,Γi, 1≤i≤j • Examples 5.7 and 5.9 (Shortest path) • Examples 5.8 and 5.10 (0/1 knapsack) • g0(m) = max {g1(m), g1(m-w1)+p1} (5.2) • gi(y) = max {gi+1(y), gi+1(y-wi+1)+pi+1} (5.3)

5.1 The General Method • Example 5.11 (0/1 knapsack) • n=3, (w1,w2,w3)=(2,3,4), (p1,p2,p3)=(1,2,5), m=6 • Recursive application of (5.3), with gn(y)=0 for y≥0 and gn(y)=-∞ for y<0 • g0(6) = max {g1(6), g1(4)+1} = max {5, 5+1} = 6 • g1(6) = max {g2(6), g2(3)+2} = max {5,2} = 5 • g2(6) = max {g3(6), g3(2)+5} = max {0,5} = 5 • g2(3) = max {g3(3), g3(3-4)+5} = max {0,-∞} = 0 • g1(4) = max {g2(4), g2(1)+2} = max {5,2} = 5 • g2(4) = max {g3(4), g3(0)+5} = max {0,5} = 5 • g2(1) = max {g3(1), g3(1-4)+5} = max {0,-∞} = 0
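
The recursive application of (5.3) in Example 5.11 can be sketched in C++ as follows. This is a minimal illustration, not the book's program: the function name `g`, the 0-based vectors `w` and `p`, and the use of a large negative sentinel for -∞ are assumptions made for the sketch. It deliberately does not memoize, so it mirrors the hand computation rather than an efficient implementation.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

// g(i, y): best profit obtainable from objects i+1..n with remaining
// capacity y, following recurrence (5.3). The vectors are 0-based, so
// object i+1 of the text is stored at w[i], p[i] (an assumption of this sketch).
long long g(std::size_t i, long long y,
            const std::vector<long long>& w,
            const std::vector<long long>& p) {
    assert(w.size() == p.size());
    // Stand-in for minus infinity; halved so adding a profit cannot overflow.
    const long long NEG_INF = std::numeric_limits<long long>::min() / 2;
    if (y < 0) return NEG_INF;        // capacity exceeded: infeasible
    if (i == w.size()) return 0;      // g_n(y) = 0 for y >= 0
    long long skip = g(i + 1, y, w, p);                 // x_{i+1} = 0
    long long take = g(i + 1, y - w[i], w, p) + p[i];   // x_{i+1} = 1
    return std::max(skip, take);
}
```

Calling `g(0, 6, w, p)` with `w = {2, 3, 4}` and `p = {1, 2, 5}` corresponds to g0(6) in the example.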

5.2 Multistage Graphs • Definition: multistage graph G(V,E) • A directed graph in which the vertices are partitioned into k≥2 disjoint sets Vi, 1≤i≤k • If <u,v> ∈ E, then u ∈ Vi and v ∈ Vi+1 for some i, 1≤i<k • |V1|= |Vk|=1, and s(source) ∈ V1 and t(sink) ∈ Vk • c(i,j)=cost of edge <i,j> • Definition: multistage graph problem • Find a minimum-cost path from s to t • e.g., Figure 5.2 (5-stage graph)

5.2 Multistage Graphs • Many problems can be formulated as multistage graph problem • An example: resource allocation problem • n units of resource are to be allocated to r projects • N(i,j) = net profit when j units of resource allocated to project i • V(i,j) = vertex representing the state in which a total of j units have already been allocated to projects 1,2,..,i-1

5.2 Multistage Graphs • e.g., Figure 5.3 (3 projects with n=4)

5.2 Multistage Graphs • DP formulation • Every s to t path is the result of a sequence of k-2 decisions • The principle of optimality holds (Why?) • p(i,j) = a minimum-cost path from vertex j in Vi to vertex t • cost(i,j) = cost of path p(i,j) • cost(i,j) = min { c(j,l) + cost(i+1,l) : l ∈ Vi+1, <j,l> ∈ E } (5.5) • Solving (5.5) • cost(k-1,j) = c(j,t) if <j,t> ∈ E, ∞ otherwise • Then computing cost(k-2,j) for all j ∈ Vk-2 • Then computing cost(k-3,j) for all j ∈ Vk-3 • … • Finally computing cost(1,s)

5.2 Multistage Graphs • Example: Figure 5.2 (k=5) • Stage 5 • cost(5,12) = 0.0 • Stage 4 • cost(4,9) = min {4+cost(5,12)} = 4 • cost(4,10) = min {2+cost(5,12)} = 2 • cost(4,11) = min {5+cost(5,12)} = 5 • Stage 3 • cost(3,6) = min {6+cost(4,9), 5+cost(4,10)} = 7 • cost(3,7) = min {4+cost(4,9), 3+cost(4,10)} = 5 • cost(3,8) = min {5+cost(4,10), 6+cost(4,11)} = 7

5.2 Multistage Graphs • Example: Figure 5.2 (k=5) (Continued) • Stage 2 • cost(2,2) = min {4+cost(3,6), 2+cost(3,7), 1+cost(3,8)} = 7 • cost(2,3) = min {2+cost(3,6), 7+cost(3,7)} = 9 • cost(2,4) = min {11+cost(3,8)} = 18 • cost(2,5) = min {11+cost(3,7), 8+cost(3,8)} = 15 • Stage 1 • cost(1,1) = min {9+cost(2,2), 7+cost(2,3), 3+cost(2,4), 2+cost(2,5)} = 16 • Important notes: avoiding the recomputation of cost(3,6), cost(3,7), and cost(3,8) in computing cost(2,2)

5.2 Multistage Graphs • Recording the path • d(i,j) = value of l (l is a vertex) that minimizes c(j,l)+cost(i+1,l) in equation (5.5) • In Figure 5.2 • d(3,6)=10; d(3,7)=10; d(3,8)=10 • d(2,2)=7; d(2,3)=6; d(2,4)=8; d(2,5)=8 • d(1,1)=2 • Let the minimum-cost path be s=1, v2, v3, …, vk-1, t • v2 = d(1,1) = 2 • v3 = d(2,d(1,1)) = d(2,2) = 7 • v4 = d(3,d(2,d(1,1))) = d(3,7) = 10 • So the solution (minimum-cost path) is 1, 2, 7, 10, 12 and its cost is 16

5.2 Multistage Graphs • Algorithm (Program 5.1) • Vertices numbered in order of stages (like Figure 5.2)

    void Fgraph(graph G, int k, int n, int p[])
    // The input is a k-stage graph G = (V,E) with n vertices indexed in order
    // of stages. E is a set of edges and c[i][j] is the cost of <i, j>.
    // p[1 : k] is a minimum-cost path.
    {
        float cost[MAXSIZE];
        int d[MAXSIZE], r;
        cost[n] = 0.0;
        for (int j = n-1; j >= 1; j--) {
            // Compute cost[j].
            let r be a vertex such that <j, r> is an edge of G
                and c[j][r] + cost[r] is minimum;
            cost[j] = c[j][r] + cost[r];
            d[j] = r;
        }
        // Find a minimum-cost path.
        p[1] = 1; p[k] = n;
        for (int j = 2; j <= k-1; j++)   // j redeclared: the first loop's j is out of scope
            p[j] = d[p[j-1]];
    }
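
Program 5.1 leaves the choice of r as a pseudocode step. One way to make it concrete is sketched below. It is an illustration under stated assumptions, not the book's code: the graph is passed as a 1-based cost matrix with infinity marking a missing edge, ties are broken in favor of the lowest-numbered vertex, and the names `fgraph` and `figure52` are hypothetical. The edge costs in `figure52` are the ones that appear in the worked computation for Figure 5.2.

```cpp
#include <cassert>
#include <limits>
#include <vector>

const double INF = std::numeric_limits<double>::infinity();
using Matrix = std::vector<std::vector<double>>;

// Forward approach: c is (n+1) x (n+1), 1-based, INF where there is no edge.
// Returns p[1..k] (p[0] unused) and stores the path cost in minCost.
std::vector<int> fgraph(const Matrix& c, int k, int n, double& minCost) {
    assert(k >= 2 && n >= k);
    std::vector<double> cost(n + 1, INF);
    std::vector<int> d(n + 1, 0);
    cost[n] = 0.0;
    for (int j = n - 1; j >= 1; --j) {
        // Vertices are numbered by stage, so every successor r has r > j.
        for (int r = j + 1; r <= n; ++r) {
            if (c[j][r] + cost[r] < cost[j]) {   // strict <: lowest r wins ties
                cost[j] = c[j][r] + cost[r];
                d[j] = r;
            }
        }
    }
    std::vector<int> p(k + 1, 0);
    p[1] = 1;
    p[k] = n;
    for (int j = 2; j <= k - 1; ++j) p[j] = d[p[j - 1]];
    minCost = cost[1];
    return p;
}

// Edge costs read off the worked example for Figure 5.2.
Matrix figure52() {
    Matrix c(13, std::vector<double>(13, INF));
    c[1][2] = 9;  c[1][3] = 7;  c[1][4] = 3;  c[1][5] = 2;
    c[2][6] = 4;  c[2][7] = 2;  c[2][8] = 1;
    c[3][6] = 2;  c[3][7] = 7;
    c[4][8] = 11;
    c[5][7] = 11; c[5][8] = 8;
    c[6][9] = 6;  c[6][10] = 5;
    c[7][9] = 4;  c[7][10] = 3;
    c[8][10] = 5; c[8][11] = 6;
    c[9][12] = 4; c[10][12] = 2; c[11][12] = 5;
    return c;
}
```

With `k = 5` and `n = 12`, the sketch reproduces the path 1, 2, 7, 10, 12 of cost 16. A matrix costs O(n^2) time; an adjacency-list representation would be the natural choice if the Θ(|V|+|E|) bound matters.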

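5.2 Multistage Graphs • Backward approach • bp(i,j) = a minimum-cost path from vertex s to vertex j in Vi • bcost(i,j) = cost of path bp(i,j) • bcost(i,j) = min { bcost(i-1,l) + c(l,j) : l ∈ Vi-1, <l,j> ∈ E } (5.6)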
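
The backward approach can be sketched in the same style. Here bcost(j) is taken to be the cost of a cheapest path from the source to vertex j, so each vertex looks at its incoming edges instead of its outgoing ones. The `Edge` struct and the function names are assumptions of this sketch, and the edge list is again the one implied by the Figure 5.2 computation.

```cpp
#include <cassert>
#include <limits>
#include <vector>

struct Edge { int from, to; double cost; };

// Backward approach: bcost[j] = cost of a cheapest path from vertex 1 to j.
// Assumes vertices 1..n are numbered in order of stages, so from < to.
double bgraphCost(int n, const std::vector<Edge>& edges) {
    assert(n >= 2);
    const double INF = std::numeric_limits<double>::infinity();
    std::vector<double> bcost(n + 1, INF);
    bcost[1] = 0.0;
    for (int j = 2; j <= n; ++j) {
        for (const Edge& e : edges) {
            // Only edges entering j matter; bcost[e.from] is already final.
            if (e.to == j && bcost[e.from] + e.cost < bcost[j]) {
                bcost[j] = bcost[e.from] + e.cost;
            }
        }
    }
    return bcost[n];
}

// Edge list read off the worked example for Figure 5.2.
std::vector<Edge> figure52Edges() {
    return {{1, 2, 9},  {1, 3, 7},  {1, 4, 3},   {1, 5, 2},  {2, 6, 4},
            {2, 7, 2},  {2, 8, 1},  {3, 6, 2},   {3, 7, 7},  {4, 8, 11},
            {5, 7, 11}, {5, 8, 8},  {6, 9, 6},   {6, 10, 5}, {7, 9, 4},
            {7, 10, 3}, {8, 10, 5}, {8, 11, 6},  {9, 12, 4}, {10, 12, 2},
            {11, 12, 5}};
}
```

Both directions give the same optimum (16 for Figure 5.2); scanning the whole edge list for every vertex keeps the sketch short at the price of O(n·|E|) time.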

5.3 All Pairs Shortest Paths • Problem definition • Determine a matrix A such that A(i,j) is the length of a shortest path from i to j • One method using the algorithm ShortestPaths in Section 4.8 • Each vertex requiring O(n^2) time, so total time is O(n^3) • Restriction: no negative edge allowed • DP algorithm • The principle of optimality (Does it hold? Why?) • (weaker) restriction: no cycle with negative length (Figure 5.5)

5.3 All Pairs Shortest Paths • DP algorithm (Continued) • A^k(i,j) = the length of a shortest path from i to j going through no vertex of index greater than k • A^0(i,j) = cost(i,j) • A^k(i,j) = min { A^(k-1)(i,j), A^(k-1)(i,k) + A^(k-1)(k,j) }, k≥1 (5.8) • Example 5.15 (Figure 5.6)

5.3 All Pairs Shortest Paths • Algorithm (Program 5.3) • The update of A[i][j] in the innermost loop (line 13 of the program) is carried out in place (Why is it possible?)

    void AllPaths(float cost[][SIZE], float A[][SIZE], int n)
    // cost[1:n][1:n] is the cost adjacency matrix of
    // a graph with n vertices; A[i][j] is the cost
    // of a shortest path from vertex i to vertex j.
    // cost[i][i] = 0.0, for 1 <= i <= n.
    {
        for (int i = 1; i <= n; i++)
            for (int j = 1; j <= n; j++)
                A[i][j] = cost[i][j];   // Copy cost into A.
        for (int k = 1; k <= n; k++)
            for (int i = 1; i <= n; i++)   // i redeclared: the first loop's i is out of scope
                for (int j = 1; j <= n; j++)
                    A[i][j] = min(A[i][j], A[i][k] + A[k][j]);
    }
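
For experimenting with Program 5.3, a self-contained variant is sketched below. It assumes 0-based `std::vector` storage and infinity for a missing edge, and the name `allPaths` is hypothetical. The in-place update is safe because row k and column k do not change during iteration k when cost[k][k] = 0 and there is no negative cycle.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

using Matrix = std::vector<std::vector<double>>;

// All pairs shortest paths via recurrence (5.8), updating A in place.
// cost is n x n, 0-based, cost[i][i] = 0, infinity where there is no edge.
Matrix allPaths(const Matrix& cost) {
    const std::size_t n = cost.size();
    for (const auto& row : cost) assert(row.size() == n);
    Matrix A = cost;   // A^0 = cost
    for (std::size_t k = 0; k < n; ++k)
        for (std::size_t i = 0; i < n; ++i)
            for (std::size_t j = 0; j < n; ++j)
                A[i][j] = std::min(A[i][j], A[i][k] + A[k][j]);
    return A;
}
```

As a usage example on a small hypothetical 3-vertex graph, the input `{{0, 4, 11}, {6, 0, 2}, {3, INF, 0}}` yields `{{0, 4, 6}, {5, 0, 2}, {3, 7, 0}}`: the path 1, 2, 3 (cost 6) replaces the direct edge of cost 11, and vertex 3 reaches vertex 2 through vertex 1.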

5.6 String Editing • Problem • Given two strings X=x1x2…xn and Y=y1y2…ym • Edit operations and their costs • D(xi) = cost of deleting xi from X • I(yj) = cost of inserting yj into X • C(xi,yj) = cost of changing xi of X into yj • The problem is to identify a minimum-cost sequence of edit operations that will transform X into Y • Example 5.19 • X=x1x2…x5=aabab and Y=y1y2…y4=babb • D()=I()=1 and C()=2 • One way of transforming • D(x1) D(x2) D(x3) D(x4) D(x5) I(y1) I(y2) I(y3) I(y4) = 9 • Another way • D(x1) D(x2) I(y4) = 3 • The principle of optimality holds (Why?)

5.6 String Editing • Dynamic programming formulation • cost(i,j) = the minimum cost of any edit sequence for transforming x1x2…xi into y1y2…yj (for 0≤i≤n and 0≤j≤m) • For i=0 and j=0, cost(i,j) = 0 • For j=0 and i>0, cost(i,0) = cost(i-1,0)+D(xi) • For j>0 and i=0, cost(0,j) = cost(0,j-1)+I(yj) • For i>0 and j>0, x1x2…xi can be transformed into y1y2…yj in one of three ways • 1. Transform x1, x2, …,xi-1 into y1, y2, …, yj using a minimum-cost edit sequence and then delete xi. The corresponding cost is cost(i-1, j) + D(xi). • 2. Transform x1, x2, …,xi-1 into y1, y2, …, yj-1 using a minimum-cost edit sequence and then change the symbol xi to yj. The associated cost is cost(i-1, j-1) + C(xi,yj). • 3. Transform x1, x2, …,xi into y1, y2, …, yj-1 using a minimum-cost edit sequence and then insert yj. This corresponds to a cost of cost(i, j-1) + I(yj).

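5.6 String Editing • Recurrence (5.13) • cost(i,j) = 0 if i=0 and j=0 • cost(i,j) = cost(i-1,0) + D(xi) if j=0 and i>0 • cost(i,j) = cost(0,j-1) + I(yj) if i=0 and j>0 • cost(i,j) = cost'(i,j) if i>0 and j>0 • where cost'(i,j) = min { cost(i-1,j) + D(xi), cost(i-1,j-1) + C(xi,yj), cost(i,j-1) + I(yj) }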

5.6 String Editing • Example 5.20 • Consider the string editing problem of Example 5.19: X = a, a, b, a, b and Y = b, a, b, b • Each insertion and deletion has a unit cost and a change costs 2 units (changing a symbol into itself costs 0) • For the cases i=0, j>0 and j=0, i>0, cost(i, j) can be computed first (Figure 5.18) • The rest of the entries are computed in row-major order, starting with cost(1,1) • cost(1,1) = min { cost(0,1) + D(x1), cost(0,0) + C(x1,y1), cost(1,0) + I(y1) } = min { 2, 2, 2 } = 2 • cost(1,2) = min { cost(0,2) + D(x1), cost(0,1) + C(x1,y2), cost(1,1) + I(y2) } = min { 3, 1, 3 } = 1, since x1 = y2 = a gives C(x1,y2) = 0
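
The table computation of Example 5.20 can be sketched as follows. The sketch assumes uniform costs (one deletion cost, one insertion cost, one change cost, and a zero-cost change when the two symbols are equal), which matches the example but is narrower than the general D, I, C functions of the formulation. The name `editCost` and its default arguments are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

// Minimum cost of transforming x into y, filling cost(i,j) in row-major order.
// del, ins, chg are the uniform costs of a deletion, insertion, and change.
int editCost(const std::string& x, const std::string& y,
             int del = 1, int ins = 1, int chg = 2) {
    assert(del >= 0 && ins >= 0 && chg >= 0);
    const std::size_t n = x.size(), m = y.size();
    std::vector<std::vector<int>> cost(n + 1, std::vector<int>(m + 1, 0));
    for (std::size_t i = 1; i <= n; ++i) cost[i][0] = cost[i - 1][0] + del;
    for (std::size_t j = 1; j <= m; ++j) cost[0][j] = cost[0][j - 1] + ins;
    for (std::size_t i = 1; i <= n; ++i) {
        for (std::size_t j = 1; j <= m; ++j) {
            // x and y are 0-based, so x_i of the text is x[i-1].
            const int c = (x[i - 1] == y[j - 1]) ? 0 : chg;
            cost[i][j] = std::min({cost[i - 1][j] + del,       // delete x_i
                                   cost[i - 1][j - 1] + c,     // change x_i to y_j
                                   cost[i][j - 1] + ins});     // insert y_j
        }
    }
    return cost[n][m];
}
```

For X = aabab and Y = babb the function returns 3, the cost of the second edit sequence in Example 5.19. The table takes O(nm) time and space.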

5.9 The Traveling Salesperson Problem • Problem • TSP is a permutation problem (not subset problem) • Usually the permutation problem is harder than the subset one • Because n! > 2^n • Given a directed graph G(V,E) • cij= edge cost • A tour is a directed simple cycle that includes every vertex in V • The TSP is to find a tour of minimum cost • Many applications • 1. Routing a postal van to pick up mail from mail boxes located at n different sites • 2. Planning robot arm movements to tighten the nuts on n different positions • 3. Planning production in which n different commodities are manufactured on the same sets of machines

5.9 The Traveling Salesperson Problem • DP formulation • Assumption: A tour starts and ends at vertex 1 • The principle of optimality holds (Why?) • g(i,S) = length of a shortest path starting at vertex i, going through all vertices in S, and terminating at vertex 1 • g(1,V-{1}) = min { c1k + g(k,V-{1,k}) : 2≤k≤n } (5.20) • g(i,S) = min { cij + g(j,S-{j}) : j ∈ S } (5.21) • Solving the recurrence relation • g(i,Φ) = ci1, 1≤i≤n • Then obtain g(i,S) for all S of size 1 • Then obtain g(i,S) for all S of size 2 • Then obtain g(i,S) for all S of size 3 • … • Finally obtain g(1,V-{1})

5.9 The Traveling Salesperson Problem • Example 5.26 (Figure 5.21: (a) directed graph on vertices 1 to 4, (b) edge cost matrix) • For |S|=0 • g(2,Φ)=c21=5 • g(3,Φ)=c31=6 • g(4,Φ)=c41=8 • For |S|=1 • g(2,{3})=c23+g(3,Φ)=15 • g(2,{4})=c24+g(4,Φ)=18 • g(3,{2})=c32+g(2,Φ)=18 • g(3,{4})=c34+g(4,Φ)=20 • g(4,{2})=c42+g(2,Φ)=13 • g(4,{3})=c43+g(3,Φ)=15

5.9 The Traveling Salesperson Problem • Example 5.26 (Continued) • For |S|=2 • g(2,{3,4}) = min {c23+g(3,{4}), c24+g(4,{3})} = 25 • g(3,{2,4}) = min {c32+g(2,{4}), c34+g(4,{2})} = 25 • g(4,{2,3}) = min {c42+g(2,{3}), c43+g(3,{2})} = 23 • For |S|=3 • g(1,{2,3,4}) = min {c12+g(2,{3,4}), c13+g(3,{2,4}), c14+g(4,{2,3})} = min {35, 40, 43} = 35 • Time complexity • Let N be the number of g(i,S) that have to be computed before (5.20) can be used to compute g(1,V-{1}) • N = Σ (n-1)·C(n-2,k) over 0≤k≤n-2 = (n-1)·2^(n-2) • Each g(i,S) with |S|=k takes O(k) time, so total time = O(n^2 2^n) • This is better than enumerating all n! different tours
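
Recurrences (5.20) and (5.21) can be sketched with subsets encoded as bit masks. Everything below is an assumption made for illustration: the name `tspCost`, the 0-based matrix in which index 0 plays the role of vertex 1, and the cap of 20 vertices (the table has 2^(n-1) rows). The matrix in the usage note collects the edge costs that appear in Example 5.26.

```cpp
#include <algorithm>
#include <cassert>
#include <limits>
#include <vector>

// Cost of a minimum-cost tour that starts and ends at index 0.
// c is n x n, 0-based; index 0 corresponds to vertex 1 of the text.
int tspCost(const std::vector<std::vector<int>>& c) {
    const int n = static_cast<int>(c.size());
    assert(n >= 2 && n <= 20);
    const int INF = std::numeric_limits<int>::max() / 2;
    const int m = n - 1;             // vertices 2..n map to bits 0..m-1
    const int full = (1 << m) - 1;   // bit mask for V - {1}
    // g[S][i]: shortest path from vertex i+1 through all of S to vertex 0,
    // defined only when bit i is not in S.
    std::vector<std::vector<int>> g(1 << m, std::vector<int>(m, INF));
    for (int i = 0; i < m; ++i) g[0][i] = c[i + 1][0];   // g(i, empty) = c_i1
    // Increasing mask order visits every proper subset of S before S itself.
    for (int S = 1; S <= full; ++S) {
        for (int i = 0; i < m; ++i) {
            if (S & (1 << i)) continue;                  // i must lie outside S
            for (int j = 0; j < m; ++j) {
                if (S & (1 << j)) {                      // (5.21): first step i -> j
                    g[S][i] = std::min(g[S][i],
                                       c[i + 1][j + 1] + g[S ^ (1 << j)][j]);
                }
            }
        }
    }
    int best = INF;
    for (int k = 0; k < m; ++k) {                        // (5.20)
        best = std::min(best, c[0][k + 1] + g[full ^ (1 << k)][k]);
    }
    return best;
}
```

With the cost matrix `{{0, 10, 15, 20}, {5, 0, 9, 10}, {6, 13, 0, 12}, {8, 8, 9, 0}}` the function returns 35, matching g(1,{2,3,4}). The table needs O(n 2^n) space, which is usually the practical limit of this method rather than its running time.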