Artificial Intelligence 10. Neural Networks

Understand linear regression and neural networks for predictive modeling, along with gradient descent for optimization and back propagation for computing gradients. Enhance your knowledge of approximating functions with neural networks and of handling overfitting.

Artificial Intelligence 10. Neural Networks
Japan Advanced Institute of Science and Technology (JAIST)
Yoshimasa Tsuruoka

Outline • Regression • Linear regression • Gradient descent • Neural networks • Back propagation • Lecture slides • http://www.jaist.ac.jp/~tsuruoka/lectures/

Linear regression • Input: a vector • Output: a numerical value • Example: predict the level of comfort from temperature and humidity
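As a minimal sketch of the input/output shape described above, a linear regression model maps an input vector to a single number through a weighted sum. The function name `predict`, the convention that `x[0]` is a constant 1.0 acting as a bias input, and the weights are all hypothetical, chosen only for illustration; they are not fitted values.

```python
def predict(w, x):
    """Linear regression output: the weighted sum w . x.

    Hypothetical convention: x[0] == 1.0, so w[0] acts as a bias term.
    """
    return sum(w_i * x_i for w_i, x_i in zip(w, x))


# Hypothetical weights for: comfort = w0 + w1 * temperature + w2 * humidity
w = [10.0, 0.1, -0.05]
comfort = predict(w, [1.0, 25.0, 60.0])  # bias input, 25 degrees, 60 % humidity
print(comfort)
```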

Optimizing the weight vector • Minimize the sum of squared errors
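One common way to write this objective, assuming N training pairs (x_n, t_n), a weight vector w, and a linear prediction w^T x_n (symbols chosen here, not taken from the slides), is shown below together with its gradient:

$$
E(\mathbf{w}) = \frac{1}{2} \sum_{n=1}^{N} \left( t_n - \mathbf{w}^\top \mathbf{x}_n \right)^2,
\qquad
\nabla E(\mathbf{w}) = -\sum_{n=1}^{N} \left( t_n - \mathbf{w}^\top \mathbf{x}_n \right) \mathbf{x}_n
$$

The factor 1/2 only cancels the 2 produced by differentiation; it does not change which w minimizes the error.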

Gradient descent • Move in the direction of the negative gradient
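A minimal sketch of the update rule w ← w − η∇E(w), with an assumed learning rate η (`eta`) and a fixed number of steps. The quadratic objective and the names `gradient_descent` and `grad_f` are hypothetical, picked because the minimum, (1, −2), is known in advance.

```python
def gradient_descent(grad, w, eta=0.1, steps=200):
    """Repeatedly step against the gradient: w <- w - eta * grad(w)."""
    for _ in range(steps):
        g = grad(w)
        w = [w_i - eta * g_i for w_i, g_i in zip(w, g)]
    return w


# Hypothetical objective f(w) = (w0 - 1)^2 + 2 * (w1 + 2)^2, minimized at (1, -2)
def grad_f(w):
    return [2 * (w[0] - 1), 4 * (w[1] + 2)]


w_min = gradient_descent(grad_f, [0.0, 0.0])
print(w_min)
```

With η = 0.1 each step shrinks the distance to the minimum by a constant factor per coordinate (0.8 and 0.6 here); a much larger η would overshoot and diverge.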

Optimizing the weight vector • Squared errors summed over all training samples • Squared error on a particular sample n • Stochastic gradient computed from sample n
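A minimal sketch of stochastic gradient descent for linear regression, assuming the per-sample error E_n = ½(t_n − w·x_n)², whose gradient is −(t_n − w·x_n)x_n, so each step adds η(t_n − w·x_n)x_n to w. The data are synthetic and noise-free (generated from t = 2 + 3x₁ − x₂ with a bias input x₀ = 1), so the learned weights should approach [2, 3, −1]; the learning rate, epoch count, and function name are illustrative choices.

```python
import random


def sgd_linear_regression(data, dim, eta=0.05, epochs=200, seed=0):
    """SGD on E = sum_n E_n with E_n = 1/2 * (t_n - w.x_n)^2.

    The gradient of E_n is -(t_n - w.x_n) * x_n, so each step moves w
    by eta * (t_n - w.x_n) * x_n, visiting samples in a shuffled order.
    """
    rng = random.Random(seed)
    w = [0.0] * dim
    order = list(range(len(data)))
    for _ in range(epochs):
        rng.shuffle(order)
        for n in order:
            x, t = data[n]
            err = t - sum(w_i * x_i for w_i, x_i in zip(w, x))
            w = [w_i + eta * err * x_i for w_i, x_i in zip(w, x)]
    return w


# Synthetic, noise-free data from t = 2 + 3*x1 - x2 (x0 = 1.0 is the bias input)
rng = random.Random(42)
data = []
for _ in range(50):
    x1, x2 = rng.uniform(-1, 1), rng.uniform(-1, 1)
    data.append(([1.0, x1, x2], 2 + 3 * x1 - x2))

w = sgd_linear_regression(data, dim=3)
print(w)
```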

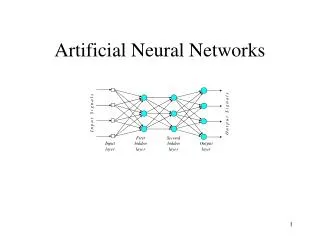

Neural networks • Two-layer neural network [Figure: input units, a hidden layer, and output units; each unit labeled with its input, activation, and output]
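A minimal sketch of the forward pass through such a two-layer network, written in plain Python. The weight layout (`w1[j][i]` from input i to hidden unit j, `w2[k][j]` from hidden unit j to output k), the tanh hidden units, and the linear output units are assumptions for illustration; the slide does not fix these choices.

```python
import math
import random


def forward(x, w1, w2, h=math.tanh):
    """Forward pass of a two-layer network (hypothetical layout).

    x  : list of D inputs (include a constant 1.0 yourself for a bias)
    w1 : M x D first-layer weights, w1[j][i] connects input i to hidden unit j
    w2 : K x M second-layer weights, w2[k][j] connects hidden unit j to output k
    Returns (a, z, y): hidden activations, hidden outputs, network outputs.
    """
    a = [sum(w_ji * x_i for w_ji, x_i in zip(row, x)) for row in w1]
    z = [h(a_j) for a_j in a]  # activation function turns a_j into the unit's output
    y = [sum(w_kj * z_j for w_kj, z_j in zip(row, z)) for row in w2]  # linear outputs
    return a, z, y


random.seed(0)
D, M, K = 3, 4, 1
w1 = [[random.uniform(-0.5, 0.5) for _ in range(D)] for _ in range(M)]
w2 = [[random.uniform(-0.5, 0.5) for _ in range(M)] for _ in range(K)]
a, z, y = forward([1.0, 0.2, -0.3], w1, w2)
print(y)
```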

Activation function • Transforms the activation level of a unit into an output
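The slide does not say which activation function is used; the logistic sigmoid and tanh are two common choices, sketched below together with their derivatives, which back propagation needs. The function names are hypothetical, and the sigmoid is written in a form that avoids overflow for large negative inputs.

```python
import math


def sigmoid(a):
    """Logistic sigmoid 1 / (1 + exp(-a)), arranged to avoid overflow for large |a|."""
    if a >= 0:
        return 1.0 / (1.0 + math.exp(-a))
    e = math.exp(a)
    return e / (1.0 + e)


def sigmoid_prime(a):
    """Derivative of the sigmoid: s(a) * (1 - s(a))."""
    s = sigmoid(a)
    return s * (1.0 - s)


def tanh_prime(a):
    """Derivative of tanh: 1 - tanh(a)^2."""
    return 1.0 - math.tanh(a) ** 2


for a in (-2.0, 0.0, 2.0):
    print(a, sigmoid(a), math.tanh(a), sigmoid_prime(a), tanh_prime(a))
```

Both derivatives can be computed from the unit's output alone, which is convenient when the outputs are already stored from the forward pass.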

Optimizing the weight vector • Error with respect to a particular sample n • Gradient for the first layer and for the second layer
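One standard way to write this, assuming inputs x_i, first-layer weights w^(1)_ji, hidden activations a_j, hidden outputs z_j = h(a_j), second-layer weights w^(2)_kj, linear outputs y_k, and targets t_k for sample n (notation chosen here, not taken from the slides):

$$
a_j = \sum_i w^{(1)}_{ji} x_i, \qquad z_j = h(a_j), \qquad y_k = \sum_j w^{(2)}_{kj} z_j,
\qquad
E_n = \frac{1}{2} \sum_k \left( y_k - t_k \right)^2
$$

The two quantities to compute are then the second-layer gradient and the first-layer gradient:

$$
\frac{\partial E_n}{\partial w^{(2)}_{kj}}, \qquad \frac{\partial E_n}{\partial w^{(1)}_{ji}}
$$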

Gradient • Second layer • Error
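Assuming hidden outputs z_j, linear outputs y_k = Σ_j w^(2)_kj z_j, and E_n = ½ Σ_k (y_k − t_k)² (notation chosen here), a weight w^(2)_kj affects E_n only through y_k, so the chain rule gives the second-layer gradient, where δ_k is the error of output unit k:

$$
\frac{\partial E_n}{\partial w^{(2)}_{kj}}
= \frac{\partial E_n}{\partial y_k} \, \frac{\partial y_k}{\partial w^{(2)}_{kj}}
= (y_k - t_k)\, z_j
= \delta_k z_j,
\qquad \delta_k \equiv y_k - t_k
$$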

Gradient • First layer
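Under the same assumed notation (a_j = Σ_i w^(1)_ji x_i, z_j = h(a_j), y_k = Σ_j w^(2)_kj z_j, and output errors δ_k = y_k − t_k), a first-layer weight w^(1)_ji affects E_n through a_j, then z_j, then every output y_k, so the chain rule sums over k:

$$
\frac{\partial E_n}{\partial w^{(1)}_{ji}}
= \sum_k \frac{\partial E_n}{\partial y_k} \, \frac{\partial y_k}{\partial z_j} \, \frac{\partial z_j}{\partial a_j} \, \frac{\partial a_j}{\partial w^{(1)}_{ji}}
= \sum_k \delta_k \, w^{(2)}_{kj} \, h'(a_j) \, x_i
= \delta_j x_i,
\qquad \delta_j \equiv h'(a_j) \sum_k w^{(2)}_{kj} \delta_k
$$

The first-layer error δ_j is thus a weighted sum of the output errors, passed backward through the second-layer weights and scaled by h'(a_j).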

Gradient • In summary • Error in the first layer
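A minimal sketch of these gradient formulas for a single sample, assuming tanh hidden units (so h'(a) = 1 − tanh(a)²), linear outputs, and E_n = ½ Σ_k (y_k − t_k)². The names `forward`, `error`, `backprop`, and `numerical_grad` are hypothetical. The finite-difference comparison at the end is a common way to check a back propagation implementation.

```python
import math
import random


def forward(x, w1, w2):
    a = [sum(w * x_i for w, x_i in zip(row, x)) for row in w1]
    z = [math.tanh(a_j) for a_j in a]
    y = [sum(w * z_j for w, z_j in zip(row, z)) for row in w2]
    return a, z, y


def error(x, t, w1, w2):
    """Squared error on one sample: E_n = 1/2 * sum_k (y_k - t_k)^2."""
    _, _, y = forward(x, w1, w2)
    return 0.5 * sum((y_k - t_k) ** 2 for y_k, t_k in zip(y, t))


def backprop(x, t, w1, w2):
    """Gradients of E_n with respect to w1 and w2."""
    a, z, y = forward(x, w1, w2)
    delta_out = [y_k - t_k for y_k, t_k in zip(y, t)]  # delta_k = y_k - t_k
    g2 = [[d_k * z_j for z_j in z] for d_k in delta_out]  # dE/dw2[k][j] = delta_k * z_j
    # delta_j = h'(a_j) * sum_k w2[k][j] * delta_k, with h'(a) = 1 - tanh(a)^2
    delta_hid = [
        (1.0 - math.tanh(a_j) ** 2) * sum(w2[k][j] * delta_out[k] for k in range(len(w2)))
        for j, a_j in enumerate(a)
    ]
    g1 = [[d_j * x_i for x_i in x] for d_j in delta_hid]  # dE/dw1[j][i] = delta_j * x_i
    return g1, g2


def numerical_grad(x, t, w1, w2, eps=1e-6):
    """Central finite differences of E_n, used only to check backprop."""
    def grad_of(w):
        g = []
        for row in w:  # rows are modified in place and restored afterwards
            g_row = []
            for i in range(len(row)):
                old = row[i]
                row[i] = old + eps
                e_plus = error(x, t, w1, w2)
                row[i] = old - eps
                e_minus = error(x, t, w1, w2)
                row[i] = old
                g_row.append((e_plus - e_minus) / (2 * eps))
            g.append(g_row)
        return g
    return grad_of(w1), grad_of(w2)


random.seed(1)
w1 = [[random.uniform(-1, 1) for _ in range(3)] for _ in range(4)]
w2 = [[random.uniform(-1, 1) for _ in range(4)] for _ in range(2)]
x, t = [1.0, 0.5, -0.2], [0.3, -0.1]
g1, g2 = backprop(x, t, w1, w2)
n1, n2 = numerical_grad(x, t, w1, w2)
max_diff = max(
    abs(p - q)
    for G, N in ((g1, n1), (g2, n2))
    for g_row, n_row in zip(G, N)
    for p, q in zip(g_row, n_row)
)
print(max_diff)
```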

Back propagation • Backward propagation of errors • The same technique can be applied to neural networks with more than one layer of hidden units
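A minimal sketch of back propagation generalized to any number of hidden layers, trained by stochastic gradient descent. The assumptions: tanh hidden units, linear outputs, a bias implemented as an extra constant 1.0 input to every layer, XOR as a toy dataset, and a 2-4-4-1 layer layout with an illustrative learning rate. The helper names are hypothetical. At each layer the errors are passed back through that layer's weights and scaled by the derivative of the hidden units, exactly as in the two-layer case.

```python
import math
import random


def init_weights(sizes, seed=0):
    """Hypothetical helper: weights[l][j][i] connects unit i of layer l to unit j of layer l+1.

    Each weight row has one extra entry for a bias, fed by a constant 1.0 input.
    """
    rng = random.Random(seed)
    return [
        [[rng.uniform(-0.5, 0.5) for _ in range(n_in + 1)] for _ in range(n_out)]
        for n_in, n_out in zip(sizes[:-1], sizes[1:])
    ]


def forward(x, weights):
    """Return the outputs of every layer; hidden layers use tanh, the last layer is linear."""
    outs = [list(x)]
    for l, W in enumerate(weights):
        inp = outs[-1] + [1.0]  # append the bias input
        a = [sum(w * v for w, v in zip(row, inp)) for row in W]
        is_output = l == len(weights) - 1
        outs.append(a if is_output else [math.tanh(a_j) for a_j in a])
    return outs


def backprop(x, t, weights):
    """Gradients of E_n = 1/2 * sum_k (y_k - t_k)^2 for every weight matrix."""
    outs = forward(x, weights)
    delta = [y_k - t_k for y_k, t_k in zip(outs[-1], t)]  # errors of the linear output units
    grads = [None] * len(weights)
    for l in range(len(weights) - 1, -1, -1):
        inp = outs[l] + [1.0]
        grads[l] = [[d * v for v in inp] for d in delta]  # dE/dw = delta * input
        if l > 0:
            # Pass the errors back through weights[l] (bias column skipped),
            # scaled by tanh'(a) = 1 - z^2 of the hidden units in layer l.
            W = weights[l]
            delta = [
                (1.0 - outs[l][i] ** 2) * sum(W[j][i] * delta[j] for j in range(len(W)))
                for i in range(len(outs[l]))
            ]
    return grads


def total_error(data, weights):
    return sum(
        0.5 * sum((y - t) ** 2 for y, t in zip(forward(x, weights)[-1], target))
        for x, target in data
    )


# XOR as a toy problem; two hidden layers of 4 units each
data = [([0.0, 0.0], [0.0]), ([0.0, 1.0], [1.0]), ([1.0, 0.0], [1.0]), ([1.0, 1.0], [0.0])]
weights = init_weights([2, 4, 4, 1])
before = total_error(data, weights)

rng = random.Random(0)
eta = 0.05  # learning rate (illustrative)
for epoch in range(2000):
    rng.shuffle(data)
    for x, t in data:  # stochastic gradient descent, one sample at a time
        for W, G in zip(weights, backprop(x, t, weights)):
            for row, g_row in zip(W, G):
                for i, g in enumerate(g_row):
                    row[i] -= eta * g

after = total_error(data, weights)
print(before, after)
```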

Neural networks • Can approximate arbitrary functions, given enough hidden units • Prone to overfitting • The error function is not convex • Gradient descent is only guaranteed to reach a local minimum
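A small illustration of why a non-convex error function matters: the sketch runs plain gradient descent on a hypothetical one-dimensional function, f(w) = w⁴ − 3w² + w, standing in for a network's error surface. Started from two different points, the same procedure settles in two different minima, and only one of them is the global minimum. The function, starting points, and learning rate are illustrative choices.

```python
def f(w):
    """Hypothetical non-convex 'error function' with two local minima."""
    return w ** 4 - 3 * w ** 2 + w


def df(w):
    return 4 * w ** 3 - 6 * w + 1


def gradient_descent(w, eta=0.01, steps=1000):
    for _ in range(steps):
        w -= eta * df(w)
    return w


left = gradient_descent(-2.0)
right = gradient_descent(2.0)
print(left, f(left))
print(right, f(right))
```

The run started at −2 ends near w ≈ −1.30, and the run started at 2 ends near w ≈ 1.13, where f is clearly higher; neither run can tell from local gradient information alone that a better minimum exists elsewhere.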

Questionnaires • Lecture code: I2152