ATLAS Tier-0 exercise

ATLAS Tier-0 exercise Export report

Outline • Goals • Setup • Ramp-up • Observations • CASTOR stager limits • dCache problems • Conclusions • Future plans

Goals • Nominal export to all Tier-1 sites • First to each site individually • Then collectively to all Tier-1s • Gradual involvement of Tier-2s • Rates (in MB/s): • Total of 997 MB/s out of CERN • These are the daily peak rates we want to achieve. • Average rates are about 40% lower (an ATLAS Computing Model day has 50k seconds)
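The relation between peak and average rates can be checked with a few lines of arithmetic. This is only a sketch: it assumes the 50k seconds are seconds of data flow within an 86,400-second wall-clock day, which is our reading of the slide, and the function name is illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 wall-clock seconds


def average_rate(peak_mb_s: float, active_seconds: float = 50_000) -> float:
    """Average rate over a full day if data flows at the peak rate
    for active_seconds only (assumption, see the lead-in)."""
    return peak_mb_s * active_seconds / SECONDS_PER_DAY


peak = 997.0  # MB/s, total out of CERN (from the slide)
avg = average_rate(peak)
reduction = 1 - avg / peak
print(f"average = {avg:.0f} MB/s, {reduction:.0%} below peak")
# prints: average = 577 MB/s, 42% below peak
```

Under this assumption the reduction is about 42%, in line with the rounded 40% quoted on the slide.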

Goals • Use DQ2 0.3 • Use new ARDA Dashboard monitoring functionality • Exercise central VO BOXes • New LFC bulk GUID lookup method

Setup • New LFC server version @ CERN • (1.6.3) • ORACLE-based DQ2 0.3 central catalogs • 2 load-balanced front-ends • Central DQ2 0.3 site services • Contacting FTS servers and LFCs, handling bookkeeping and file transfers • Managed machines, lemon monitoring, RPM auto-updates, …

Setup • Tier-0 plug-in updated for DQ2 0.3 • Improved exception handling • File sizes, checksums recorded centrally on DQ2 catalogs (previously only on LFC) • Separate ARDA dashboard instance for Tier-0 tests • Will be kept later as a DQ2 testbed

Setup • ATLAS daily operations morning meeting • Involving all developers • ARDA • DDM • Tier-0 • .. and others whenever necessary

Ramp-up (summary) • 1st week: • finalizing setup, SLC3/4/python versions • FZK, LYON, BNL • End of 1st week (weekend): • SARA, CNAF • 2nd week: • PIC, TRIUMF, ASGC • 3rd week: • NDGF • Missing: • RAL - was upgrading to CASTOR 2

Observations • A single observation at first… • … unstable CASTOR • GridFTP errors dominating the export • Similar errors (rfcp timeouts) when importing data to CASTOR • Failure rate as high as 95% reading from CASTOR during ‘near collapse’ periods • .. and constant multiple transfer attempts per file • Did spot T1 failures (e.g. unstable SRM/storages) but these were at the noise level • hard to draw meaningful conclusions about T1 stability from this run • … then went to the details
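To get a feeling for what a 95% failure rate means in terms of repeated attempts, here is a minimal sketch. It assumes each attempt fails independently with the same probability (a geometric model), which is a simplification and not something measured in the exercise; the function name is illustrative.

```python
def expected_attempts(failure_rate: float) -> float:
    """Mean number of attempts until the first success, assuming
    independent attempts with a constant failure probability."""
    if not 0 <= failure_rate < 1:
        raise ValueError("failure_rate must be in [0, 1)")
    return 1 / (1 - failure_rate)


# Failure rate quoted for the 'near collapse' periods.
print(round(expected_attempts(0.95)))  # about 20 attempts per file
```

Under this simple model, a 95% failure rate corresponds to roughly 20 attempts per successfully transferred file.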

CASTOR stager limits • The CASTOR architecture is highly dependent on a database (ORACLE) and a scheduling system (LSF) • The LSF-plugin - the part that “connects” CASTOR with its LSF backend - had a well-known limitation • Could cope with ~1000 PENDing jobs in the queue (configured limit to avoid a complete stop of the scheduler) • As long as the scheduler is capable of dispatching the jobs to the diskservers there could be many thousands of parallel jobs… • The Tier-0 internal processing puts a load of ~100 jobs into CASTOR • The Tier-0->Tier-1 export puts a load of ~300 to 600 jobs into CASTOR • depending on rate, sites being served etc • So, not much left for the rest: • Simulation production, Tier-1->Tier-0, Tier-2->Tier-0, analysis @ CERNCAF • as soon as other activities started, CASTOR died
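The "not much left for the rest" argument is simple bookkeeping, sketched below. It uses the approximate numbers from the slide and assumes, as a simplification, that every load counts directly against the ~1000-job limit; the names are illustrative.

```python
PENDING_LIMIT = 1000       # approximate LSF-plugin limit quoted on the slide
TIER0_INTERNAL = 100       # approximate load from Tier-0 internal processing
EXPORT_RANGE = (300, 600)  # approximate load from the Tier-0 -> Tier-1 export


def headroom(limit: int, *loads: int) -> int:
    """Jobs left for all other activities before the limit is reached."""
    return limit - sum(loads)


for export in EXPORT_RANGE:
    left = headroom(PENDING_LIMIT, TIER0_INTERNAL, export)
    print(f"export load {export}: {left} jobs left for everything else")
# prints 600 and 300 jobs left, respectively
```

With only a few hundred jobs of headroom shared by simulation production, imports and analysis, short bursts from any one of them are enough to hit the limit.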

CASTOR stager limits • ATLAS has centrally organized its CASTOR usage into 4 groups: atlas001 atlas002

CASTOR stager limits • Shown are ‘atlas001’ requests to CASTOR • (will show later atlas002, atlast0) • … under load, requests stopped being served or were served very slowly • (plot annotations: CASTOR overloaded; this T0 exercise didn’t yet have the dedicated ‘atlast0’ account)

CASTOR stager limits • … same period for export (atlas002) and internal Tier-0 (atlast0) • correlation found between simulation production activities, internal Tier-0 processing and Tier-0 export • Tier-0 affected by other users’ activities • Particularly BAD since CASTOR would be serving the most aggressive user (and Tier-0 is the most carefully throttled user!) • (plot annotations: jobs pending, long timeouts; plots shown for atlas002 and atlast0)

CASTOR limits • Simultaneous activities of various users caused spikes and exceeded the 1K limit on CASTOR-LSF • which then led to slow serving of requests • … rfcp’s hanging for > 1 hour (same for GridFTP transfers) • But the stager was not the only problem with CASTOR • files ending up in invalid states (very few) • backend database problems • ORACLE DB appeared to be swapping and under heavy load • Database H/W was upgraded (DB server memory) and the situation has since improved

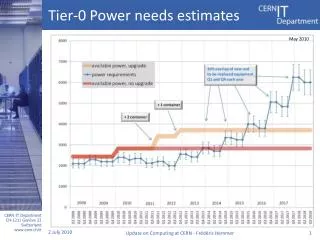

Overview of export to Tier-1s • Transfer requests not served and overall many timeouts • 3rd week of exercise, 4-hour period

Useful error categorization CASTOR problems

… more error categorization a sample of non-T0 errors

file transfer history … keeping track of full file transfer history (example above: 3 DQ2 transfer attempts, no internal FTS retries, CASTOR timeouts)

7-day period in March • Throughput measurement also includes CASTOR downtime periods (several hours)

New CASTOR stager • Agreed with IT to fix CASTOR with maximum urgency • task force set up and led by Bernd • the existing CASTOR had reached its limits and was no longer sufficient for our needs! • decided to set up a separate stager initially, running a new CASTOR version • to keep our normal production activities going as before while we test the new one • New stager introduces a rewritten LSF-plugin that should cope with a significantly higher number of jobs in the queue • Will also enable more advanced scheduling as originally planned in the CASTOR design

While waiting for new CASTOR stager… • … decided to use the new ARDA data management monitoring to understand all transfer errors • First lowered the rate for processing at CERN and reduced export to BNL and LYON only • so as not to reach CASTOR limits • Then went on to analyze all error messages as well as throughput: • (next slides)

Sorting out the streams… • FTS sets the number of “file streams” • those are the number of parallel file transfers • but unfortunately, these are not necessarily all active all the time • because the SRM handling is included in the FTS transfer “slot” • (diagram: within each slot, every file transfer is the sequence SRM, GridFTP, SRM; no network usage during the SRM steps… :-( )
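The effect of SRM handling sharing the transfer slot can be illustrated with a small model. This is a sketch with hypothetical timings, not measured values: each slot is assumed to cycle through an SRM step, the GridFTP transfer, and a closing SRM step, and only the GridFTP part uses the network.

```python
def network_fraction(srm_before_s: float, gridftp_s: float, srm_after_s: float) -> float:
    """Fraction of a slot's time spent actually moving data."""
    total = srm_before_s + gridftp_s + srm_after_s
    return gridftp_s / total


def effective_streams(file_streams: int, fraction: float) -> float:
    """Average number of slots doing GridFTP at any given moment."""
    return file_streams * fraction


# Hypothetical timings in seconds, chosen only for illustration.
frac = network_fraction(srm_before_s=20, gridftp_s=60, srm_after_s=20)
print(f"{frac:.0%} of each slot uses the network")
print(f"{effective_streams(10, frac):.1f} of 10 file streams active on average")
```

In this model the configured number of file streams overstates the real parallelism, and the gap grows as files get smaller relative to the SRM overhead.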

Sorting out the streams… • GridFTP sets the number of TCP streams • That’s TCP streams per file transfer • Current numbers for TCP streams and FTS file streams are a result of the Service Challenge tests • a few things have changed since, though: e.g. ATLAS file sizes • ATLAS is also running 1st pass processing at the Tier-0! • An important point is that there is no established activity to monitor the state of the network • … particularly without all the “overhead” • from storages, SRM, FTS, … • so we do not really know if our GridFTP timeouts are actually due to CASTOR, dCache or the network (or all combined!) • Therefore, we have now started a parallel activity doing pure GridFTP transfers to BNL • From diskserver to diskserver, no CASTOR or dCache, to try and understand TCP streams and network first • then parallel file transfers • and double check if we see the same GridFTP errors as for the export

Sorting out the errors… • Also did detailed analysis of all error messages • Important CASTOR errors: • 451 Local resource failure: malloc: Cannot allocate memory: not actually a CASTOR error but due to GridView taking up all memory on the diskserver!! • Important GridFTP errors: • 421 Timeout (900 seconds): closing control connection.: the only dominant error not understood so far • Happens all the time whether the system is under load or not • Operation was aborted (the GridFTP transfer timed out).: appears to happen when either CASTOR or the destination storage is overloaded, or as a consequence of other errors (e.g. “an end-of-file was reached”) • Important dCache errors: • an end-of-file was reached: appears to happen when the file system on a diskserver is full (why doesn’t PtP fail??) • We do have many other errors but at a low rate (1/10000), and they disappear on retry
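The mapping from error message to likely cause described on this slide could be captured in a small lookup, sketched below. The table and function names are illustrative, the matching is plain substring search, and the causes are the tentative ones given above, not confirmed diagnoses.

```python
# Substring of the error message -> likely cause as given on the slide.
# The first matching entry wins.
KNOWN_ERRORS = [
    ("451 Local resource failure: malloc",
     "diskserver out of memory (GridView), not CASTOR itself"),
    ("421 Timeout (900 seconds)",
     "not understood so far; seen with and without load"),
    ("the GridFTP transfer timed out",
     "CASTOR or destination storage overloaded, or follow-on of another error"),
    ("an end-of-file was reached",
     "file system on a diskserver appears to be full"),
]


def likely_cause(message: str) -> str:
    """Return the tentative cause for a transfer error message."""
    for needle, cause in KNOWN_ERRORS:
        if needle in message:
            return cause
    return "unclassified"


print(likely_cause("[GRIDFTP] an end-of-file was reached"))
```

Anything that falls through to "unclassified" is a candidate for the low-rate errors that disappear on retry, or for a new entry in the table.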

dCache problems • One occurrence of a dCache storage full at LYON • expect “graceful” handling from dCache • not quite… • Illegible, I know, but the point is: a single cause - storage full - originated over 11k errors of ~20 types! (we were expecting PtP fail, storage full)

State from FTS: Failed; Retries: 1; Reason: SOURCE error during PREPARATION phase: [PERMISSION] [SrmPing] failed: SOAP-ENV:Client - CGSI-gSOAP: GSS Major Status: General failure GSS Minor Status Error Chain: acquire_cred.c:125: gss_acquire_cred: Error with GSI credential globus_i_gsi_gss_utils.c:1323: globus_i_gsi_gss_cred_read: Error with gss credential handle globus_i_gsi_gss_utils.c:1532: globus_i_gsi_gss_create_cred: Error with gss credential handle globus_i_gsi_gss_utils.c:2103: globus_i_gsi_gssapi_init_ssl_context: Error with GSI credential globus_gsi_system_config.c:3475: globus_gsi_sysconfig_get_cert_dir_unix: Could not find a valid trusted CA certificates directory globus_gsi_system_config.c:2996: globus_i_gsi_sysconfig_get_home_dir_unix: Error getting password entry for current user: Error occured for uid: 17680
2 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 451 451 rfio read failure: Connection reset by peer.
2 State from FTS: Failed; Retries: 1; Reason: DESTINATION error during PREPARATION phase: [PERMISSION] [SrmPing] failed: SOAP-ENV:Client - CGSI-gSOAP: GSS Major Status: General failure GSS Minor Status Error Chain: acquire_cred.c:125: gss_acquire_cred: Error with GSI credential globus_i_gsi_gss_utils.c:1323: globus_i_gsi_gss_cred_read: Error with gss credential handle globus_i_gsi_gss_utils.c:1532: globus_i_gsi_gss_create_cred: Error with gss credential handle globus_i_gsi_gss_utils.c:2103: globus_i_gsi_gssapi_init_ssl_context: Error with GSI credential globus_gsi_system_config.c:3475: globus_gsi_sysconfig_get_cert_dir_unix: Could not find a valid trusted CA certificates directory globus_gsi_system_config.c:2996: globus_i_gsi_sysconfig_get_home_dir_unix: Error getting password entry for current user: Error occured for uid: 17680
1 State from FTS: Failed; Retries: 1; Reason: SOURCE error during PREPARATION phase: [PERMISSION] [SrmGet] failed: SOAP-ENV:Client - CGSI-gSOAP: GSS Major Status: General failure GSS Minor Status Error Chain: acquire_cred.c:125: gss_acquire_cred: Error with GSI credential globus_i_gsi_gss_utils.c:1323: globus_i_gsi_gss_cred_read: Error with gss credential handle globus_i_gsi_gss_utils.c:1532: globus_i_gsi_gss_create_cred: Error with gss credential handle globus_i_gsi_gss_utils.c:2103: globus_i_gsi_gssapi_init_ssl_context: Error with GSI credential globus_gsi_system_config.c:3475: globus_gsi_sysconfig_get_cert_dir_unix: Could not find a valid trusted CA certificates directory globus_gsi_system_config.c:2996: globus_i_gsi_sysconfig_get_home_dir_unix: Error getting password entry for current user: Error occured for uid: 17680
1 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] an end-of-file was reached
8215 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 425 425 Cannot open port: java.lang.Exception: Pool manager error: Best pool <pool-disk-sc3-12> too high : 2.0E8
4585 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 425 425 Cannot open port: java.lang.Exception: Pool manager error: Best pool <pool-disk-sc3-6> too high : 2.0E8
1677 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] a system call failed (Connection refused)
126 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 421 421 Timeout (900 seconds): closing control connection.
123 State from FTS: Failed; Retries: 1; Reason: SOURCE error during PREPARATION phase: [GENERAL_FAILURE] CastorStagerInterface.c:2145 Internal error (errno=0, serrno=1015)
69 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 451 451 Local resource failure: malloc: Cannot allocate memory.
46 State from FTS: Failed; Retries: 1; Reason: DESTINATION error during PREPARATION phase: [REQUEST_TIMEOUT] failed to prepare Destination file in 180 seconds
28 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 425 425 Cannot open port: java.lang.Exception: Pool manager error: Best pool <pool-disk-sc3-6> too high : 2.000000000357143E8
17 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 426 426 Data connection. data_write() failed: Handle not in the proper state
11 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] globus_l_ftp_control_read_cb: Error while searching for end of reply
9 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 553 553 /pnfs/in2p3.fr/data/atlas/disk/sc4/multi_vo_tests/sc4tier0/04/05/T0.E.run008462.ESD._lumi0030._0001__DQ2-1175760332: Cannot create file: CacheException(rc=10006;msg=Pnfs request timed out)
3 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 553 553 /pnfs/in2p3.fr/data/atlas/disk/sc4/multi_vo_tests/sc4tier0/04/05/T0.A.run008462.AOD.AOD02._0001__DQ2-1175760207: Cannot create file: CacheException(rc=10006;msg=Pnfs request timed out)
1 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 550 550 /castor/cern.ch/grid/atlas/t0/perm/T0.B.run008456.AOD.AOD04/T0.B.run008456.AOD.AOD04._0001: Address already in use.
1 State from FTS: Failed; Retries: 1; Reason: SOURCE error during PREPARATION phase: [PERMISSION] [SrmGetRequestStatus] failed: SOAP-ENV:Client - CGSI-gSOAP: GSS Major Status: General failure GSS Minor Status Error Chain: acquire_cred.c:125: gss_acquire_cred: Error with GSI credential globus_i_gsi_gss_utils.c:1323: globus_i_gsi_gss_cred_read: Error with gss credential handle globus_i_gsi_gss_utils.c:1532: globus_i_gsi_gss_create_cred: Error with gss credential handle globus_i_gsi_gss_utils.c:2103: globus_i_gsi_gssapi_init_ssl_context: Error with GSI credential globus_gsi_system_config.c:3475: globus_gsi_sysconfig_get_cert_dir_unix: Could not find a valid trusted CA certificates directory globus_gsi_system_config.c:2996: globus_i_gsi_sysconfig_get_home_dir_unix: Error getting password entry for current user: Error occured for uid: 17680
1 State from FTS: Failed; Retries: 1; Reason: SOURCE error during PREPARATION phase: [PERMISSION] [SetFileStatus] failed: SOAP-ENV:Client - CGSI-gSOAP: GSS Major Status: General failure GSS Minor Status Error Chain:(null)
1 State from FTS: Failed; Retries: 1; Reason: TRANSFER error during TRANSFER phase: [GRIDFTP] the server sent an error response: 451 451 rfio read failure: Connection closed by remote end.
1 State from FTS: Failed; Retries: 1; Reason: No status updates received since more than [360] seconds. Probably the process serving the transfer is stuck

dCache problems • We found transfer problems to BNL on another occasion • After investigation by BNL (explanation by M. Ernst): “a full filesystem has lead to the "end-of-file reached" error at BNL, we meant to say that this condition arose on the gridftp server node (the log filled the filesystem because of a high log level configured on this particular node). The problem was not caused due to lack of space on the actual storage repositories. So, since this failure occurred due to the fact that log rotation wasn't working properly - which is rarely happening - I would consider a re-occurance as fairly unlikely.” • Such errors put considerably more load onto the Tier-0 storage system • which again affected the overall behaviour…

FTS 2.0 • About 1 week ago started using FTS 2.0 (pilot installation) to serve LYON transfers from the Tier-0 exercise • FTS 2.0 introduces error categorization, e.g.: • [REQUEST_TIMEOUT] • [INVALID_PATH] • [GRIDFTP] • [GENERAL_FAILURE] • [SRM_FAILURE] • [PERMISSION] • [INVALID_SIZE] • We are using the FTS 2.0 server with existing FTS 1.x clients • Smooth transition without any problem so far! • Plan for FTS 2.0: • continue with existing pilot service • then try new FTS 2.0 client • then FTS 2.0 to Production? • FTS 2.0 will be able, in the future, to split SRM from GridFTP handling.. • this is very important to carefully throttle the number of file streams
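The category tags make automatic tallying straightforward. The sketch below extracts the side, phase and category from a failure reason; the regular expression is modelled on the "Reason: … error during … phase: [CATEGORY]" strings seen in the error listing of this exercise and is an assumption, not a documented FTS format. Function names are illustrative.

```python
import re
from collections import Counter

# Pattern modelled on the reason strings observed in this exercise (assumption).
REASON_RE = re.compile(
    r"Reason: (?P<side>SOURCE|DESTINATION|TRANSFER) error "
    r"during (?P<phase>\w+) phase: \[(?P<category>[A-Z_]+)\]"
)


def categorize(reason_line: str):
    """Return (side, phase, category), or None if the line has no tag."""
    m = REASON_RE.search(reason_line)
    if m is None:
        return None
    return m.group("side"), m.group("phase"), m.group("category")


def tally(lines):
    """Count failure lines per category; untagged lines are grouped together."""
    counts = Counter()
    for line in lines:
        parsed = categorize(line)
        counts[parsed[2] if parsed else "UNCATEGORIZED"] += 1
    return counts


sample = [
    "State from FTS: Failed; Retries: 1; Reason: TRANSFER error during "
    "TRANSFER phase: [GRIDFTP] an end-of-file was reached",
    "State from FTS: Failed; Retries: 1; Reason: DESTINATION error during "
    "PREPARATION phase: [REQUEST_TIMEOUT] failed to prepare Destination file in 180 seconds",
    "State from FTS: Failed; Retries: 1; Reason: No status updates received "
    "since more than [360] seconds.",
]
print(tally(sample))
```

Keeping the side and phase alongside the category helps separate source (CASTOR) problems from destination problems when summarizing.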

Conclusions (so far…) • Detailed analysis of transfer errors, including automatic categorization provided by our monitoring, is very useful! • FTS 2.0 clearly an improvement here • At some point have to assume errors will always exist: • e.g. when everything goes “smooth” we see the error rate varying between 5% and 15%… • Still figuring out what to do with bad error reporting: • e.g. storage full, how to cope with it? • Interference between sources/destinations is worrying: • a bad file system in one of the BNL diskservers blocked a few transfer slots onto CASTOR@CERN • and we have so “few” slots with current CASTOR :( • Diverting traffic away from Tier-0? • e.g. our AOD goes to all Tier-1s but should it all come from CERN?

Conclusions (so far…) • Half-way through our tests • Tests a success since we found a critical CASTOR limitation early • … for which there is a very good prospect of being solved quickly! • Nonetheless, the ATLAS testing schedule is essentially ~1 month late • … we should be testing T1->T2s by now! • Big thank you to everyone in IT and at the Tier-1s for their support!

Future plans • Fix CASTOR • new stager almost ready for ATLAS • Re-run exercise using new stager • Tier-0 -> Tier-1s • Then Tier-1 -> Tier-2s • More monitoring summaries • a huge amount of information is now available centrally and we need to summarize it … • DQ2 developments now considering: • Further split between data taking, simulation production and end-user activities • Improvements on data re-routing

More information • https://twiki.cern.ch/twiki/bin/view/Atlas/TierZero20071 • http://dashb-atlas-data-test.cern.ch/dashboard/request.py/site • http://atlas.web.cern.ch/Atlas/GROUPS/SOFTWARE/DC/Tier0/monitoring/short.html