Collaborative work beneath the surface

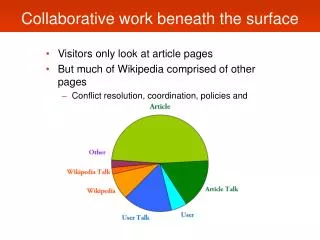

Presentation Transcript

Collaborative work beneath the surface • Visitors only look at article pages • But much of Wikipedia is made up of other pages • Conflict resolution, coordination, policies and procedures

Types of work • Direct work (article pages): immediately consumable • Indirect work (talk, user, procedure pages): coordination, conflict • Maintenance work: reverts, vandalism

Less direct work • Decrease in proportion of edits to article page 70%

More indirect work • Increase in proportion of edits to user talk 8%

More indirect work • Increase in proportion of edits to user talk • Increase in proportion of edits to procedure 11%

More maintenance work • Increase in proportion of edits that are reverts 7%

More wasted work • Increase in proportion of edits that are reverts • Increase in proportion of edits reverting vandalism 1-2%

Global level • Coordination costs are growing • Less direct work (articles) • More indirect work (article talk, user, procedure) • More maintenance work (reverts, vandalism) • Kittur, Suh, Pendleton, & Chi, 2007
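The proportions on the preceding slides can be computed from an edit log roughly as follows. This is a minimal sketch, not the authors' pipeline: the function name `edit_proportions`, the `(page_type, is_revert)` record shape, and the sample log are all assumptions made for illustration.

```python
from collections import Counter

def edit_proportions(edits):
    """Return (share of edits per page type, share of edits that are reverts).

    `edits` is a list of (page_type, is_revert) tuples; this record
    shape is a hypothetical one chosen for the sketch.
    """
    total = len(edits)
    if total == 0:
        return {}, 0.0
    by_type = Counter(page_type for page_type, _ in edits)
    shares = {page_type: count / total for page_type, count in by_type.items()}
    revert_share = sum(1 for _, is_revert in edits if is_revert) / total
    return shares, revert_share

# Hypothetical toy log: 8 article edits (one a revert), 1 user talk, 1 procedure.
sample = ([("article", False)] * 7 + [("article", True)]
          + [("user talk", False), ("procedure", False)])
shares, revert_share = edit_proportions(sample)
```

Running this per time window (for example, per month) would give the kind of trend lines the slides describe.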

Article lifespan • How do articles change over time? • High discussion and coordination • Kittur et al., 2007; Viegas et al., 2007 • When does this happen? • Hyp 1: Early when articles are growing • Hyp 2: Late when articles are more stable

User lifespan • How do users change over time?

Centralization in Wikipedia • How much centralization? • “Gang of 500” (Jimmy Wales, 2004) • Small group of ~500 does half the work • Masses do the work (Aaron Swartz, 2006) • New users add most of the words

Hypotheses • Masses dominate • Elite privileged group • Shift from elites to masses • Technology adoption (Rogers, 1962) • (Figure labels: Masses, Elites, Shift)

Elites • Admins • Editing status (fixed-size) • Editing status (scaling)

Admins • Waxing and waning of admin influence (figure: proportion of all edits) • Nature News, 2/2007; Kittur, Chi, Pendleton, Suh, Mytkowicz, 2007

Admins • Similar for changed words (figure: proportion of words changed)

Elites • Admins • Editing status (fixed-size) • Editing status (scaling)

Editing status (scaling) • Proportional influence of elites still high • Though absolute number of elites growing

Summary: Centralization • Centralized elite influence is waning • Decline in admin influence • Decline in data-driven “Gang of 500” • Decentralized proportional influence remains high • Top 1/3/5% of users account for ~50/70/80% of edits • The “Bourgeoisie”
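A "top N% of users account for X% of edits" figure can be computed from per-user edit counts along these lines. This is a minimal sketch under assumptions: the function name `top_share` and the toy counts are hypothetical and are not the study's data.

```python
import math

def top_share(edit_counts, top_percent):
    """Fraction of all edits made by the most active `top_percent` percent of users."""
    counts = sorted(edit_counts, reverse=True)
    total = sum(counts)
    if not counts or total == 0:
        return 0.0
    # Round the group size up so that the "top" group always has at least one user.
    k = max(1, math.ceil(len(counts) * top_percent / 100))
    return sum(counts[:k]) / total

# Hypothetical toy data: 100 users, three of them very active.
counts = [40, 30, 10] + [1] * 97
share_top1 = top_share(counts, 1)
share_top3 = top_share(counts, 3)
```

Because the group is defined as a percentage of users, it grows with the user base, which is the "scaling" notion of elite status rather than the fixed-size one.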

Challenges for Wikipedia • Coordination costs • Organization structure • Conflict

Conflict at the article level • What leads to conflict in articles? • Build a characterization model of article conflict • Identify page features and metrics associated with conflict • Automatically identify high-conflict articles

Page metrics • Chose metrics for identifying conflict in articles • Easily computable, scalable

Defining conflict • Operational definition for conflict • Revisions tagged controversial • Conflict revision count

Machine learning • Predict conflict from page metrics • Training set of “controversial” pages • Support vector machine regression predicting the number of controversial revisions (SMOreg; Smola & Schölkopf, 1998) • Not just conflict/no conflict, but how much conflict

Performance: Cross-validation • 5-fold cross-validation, R² = 0.897
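For readers unfamiliar with the evaluation, a cross-validated R² can be sketched as below. Note the substitution: a one-feature least-squares line stands in for the support vector regression used in the study, and the fold assignment, function names, and toy data are assumptions made for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def cross_validated_r2(xs, ys, k=5):
    """Pool held-out predictions over k folds, then compute R^2 once."""
    n = len(xs)
    predictions = [0.0] * n
    for fold in range(k):
        # Item i belongs to fold i % k; each fold is held out exactly once.
        train = [i for i in range(n) if i % k != fold]
        held_out = [i for i in range(n) if i % k == fold]
        slope, intercept = fit_line([xs[i] for i in train], [ys[i] for i in train])
        for i in held_out:
            predictions[i] = slope * xs[i] + intercept
    mean_y = sum(ys) / n
    ss_res = sum((y - p) ** 2 for y, p in zip(ys, predictions))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot

# Hypothetical toy data with an exact linear relationship.
toy_x = list(range(20))
toy_y = [3 * x + 2 for x in toy_x]
r2 = cross_validated_r2(toy_x, toy_y, k=5)
```

Each prediction comes from a model that never saw that item during fitting, so the score reflects generalization rather than fit to the training data.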

Determinants of conflict • Highly weighted metrics of conflict model: • ↑ Revisions (talk) • ↑ Minor edits (talk) • ↓ Unique editors (talk) • ↑ Revisions (article) • ↓ Unique editors (article) • ↑ Anonymous edits (talk) • ↓ Anonymous edits (article)

Identifying untagged articles • Detect conflicts for unlabeled articles • Majority of articles have never been conflict tagged • Testing model generalization • Applied model to untagged articles • Sample of 28 articles rated by 13 expert Wikipedians • Significant positive correlation with predicted scores • By rank correlation, p < 0.013 (Spearman’s rho)
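The rank correlation used in this validation step can be sketched in plain Python: rank both lists (averaging tied ranks), then take the Pearson correlation of the ranks. The function names and the toy numbers are assumptions made for illustration. The sketch computes rho only, not the p-value.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2.0 + 1.0
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared_rank
        start = end + 1
    return ranks

def spearman_rho(xs, ys):
    """Spearman's rho: Pearson correlation of the two rank vectors."""
    rx, ry = average_ranks(xs), average_ranks(ys)
    n = len(rx)
    mean_x, mean_y = sum(rx) / n, sum(ry) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one input is constant")
    return cov / (var_x * var_y) ** 0.5

# Hypothetical toy data: model scores vs. mean expert ratings for three articles.
rho = spearman_rho([1, 2, 3], [1, 3, 2])
```

A rank-based measure fits here because the predicted scores and the expert ratings are on different scales and only their ordering needs to agree.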

Conflict at the user level • How can we identify conflict between users? • Reverts between users as a proxy for user conflict • Force directed layout to cluster users • Group similar viewpoints • Find conflicts between groups
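The first step of this approach, turning a revert log into weighted user-to-user conflict edges, might look like the sketch below. The `(reverter, reverted)` record shape, the function name, and the user names are assumptions. The force-directed layout itself is not shown.

```python
from collections import Counter

def revert_edges(reverts):
    """Count reverts between each unordered pair of users.

    `reverts` is an iterable of (reverter, reverted) user-name pairs;
    this record shape is a hypothetical one chosen for the sketch.
    """
    edges = Counter()
    for reverter, reverted in reverts:
        if reverter == reverted:
            continue  # a self-revert says nothing about conflict between users
        edges[tuple(sorted((reverter, reverted)))] += 1
    return edges

# Hypothetical toy log.
log = [("alice", "bob"), ("bob", "alice"), ("alice", "carol"), ("dave", "dave")]
edges = revert_edges(log)
```

The edge weights could then be fed to a force-directed layout in which heavily reverting pairs push apart, so that users with similar viewpoints end up clustered together.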

Dokdo/Takeshima opinion groups (figure: Group A, Group B, Group C, Group D)

Terri Schiavo (figure: user groups) • Anonymous (vandals/spammers) • Sympathetic to husband • Mediators • Sympathetic to parents

Distributed collaboration • Lots of people • Each doing a little bit of work • Leads to high quality outcome (i.e., “wisdom of crowds”) • (Images: Francis Galton, scale, ox)

Distributed collaboration • Applications of distributed collaboration: • Judging: weight of an ox, temperature of a room • Search: Google PageRank • Predicting: Iowa Electronic Market, Las Vegas, HP • Filtering: Digg, Reddit • Organizing: del.icio.us • Common characteristics: • Independent judgments • Independent aggregation

Wikipedia and the wisdom of crowds • But these are not characteristic of Wikipedia: • Independent judgments: instead, high coordination costs (Kittur et al., 2007) • Independent aggregation: instead, competitive aggregation (everyone is editing the same information) • To the extent that judgments and aggregation of individual tasks are not independent and instead require coordination and engender conflict, having more editors may not be beneficial and may even be harmful

Travesty of the commoners? • Increasing size of group generally has negative consequences: • Increased coordination costs • Increased anonymity and social loafing • Decreased attribution and individual reward • More negative social relations • Greater conflict and misbehavior • Loss of control • Cognitive overload • See Bettenhausen, 1991; Levine & Moreland, 1990

Wilkinson & Huberman, 2007 • Examined featured articles vs. non-featured articles • Controlling for PageRank (i.e., popularity) • Featured articles = more edits, more editors • “More work, better outcome”: WP similar to other distributed collaboration systems • Nature News (2/27/07)

Problem: Distribution of work • However, articles can have different distributions of work, even with same edits/editors • If an article has 1000 edits and 100 editors, it could have: • 1 editor making 901 edits, 99 making 1 edit • 100 editors making 10 edits each

Capturing skew • Gini coefficient: measures inequality of a distribution • Measure Gini coefficient for each article • Count how many edits each editor makes, then compute the inequality of those counts • If an article is driven by few, Gini → 1 • If an article is driven by many, Gini → 0 • http://en.wikipedia.org/wiki/Gini_coefficient
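A per-article Gini coefficient can be computed from the list of per-editor edit counts. The sketch below uses the standard sorted-data formula and applies it to the two 1000-edit, 100-editor distributions from the earlier slide. The function name is an assumption, and the slides do not say which Gini variant the authors used.

```python
def gini(edit_counts):
    """Gini coefficient of per-editor edit counts (0 = perfectly even)."""
    counts = sorted(edit_counts)
    n = len(counts)
    total = sum(counts)
    if n == 0 or total == 0:
        return 0.0
    # With x_1 <= ... <= x_n:  G = 2 * sum(i * x_i) / (n * sum(x)) - (n + 1) / n
    weighted = sum(rank * x for rank, x in enumerate(counts, start=1))
    return 2.0 * weighted / (n * total) - (n + 1.0) / n

skewed = [901] + [1] * 99   # one editor makes 901 edits, 99 editors make 1 each
even = [10] * 100           # 100 editors make 10 edits each

gini_skewed = gini(skewed)  # about 0.891
gini_even = gini(even)      # 0.0
```

Both articles have the same number of edits and editors, yet their Gini values differ sharply. This is the distinction that raw edit and editor counts cannot capture.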

New results • Sampled articles at a variety of quality levels • Defined and rated by expert Wikipedians • Hundreds of thousands of articles rated

Cross-sectional analysis • 900 articles sampled from Start through Featured • Higher quality associated with higher Gini and more editors