Simultaneous Multithreading (SMT)

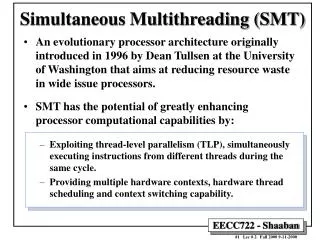

Simultaneous Multithreading (SMT) is an evolutionary processor architecture, originally introduced in 1995 by Dean Tullsen at the University of Washington, that aims to reduce resource waste in wide-issue processors.



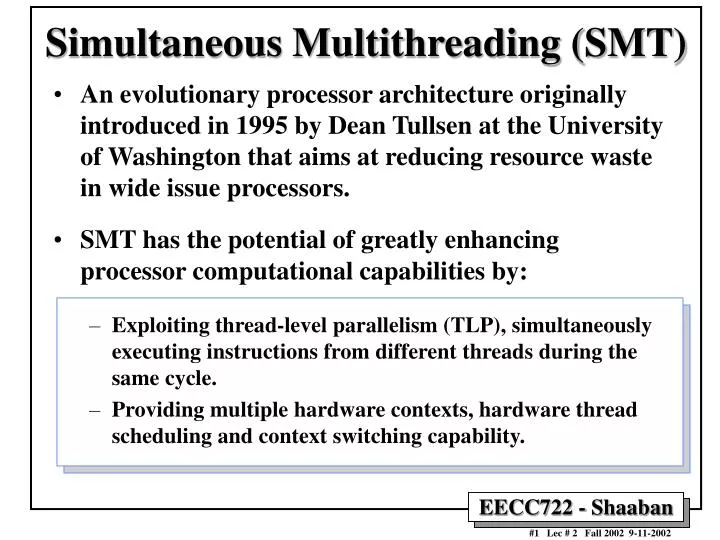

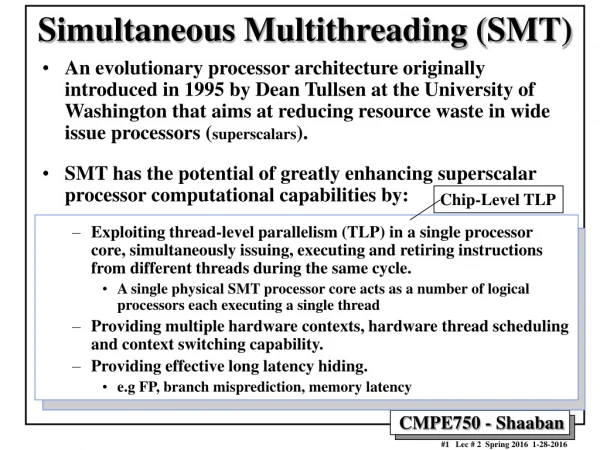

Simultaneous Multithreading (SMT) • An evolutionary processor architecture originally introduced in 1995 by Dean Tullsen at the University of Washington that aims at reducing resource waste in wide issue processors. • SMT has the potential of greatly enhancing processor computational capabilities by: • Exploiting thread-level parallelism (TLP), simultaneously executing instructions from different threads during the same cycle. • Providing multiple hardware contexts, hardware thread scheduling and context switching capability.

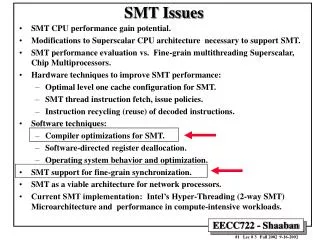

SMT Issues • SMT CPU performance gain potential. • Modifications to Superscalar CPU architecture necessary to support SMT. • SMT performance evaluation vs. Fine-grain multithreading, Superscalar, Chip Multiprocessors. • Hardware techniques to improve SMT performance: • Optimal level one cache configuration for SMT. • SMT thread instruction fetch, issue policies. • Instruction recycling (reuse) of decoded instructions. • Software techniques: • Compiler optimizations for SMT. • Software-directed register deallocation. • Operating system behavior and optimization. • SMT support for fine-grain synchronization. • SMT as a viable architecture for network processors. • Current SMT implementation: Intel’s Hyper-Threading (2-way SMT) microarchitecture and performance in compute-intensive workloads.

Evolution of Microprocessors Source: John P. Chen, Intel Labs

CPU Architecture Evolution: Single Threaded/Issue Pipeline • Traditional 5-stage integer pipeline. • Increases Throughput: Ideal CPI = 1

CPU Architecture Evolution: Superscalar Architectures • Fetch, decode, execute, etc. more than one instruction per cycle (CPI < 1). • Limited by instruction-level parallelism (ILP).

Superscalar Architectures: Issue Slot Waste Classification • Empty or wasted issue slots can be defined as either vertical waste or horizontal waste: • Vertical waste is introduced when the processor issues no instructions in a cycle. • Horizontal waste occurs when not all issue slots can be filled in a cycle.
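The two waste categories above can be counted directly from a per-cycle issue trace. The following is a minimal sketch, not taken from the slides: the function name `classify_waste`, the 4-wide issue width, and the example trace are all hypothetical and chosen only for illustration.

```python
def classify_waste(issued_per_cycle, issue_width):
    """Split unused issue slots into vertical and horizontal waste.

    issued_per_cycle: number of instructions issued in each cycle.
    issue_width: maximum number of instructions that can issue per cycle.
    """
    used = vertical = horizontal = 0
    for issued in issued_per_cycle:
        if not 0 <= issued <= issue_width:
            raise ValueError("issued count must be between 0 and issue_width")
        used += issued
        if issued == 0:
            # No instruction issued: the whole cycle is vertical waste.
            vertical += issue_width
        else:
            # Some slots filled: the unfilled remainder is horizontal waste.
            horizontal += issue_width - issued
    return {"used": used, "vertical": vertical, "horizontal": horizontal}


# Hypothetical trace for a 4-issue machine (not data from the slides).
trace = [3, 0, 1, 0, 4, 2]
stats = classify_waste(trace, issue_width=4)
print(stats)
```

Every slot ends up in exactly one of the three buckets, so the counts always sum to the number of cycles times the issue width.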

Sources of Unused Issue Cycles in an 8-issue Superscalar Processor. Processor busy represents the utilized issue slots; all others represent wasted issue slots. 61% of the wasted cycles are vertical waste; the remainder are horizontal waste. Workload: SPEC92 benchmark suite. Source: Simultaneous Multithreading: Maximizing On-Chip Parallelism, Dean Tullsen et al., Proceedings of the 22nd Annual International Symposium on Computer Architecture, June 1995, pages 392-403.

Superscalar Architectures: All possible causes of wasted issue slots, and latency-hiding or latency-reducing techniques that can reduce the number of cycles wasted by each cause. Source: Simultaneous Multithreading: Maximizing On-Chip Parallelism, Dean Tullsen et al., Proceedings of the 22nd Annual International Symposium on Computer Architecture, June 1995, pages 392-403.

Advanced CPU Architectures: Fine-grain or Traditional Multithreaded Processors • Multiple HW contexts (PC, SP, and registers). • One context gets CPU for x cycles at a time. • Limited by thread-level parallelism (TLP): • Can reduce some of the vertical issue slot waste. • No reduction in horizontal issue slot waste. • Example Architectures: HEP, Tera.

Advanced CPU Architectures: VLIW: Intel/HP IA-64 Explicitly Parallel Instruction Computing (EPIC) • Strengths: • Allows for a high level of instruction-level parallelism (ILP). • Takes a lot of the dependency analysis out of HW and places focus on smart compilers. • Weaknesses: • Limited by instruction-level parallelism (ILP) in a single thread. • Keeping Functional Units (FUs) busy (control hazards). • Static FU scheduling limits performance gains. • Resulting overall performance heavily depends on compiler performance.

Advanced CPU Architectures: Single Chip Multiprocessor • Strengths: • Create a single processor block and duplicate. • Takes a lot of the dependency analysis out of HW and places focus on smart compilers. • Weakness: • Performance limited by individual thread performance (ILP).


SMT: Simultaneous Multithreading • Multiple Hardware Contexts running at the same time (HW context: registers, PC, and SP). • Reduces both horizontal and vertical waste by having multiple threads keeping functional units busy during every cycle. • Builds on top of current time-proven advancements in CPU design: superscalar, dynamic scheduling, hardware speculation, dynamic HW branch prediction. • Enabling Technology: VLSI logic density on the order of hundreds of millions of transistors per chip.

SMT • With multiple threads running, penalties from long-latency operations, cache misses, and branch mispredictions will be hidden: • Reduction of both horizontal and vertical waste and thus improved Instructions Issued Per Cycle (IPC) rate. • Pipelines are separated until the issue stage. • Functional units are shared among all contexts during every cycle: • More complicated writeback stage. • More threads issuing to functional units results in higher resource utilization.

The Power Of SMT (figure): issue-slot diagrams over time (processor cycles) for three machines: Superscalar, Traditional Multithreaded, and Simultaneous Multithreading. Rows of squares represent instruction issue slots. A box with number x is an instruction issued from thread x; an empty box is a wasted slot.

SMT Performance Example • 4 integer ALUs (1 cycle latency) • 1 integer multiplier/divider (3 cycle latency) • 3 memory ports (2 cycle latency, assume cache hit) • 2 FP ALUs (5 cycle latency) • Assume all functional units are fully pipelined

| Inst | Code | Description | Functional unit |
|------|------|-------------|-----------------|
| A | LUI R5,100 | R5 = 100 | Int ALU |
| B | FMUL F1,F2,F3 | F1 = F2 x F3 | FP ALU |
| C | ADD R4,R4,8 | R4 = R4 + 8 | Int ALU |
| D | MUL R3,R4,R5 | R3 = R4 x R5 | Int mul/div |
| E | LW R6,R4 | R6 = (R4) | Memory port |
| F | ADD R1,R2,R3 | R1 = R2 + R3 | Int ALU |
| G | NOT R7,R7 | R7 = !R7 | Int ALU |
| H | FADD F4,F1,F2 | F4 = F1 + F2 | FP ALU |
| I | XOR R8,R1,R7 | R8 = R1 XOR R7 | Int ALU |
| J | SUBI R2,R1,4 | R2 = R1 - 4 | Int ALU |
| K | SW ADDR,R2 | (ADDR) = R2 | Memory port |

SMT Performance Example (continued) • 2 additional cycles to complete program 2 • Throughput: • Superscalar: 11 inst/7 cycles = 1.57 IPC • SMT: 22 inst/9 cycles = 2.44 IPC
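The single-thread cycle count can be approximated with a small dataflow model. The following is a sketch under stated assumptions rather than the slide author's method: it tracks only true (read-after-write) dependences, assumes register renaming removes other hazards, ignores functional-unit counts and issue width, and counts the cycle in which the last instruction issues. The names `LATENCY`, `PROGRAM`, and `issue_cycles` are hypothetical; the instructions and latencies come from the example.

```python
# Result latency per functional-unit class, from the example's machine description.
LATENCY = {"int": 1, "mul": 3, "mem": 2, "fp": 5}

# (name, unit class, destination register, source registers) for instructions A..K.
PROGRAM = [
    ("A", "int", "R5", []),
    ("B", "fp", "F1", ["F2", "F3"]),
    ("C", "int", "R4", ["R4"]),
    ("D", "mul", "R3", ["R4", "R5"]),
    ("E", "mem", "R6", ["R4"]),
    ("F", "int", "R1", ["R2", "R3"]),
    ("G", "int", "R7", ["R7"]),
    ("H", "fp", "F4", ["F1", "F2"]),
    ("I", "int", "R8", ["R1", "R7"]),
    ("J", "int", "R2", ["R1"]),
    ("K", "mem", None, ["R2"]),  # store: no destination register
]


def issue_cycles(program):
    """Return the earliest issue cycle of each instruction (cycles start at 1)."""
    ready = {}  # register -> first cycle in which its newest value can be read
    issue = {}
    for name, unit, dest, sources in program:
        # An instruction issues once all of its source values are available.
        cycle = max([1] + [ready.get(reg, 1) for reg in sources])
        issue[name] = cycle
        if dest is not None:
            ready[dest] = cycle + LATENCY[unit]
    return issue


schedule = issue_cycles(PROGRAM)
cycles = max(schedule.values())
superscalar_ipc = len(PROGRAM) / cycles
# The slide reports 2 additional cycles to finish a second copy of the program.
smt_ipc = 2 * len(PROGRAM) / (cycles + 2)
print(schedule, cycles, round(superscalar_ipc, 2), round(smt_ipc, 2))
```

Under these assumptions the last instruction (K) issues in cycle 7, which agrees with the 11 inst/7 cycles figure. The SMT figure is simple arithmetic on the slide's stated 9 cycles, not a simulation of resource sharing between the two threads.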

Modifications to Superscalar CPUs Necessary to Support SMT • Multiple program counters and some mechanism by which one fetch unit selects one each cycle (thread instruction fetch policy). • A separate return stack for each thread for predicting subroutine return destinations. • Per-thread instruction retirement, instruction queue flush, and trap mechanisms. • A thread id with each branch target buffer entry to avoid predicting phantom branches. • A larger register file, to support logical registers for all threads plus additional registers for register renaming (may require additional pipeline stages). • A higher available main memory fetch bandwidth may be required. • Larger data TLB with more entries to compensate for increased virtual to physical address translations. • Improved cache to offset the cache performance degradation due to cache sharing among the threads and the resulting reduced locality. • e.g., private per-thread vs. shared L1 cache.

Current Implementations of SMT • Intel’s recent implementation of Hyper-Threading Technology (2-thread SMT) in its current P4 Xeon processor family represents the first and only current implementation of SMT in a commercial microprocessor. • The Alpha EV8 (4-thread SMT) originally scheduled for production in 2001 is currently on indefinite hold :( • Current technology has the potential for 4-8 simultaneous threads: • Based on transistor count and design complexity.

A Base SMT hardware Architecture. Source: Exploiting Choice: Instruction Fetch and Issue on an Implementable Simultaneous Multithreading Processor, Dean Tullsen et al. Proceedings of the 23rd Annual International Symposium on Computer Architecture, May 1996, pages 191-202.

Example SMT Vs. Superscalar Pipeline • The pipeline of (a) a conventional superscalar processor and (b) that pipeline modified for an SMT processor, along with some implications of those pipelines. Source: Exploiting Choice: Instruction Fetch and Issue on an Implementable Simultaneous Multithreading Processor, Dean Tullsen et al. Proceedings of the 23rd Annual International Symposium on Computer Architecture, May 1996, pages 191-202.

Intel Xeon Processor Pipeline Source: Intel Technology Journal, Volume 6, Number 1, February 2002.

Intel Xeon Out-of-order Execution Engine Detailed Pipeline Source: Intel Technology Journal, Volume 6, Number 1, February 2002.

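SMT Performance Comparison • Instruction throughput from simulations by Eggers et al. at The University of Washington, using both multiprogramming and parallel workloads:

Multiprogramming workload

| Threads | Superscalar | Traditional Multithreading | SMT |
|---------|-------------|----------------------------|-----|
| 1 | 2.7 | 2.6 | 3.1 |
| 2 | - | 3.3 | 3.5 |
| 4 | - | 3.6 | 5.7 |
| 8 | - | 2.8 | 6.2 |

Parallel workload

| Threads | Superscalar | MP2 | MP4 | Traditional Multithreading | SMT |
|---------|-------------|-----|-----|----------------------------|-----|
| 1 | 3.3 | 2.4 | 1.5 | 3.3 | 3.3 |
| 2 | - | 4.3 | 2.6 | 4.1 | 4.7 |
| 4 | - | - | 4.2 | 4.2 | 5.6 |
| 8 | - | - | - | 3.5 | 6.1 |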
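To make the multiprogramming comparison concrete, the throughput figures above can be turned into speedups over the single-threaded superscalar baseline. This is only a small arithmetic sketch: the dictionary layout and the names `SUPERSCALAR_1T`, `TRADITIONAL_MT`, `SMT`, and `speedup` are hypothetical, and the numbers are copied from the multiprogramming results.

```python
# Instruction throughput for the multiprogramming workload, keyed by thread count.
SUPERSCALAR_1T = 2.7
TRADITIONAL_MT = {1: 2.6, 2: 3.3, 4: 3.6, 8: 2.8}
SMT = {1: 3.1, 2: 3.5, 4: 5.7, 8: 6.2}


def speedup(throughput_by_threads, baseline):
    """Throughput of each configuration relative to the baseline."""
    return {n: ipc / baseline for n, ipc in throughput_by_threads.items()}


smt_speedup = speedup(SMT, SUPERSCALAR_1T)
mt_speedup = speedup(TRADITIONAL_MT, SUPERSCALAR_1T)

# Thread count at which each architecture reaches its best throughput.
best_mt_threads = max(TRADITIONAL_MT, key=TRADITIONAL_MT.get)
best_smt_threads = max(SMT, key=SMT.get)

for n in sorted(SMT):
    print(f"{n} threads: traditional MT x{mt_speedup[n]:.2f}, SMT x{smt_speedup[n]:.2f}")
```

In these figures traditional multithreading peaks at four threads and falls off at eight, while SMT throughput keeps rising through eight threads.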

Simultaneous Vs. Fine-Grain Multithreading Performance Instruction throughput as a function of the number of threads. (a)-(c) show the throughput by thread priority for particular models, and (d) shows the total throughput for all threads for each of the six machine models. The lowest segment of each bar is the contribution of the highest priority thread to the total throughput. Source: Simultaneous Multithreading: Maximizing On-Chip Parallelism, Dean Tullsen et al., Proceedings of the 22nd Annual International Symposium on Computer Architecture, June 1995, pages 392-403.

Simultaneous Multithreading Vs. Single-Chip Multiprocessing • Results for the multiprocessor MP vs. simultaneous multithreading SM comparisons. The multiprocessor always has one functional unit of each type per processor. In most cases the SM processor has the same total number of each FU type as the MP. Source: Simultaneous Multithreading: Maximizing On-Chip Parallelism, Dean Tullsen et al., Proceedings of the 22nd Annual International Symposium on Computer Architecture, June 1995, pages 392-403.

Impact of Level 1 Cache Sharing on SMT Performance • Results for the simulated cache configurations, shown relative to the throughput (instructions per cycle) of the 64s.64p configuration. • The caches are specified as: [total I cache size in KB][private or shared].[D cache size][private or shared]. For instance, 64p.64s has eight private 8 KB I caches and a shared 64 KB data cache. Source: Simultaneous Multithreading: Maximizing On-Chip Parallelism, Dean Tullsen et al., Proceedings of the 22nd Annual International Symposium on Computer Architecture, June 1995, pages 392-403.

SMT Thread Instruction Fetch Scheduling Policies • Round Robin: • Instruction from Thread 1, then Thread 2, then Thread 3, etc. (e.g., RR 1.8: each cycle one thread fetches up to eight instructions; RR 2.4: each cycle two threads fetch up to four instructions each). • BR-Count: • Give highest priority to those threads that are least likely to be on a wrong path by counting branch instructions that are in the decode stage, the rename stage, and the instruction queues, favoring those with the fewest unresolved branches. • MISS-Count: • Give priority to those threads that have the fewest outstanding data cache misses. • I-Count: • Highest priority assigned to the thread with the lowest number of instructions in the static portion of the pipeline (decode, rename, and the instruction queues). • IQPOSN: • Give lowest priority to those threads with instructions closest to the head of either the integer or floating point instruction queues (the oldest instruction is at the head of the queue).
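The counter-based policies above all reduce to "rank the threads by a per-thread counter and fetch from the lowest." The following is a minimal sketch of that idea, not the simulator's implementation: `ThreadState`, its field names, `select_fetch_threads`, `round_robin`, the tie-break by thread id, and the example counter values are all hypothetical. IQPOSN is omitted because it needs queue positions rather than a simple count.

```python
from dataclasses import dataclass


@dataclass
class ThreadState:
    tid: int
    front_end_instructions: int      # in decode, rename, and instruction queues
    unresolved_branches: int         # branches in decode, rename, and queues
    outstanding_dcache_misses: int


# Each policy favors the thread with the smallest value of its counter.
POLICY_KEYS = {
    "ICOUNT": lambda t: t.front_end_instructions,
    "BRCOUNT": lambda t: t.unresolved_branches,
    "MISSCOUNT": lambda t: t.outstanding_dcache_misses,
}


def select_fetch_threads(threads, policy, num_threads):
    """Pick the thread ids to fetch from this cycle (ties broken by thread id)."""
    key = POLICY_KEYS[policy]
    ranked = sorted(threads, key=lambda t: (key(t), t.tid))
    return [t.tid for t in ranked[:num_threads]]


def round_robin(num_contexts, cycle, num_threads):
    """Rotate through the contexts, taking num_threads of them per cycle."""
    start = cycle * num_threads
    return [(start + i) % num_contexts for i in range(num_threads)]


# Hypothetical snapshot of four hardware contexts.
threads = [
    ThreadState(0, front_end_instructions=12, unresolved_branches=3, outstanding_dcache_misses=0),
    ThreadState(1, front_end_instructions=5, unresolved_branches=1, outstanding_dcache_misses=2),
    ThreadState(2, front_end_instructions=9, unresolved_branches=0, outstanding_dcache_misses=1),
    ThreadState(3, front_end_instructions=20, unresolved_branches=2, outstanding_dcache_misses=0),
]

icount_pick = select_fetch_threads(threads, "ICOUNT", 2)
brcount_pick = select_fetch_threads(threads, "BRCOUNT", 2)
misscount_pick = select_fetch_threads(threads, "MISSCOUNT", 2)
print(icount_pick, brcount_pick, misscount_pick, round_robin(4, cycle=1, num_threads=2))
```

With `num_threads=2` this corresponds to a 2.4-style scheme in which two threads are selected per cycle; how many instructions each selected thread then fetches is outside this sketch.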

Instruction throughput & Thread Fetch Policy Source: Exploiting Choice: Instruction Fetch and Issue on an Implementable Simultaneous Multithreading Processor, Dean Tullsen et al. Proceedings of the 23rd Annual International Symposium on Computer Architecture, May 1996, pages 191-202.

Possible SMT Instruction Issue Policies • OLDEST FIRST: Issue the oldest instructions (those deepest into the instruction queue). • OPT LAST and SPEC LAST: Issue optimistic and speculative instructions after all others have been issued. • BRANCH FIRST: Issue branches as early as possible in order to identify mispredicted branches quickly. Source: Exploiting Choice: Instruction Fetch and Issue on an Implementable Simultaneous Multithreading Processor, Dean Tullsen et al. Proceedings of the 23rd Annual International Symposium on Computer Architecture, May 1996, pages 191-202.
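The issue policies above can be viewed as different sort orders over the set of ready instructions. The following is a rough sketch under assumptions not stated in the slides: `ReadyInstruction`, its `age` and flag fields, the `pick_for_issue` function, and the use of age as the secondary ordering are all hypothetical, and OPT LAST is modeled the same way as SPEC LAST with a single flag.

```python
from dataclasses import dataclass


@dataclass
class ReadyInstruction:
    name: str
    age: int                      # cycles spent in the queue; larger means older
    is_branch: bool = False
    is_speculative: bool = False  # stands in for optimistic or speculative


def pick_for_issue(ready, policy, width):
    """Return the names of up to `width` instructions chosen by the policy."""
    if policy == "OLDEST_FIRST":
        key = lambda i: -i.age
    elif policy == "BRANCH_FIRST":
        # Branches sort first (False < True), then oldest first.
        key = lambda i: (not i.is_branch, -i.age)
    elif policy == "SPEC_LAST":
        # Non-speculative instructions sort first, then oldest first.
        key = lambda i: (i.is_speculative, -i.age)
    else:
        raise ValueError(f"unknown policy: {policy}")
    return [i.name for i in sorted(ready, key=key)[:width]]


# Hypothetical set of ready instructions in one cycle.
ready = [
    ReadyInstruction("add", age=5),
    ReadyInstruction("beq", age=2, is_branch=True),
    ReadyInstruction("lw", age=4, is_speculative=True),
    ReadyInstruction("mul", age=3),
]

oldest = pick_for_issue(ready, "OLDEST_FIRST", width=2)
branch = pick_for_issue(ready, "BRANCH_FIRST", width=2)
spec_last = pick_for_issue(ready, "SPEC_LAST", width=2)
print(oldest, branch, spec_last)
```

Functional-unit availability is ignored here; a real issue stage would also have to match each chosen instruction to a free unit of the right type.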

RIT-CE SMT Project Goals • Investigate performance gains from exploiting Thread-Level Parallelism (TLP) in addition to current Instruction-Level Parallelism (ILP) in processor design. • Design and simulate an architecture incorporating Simultaneous Multithreading (SMT) including OS interaction (LINUX-based kernel?). • Study operating system and compiler optimizations to improve SMT processor performance. • Performance studies with various workloads using the simulator/OS/compiler: • Suitability for fine-grained parallel applications? • Effect on multimedia applications?

Simulator (sim-SMT) @ RIT CE • Execution-driven, performance simulator. • Derived from the SimpleScalar tool set. • Simulates cache, branch prediction, five pipeline stages. • Flexible: • Configuration file controls cache size, buffer sizes, number of functional units. • Cross compiler used to generate SimpleScalar assembly language. • Binary utilities, compiler, and assembler available. • Standard C library (libc) has been ported. • sim-SMT simulator limitations: • Does not keep precise exceptions. • System call instructions are not tracked. • Limited memory space: • Four test programs’ memory spaces run in one simulator memory space. • Easy to run out of stack space.

sim-SMT Simulation Runs & Results • Test programs used: • Newton interpolation. • Matrix solver using LU decomposition. • Integer test program. • FP test program. • Simulations of a single program: • 1, 2, and 4 threads. • System simulations involve a combination of all programs simultaneously: • Several different combinations were run. • From simulation results: • Performance increase: • Biggest increase occurs when changing from one to two threads. • Higher issue rate, functional unit utilization.

Performance (IPC) Simulation Results:

Instruction Issue Rate Simulation Results:

Performance Vs. Issue BW Simulation Results:

Functional Unit Utilization Simulation Results:

No Functional Unit Available Simulation Results:

Horizontal Waste Rate Simulation Results:

Vertical Waste Rate Simulation Results:

SMT: Simultaneous Multithreading • Strengths: • Overcomes the limitations imposed by low single thread instruction-level parallelism. • Multiple threads running will hide individual control hazards (branch mispredictions). • Weaknesses: • Additional stress placed on memory hierarchy. • Control unit complexity. • Sizing of resources (cache, branch prediction, TLBs, etc.). • Accessing registers (32 integer + 32 FP for each HW context): • Some designs devote two clock cycles for both register reads and register writes.