Grading evidence and recommendations

Grading evidence and recommendations. Holger Schünemann and Andy Oxman, for the GRADE Working Group. Professional good intentions and plausible theories are insufficient for selecting policies and practices for protecting, promoting and restoring health. Iain Chalmers.


Grading evidence and recommendations Holger Schünemann Andy Oxman for the GRADE Working Group

Professional good intentions and plausible theories are insufficient for selecting policies and practices for protecting, promoting and restoring health. Iain Chalmers

How can we judge the extent of our confidence that adherence to a recommendation will do more good than harm?

GRADE: Grades of Recommendation Assessment, Development and Evaluation

What do you know about GRADE? • Have prepared a guideline • Read the BMJ paper • Have prepared a systematic review and a summary of findings table • Have attended a GRADE meeting, workshop or talk

About GRADE • Began as an informal working group in 2000 • Researchers/guideline developers with an interest in methodology • Aim: to develop a common, sensible system for grading the quality of evidence and the strength of recommendations, and to explore the range of interventions and contexts for which it might be useful* • 12 meetings (~10 – 35 attendees) • Evaluation of existing systems and reliability* • Workshops at Cochrane Colloquia, WHO and GIN since 2000 *GRADE Working Group. CMAJ 2003, BMJ 2004, BMC 2004, BMC 2005

GRADE Working Group
• David Atkins, chief medical officer (Agency for Healthcare Research and Quality, USA)
• Dana Best, assistant professor (Children's National Medical Center, USA)
• Peter A Briss, chief (Centers for Disease Control and Prevention, USA)
• Martin Eccles, professor (University of Newcastle upon Tyne, UK)
• Yngve Falck-Ytter, associate director (German Cochrane Centre, Germany)
• Signe Flottorp, researcher (Norwegian Centre for Health Services, Norway)
• Gordon H Guyatt, professor (McMaster University, Canada)
• Robin T Harbour, quality and information director (Scottish Intercollegiate Guidelines Network, UK)
• Margaret C Haugh, methodologist (Fédération Nationale des Centres de Lutte Contre le Cancer, France)
• David Henry, professor (University of Newcastle, Australia)
• Suzanne Hill, senior lecturer (University of Newcastle, Australia)
• Roman Jaeschke, clinical professor (McMaster University, Canada)
• Gillian Leng, guidelines programme director (National Institute for Clinical Excellence, UK)
• Alessandro Liberati, professor (Università di Modena e Reggio Emilia, Italy)
• Nicola Magrini, director (Centro per la Valutazione della Efficacia della Assistenza Sanitaria, Italy)
• James Mason, professor (University of Newcastle upon Tyne, UK)
• Philippa Middleton, honorary research fellow (Australasian Cochrane Centre, Australia)
• Jacek Mrukowicz, executive director (Polish Institute for Evidence Based Medicine, Poland)
• Dianne O’Connell, senior epidemiologist (The Cancer Council, Australia)
• Andrew D Oxman, director (Norwegian Centre for Health Services, Norway)
• Bob Phillips, associate fellow (Centre for Evidence-based Medicine, UK)
• Holger J Schünemann, associate professor (McMaster University, Canada; National Cancer Institute, Italy)
• Tessa Tan-Torres Edejer, medical officer/scientist (World Health Organisation, Switzerland)
• Helena Varonen, associate editor (Finnish Medical Society Duodecim, Finland)
• Gunn E Vist, researcher (Norwegian Centre for Health Services, Norway)
• John W Williams Jr, associate professor (Duke University Medical Center, USA)
• Stephanie Zaza, project director (Centers for Disease Control and Prevention, USA)

Why guidelines? • users looking for different things • just tell me what to do (recommendation) • what to do, and on strong or weak grounds • recommendation and grade • recommend, grade, evidence summary, values • systematic review, value statement • evidence from individual studies

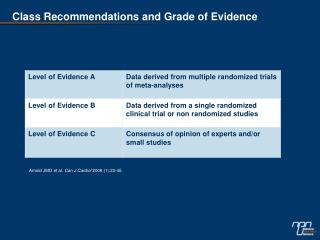

Grading System • current profusion: can there be consensus? • trade-off benefits and risks • do it (or don’t do it) • probably do it (or probably don’t do it) • quality of underlying evidence • high quality (well done RCT) • intermediate (quasi-RCT) • low (well done observational) • very low (anything else)

Moving down • poor RCT design, implementation • inconsistency • indirectness • A vs B, but have A to C, B to C • patients, interventions, outcomes • reporting bias

Moving up • magnitude of effect • dose-response • biases favor control • for-profit, not-for-profit

When to make a recommendation? • never • patient values differ • just lay out benefits and risks • when evidence strong enough • when very weak, too uncertain • clinicians need guidance • intense study demands decision

Why bother about grading? • People draw conclusions about the • quality of evidence • strength of recommendations • Systematic and explicit approaches can help • protect against errors • resolve disagreements • facilitate critical appraisal • communicate information • However, there is wide variation in currently used approaches

Who is confused?

| Organization | Evidence | Recommendation |
|---|---|---|
| USPSTF | II-2 | B |
| ACCP | C+ | 1 |
| GCPS | Strong | Strongly recommended |

Still not confused? Recommendation for use of oral anticoagulation in patients with atrial fibrillation and rheumatic mitral valve disease

| Organization | Evidence | Recommendation |
|---|---|---|
| AHA | B | Class I |
| ACCP | C+ | 1 |
| SIGN | IV | C |

Example ACCP • First ACCP guidelines in 1986 (J. Hirsh; J. Dalen) • Initially aimed at consensus • Methodologists involved since the beginning • Now formally convening every 2 to 3 years • > 200,000 copies in 2001 • Seventh conference held in 2003 • 87 panel members, 22 chapters • Across subspecialties • 565 recommendations, 230 new • Evidence Based Recommendations

What makes guidelines evidence based (in 2005)? • Evidence – recommendation: transparent link • Explicit inclusion criteria • Comprehensive search • Standardized consideration of study quality • Conduct/use meta-analysis • Grade recommendations • Acknowledge values and preferences underlying recommendations Schünemann et al. Chest 2004

Transparent link between evidence and recommendations & explicit inclusion criteria. Albers et al. Chest 2004

Quality of evidence The extent to which one can be confident that an estimate of effect or association is correct. It depends on the: • study design (e.g. RCT, cohort study) • study quality/limitations (protection against bias; e.g. concealment of allocation, blinding, follow-up) • consistency of results • directness of the evidence including the • populations (those of interest versus similar; for example, older, sicker or more co-morbidity) • interventions (those of interest versus similar; for example, drugs within the same class) • outcomes (important versus surrogate outcomes) • comparison (A - C versus A - B & C - B)

Quality of evidence The quality of the evidence (i.e. our confidence) may also be REDUCED when there is: • Sparse or imprecise data • Reporting bias The quality of the evidence (i.e. our confidence) may be INCREASED when: • There is a strong association • There is a dose response relationship • All plausible confounders would have reduced the observed effect • All plausible biases would have increased the observed lack of effect

Categories of quality • High: Further research is very unlikely to change our confidence in the estimate of effect. • Moderate: Further research is likely to have an important impact on our confidence in the estimate of effect and may change the estimate. • Low: Further research is very likely to have an important impact on our confidence in the estimate of effect and is likely to change the estimate. • Very low: Any estimate of effect is very uncertain.
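The "moving down" and "moving up" logic behind these four categories can be sketched in a few lines of Python. This is only an illustrative sketch, not the Working Group's procedure: the names `Quality`, `STARTING_QUALITY` and `grade_quality` are hypothetical, the starting points follow the earlier "Grading System" slide (well done RCT high, quasi-RCT intermediate, well done observational low, anything else very low), and the slides do not say how many levels each factor is worth, so the caller supplies that judgement as a number of levels.

```python
from enum import IntEnum


class Quality(IntEnum):
    """The four quality categories, ordered from weakest to strongest."""
    VERY_LOW = 0
    LOW = 1
    MODERATE = 2
    HIGH = 3


# Starting point by study design (hypothetical keys; mapping taken from the
# "Grading System" slide, with "intermediate" read as MODERATE).
STARTING_QUALITY = {
    "rct": Quality.HIGH,
    "quasi_rct": Quality.MODERATE,
    "observational": Quality.LOW,
}


def grade_quality(design: str, levels_down: int = 0, levels_up: int = 0) -> Quality:
    """Move down/up from the design's starting point, staying within the scale.

    levels_down: total levels removed for limitations, inconsistency,
                 indirectness, sparse data or reporting bias (a judgement).
    levels_up:   total levels added for a strong association, dose-response,
                 or confounding that would have reduced the effect (a judgement).
    """
    start = STARTING_QUALITY.get(design, Quality.VERY_LOW)  # anything else
    score = int(start) - levels_down + levels_up
    clamped = max(int(Quality.VERY_LOW), min(int(Quality.HIGH), score))
    return Quality(clamped)
```

For example, `grade_quality("rct", levels_down=1)` gives `Quality.MODERATE`, and `grade_quality("observational", levels_up=1)` also gives `Quality.MODERATE`. The judgement about how many levels to move stays with the reviewer; the code only keeps the bookkeeping explicit.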

Judgements about the overall quality of evidence • Most systems not explicit • Options: • strongest outcome • primary outcome • benefits • weighted • separate grades for benefits and harms • no overall grade • weakest outcome • Based on lowest of all the critical outcomes • Beyond the scope of a systematic review
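The rule "based on lowest of all the critical outcomes" can be written down directly. The sketch below is a minimal illustration under stated assumptions: the function name `overall_quality`, the lowercase category labels and the placeholder outcome names in the usage note are all hypothetical, and deciding which outcomes count as critical remains a panel judgement that the code simply takes as input.

```python
# Quality categories from weakest to strongest (labels are an assumption).
QUALITY_ORDER = ["very low", "low", "moderate", "high"]


def overall_quality(outcome_quality: dict[str, str], critical: set[str]) -> str:
    """Return the lowest quality grade among the critical outcomes.

    outcome_quality maps each outcome name to its quality category;
    critical names the outcomes judged critical for the decision.
    """
    if not critical:
        raise ValueError("at least one critical outcome is required")
    grades = [outcome_quality[name] for name in critical]
    # The weakest critical outcome determines the overall grade.
    return min(grades, key=QUALITY_ORDER.index)
```

With placeholder data such as `{"outcome_a": "high", "outcome_b": "low", "outcome_c": "very low"}` and critical outcomes `{"outcome_a", "outcome_b"}`, the function returns `"low"`: the non-critical `outcome_c` does not pull the overall grade down.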

Strength of recommendation The extent to which one can be confident that adherence to a recommendation will do more good than harm. • trade-offs (the relative value attached to the expected benefits, harms and costs) • quality of the evidence • translation of the evidence into practice in a specific setting • uncertainty about baseline risk

Judgements about the balance between benefits and harms • Before considering cost and making a recommendation • For a specified setting, taking into account issues of translation into practice

Clarity of the trade-offs between benefits and the harms • the estimated size of the effect for each main outcome • the precision of these estimates • the relative value attached to the expected benefits and harms • important factors that could be expected to modify the size of the expected effects in specific settings; e.g. proximity to a hospital

Balance between benefits and harm • Net benefits: The intervention does more good than harm. • Trade-offs: There are important trade-offs between the benefits and harms. • Uncertain net benefits: It is not clear whether the intervention does more good than harm. • Not net benefits: The intervention does not do more good than harm.

Judgements about recommendations This should include considerations of costs; i.e. “Is the net gain (benefits-harms) worth the costs?” • Do it • Probably do it • No recommendation • Probably don’t do it • Don’t do it

Will GRADE lead to change? Should healthy asymptomatic postmenopausal women have been given oestrogen + progestin for prevention in 1992? • Quality of evidence across studies for • CHD • Hip fracture • Colorectal cancer • Breast cancer • Stroke • Thrombosis • Gall bladder disease • Quality of evidence across critical outcomes • Balance between benefits and harms • Recommendations

Evidence profile: Quality assessment. Oestrogen + progestin for prevention in 1992 (before WHI and HERS). Oestrogen + progestin versus usual care

Further developments • Diagnostic tests • Complexity • Costs • (Equity) • Empirical evaluations

Empirical evaluations • Critical appraisal of other systems • Pilot test + sensibility • “Case law” + practical experience • Guidance for judgements • Single studies • Sparse data or imprecise data • Agreement • Validity? • Comparisons with other systems • Alternative presentations

Comparison of GRADE and other systems • Explicit definitions • Explicit, sequential judgements • Components of quality • Overall quality • Relative importance of outcomes • Balance between health benefits and harms • Balance between incremental health benefits and costs • Consideration of equity • Evidence profiles • International collaboration • Software • Consistent judgements? • Communication?

Who is interested in GRADE • WHO • American Endocrine Society • American College of Chest Physicians (ACCP) • Italian National Cancer Institute • Clinical Evidence • Norwegian Centre for Health Services • UpToDate • Close relationship with Cochrane Collaboration • American Society of Clinical Oncology (ASCO)

We will serve the public more responsibly and ethically when research designed to reduce the likelihood that we will be misled by bias and the play of chance has become an expected element of professional and policy making practice, not an optional add-on. Iain Chalmers

A prerequisite: Practitioners and policy makers must make much clearer that they need rigorous evaluative research to help ensure that they do more good than harm. Iain Chalmers