Random Variables

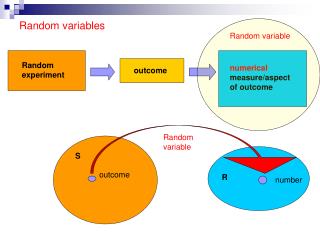



Presentation Transcript

Random Variables: an important concept in probability

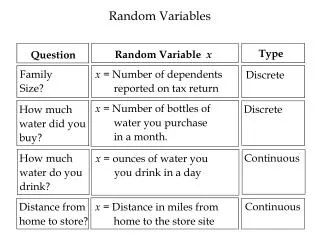

A random variable, X, is a numerical quantity whose value is determined by a random experiment.

Examples
• Two dice are rolled and X is the sum of the two upward faces.
• A coin is tossed n = 3 times and X is the number of times that a head occurs.
• We count the number of earthquakes, X, that occur in the San Francisco region from 2000 A.D. to 2050 A.D.
• Today the TSX composite index is 11,050.00; X is the value of the index in thirty days.

Examples – R.V.’s - continued
• A point is selected at random from a square whose sides are of length 1. X is the distance of the point from the lower left-hand corner.
• A chord is selected at random from a circle. X is the length of the chord.

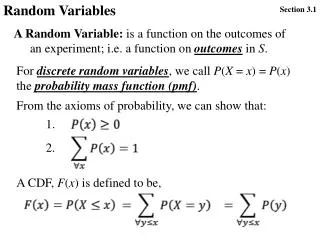

Definition – The probability function, p(x), of a random variable, X. For any random variable, X, and any real number, x, we define p(x) = P[X = x] = P[{X = x}], where {X = x} = the set of all outcomes (event) with X = x.

Definition – The cumulative distribution function, F(x), of a random variable, X. For any random variable, X, and any real number, x, we define F(x) = P[X ≤ x] = P[{X ≤ x}], where {X ≤ x} = the set of all outcomes (event) with X ≤ x.

Example: Two dice are rolled and X is the sum of the two upward faces. The sample space, S, consists of the 36 equally likely ordered pairs (i, j) with 1 ≤ i, j ≤ 6, and the value of X for each outcome is i + j.

[Graph: p(x) plotted against x]

The cumulative distribution function, F(x). For any random variable, X, and any real number, x, we define F(x) = P[X ≤ x], where {X ≤ x} = the set of all outcomes (event) with X ≤ x. Note:
• {X ≤ x} = ∅ if x < 2. Thus F(x) = 0.
• {X ≤ x} = {(1,1)} if 2 ≤ x < 3. Thus F(x) = 1/36.
• {X ≤ x} = {(1,1), (1,2), (2,1)} if 3 ≤ x < 4. Thus F(x) = 3/36.

Continuing we find F(x) is a step function
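The two-dice example can be checked by brute-force enumeration. The following is a minimal Python sketch, not part of the original slides; the names `outcomes`, `p` and `F` are chosen here for illustration, and exact fractions are used so that values such as 1/36 and 3/36 are not rounded.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely outcomes (first die, second die).
outcomes = list(product(range(1, 7), repeat=2))

def p(x):
    """Probability function p(x) = P[X = x], where X is the sum of the faces."""
    favourable = sum(1 for a, b in outcomes if a + b == x)
    return Fraction(favourable, len(outcomes))

def F(x):
    """Cumulative distribution function F(x) = P[X <= x], for any real x."""
    favourable = sum(1 for a, b in outcomes if a + b <= x)
    return Fraction(favourable, len(outcomes))

if __name__ == "__main__":
    # Tabulate p(x) and F(x) at the possible values of X.
    for x in range(2, 13):
        print(x, p(x), F(x))
```

Because F only changes at the integers 2, 3, …, 12, evaluating it at a non-integer such as 3.7 gives the same value as at 3, which is the step-function behaviour described above.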

A coin is tossed n = 3 times and X is the number of times that a head occurs. The sample space is S = {HHH (3), HHT (2), HTH (2), THH (2), HTT (1), THT (1), TTH (1), TTT (0)}; the value of X for each outcome is shown in brackets.

[Graph: probability function p(x) plotted against x]
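As a rough illustration of how the probability function for the coin example follows from the sample space, the sketch below enumerates the 8 outcomes and counts heads. It assumes a fair coin, so that all outcomes are equally likely; the names `sample_space` and `coin_pmf` are illustrative only.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

n = 3  # number of tosses

# Every sequence of n tosses, e.g. ('H', 'T', 'H'); assumed equally likely.
sample_space = list(product("HT", repeat=n))

# X = number of heads in each outcome; count how often each value occurs.
counts = Counter(outcome.count("H") for outcome in sample_space)

# p(x) = (number of outcomes with X = x) / (total number of outcomes).
coin_pmf = {x: Fraction(counts[x], len(sample_space)) for x in range(n + 1)}

if __name__ == "__main__":
    for x, prob in coin_pmf.items():
        print(x, prob)
```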


Examples – R.V.’s - continued • A point is selected at random from a square, S, whose sides are of length 1. X is the distance of the point from the lower left-hand corner. An event, E, is any subset of the square, S. P[E] = (area of E)/(area of S) = area of E, since the area of S is 1.

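The probability function: the event {X = x} is an arc of the circle of radius x centred at the lower left-hand corner, and an arc has zero area. Thus p(x) = 0 for all values of x. The probability function for this example is not very informative.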

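The cumulative distribution function: F(x) = P[X ≤ x] = the area of the part of the square, S, that lies within distance x of the lower left-hand corner.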

The probability density function, f(x), of a continuous random variable. Suppose that X is a random variable. Let f(x) denote a function defined for -∞ < x < ∞ with the following properties:
• f(x) ≥ 0
• $\int_{-\infty}^{\infty} f(x)\,dx = 1$
• $\int_{a}^{b} f(x)\,dx = P[a \le X \le b]$
Then f(x) is called the probability density function of X. The random variable, X, is called continuous.

Thus if X is a continuous random variable with probability density function, f(x), then the cumulative distribution function of X is given by $F(x) = P[X \le x] = \int_{-\infty}^{x} f(t)\,dt$. Also $f(x) = F'(x) = \dfrac{dF(x)}{dx}$ because of the fundamental theorem of calculus.

Example: A point is selected at random from a square whose sides are of length 1. X is the distance of the point from the lower left-hand corner.

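Now, computing the area of the part of the square within distance x of the corner,

$$F(x) = \begin{cases} 0 & x < 0 \\ \dfrac{\pi x^2}{4} & 0 \le x \le 1 \\ \sqrt{x^2 - 1} + x^2\left(\arcsin\dfrac{1}{x} - \dfrac{\pi}{4}\right) & 1 < x \le \sqrt{2} \\ 1 & x > \sqrt{2} \end{cases}$$

and

$$f(x) = F'(x) = \begin{cases} \dfrac{\pi x}{2} & 0 \le x \le 1 \\ 2x\left(\arcsin\dfrac{1}{x} - \dfrac{\pi}{4}\right) & 1 < x \le \sqrt{2} \\ 0 & \text{otherwise} \end{cases}$$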
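For the point-in-a-square example, F(x) is the area of the part of the unit square within distance x of the corner. The sketch below is an illustration rather than the original author's working: it uses a closed form obtained by integrating that area directly (a quarter disc for 0 ≤ x ≤ 1, and a rectangle plus a circular segment for 1 < x ≤ √2) and compares it with a Monte Carlo estimate. The function names, the number of trials and the seed are all assumptions made for this example.

```python
import math
import random

def cdf_distance(x):
    """F(x) = P[X <= x] for X = distance of a uniform point in the unit
    square from the lower left-hand corner (closed form derived here)."""
    if x <= 0:
        return 0.0
    if x <= 1:
        # Quarter disc of radius x lies entirely inside the square.
        return math.pi * x * x / 4
    if x < math.sqrt(2):
        # Quarter disc clipped by the sides of the square.
        return math.sqrt(x * x - 1) + x * x * (math.asin(1 / x) - math.pi / 4)
    return 1.0

def empirical_cdf(x, trials=200_000, seed=0):
    """Monte Carlo estimate of F(x): the fraction of random points
    in the unit square whose distance from (0, 0) is at most x."""
    rng = random.Random(seed)
    hits = sum(
        1 for _ in range(trials)
        if math.hypot(rng.random(), rng.random()) <= x
    )
    return hits / trials

if __name__ == "__main__":
    for x in (0.5, 1.0, 1.2):
        print(x, cdf_distance(x), empirical_cdf(x))
```

The two columns should agree to about two decimal places; the agreement is only statistical, so small discrepancies are expected.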

Recall p(x) = P[X = x] = the probability function of X. This can be defined for any random variable X. For a continuous random variable p(x) = 0 for all values of x. Let S_X = {x | p(x) > 0}. This set is countable (i.e. it can be put into a 1-1 correspondence with a subset of the integers): S_X = {x | p(x) > 0} = {x1, x2, x3, x4, …}. Thus let $\sum_{x} p(x) = \sum_{i} p(x_i)$.

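Proof (that the set S_X = {x | p(x) > 0} is countable, i.e. can be put into a 1-1 correspondence with a subset of the integers): S_X = S1 ∪ S2 ∪ S3 ∪ S4 ∪ …, where Sn = {x | 1/(n+1) < p(x) ≤ 1/n}, i.e. S1 = {x | 1/2 < p(x) ≤ 1}, S2 = {x | 1/3 < p(x) ≤ 1/2}, S3 = {x | 1/4 < p(x) ≤ 1/3}, and so on. Each Sn is finite: it has at most n elements, because n + 1 distinct values, each with probability greater than 1/(n+1), would have total probability greater than 1.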

Thus the elements of S_X = S1 ∪ S2 ∪ S3 ∪ S4 ∪ … can be arranged as {x1, x2, x3, x4, …} by choosing the first elements to be the elements of S1, the next elements to be the elements of S2, the next elements to be the elements of S3, the next elements to be the elements of S4, etc. This allows us to write $\sum_{x} p(x) = \sum_{i} p(x_i)$.

A Discrete Random Variable. A random variable X is called discrete if $\sum_{x} p(x) = \sum_{i} p(x_i) = 1$. That is, all the probability is accounted for by values, x, such that p(x) > 0.

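Discrete Random Variables. For a discrete random variable X the probability distribution is described by the probability function p(x), which has the following properties:
• 0 ≤ p(x) ≤ 1
• $\sum_{x} p(x) = \sum_{i} p(x_i) = 1$
• $P[a \le X \le b] = \sum_{a \le x \le b} p(x)$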

[Graph: Discrete Random Variable, p(x), with the interval from a to b marked]

Continuous random variables. For a continuous random variable X the probability distribution is described by the probability density function f(x), which has the following properties:
• f(x) ≥ 0
• $\int_{-\infty}^{\infty} f(x)\,dx = 1$
• $P[a \le X \le b] = \int_{a}^{b} f(x)\,dx$

[Graph: Continuous Random Variable, probability density function f(x)]

A probability distribution is similar to a distribution of mass. A discrete distribution is similar to a point distribution of mass. Positive amounts of mass, p(x1), p(x2), p(x3), p(x4), …, are put at the discrete points x1, x2, x3, x4, ….

A continuous distribution is similar to a continuous distribution of mass. The total mass of 1 is spread over a continuum. The mass assigned to any single point is zero, but the distribution has a non-zero density f(x).

The distribution function F(x). This is defined for any random variable, X: F(x) = P[X ≤ x]. Properties • F(-∞) = 0 and F(∞) = 1. Since {X ≤ -∞} = ∅ and {X ≤ ∞} = S, then F(-∞) = 0 and F(∞) = 1.

• F(x) is non-decreasing (i.e. if x1 < x2 then F(x1) ≤ F(x2)). If x1 < x2 then {X ≤ x2} = {X ≤ x1} ∪ {x1 < X ≤ x2}, and the two events on the right are disjoint. Thus P[X ≤ x2] = P[X ≤ x1] + P[x1 < X ≤ x2], or F(x2) = F(x1) + P[x1 < X ≤ x2]. Since P[x1 < X ≤ x2] ≥ 0, then F(x2) ≥ F(x1).
• F(b) – F(a) = P[a < X ≤ b]. If a < b then, using the argument above, F(b) = F(a) + P[a < X ≤ b]. Thus F(b) – F(a) = P[a < X ≤ b].

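• p(x) = P[X = x] = F(x) – F(x-). Here $F(x-) = \lim_{u \to x^-} F(u)$.
• If p(x) = 0 for all x (i.e. X is continuous) then F(x) is continuous. A function F is continuous if $\lim_{u \to x^-} F(u) = F(x) = \lim_{u \to x^+} F(u)$. One can show that $F(x) = \lim_{u \to x^+} F(u)$ (F is always continuous from the right). Thus p(x) = 0 implies that $F(x-) = F(x) = \lim_{u \to x^+} F(u)$.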

For Discrete Random Variables, F(x) is a non-decreasing step function with $F(x) = \sum_{u \le x} p(u)$ and p(x) = F(x) – F(x-) = the size of the jump in F at x.
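The relationship between a discrete probability function and its step-function F can be illustrated numerically. The sketch below reuses the two-dice sum as the example distribution, with its probability function written as (6 − |x − 7|)/36 for x = 2, …, 12. The helper names and the small offset `eps` used to approximate the left limit F(x-) are choices made for this example only; because the possible values are integers, any offset below 1 gives the left limit exactly.

```python
from fractions import Fraction

# Probability function of the sum of two dice, x = 2, ..., 12.
pmf = {x: Fraction(6 - abs(x - 7), 36) for x in range(2, 13)}

def F(x):
    """Distribution function: add up p(u) over all possible values u <= x."""
    return sum((prob for value, prob in pmf.items() if value <= x), Fraction(0))

def jump(x, eps=Fraction(1, 1000)):
    """Size of the jump of F at x, i.e. F(x) - F(x-), with the left limit
    taken as F(x - eps); exact here because the support is integer-valued."""
    return F(x) - F(x - eps)

def prob_interval(a, b):
    """P[a < X <= b] computed directly from the probability function."""
    return sum((prob for value, prob in pmf.items() if a < value <= b), Fraction(0))

if __name__ == "__main__":
    for x in range(2, 13):
        print(x, pmf[x], jump(x))          # the jump at x equals p(x)
    print(F(5) - F(2), prob_interval(2, 5))  # F(b) - F(a) = P[a < X <= b]
```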

For Continuous Random Variables, F(x) is a non-decreasing continuous function with $F(x) = \int_{-\infty}^{x} f(u)\,du$ and f(x) = F'(x) = the slope of F at x.

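Suppose that we have an experiment that has two outcomes:
• Success (S)
• Failure (F)
These terms are used in reliability testing. Suppose that p is the probability of success (S) and q = 1 – p is the probability of failure (F). This experiment is sometimes called a Bernoulli trial. Let X = 0 if the experiment results in failure (F) and X = 1 if it results in success (S). Then p(x) = P[X = x] = q if x = 0, and p(x) = p if x = 1.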

The probability distribution with probability function p(x) = q = 1 – p if x = 0, and p(x) = p if x = 1, is called the Bernoulli distribution.
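A Bernoulli trial is straightforward to simulate. The sketch below is illustrative only: it assumes the usual coding X = 1 for success and X = 0 for failure, and the function names, the value p = 0.3 and the seed are arbitrary choices for the example.

```python
import random

def bernoulli_pmf(x, p):
    """Probability function of a Bernoulli random variable:
    p(1) = p (success), p(0) = q = 1 - p (failure), 0 elsewhere."""
    if x == 1:
        return p
    if x == 0:
        return 1 - p
    return 0.0

def bernoulli_trial(p, rng=random):
    """Perform one Bernoulli trial: return 1 with probability p, else 0."""
    return 1 if rng.random() < p else 0

if __name__ == "__main__":
    rng = random.Random(1)
    p = 0.3
    trials = [bernoulli_trial(p, rng) for _ in range(100_000)]
    # The observed proportion of successes should be close to p.
    print(sum(trials) / len(trials), bernoulli_pmf(1, p))
```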