Real-world protein aligners

290 likes | 387 Vues

Explore MUSCLE and ProbCons, high-throughput protein aligners known for their accuracy and reasonable speed. Learn their methodologies, iterative refinement, and specialized capabilities, offering rapid global alignments and improving accuracy.

Real-world protein aligners

E N D

Presentation Transcript

Real-world protein aligners • MUSCLE • High throughput • One of the best in accuracy • ProbCons • High accuracy • Reasonable speed

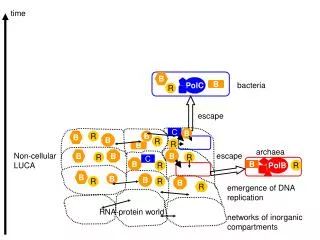

MUSCLE at a glance • Fast measurement of all pairwise distances between sequences • DDRAFT(x, y) defined in terms of # common k-mers (k~3) – O(N2 L logL) time • Build tree TDRAFT based on those distances, with UPGMA • Progressive alignment over TDRAFT, resulting in multiple alignment MDRAFT • Only perform alignment steps for the parts of the tree that have changed • Measure new Kimura-based distances D(x, y) based on MDRAFT • Build tree T based on D • Progressive alignment over T, to build M • Iterative refinement; for many rounds, do: • Tree Partitioning: Split M on one branch and realign the two resulting profiles • If new alignment M’ has better sum-of-pairs score than previous one, accept

PROBCONS at a glance • Computation of all posterior matrices Mxy : Mxy(i, j) = Prob(xi ~ yj), using a HMM • Re-estimation of posterior matrices M’xy with probabilistic consistency • M’xy(i, j) = 1/N sequence zk Mxz(i, k) Myz (j, k); M’xy = Avgz(MxzMzy) • Compute for every pair x, y, the maximum expected accuracy alignment • Axy: alignment that maximizes aligned (i, j) in AM’xy(i, j) • Define E(x, y) = aligned (i, j) in AxyM’xy(i, j) • Build tree T with hierarchical clustering using similarity measure E(x, y) • Progressive alignment on T to maximize E(.,.) • Iterative refinement; for many rounds, do: • Randomized Partitioning: Split sequences in M in two subsets by flipping a coin for each sequence and realign the two resulting profiles

Some Resources Genome Resources Annotation and alignment genome browser at UCSC http://genome.ucsc.edu/cgi-bin/hgGateway Specialized VISTA alignment browser at LBNL http://pipeline.lbl.gov/cgi-bin/gateway2 ABC—Nice Stanford tool for browsing alignments http://encode.stanford.edu/~asimenos/ABC/ Protein Multiple Aligners http://www.ebi.ac.uk/clustalw/ CLUSTALW – most widely used http://phylogenomics.berkeley.edu/cgi-bin/muscle/input_muscle.py MUSCLE – most scalable http://probcons.stanford.edu/ PROBCONS – most accurate

Rapid Global Alignments How to align genomic sequences in (more or less) linear time

Motivation • Genomic sequences are very long: • Human genome = 3 x 109 –long • Mouse genome = 2.7 x 109 –long • Aligning genomic regions is useful for revealing common gene structure • It is useful to compare regions > 1,000,000-long

The UCSC Browser • http://genome.ucsc.edu/cgi-bin/hgGateway

Main Idea Genomic regions of interest contain islands of similarity, such as genes • Find local alignments • Chain an optimal subset of them • Refine/complete the alignment Systems that use this idea to various degrees: MUMmer, GLASS, DIALIGN, CHAOS, AVID, LAGAN, TBA, & others

Saving cells in DP • Find local alignments • Chain -O(NlogN) L.I.S. • Restricted DP

Methods toCHAINLocal Alignments Sparse Dynamic Programming O(N log N)

The Problem: Find a Chain of Local Alignments (x,y) (x’,y’) requires x < x’ y < y’ Each local alignment has a weight FIND the chain with highest total weight

Quadratic Time Solution • Build Directed Acyclic Graph (DAG): • Nodes: local alignments [(xa,xb) (ya,yb)] & score • Directed edges: local alignments that can be chained • edge ( (xa, xb, ya, yb), (xc, xd, yc, yd) ) • xa < xb < xc < xd • ya < yb < yc < yd Each local alignment is a node vi with alignment score si

Quadratic Time Solution Initialization: Find each node va s.t. there is no edge (u, va) Set score of V(a) to be sa Iteration: For each vi, optimal path ending in vi has total score: V(i) = maxjs.t. there is edge (vj, vi) ( weight(vj, vi) + V(j) ) Termination: Optimal global chain: j = argmax ( V(j) ); trace chain from vj Worst case time: quadratic

Sparse Dynamic Programming Back to the LCS problem: • Given two sequences • x = x1,…, xm • y = y1, …, yn • Find the longest common subsequence • Quadratic solution with DP • How about when “hits” xi = yj are sparse?

Sparse Dynamic Programming • Imagine a situation where the number of hits is much smaller than O(nm) – maybe O(n) instead

Sparse Dynamic Programming – L.I.S. • Longest Increasing Subsequence • Given a sequence over an ordered alphabet • x = x1,…, xm • Find a subsequence • s = s1, …, sk • s1 < s2 < … < sk

Sparse Dynamic Programming – L.I.S. Let input be w: w1,…, wn INITIALIZATION: L: last LIS elt. array L[0] = -inf L[1] = w1 L[2…n] = +inf B: array holding LIS elts; B[0] = 0 P: array of backpointers // L[j]: smallest jth element wi of j-long LIS seen so far ALGORITHM for i = 2 to n { Find j such that L[j – 1] < w[i] ≤ L[j] L[j] w[i] B[j] i P[i] B[j – 1] } That’s it!!! • Running time?

Sparse LCS expressed as LIS x Create a sequence w • Every matching point (i, j), is inserted into w as follows: • For each column j = 1…m, insert in w the points (i, j), in decreasing row i order • The 11 example points are inserted in the order given • a = (y, x), b = (y’, x’) can be chained iff • a is before b in w, and • y < y’ y

Sparse LCS expressed as LIS x Create a sequence w w = (4,2) (3,3) (10,5) (2,5) (8,6) (1,6) (3,7) (4,8) (7,9) (5,9) (9,10) Consider now w’s elements as ordered lexicographically, where • (y, x) < (y’, x’) if y < y’ Claim: An increasing subsequence of w is a common subsequence of x and y y

Sparse Dynamic Programming for LIS x Example: w = (4,2) (3,3) (10,5) (2,5) (8,6) (1,6) (3,7) (4,8) (7,9) (5,9) (9,10) L = [L1] [L2] [L3] [L4] [L5] … • (4,2) • (3,3) • (3,3) (10,5) • (2,5) (10,5) • (2,5) (8,6) • (1,6) (8,6) • (1,6) (3,7) • (1,6) (3,7) (4,8) • (1,6) (3,7) (4,8) (7,9) • (1,6) (3,7) (4,8) (5,9) • (1,6) (3,7) (4,8) (5,9) (9,10) y Longest common subsequence: s = 4, 24, 3, 11, 18

Sparse DP for rectangle chaining • 1,…, N: rectangles • (hj, lj): y-coordinates of rectangle j • w(j): weight of rectangle j • V(j): optimal score of chain ending in j • L: list of triplets (lj, V(j), j) • L is sorted by lj: smallest (North) to largest (South) value • L is implemented as a balanced binary tree h l y

Sparse DP for rectangle chaining Main idea: • Sweep through x-coordinates • To the right of b, anything chainable to a is chainable to b • Therefore, if V(b) > V(a), rectangle a is “useless” for subsequent chaining • In L, keep rectangles j sorted with increasing lj-coordinates sorted with increasing V(j) score V(b) V(a)

Sparse DP for rectangle chaining Go through rectangle x-coordinates, from lowest to highest: • When on the leftmost end of rectangle i: • j: rectangle in L, with largest lj < hi • V(i) = w(i) + V(j) • When on the rightmost end of i: • k: rectangle in L, with largest lk li • If V(i) > V(k): • INSERT (li, V(i), i) in L • REMOVE all (lj, V(j), j) with V(j) V(i) & lj li j i k Is k ever removed?

Example x 2 a: 5 V 5 5 11 8 12 13 6 b: 6 9 10 c: 3 16 15 5 9 11 11 L 13 12 12 5 11 8 d: 4 14 e: 2 15 3 d a c b 16 y • When on the leftmost end of rectangle i: • j: rectangle in L, with largest lj < hi • V(i) = w(i) + V(j) • When on the rightmost end of i: • k: rectangle in L, with largest lk li • If V(i) > V(k): • INSERT (li, V(i), i) in L • REMOVE all (lj, V(j), j) with V(j) V(i) & lj li

Time Analysis • Sorting the x-coords takes O(N log N) • Going through x-coords: N steps • Each of N steps requires O(log N) time: • Searching L takes log N • Inserting to L takes log N • All deletions are consecutive, so log N per deletion • Each element is deleted at most once: N log N for all deletions • Recall that INSERT, DELETE, SUCCESSOR, take O(log N) time in a balanced binary search tree

Examples Human Genome Browser ABC

Next 2 years: 20+ mammals, & many other animals, will be sequenced & aligned