Creating a Bottom-Up Parser Automatically

Creating a Bottom-Up Parser Automatically. From: Section 2.2.5, Modern Compiler Design, by Dick Grune et al. Roadmap: LR(0) parsing; LR(0) conflicts; SLR(1) parsing; LR(1) parsing; LALR(1) parsing; making a grammar LR(1), or not; error handling in LR parsers.

Creating a Bottom-Up Parser Automatically. From: Section 2.2.5, Modern Compiler Design, by Dick Grune et al.

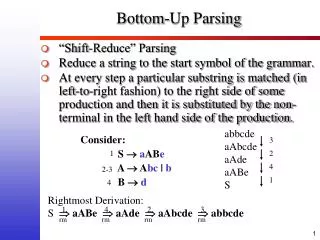

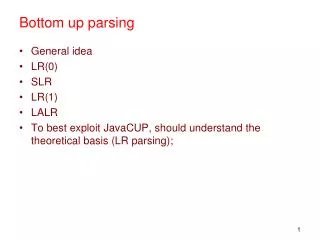

Roadmap
• LR(0) parsing
• LR(0) conflicts
• SLR(1) parsing
• LR(1) parsing
• LALR(1) parsing
• Making a grammar LR(1), or not
• Error handling in LR parsers
• A traditional bottom-up parser generator: yacc/bison
Bottom-up parsing

Introduction
• The main task of a bottom-up parser is to find the leftmost node that has not yet been constructed but all of whose children have been constructed.
• This sequence of children is called the handle.
• Creating a parent node N and connecting the children in the handle to N is called reducing to N.
• In the figure, (1,6,2) is a handle.

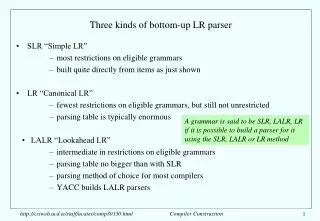

Introduction
• Bottom-up algorithms:
  • Precedence parsing: pretty weak;
  • BC(k,m): out of fashion;
  • LR(0): theoretically important but too weak to be useful;
  • SLR(1): an upgraded version of LR(0), but still fairly weak;
  • LR(1): like LR(0), but both very powerful and very memory-consuming; and
  • LALR(1): a slightly watered-down version of LR(1), which is both powerful and usable: the workhorse of present-day bottom-up parsing.

LR(0) parsing
• One immediate advantage of bottom-up parsing is that it has no problem with left recursion.

LR(0) parsing
• In lexical analysis:
  • dotted items are used to summarize the state of the search for tokens, and
  • sets of items represent sets of hypotheses about the next token.
• In LR parsing:
  • Item sets are kept in which each item is a hypothesis about the handle.
  • An LR item N → α ● β
    • maintains the hypothesis of αβ as a possible handle,
    • states that this αβ is to be reduced to N, when applicable, and
    • states that the part α has already been recognized directly to the left of this point.
  • An item with the dot at the end is called a reduce item; the others are called shift items.
  • The various LR parsing methods differ in the exact form of their LR items, but not in their methods of using them.

LR(0) parsing
• We demonstrate how LR items are used to do bottom-up parsing.
• Assume the input is i+i$.
• The initial item set, s0, consists of the first possibility, Z → ● E $, together with its closure:
  Z → ● E $
  E → ● T
  E → ● E + T
  T → ● i
  T → ● ( E )
• Stack: s0. Remaining input: i + i $
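The closure step that produces s0 can be sketched in a few lines of Python. This is a minimal illustration, not the book's code: the names `GRAMMAR`, `closure` and `show`, and the encoding of an item as a pair (rule index, dot position), are assumptions made for this example.

```python
# Grammar from the slides; an item is a pair (rule index, dot position).
GRAMMAR = [
    ("Z", ("E", "$")),
    ("E", ("T",)),
    ("E", ("E", "+", "T")),
    ("T", ("i",)),
    ("T", ("(", "E", ")")),
]
NONTERMINALS = {lhs for lhs, _ in GRAMMAR}


def closure(items):
    """Add N -> ● γ for every non-terminal N that appears right after a dot."""
    result, work = set(items), list(items)
    while work:
        rule, dot = work.pop()
        rhs = GRAMMAR[rule][1]
        if dot < len(rhs) and rhs[dot] in NONTERMINALS:
            for index, (lhs, _) in enumerate(GRAMMAR):
                if lhs == rhs[dot] and (index, 0) not in result:
                    result.add((index, 0))
                    work.append((index, 0))
    return frozenset(result)


def show(item):
    """Render an item such as (2, 0) as 'E → ● E + T'."""
    rule, dot = item
    lhs, rhs = GRAMMAR[rule]
    return f"{lhs} → {' '.join(rhs[:dot] + ('●',) + rhs[dot:])}"


# Start from the single item Z → ● E $ and close it.
s0 = closure({(0, 0)})
for item in sorted(s0):
    print(show(item))
```

Starting from the single item Z → ● E $, the closure pulls in all four remaining rules with the dot at the start, which is the item set s0 listed on the slide.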

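LR(0) parsing
[Figure: parser configurations while processing i + i $. Rules shown in the item sets: Z→E$, E→E+T, T→i, E→T (dot positions were lost in extraction). Configurations shown (stack | remaining input): s0 T | + i $; s0 E | + i $; s0 E s1 | + i $.]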

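LR(0) parsing
[Figure: later parser configurations. Rules shown in the item sets: E→E+T, T→i, T→(E) (dot positions were lost in extraction). Configurations shown (stack | remaining input): s0 E s1 + s2 T | $; s0 E s1 | $; s0 E s1 + s2 T | $.]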

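LR(0) parsing
[Figure: final parser configurations. Rules shown in the item sets: Z→E$, E→E+T (dot positions were lost in extraction). Configurations shown: s0 E s1 with remaining input $, then s0 Z with the completed parse tree for i + i $.]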

LR(0) parsing: Precomputing the item set
• The above demonstration of LR parsing shows two features that need to be discussed further:
  • the computation of the item sets, and
  • the use of these sets.
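Both features can be illustrated together. The sketch below precomputes all LR(0) item sets for the slides' grammar and then uses them to parse. It is a simplified illustration under stated assumptions: the function names are invented here, state numbers follow this code's discovery order rather than the book's figures, and the grammar is assumed to be LR(0) with no ε-rules.

```python
GRAMMAR = [
    ("Z", ("E", "$")),
    ("E", ("T",)),
    ("E", ("E", "+", "T")),
    ("T", ("i",)),
    ("T", ("(", "E", ")")),
]
NONTERMINALS = {lhs for lhs, _ in GRAMMAR}


def closure(items):
    """Add N -> ● γ for every non-terminal N that appears right after a dot."""
    result, work = set(items), list(items)
    while work:
        rule, dot = work.pop()
        rhs = GRAMMAR[rule][1]
        if dot < len(rhs) and rhs[dot] in NONTERMINALS:
            for index, (lhs, _) in enumerate(GRAMMAR):
                if lhs == rhs[dot] and (index, 0) not in result:
                    result.add((index, 0))
                    work.append((index, 0))
    return frozenset(result)


def goto(state, symbol):
    """Move the dot over `symbol` in every item that allows it, then close."""
    moved = {(r, d + 1) for r, d in state
             if d < len(GRAMMAR[r][1]) and GRAMMAR[r][1][d] == symbol}
    return closure(moved)


def build_automaton():
    """Precompute all item sets and the transitions between them."""
    start = closure({(0, 0)})
    states, transitions, work = [start], {}, [start]
    while work:
        state = work.pop()
        symbols = {GRAMMAR[r][1][d] for r, d in state if d < len(GRAMMAR[r][1])}
        for symbol in sorted(symbols):
            target = goto(state, symbol)
            if target not in states:
                states.append(target)
                work.append(target)
            transitions[(states.index(state), symbol)] = states.index(target)
    return states, transitions


def parse(tokens):
    """Return the 1-based rule numbers in the order the reductions happen."""
    states, transitions = build_automaton()
    stack, rest, reductions = [0], list(tokens), []
    while True:
        reduce_rules = [r for r, d in states[stack[-1]] if d == len(GRAMMAR[r][1])]
        if reduce_rules:                       # LR(0): a reduce state holds one item
            lhs, rhs = GRAMMAR[reduce_rules[0]]
            reductions.append(reduce_rules[0] + 1)
            if lhs == "Z":
                return reductions
            del stack[-len(rhs):]              # assumes no ε-rules (len(rhs) > 0)
            stack.append(transitions[(stack[-1], lhs)])
        else:                                  # shift; KeyError signals a syntax error
            stack.append(transitions[(stack[-1], rest.pop(0))])


print(len(build_automaton()[0]))   # number of LR(0) states
print(parse("i+i$"))               # reductions for the slides' input
```

For the input i+i$ the reductions come out in the order T→i, E→T, T→i, E→E+T, Z→E$, which is the bottom-up construction order of the parse tree in the demonstration.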

LR(0) parsing: LR(0) conflicts
• We cannot make a deterministic ACTION table for just any grammar.
• Shift-reduce conflict: some states may have both outgoing arrows and reduce items.
• Reduce-reduce conflict: some states may contain more than one reduce item.
• In both cases the ACTION table contains entries with multiple values and the algorithm is no longer deterministic.
• If the ACTION table produced from a grammar in the above way is deterministic, the grammar is called an LR(0) grammar.

LR(0) parsing: LR(0) conflicts
• Very few grammars are LR(0).
• A grammar with an ε-rule cannot be LR(0):
  • Suppose the grammar has the production rule A → ε.
  • Then an item A → ● will be predicted by any item of the form P → α ● A β.
  • The first is a reduce item and the second has an outgoing arrow on A, so the state has a shift-reduce conflict.
• Adding the production rule T → i [ E ] to the grammar of Fig. 2.85 puts the items T → i ● and T → i ● [ E ] together in state S5: a shift-reduce conflict.
• Allowing assignments by adding the rules Z → V := E $ and V → i puts the items T → i ● and V → i ● together in state S5: a reduce-reduce conflict.
• The above examples show that the LR(0) method is just too weak to be useful.
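The two kinds of conflict can be detected mechanically from the items in a state. The following sketch assumes an item is encoded as a triple (left-hand side, right-hand side, dot position); the function name `lr0_conflicts` and the sample states are written out by hand for this example.

```python
def lr0_conflicts(state):
    """Classify the LR(0) conflicts in a state given as (lhs, rhs, dot) items."""
    reduce_items = [item for item in state if item[2] == len(item[1])]
    shift_items = [item for item in state if item[2] < len(item[1])]
    conflicts = []
    if reduce_items and shift_items:
        conflicts.append("shift-reduce")    # outgoing arrows and a reduce item
    if len(reduce_items) > 1:
        conflicts.append("reduce-reduce")   # more than one reduce item
    return conflicts


# State reached after shifting i once T → i [ E ] has been added.
with_indexing = [("T", ("i",), 1), ("T", ("i", "[", "E", "]"), 1)]
# State reached after shifting i once V → i has been added.
with_assignment = [("T", ("i",), 1), ("V", ("i",), 1)]
# A state containing the ε-item A → ● next to the item that predicted it.
with_epsilon = [("P", ("A",), 0), ("A", (), 0)]

print(lr0_conflicts(with_indexing))
print(lr0_conflicts(with_assignment))
print(lr0_conflicts(with_epsilon))
```

An empty result for every state of the automaton corresponds to a deterministic ACTION table, that is, to an LR(0) grammar.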

SLR(1) parsing
• The SLR(1) method is based on the consideration that a handle should not be reduced to a non-terminal N if the look-ahead is a token that cannot follow N:
  • A reduce item N → α ● is applicable only if the look-ahead is in FOLLOW(N).
• Consequently, SLR(1) has the same transition diagram as LR(0) for a grammar and the same GOTO table, but a different ACTION table.
• Based on the FOLLOW sets, we construct the SLR(1) table for the grammar of Fig. 2.85:
  FOLLOW(Z) = { $ }
  FOLLOW(E) = { ) + $ }
  FOLLOW(T) = { ) + $ }
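The FOLLOW sets above can be reproduced with a small fixed-point computation. This is a sketch under stated assumptions: the grammar has no ε-rules (true for the grammar of the slides), FOLLOW(Z) is seeded with $ as on the slide, and the function names are invented for this example.

```python
GRAMMAR = [
    ("Z", ("E", "$")),
    ("E", ("T",)),
    ("E", ("E", "+", "T")),
    ("T", ("i",)),
    ("T", ("(", "E", ")")),
]
NONTERMINALS = {lhs for lhs, _ in GRAMMAR}


def first_sets():
    """FIRST sets for a grammar without ε-rules (only rhs[0] matters)."""
    first = {n: set() for n in NONTERMINALS}
    changed = True
    while changed:
        changed = False
        for lhs, rhs in GRAMMAR:
            head = rhs[0]
            new = first[head] if head in NONTERMINALS else {head}
            if not new <= first[lhs]:
                first[lhs] |= new
                changed = True
    return first


def follow_sets(first, start="Z"):
    """FOLLOW sets, again assuming that no symbol can produce ε."""
    follow = {n: set() for n in NONTERMINALS}
    follow[start].add("$")
    changed = True
    while changed:
        changed = False
        for lhs, rhs in GRAMMAR:
            for pos, symbol in enumerate(rhs):
                if symbol not in NONTERMINALS:
                    continue
                if pos + 1 < len(rhs):          # something follows inside the rule
                    nxt = rhs[pos + 1]
                    new = first[nxt] if nxt in NONTERMINALS else {nxt}
                else:                           # symbol ends the rule
                    new = follow[lhs]
                if not new <= follow[symbol]:
                    follow[symbol] |= new
                    changed = True
    return follow


def slr1_may_reduce(lhs, lookahead, follow):
    """SLR(1) rule: reduce to lhs only if the look-ahead can follow lhs."""
    return lookahead in follow[lhs]


FOLLOW = follow_sets(first_sets())
print(FOLLOW)
print(slr1_may_reduce("T", "+", FOLLOW), slr1_may_reduce("T", "i", FOLLOW))
```

In the SLR(1) ACTION table, a reduce item N → α ● contributes a reduce entry only in the columns for which `slr1_may_reduce` holds; all other columns stay free for shifts or errors.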

1: Z → E $
2: E → T
3: E → E + T
4: T → i
5: T → ( E )

LR(1) parsing
• The reason why conflict resolution by FOLLOW set does not work nearly as well as one might wish is that:
  • it replaces the look-ahead of a single item of a rule N in a given LR state by the FOLLOW set of N, which is the union of all the look-aheads of all alternatives of N in all states.
• LR(1) item sets are more discriminating:
  • a look-ahead set is kept with each separate item, to be used to resolve conflicts when a reduce item has been reached.
• This greatly increases the strength of the parser, but also the size of its parse tables.

LR(1) parsing
• Not LL(1): S exhibits a FIRST/FIRST conflict on x.
• Not SLR(1): the shift-reduce conflict in Fig. 2.96.

LR(1) parsing
• The LR(1) technique does not rely on FOLLOW sets, but rather keeps the specific look-ahead with each item.
• An LR(1) item has the form N → α ● β {σ}, where
  • σ is the set of tokens that can follow this specific item.
• When the dot has reached the end of the item, N → α β ● {σ}, the item is an acceptable reduce item only if the look-ahead at that moment is in σ; otherwise the item is ignored.

LR(1) parsing
• The rules for determining the look-ahead sets:
  • When creating the initial set:
    • The look-ahead set of the initial items in the initial item set S0 contains only one token, the end-of-file token ($), since that is the only token that can follow the start symbol of the grammar.
  • When doing ε-moves:
    • The prediction rule creates new items for the alternatives of N in the presence of items of the form P → α ● N β {σ}; the look-ahead set of each of these items is FIRST(β{σ}), since that is what can follow this specific item in this specific position.
• Definition of FIRST(β{σ}): if FIRST(β) does not contain ε, FIRST(β{σ}) is just equal to FIRST(β); if β can produce ε, FIRST(β{σ}) contains all the tokens in FIRST(β), excluding ε, plus the tokens in σ.
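The definition of FIRST(β{σ}) translates almost directly into code. The sketch below assumes that the FIRST sets of the non-terminals are already available as a dictionary and that ε is represented by the string "ε"; the function name and the nullable non-terminal `A` in the last call are hypothetical and serve only as illustration.

```python
EPSILON = "ε"


def first_with_lookahead(beta, sigma, first):
    """Return FIRST(β{σ}) for a symbol sequence beta and a look-ahead set sigma."""
    result = set()
    for symbol in beta:
        symbol_first = first.get(symbol, {symbol})   # a terminal is its own FIRST
        result |= symbol_first - {EPSILON}
        if EPSILON not in symbol_first:
            return result            # beta cannot vanish, so sigma plays no role
    return result | set(sigma)       # beta is empty or can produce ε


# FIRST sets of the slides' grammar, plus a hypothetical nullable non-terminal A.
FIRST = {"E": {"i", "("}, "T": {"i", "("}, "A": {"a", EPSILON}}

# Z → ● E $ {$} predicts the E-items with look-ahead FIRST($ {$}).
print(first_with_lookahead(("$",), {"$"}, FIRST))
# E → ● E + T {$} predicts the E-items with look-ahead FIRST(+ T {$}).
print(first_with_lookahead(("+", "T"), {"$"}, FIRST))
# E → ● T {$} predicts the T-items with look-ahead FIRST(ε {$}).
print(first_with_lookahead((), {"$"}, FIRST))
# With the nullable A, the look-ahead set σ shows through.
print(first_with_lookahead(("A",), {"$"}, FIRST))
```

Only when β is empty or can vanish does σ contribute, which is how the look-ahead of the predicting item is passed on to the predicted items.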

The shift-reduce conflict has disappeared.

LALR(1) parsing
• LR(1) states are split-up versions of LR(0) states.
• This split is the power of the LR(1) automaton, but this power is not needed in each and every state.
• Combine states S8 and S12 into one new state S12.
• The automaton obtained by combining all states of an LR(1) automaton that have the same cores is the LALR(1) automaton.
• LALR(1) combines power (it is only marginally weaker than LR(1)) with efficiency (it has the same memory requirements as LR(0)).
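Combining states with the same core can be sketched as follows. The encoding of an LR(1) item as (rule, dot position, look-ahead token) and the three sample states are assumptions made for this example; they are not the states S8 and S12 of the slide, and a full implementation would also have to redirect the transitions to the merged states.

```python
from collections import defaultdict


def core(state):
    """The core of an LR(1) state: its items with the look-aheads stripped."""
    return frozenset((rule, dot) for rule, dot, _ in state)


def merge_by_core(lr1_states):
    """Union all LR(1) states that share a core, giving the LALR(1) states."""
    groups = defaultdict(set)
    for state in lr1_states:
        groups[core(state)] |= state
    return [frozenset(items) for items in groups.values()]


# Hypothetical LR(1) states: the first two differ only in their look-aheads.
state_a = frozenset({("B → x", 1, "$")})
state_b = frozenset({("B → x", 1, "b")})
state_c = frozenset({("A → B", 1, "$")})

lalr_states = merge_by_core([state_a, state_b, state_c])
print(len(lalr_states))
```

After merging, the number of states equals the number of distinct cores, which is why the LALR(1) automaton has as many states as the LR(0) automaton.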
