Transactions and Locks

370 likes | 563 Vues

In this lesson, we explore the fundamental concepts of transactions in SQL Server, focusing on the ACID properties that ensure data integrity: Atomicity, Consistency, Isolation, and Durability. We discuss the transaction log’s role in recording changes, the process of checkpoints, and how SQL Server employs autorecovery to handle unexpected failures. The lesson also covers implicit and explicit transactions, the importance of rollback, and how distributed transactions are managed through the Microsoft Distributed Transaction Coordinator (DTC).

Transactions and Locks

E N D

Presentation Transcript

Transactions and Locks Lesson 22

Transaction • A transaction is a series of steps that perform a logical unit of work. • Transactions must adhere to ACID properties, that is: • Atomicity: Either all of the steps must succeed or none of them may succeed. • Consistency: The data must be left in a predictable and usable state. • Isolation: Changes made must not be influenced by other concurrent transactions. • Durability: The changes made must be permanent in the database and survive even system failures.

Transactions • SQL Server records all changes made in the transaction log to allow any changes to be undone (rolled back) or redone (rolled forward) in case of a system failure. • When updating or inserting a record into a database, the record is first allocated in buffer memory, and the buffer manager guarantees that the transaction log is written before the changes to the database file are written. • It does this by keeping track of a log position using a log sequence number (LSN).

Checkpoints • At certain intervals, SQL Server issues a checkpoint in the transaction log that issues a write from the transaction log to the data file. • Depending on the setting of the transaction log defined in the database recovery model, the transaction log will keep the committed and written records in the transaction log or truncate the log.

Autorecovery • This process of working with the transaction log and recording actions in the transaction log before applying them to the actual data files allows SQL Server to recover from failures in case of an unexpected shutdown.

Autorecovery • The autorecovery process will check the database to see what the last-issued checkpoint and written LSN were and will then write all committed records from the transaction log that were not recorded yet in the data file to the data file. • This process is a rollforward. • Different from other database systems such as Oracle, SQL Server automatically issues a transaction (autocommitted) on every statement, so you don’t need to explicitly commit these transactions.

Transaction Logs • SQL Server uses a buffer cache, which is an in-memory structure, into which it retrieves data pages from disk for use by applications and users. • Each modification to a data page is made to the copy of the page in the buffer cache. • A modified buffer page in the cache that has not yet been written to disk is called a dirty page.

Transaction Logs • The modification is recorded in the transaction log before it is written to disk. • For this reason, the SQL Server transaction log is called a write-ahead transaction log. • SQL Server has internal logic to ensure that a modification is recorded in the transaction log before the associated dirty page is written to disk. • When SQL Server writes the dirty page in the cache to the disk, it is called flushing the page.

Checkpoint • The checkpoint process is designed to minimize the recovery time if the server fails by minimizing the number of pages in the buffer cache that have not been written to disk. • Checkpoints occur whenever: • A CHECKPOINT statement is issued. • The ALTER DATABASE statement is used. • An instance of SQL Server is stopped normally. • An automatic checkpoint is issued.

Checkpoint • Automatic checkpoints are generated periodically based on the number of records in the active portion of the transaction log, not on the amount of time that has elapsed since the last checkpoint.

Executing Implicit and Explicit Transactions • By default, SQL Server automatically commits a transaction to the database, and every transaction is handled as a single process. • Because this process occurs without any explicit request from you to confirm the action, this is called an autocommit.

Executing Implicit and Explicit Transactions • explicit transaction: A group of SQL statements enclosed within transaction delimiters that define both the start and end of the transaction. • implicit transaction: A connection option in which each SQL Statement executed by the connection is considered a separate transaction.

Transaction Rollback • When you want to confirm a transaction, you issue a COMMIT TRANSACTION statement. • This will close the open statements and confirm the grouped DML statements. • If you don’t want a transaction to occur, that is, you want to cause a transaction rollback, you issue a ROLLBACK TRANSACTION statement.

Distributed Transaction • When executing a distributed transaction, SQL Server doesn’t really differ a lot from executing an explicit transaction. • The transaction, however, will be considered to execute over a remote connection and will be managed and coordinated by the Microsoft Distributed Transaction Coordinator (DTC). • In a distributed environment, you work over the network segment, so the execution of the transaction will take place using a two-phase commit.

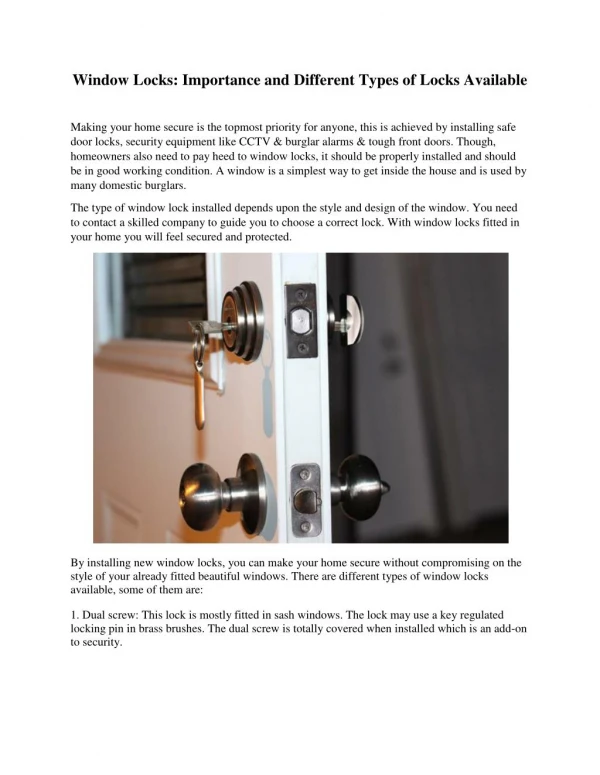

Locks • Locks prevent users from reading or modifying data that other users are in the process of changing. • Two main types of lock come into play: • Read locks: Read locks ensure other users don’t change the data you are retrieving but allow other users to read the data at the same time. • Write locks: Write locks ensure no other users can examine or change the data you are updating.

Deadlock • A deadlock is a situation when two (or more) users, each having a lock on one piece of data, attempt to acquire a lock on the other’s piece. • Unless one of the user processes is terminated, each user would wait indefinitely for the other to release the lock. • SQL Server detects deadlocks and terminates one user’s process.

Locks and Serialization • Locks permit the serialization of transactions, meaning only one person can change a data element at a time. • During a transaction, SQL Server controls and sets the appropriate level of locking. • You can control how some locks are used by including locking hints in your query.

Concurrency • Specific types of locks allow users to access and update data at the same time. • A SELECT may be allowed at the same time as an UPDATE, but the UPDATE blocks a second UPDATE. • This concept, known as concurrency, can increase response time from the user’s perspective. • Concurrency control means changes made by one person do not adversely affect modifications other users make.

Concurrency • There are two types of concurrency control: • Pessimistic: Pessimistic concurrency control locks data when data are read in preparation for an update. • Optimistic: Optimistic concurrency control does not lock data when data are initially read.

Locks and Transaction Integrity • When users access data concurrently, a risk exists that one user’s actions might affect the records another user is accessing. • Locks can prevent the following situations that compromise transaction integrity: • Lost updates • Uncommitted dependencies (dirty read) • Inconsistent analysis (nonrepeatable read) • Phantom reads

Locks and Concurrent Transactions • SQL Server uses different locking modes to control how resources can be accessed by concurrent transactions. • These types of locks are: • Shared • Exclusive • Intent • Update • Schema • Bulk update

Data Manipulation Language Queries • The data Manipulation Language keywords SELECT, INSERT, UPDATE, DELETE and MERGE examine and change data in your tables. • To retrieve data from one or more tables, use these keywords in this order: • SELECT • FROM • WHERE • GROUP BY • HAVING • ORDER BY

Presenting Data in a Different Order • The ORDER BY clause provides the primary means of organizing data, and it has two major keywords: • ASCending • DESCending

Error Handling • SQL Server has greatly improved error-handling capabilities when compared to other database platforms. • RAISERROR • @@ERROR

Error Messages • ERROR_LINE • ERROR_MESSAGE • ERROR_NUMBER • ERROR_PROCEDURE • ERROR_SEVERITY • ERROR_STATE

TRY…CATCH Blocks • TRY…CATCH blocks are a great way to implement error handling in SQL Server. • These blocks work the same as (or very similar to) any programming language that uses a TRY…CATCH construct and they will catch every error that has a severity level greater than 10 but not cause any termination in the database connection. • TRY…CATCH blocks can also be used in stored procedures and triggers.

Using INSERT and DELETE Operations • During an INSERT operation the inserted table is dynamically created and during a DELETE operation the deleted table is likewise created in memory for the life of the transaction. Now, instead of a trigger, you may use the OUTPUT clause to manipulate these data.

Summary • Working with transactions allows you to roll back or cancel a transaction to execute in case of a certain event or condition, or even roll back multiple grouped statements in a distributed environment

Summary • SQL Server supports various recovery models. The most common—but also the one with the biggest transaction log size—is the Full recovery model. • However, if you perform large amounts of batch and bulk inserts, it might be useful not to set the recovery model to Full and instead use the Bulk-Logged recovery model.

Summary • The error-handling in SQL Server is one of the best error-handling capabilities so far in the SQL language because it implements a TRY…CATCH block, just as do programming languages such as Visual Basic and C#.

Summary of Certification Examination • Understand and be able to use transactions. You need to truly understand how transactions work and how you can enforce an explicit transaction within a SQL batch. • It is also important to understand how distributed transactions work and how you can implement error handling within the transactional processing.

Summary of Certification Examination • Know how to identify collations. You need to understand that SQL Server uses collations to play around with different sort orders and character sets within the database. • Collations can be designed on a database level, but they also are implemented with the table creation—or even enforced by explicitly casting or converting to a different collation type. • Understand how to handle exceptions and errors. • The main focus on error handling should be on how to implement a TRY…CATCH block and roll back transactions within the error handling. You need to be familiar with the new methods in error handling and how to use their syntax.

Summary of Certification Examination • Understand how to configure database recovery models. When configuring database recovery models, you need to fully understand that a BULK INSERT statement has a huge impact on the size of your transaction log when defined in a Full recovery model. Therefore, you must be able to identify when to use a Bulk-Logged recovery model to minimize the impact on the transaction log and transaction log performance.

Summary of Certification Examination • Know how to format query results. When working with queries, it is important to understand data type conversion and the various functions that can be used within T-SQL to format a query layout.