Understanding Kernel Functions in Machine Learning: Mercer’s Conditions and PAC Learning Model

This text discusses the characterization of kernel functions, the role of Mercer’s conditions, and the Probabilistically Approximately Correct (PAC) learning model in machine learning. Learn about positive semi-definite matrices, translation invariant kernels, and generalization error bounds.

Understanding Kernel Functions in Machine Learning: Mercer’s Conditions and PAC Learning Model

E N D

Presentation Transcript

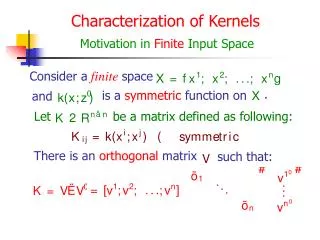

Consider afinitespace . is a symmetric function on and Characterization of Kernels Motivation in Finite Input Space Let be a matrix defined as following: There is an orthogonal matrix such that:

Characterization of Kernels Be Positive Semi-definite Assume: Let where

be afinitespace Let . the corresponding matrix is Any finite subset of Mercer’s Conditions: Guarantee the Existence of Feature Space is a symmetric function on and Then is a kernel function if and only if is positive semi-definite. is infinite (but compact)? • What if Mercer’s conditions: positive semi-definite.

Making Kernels Kernels Satisfy a Number of Closure Properties Let be kernels over be a kernel over and be a symmetric positive semi-definite. Then the following functions are kernels:

The kernels are in the form: Translation Invariant Kernels • The inner product (in the feature space) of two inputs is unchanged if both are translated by the same vector. • Some examples: • Gaussian RBF: • Multiquadric: • Fourier:see Example 3.9 on p. 37

The kernel is negative definite A Negative Definite Kernel • Does not satisfy Mercer’s conditions • Oliv L. Mangansarian used this kernel to solve XOR classification problem • Generalized Support Vector Machine

Key assumption: Training and testing data are generated i.i.d. ( i.e. “average Probably Approximately Correct Learning pac Model fixed but unknown distribution according to an • When we evaluate the “quality” of a hypothesis (classification function) we should take the into account unknown distribution error” or “expected error” ) made by the • We call such measure risk functional and denote it as

Let training be a set of examples choseni.i.d.according to as ar.v. • Treat the generalization error depending on the random selection of r.v. • Find a bound of the trail of the distribution of in the form ,where and is a function of is the confidence level of the error bound which is given by learner Generalization Error of pac Model

will be less • The error made by the hypothesis then the error bound that is not depend on the unknown distribution Probably Approximately Correct • We assert: or