Algebraic Laws

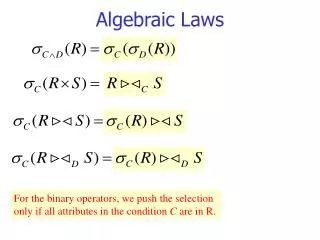

Algebraic Laws. For the binary operators, we push the selection only if all attributes in the condition C are in R. Example:. Consider relation schemas R ( A , B ) and S ( B , C ) and the expression below: ( A =1 OR A =3) AND B < C ( R S )

Algebraic Laws

E N D

Presentation Transcript

Algebraic Laws For the binary operators, we push the selection only if all attributes in the condition C are in R.

Example: • Consider relation schemas R(A,B) and S(B,C)and the expression below: (A=1 OR A=3) AND B<C(RS) • Splitting ANDA=1 OR A=3(B < C(RS)) • Push to S A=1 OR A=3(RB < C(S)) • Push to RA=1 OR A=3(R) B < C(S)

Pushing selections • Usually selections are pushed down the expression tree. • The following example shows that it is sometimes useful to pull selection up in the tree. StarsIn(title,year,starName) Movie(title,year,length,studioName) CREATE VIEW MoviesOf1996 AS SELECT * FROM MOVIE WHERE year=1996; Query:Which stars worked for which studios in 1996? SELECT starName,studioName FROM MoviesOf1996 NATURAL JOIN StarsIN;

pull selection up then push down

Laws for (bag) Projection • A simple law: Project out attributes that are not needed later. • I.e. keep only the input attr. and any join attribute.

Examples for pushing projection Schema R(a,b,c), S(c,d,e)

starName starName year=1996 year=1996 starName,year StarsIn StarsIn Example: Pushing Projection • Schema: StarsIn(title,year,starName) • Query:SELECT starName FROM StarsIn WHERE year = 1996; Should we transform to ? Depends! Is StarsIn stored or computed?

Reasons for not pushing the projection • If StarsIn is stored, the for the projection we have to scan the relation. • If the relation is pipelined from some previous computation, then yes, we better do the projection (on the fly). • Also, if for example there is an index on year for StarsIn, such index is useless in the projected relation starName,year(StarsIn) • While such an index is very useful for the selection on “year=1996”

Laws for duplicate elimination and grouping • Try to move in a position where it can be eliminated altogether E.g. when is applied on • A stored relation with a declared primary key • A relation that is the result of a operation, since grouping creates a relation with no duplicates. absorbs Also: What’s M?

Improving logical query plans • Push as far down as possible (sometimes pull them up first). • Do splitting of complex conditions in in order to push even further. • Push as far down as possible, introduce new early (but take care for exceptions) • Combine with to produce -joinsor equi-joins • Choose an order for joins

title title starname=name AND birthdate LIKE ‘%1960’ starName=name birthdate LIKE ‘%1960’ MovieStar StarsIn StarsIn MovieStar Example of improvement SELECT title FROM StarsIn, MovieStar WHERE starName = name AND birthdate LIKE ‘%1960’;

title starName=name name StarsIn birthdate LIKE ‘%1960’ MovieStar And a better plan introducing a projection to filter out useless attributes:

Estimating the Cost of Operations • We don’t want to execute the query in order to learn the costs. So, we need to estimate the costs. • What’s the cost? • The number of I/O’s to needed to manage the intermediate relations. • This number will be a function of the size of intermediate relations, • i.e. number of their tuples times the number of bytes per tuple • How can we estimate the number of tuples in an intermediate relation? Rules about estimation formulas: • Give (somehow) accurate estimates • Easy to compute

Projection • Projection retains duplicates, so the number of tuples in the result is the same as in the input. • Result tuples are usually shorter than the input tuples. • The size of a projection is the only one we can compute exactly.

Selection Let S = A=c (R) We can estimate T(S) = T(R) / V(R,A) Let S = A<c (R) On average, T(S) would be T(R)/2, but more properly: T(R)/3 Let S = Ac (R), Then, an estimate is: T(S) = T(R)*[(V(R,A)-1)/V(R,A)], or simply T(S) = T(R)

Selection ... Let S = C AND D(R) = C(D(R))and U = D(R). First estimate T(U) and then use this to estimate T(S). Example S = a=10 ANDb<20(R) T(R) = 10,000, V(R,a) = 50 T(S) = (1/50)* (1/3) * T(R) = 67 Note: Watch for selections like: a=10 AND a>20(R)

Selection ... • Let S = C OR D(R). • Simple estimate: T(S) = T(C(R)) + T(D(R)). • Problem: It is possible that T(S)T(R)! A more accurate estimate • Let: • T(R)=n, • m1 = size of selection on C, and • m2 = size of selection on D. • Then T(S) = n(1-(1-m1/n)(1-m2/n))Why? • Example: S = a=10 OR b<20(R). T(R) = 10,000, V(R,a) =50 • Simple estimation: T(S) = 3533 • More accurate: T(S) = 3466

Natural Join R(X,Y) S(Y,Z) • Anything could happen! • No tuples join: T(R S) = 0 • Y is the key in S and a foreign key in R (i.e., R.Y refers to S.Y): Then, T(R S) = T(R) • All tuples join: i.e. R.Y=S.Y = a. Then, T(R S) = T(R)*T(S)

Two Assumptions • Containment of value sets • If V(R,Y) ≤V(S,Y), then every Y-value in R is assumed to occur as a Y-value in S • When such thing can happen? • For example when:Y is foreign key in R, and key in S • Preservation of set values • If A is an attribute of R but not S, then is assumed that • V(R S, A)=V(R, A) • This may be violated when there are dangling tuples in R • There is no violation when: Y is foreign key in R, and key in S

Natural Join size estimation • Let, R(X,Y) and S(Y,Z), where Y is a single attribute. • What’s the size of T(R S)? • Let r be a tuple in R and s be a tuple in S. What’s the probability that r and s join? • Suppose V(R,Y) V(S,Y) • By the containment of set values we infer that: • Every Y’s value in R appears in S. • So, the tuple r of R surely is going match with some tuples of S, but what’s the probability it matches with s? • It’s 1/V(S,Y). • Hence, T(R S) = T(R)*T(S)/V(S,Y) • When V(R,Y) V(S,Y) • By a similar reasoning, for the case when V(S,Y) V(R,Y), we get T(R S) = T(R)*T(S)/V(S,Y). • So, sumarizing we have as an estimate: T(R S) = T(R)*T(S)/max{V(R,Y),V(S,Y)}

Remember: • T(R S) = T(R)*T(S)/max{V(R,Y),V(S,Y)} • Example: • R(a,b), T(R)=1000, V(R,b)=20 • S(b,c), T(S)=2000, V(S,b)=50, V(S,c)=100 • U(c,d), T(U)=5000, V(U,c)=500 • Estimate the size of R S U • T(R S) = • 40,000, • T((R S)U) = • 400,000 • T(S U) = • 20,000, • T(R (S U)) = • 400,000 • The equality of results is not a coincidence. • Note 1: estimate of final result should not depend on the evaluation order • Note 2:intermediate results could be of different sizes

Natural join with multiple join attrib. • R(x,y1,y2) S(y1,y2,z) • T(R S) = T(R)*T(S)/m1*m2, where m1 = max{V(R,y1),V(S,y1)} m2 = max{V(R,y2),V(S,y2)} • Why? • Let r be a tuple in R and s be a tuple in S. What’s the probability that r and s agree on y1? From the previous reasoning, it’s 1/max{V(R,y1),V(S,y1)} • Similarly, what’s the probability that r and s agree on y2? It’s 1/max{V(R,y2),V(S,y2)} • Assuming that aggrements on y1 and y2 are independent we estimate: T(R S) = T(R)*T(S)/[max{V(R,y1),V(S,y1)} * max{V(R,y2),V(S,y2)}] Example: T(R)=1000, V(R,b)=20, V(R,c)=100 T(S)=2000, V(S,d)=50, V(S,e)=50 R(a,b,c) R.b=S.d AND R.c=S.e S(d,e,f) T(R S) = (1000*2000)/(50*100)=400

Another example: (one of the previous) • R(a,b), T(R)=1000, V(R,b)=20 • S(b,c), T(S)=2000, V(S,b)=50, V(S,c)=100 • U(c,d), T(U)=5000, V(U,c)=500 • Estimate the size of R S U • Observe that R S U = (R U) S • T(R U) = • 1000*5000 = 5,000,000 • Note that the number of b’s in the product is 20 (V(R,b)), and the number of c’s is 500 (V(U,c)). • T((R U)S) = • 5,000,000 * 2000 / (50 * 500) = 400,000