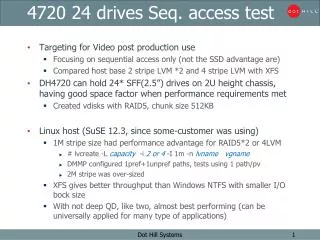

4720 24 drives Seq. access test

4720 24 drives Seq. access test. Targeting video post-production use. Focusing on sequential access only (not the area where SSDs have the advantage). Compared host-based 2-stripe LVM * 2 and 4-stripe LVM with XFS.

Presentation Transcript

4720 24 drives Seq. access test
• Targeting video post-production use
• Focusing on sequential access only (not the area where SSDs have the advantage)
• Compared host-based 2-stripe LVM * 2 and 4-stripe LVM with XFS
• DH4720 can hold 24 SFF (2.5") drives in a 2U chassis, giving good space efficiency when the performance requirements are met
• Created vdisks with RAID5, chunk size 512KB
• Linux host (SuSE 12.3, since some customer was using it)
• 1M stripe size had a performance advantage for RAID5 * 2 or 4 LVM
• # lvcreate -L capacity -i 2 or 4 -I 1m -n lvname vgname
• DMMP configured with 1 preferred + 1 non-preferred path; tests used 1 path per PV
• 2M stripe was over-sized
• XFS gives better throughput than Windows NTFS at smaller I/O block sizes
• Even a shallow QD, such as 2, gives almost the best performance (so this can be applied to many types of applications)
Dot Hill Systems
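The `lvcreate` line on this slide can be expanded into a dry-run sketch of the host-side setup. The script only prints the commands and runs none of them. The device paths, the names `vg_video` and `lv_video`, and the 200G size are hypothetical placeholders; `pvcreate`, `vgcreate`, `lvcreate -i/-I` and `mkfs.xfs` are the standard LVM2 and xfsprogs commands, but the exact sequence the author used is not given in the slides.

```bash
# Dry-run sketch: prints the LVM/XFS commands for a striped LV; nothing is executed.
# All names and device paths are hypothetical placeholders.

# usage: print_striped_lv_cmds VG LV SIZE STRIPE_SIZE PV...
print_striped_lv_cmds() {
    local vg=$1 lv=$2 size=$3 stripe_size=$4
    shift 4
    local stripes=$#    # one stripe per physical volume passed in
    echo "pvcreate $*"
    echo "vgcreate $vg $*"
    # -i = number of stripes, -I = stripe size
    echo "lvcreate -L $size -i $stripes -I $stripe_size -n $lv $vg"
    echo "mkfs.xfs /dev/$vg/$lv"
}

# 4-stripe example over four multipath devices, one per RAID5 vdisk (assumed layout).
print_striped_lv_cmds vg_video lv_video 200G 1m \
    /dev/mapper/mpatha /dev/mapper/mpathb /dev/mapper/mpathc /dev/mapper/mpathd
```

Passing two devices instead of four yields the `-i 2` variant used for the 2-stripe configuration.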

Configuration 1. 2d-LVM*2
• Host: Dell R610, SuSE Linux 12.3, QLE2564 HBA, IOmeter 2010
• Create a striped VG using two PVs from the same controller (2 workers). Create 200GB xfs with several stripe sizes.
• Connection: FC-DAS, 8Gb * 2 or 4, 1 or 2 vd / port
• Paths: 1 preferred path + 1 other path per volume (using the 1 preferred path only)
• Array: 4720, 24 drives, 1 chassis
• RAID sets: two RAID5 (A-side) and two RAID5 (B-side), each a 3TB R5 built from 600GB 10K SAS * 6
• Chassis: FRUKA19 (shorty v2 chassis)
• Drives: KF64 (SFF 600GB 10K SAS), Hitachi HUC1060* x 24
• Controller: 4720 (redundant), FRUKC50, 4 * 8G FC, Linear FX, 4GB, Arrandale 1.8GHz SC, Vertex-6 FPGA (Jerboa)
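As a quick sanity check on the diagram labels, the sketch below recomputes the usable capacity of one RAID5 set from the drive counts above. It assumes each 6-drive set is laid out as 5 data + 1 parity (the 5d+1p figure appears on a later slide) and uses decimal gigabytes; the function name is illustrative.

```bash
# Usable capacity in GB of one RAID5 set: one drive's worth of space holds parity.
# $1 = drives in the set, $2 = capacity of one drive in GB
raid5_usable_gb() {
    echo $(( ($1 - 1) * $2 ))
}

per_set=$(raid5_usable_gb 6 600)   # 600GB 10K SAS * 6 per set
total=$(( per_set * 4 ))           # four RAID5 sets, 24 drives in total
echo "per set: ${per_set} GB, all four sets: ${total} GB"
```

The per-set result of 3000 GB agrees with the "3TB R5" labels in the diagram.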

2d-LVM*2 xfs results
• These show 2 volumes in total
• (6d RAID5 * 2 stripe, 12 drives per LV) * 2, 24 drives total
• The performance of one volume is half of these figures
• 2d-LVM*2 was best in total. Considering one volume only, 4d-LVM wins.
• If the application (AP) can issue I/O with QD=2, 16KB block I/O gives good performance.

Configuration 2. 4d-LVM
• Host: Dell R610, SuSE Linux 12.3, QLE2564 HBA, IOmeter 2010
• Created host-based 4-stripe LVM. Create xfs and 200GB IOBW.tst files for each VG. Stripe size 256K, 512K, and 1M.
• Connection: FC-DAS, 8Gb * 2 or 4, 1 or 2 vd / port
• Array: 4720 and 4824, 24 drives, 1 chassis
• RAID sets: two RAID5 (A-side) and two RAID5 (B-side), each a 3TB R5 built from 600GB 10K SAS * 6
• Chassis: FRUKA19 (shorty v2 chassis)
• Drives: KF64 (SFF 600GB 10K SAS), Hitachi HUC1060* x 24
• Controller: 4720 (redundant), FRUKC50, 4 * 8G FC, Linear FX, 4GB, Arrandale 1.8GHz SC, Vertex-6 FPGA (Jerboa)
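To make the three stripe sizes concrete, the sketch below shows which of the four PVs serves each 256 KB step of a sequential stream. It assumes the usual round-robin layout of a striped LV (chunk number modulo the stripe count); the function name and the 2 MB walk length are illustrative and are not taken from the slides.

```bash
# 0-based index of the PV that serves a given byte offset in a striped LV.
# $1 = byte offset, $2 = stripe size in bytes, $3 = number of stripes
stripe_index() {
    echo $(( ($1 / $2) % $3 ))
}

KB=1024
for size_kb in 256 512 1024; do
    line="stripe ${size_kb}K:"
    off=0
    # Walk the first 2 MB of the LV in 256 KB steps.
    while [ "$off" -lt $(( 2048 * KB )) ]; do
        line="$line $(stripe_index "$off" $(( size_kb * KB )) 4)"
        off=$(( off + 256 * KB ))
    done
    echo "$line"
done
```

With a larger stripe size, consecutive 256 KB steps stay on the same PV for longer before moving to the next one, which is one way to picture the trade-off the stripe-size tests explore.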

4d-LVM xfs performance comparison
• Even with QD=1, >=64KB block I/O can achieve >=1,100MB/s
• This holds for both read and write access
• With QD=2, >=16KB block I/O can achieve >=1,150MB/s
• Similar results with QD=4 or 8

2d-LVM vs. 4d-LVM
• Graph resized so that 1-volume performance can be compared directly
• If the best single-stream performance is needed, use all 24 drives and configure 4d-LVM. Use a 1 MB stripe size when creating the LV for XFS.
• With 12 drives, 2d-LVM is not so poor.
• It has the advantage in overall balance: performance, usable capacity, and more WS can be supported.

2d-LVM vs. 4d-LVM (2)
• 2d-LVM*2 gives the best total throughput
• All configurations use 24 drives: RAID5 (5d+1p) * 4 RAID sets
• Using 8Gb * 4 connections. Logical B/W max ~= 3,200MB/s
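The 3,200MB/s ceiling quoted above follows from simple link arithmetic. The sketch below assumes the commonly quoted nominal figure of about 800 MB/s per direction for one 8Gb FC link; that per-link value and the function name are assumptions, not numbers from the slides.

```bash
# Nominal one-direction throughput of a single 8Gb FC link in MB/s (assumed value).
FC8G_MB_S=800

# Aggregate ceiling for a given number of links. $1 = number of links
fc_aggregate_mb_s() {
    echo $(( $1 * FC8G_MB_S ))
}

echo "2 links: $(fc_aggregate_mb_s 2) MB/s"
echo "4 links: $(fc_aggregate_mb_s 4) MB/s"
```

The two cases correspond to the "8Gb * 2 or 4" connection options in the configurations.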

ref) Linux vs. Windows
Linux (left) vs. Windows (right)
• Linux XFS seems to use more aggressive caching
• NTFS sector size: default used 8KB?